10/23/2015

CSE 473: Artificial Intelligence

Autumn 2015


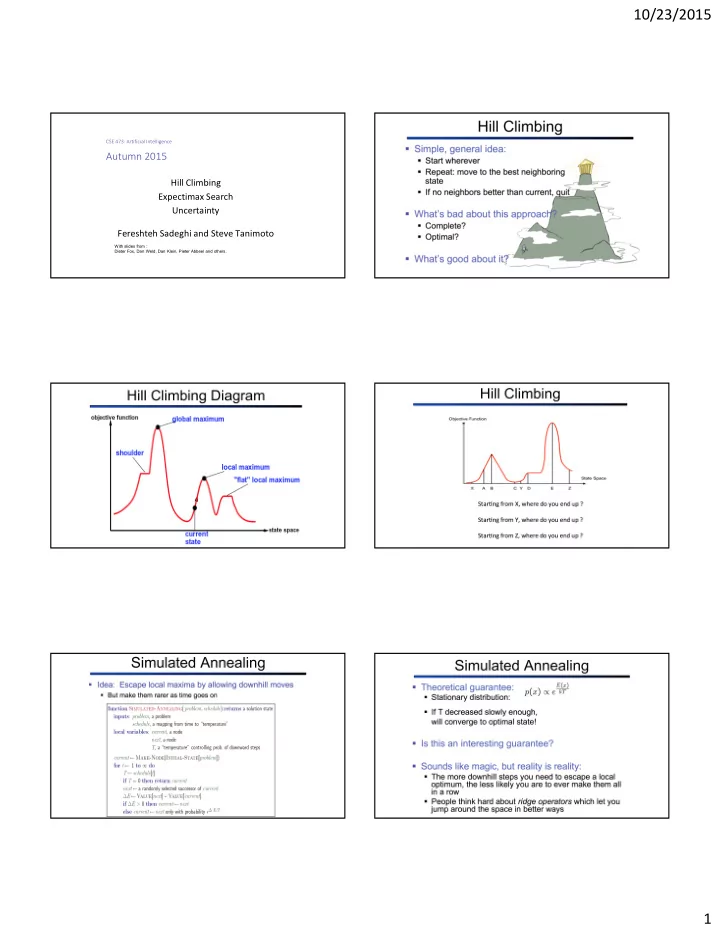

Hill Climbing, Expectimax Search, Uncertainty

Fereshteh Sadeghi and Steve Tanimoto

With slides from: Dieter Fox, Dan Weld, Dan Klein, Pieter Abbeel and others.


[Figure: search tree with a max node over chance nodes; leaf values 10, 10, 9, 100]

The expected value of a random variable is the average, weighted by the probability distribution over outcomes

| Time | Probability |
|---|---|
| 20 min | 0.25 |
| 30 min | 0.50 |
| 60 min | 0.25 |
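The weighted average for the time distribution can be worked out as a short sketch. The computation itself did not survive on the slide, so the variable names here are illustrative; only the three times and their probabilities come from the source.

```python
# Travel times (minutes) and their probabilities, as listed on the slide.
times_min = [20, 30, 60]
probabilities = [0.25, 0.50, 0.25]

# Expected value: each outcome weighted by its probability.
expected_time = sum(p * t for p, t in zip(probabilities, times_min))

print(expected_time)  # 0.25*20 + 0.50*30 + 0.25*60 = 35.0
```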

[Figure: the same leaf values (10, 10, 9, 100) under a max/min tree and under a max/chance tree]
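To make the min-versus-chance contrast concrete, here is a minimal sketch using the leaf values 10, 10, 9, 100. The tree shape (two actions with two outcomes each) and the uniform outcome probabilities are assumptions; the extracted slide gives only the leaf values and the node labels.

```python
# Assumed layout: the root is a max node with two children,
# each child having two leaves.
branches = [[10, 10], [9, 100]]

# Minimax: each child is a min node (adversarial opponent).
minimax_value = max(min(leaves) for leaves in branches)

# Expectimax: each child is a chance node; uniform probabilities assumed.
expectimax_value = max(sum(leaves) / len(leaves) for leaves in branches)

print(minimax_value)     # 10: the left branch is the safe choice
print(expectimax_value)  # 54.5: the right branch has the better average
```

Under these assumptions the two models prefer different actions: the adversarial model avoids the branch containing 9, while the averaging model is drawn to the branch containing 100.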

probabilistic model of how the opponent (or environment) will behave in any state

(roll a die)

great deal of computation

are likely!

magically comes along with probabilities that specify the distribution over its

respond erratically

wheels might slip

[Figure: max/chance trees with leaf values 10, 4, 5, 7 and 10, 10, 9, 100; actions labeled A1 and A2]

expected utilities

children

[Figure: max/chance trees with leaf values 10, 4, 5, 7 and 10, 10, 9, 100]

[Demo: min vs exp (L7D1,2)]

    def value(state):
        if the state is a terminal state: return the state’s utility
        if the next agent is MAX: return max-value(state)
        if the next agent is EXP: return exp-value(state)

    def exp-value(state):
        initialize v = 0
        for each successor of state:
            p = probability(successor)
            v += p * value(successor)
        return v

    def max-value(state):
        initialize v = -∞
        for each successor of state:
            v = max(v, value(successor))
        return v

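    def exp-value(state):
        initialize v = 0
        for each successor of state:
            p = probability(successor)
            v += p * value(successor)
        return v

Example: a chance node whose children have values 8, 24, and -12, reached with probabilities 1/2, 1/3, and 1/6:

$v = \tfrac{1}{2}(8) + \tfrac{1}{3}(24) + \tfrac{1}{6}(-12) = 10$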
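The expectimax pseudocode can be turned into a small runnable sketch. The tree encoding below (tagged tuples, with bare numbers as terminal utilities) is an assumption made for illustration and is not the course's own code; `Fraction` is used so the probabilities 1/2, 1/3, 1/6 stay exact.

```python
from fractions import Fraction

def value(node):
    """Expectimax value of a node.

    Hypothetical encoding: a bare number is a terminal utility,
    ("max", [children]) is a max node, and
    ("exp", [(probability, child), ...]) is a chance node.
    """
    if not isinstance(node, tuple):      # terminal state: return its utility
        return node
    kind, children = node
    if kind == "max":
        return max(value(child) for child in children)
    if kind == "exp":
        # Probability-weighted average of the children's values.
        return sum(p * value(child) for p, child in children)
    raise ValueError(f"unknown node type: {kind!r}")

# The chance node from the worked example: values 8, 24, -12.
chance = ("exp", [(Fraction(1, 2), 8), (Fraction(1, 3), 24), (Fraction(1, 6), -12)])

# A max node choosing between that chance node and a terminal worth 7
# (the second option is illustrative only).
root = ("max", [chance, 7])

print(value(chance))  # 10
print(value(root))    # 10
```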

maximizes its expected utility, given its knowledge

sense?

described by utilities?

to real numbers that describe an agent’s preferences

1)

goals

preferences can be summarized as a utility function

behaviors emerge

utilities?

behaviors?

[Figure: Getting ice cream. Choices: Get Single, Get Double. Outcomes: Oops, Whew!]

among:

uncertain prizes

p 1-p

such as:

be induced to give away all of its money

$(A \succ B) \wedge (B \succ C) \Rightarrow (A \succ C)$
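The claim that an agent with intransitive preferences can be induced to give away all of its money can be sketched as a simple "money pump" simulation. The one-cent trade fee, the starting balance, and all names below are illustrative assumptions.

```python
def money_pump(preferred_over, held_item, cents, fee=1):
    """Repeatedly sell the agent an item it strictly prefers to the one it holds.

    preferred_over maps a held item to the item the agent prefers to it.
    The agent pays `fee` cents per swap until it cannot afford another one.
    Returns the final item, the remaining cents, and the number of trades.
    """
    trades = 0
    while cents >= fee:
        held_item = preferred_over[held_item]  # agent happily trades up
        cents -= fee
        trades += 1
    return held_item, cents, trades

# Cyclic (intransitive) preferences: A > B, B > C, and C > A.
PREFERRED_OVER = {"B": "A", "C": "B", "A": "C"}

item, cents, trades = money_pump(PREFERRED_OVER, "B", cents=100)
print(item, cents, trades)  # A 0 100
```

Because the preferences form a cycle, there is always another trade the agent considers an improvement, so the loop only stops when the money is gone.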

Theorem: Rational preferences imply behavior describable as maximization of expected utility

valued function U such that:
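The conditions that follow "such that" appear to have been lost in extraction. As a hedged reference, the standard textbook statement of the expected-utility theorem, assumed here to match what the slide showed, is:

$$
\begin{aligned}
U(A) \ge U(B) \;&\Leftrightarrow\; A \succeq B \\
U([p_1, S_1; \ldots; p_n, S_n]) \;&=\; \sum_i p_i \, U(S_i)
\end{aligned}
$$

The first line says that U ranks prizes the way the agent does; the second says that the utility of a lottery is the probability-weighted sum of the utilities of its outcomes.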

lotteries!

ever representing or manipulating utilities and probabilities


How much would you pay to avoid a risk? What value would people place on their own lives? Perhaps tens of thousands of dollars?

Actual human behavior reflects a much lower monetary value for a micromort.

Driving 230 miles incurs a risk of one micromort.

Over the life of your car (~92k miles), that is 400 micromorts.

People are willing to pay $10k for a car that halves the risk of death.
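The implied price of a micromort follows from the slide's own figures. The sketch below only redoes that arithmetic; the constant names are illustrative.

```python
MILES_PER_MICROMORT = 230    # driving distance that incurs one micromort
CAR_LIFETIME_MILES = 92_000  # approximate lifetime mileage of a car
SAFER_CAR_PREMIUM = 10_000   # dollars paid for a car that halves the risk
RISK_REDUCTION = 0.5         # "halves the risk of death"

lifetime_micromorts = CAR_LIFETIME_MILES / MILES_PER_MICROMORT
micromorts_avoided = lifetime_micromorts * RISK_REDUCTION
dollars_per_micromort = SAFER_CAR_PREMIUM / micromorts_avoided

print(lifetime_micromorts)    # 400.0
print(micromorts_avoided)     # 200.0
print(dollars_per_micromort)  # 50.0
```

At roughly $50 per micromort, the revealed value is far below the "tens of thousands of dollars" that people say they would pay.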

useful for paying to reduce product risks, etc.

medical decisions involving substantial risk

transformation

choices), only ordinal utility can be determined, i.e., total order on prizes

0.999999 0.000001

utilities:

talk about the utility of having money (or being in debt)

$0]

($500)

lottery

premium

people will pay to reduce their risk

needed!

and the insurance company would rather have the lottery (their utility curve is flat and they have many lotteries)
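The relationship between a lottery's expected monetary value, its certainty equivalent, and the insurance premium can be sketched numerically. Two things here are assumptions rather than content from the slide: the lottery is taken to be a 50/50 chance of $1000 or $0 (consistent with the "($500)" expected value that survives in the text), and the risk-averse agent is given a hypothetical square-root utility for money.

```python
import math

def expected_monetary_value(lottery):
    """Probability-weighted average of the dollar prizes."""
    return sum(p * dollars for p, dollars in lottery)

def expected_utility(lottery, utility):
    """Probability-weighted average of the utilities of the prizes."""
    return sum(p * utility(dollars) for p, dollars in lottery)

# Assumed lottery: [0.5, $1000; 0.5, $0].
lottery = [(0.5, 1000.0), (0.5, 0.0)]

# Hypothetical concave (risk-averse) utility and its inverse.
utility = math.sqrt
inverse_utility = lambda u: u ** 2

emv = expected_monetary_value(lottery)
# Certainty equivalent: the sure amount with the same utility as the lottery.
certainty_equivalent = inverse_utility(expected_utility(lottery, utility))
# Insurance premium: what the agent gives up to avoid the gamble.
insurance_premium = emv - certainty_equivalent

print(emv)                              # 500.0
print(round(certainty_equivalent, 2))   # 250.0
print(round(insurance_premium, 2))      # 250.0
```

An agent with a linear utility curve, which is how the slide characterizes the insurance company, would have a certainty equivalent equal to the expected monetary value, which is why both sides can come out ahead.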
