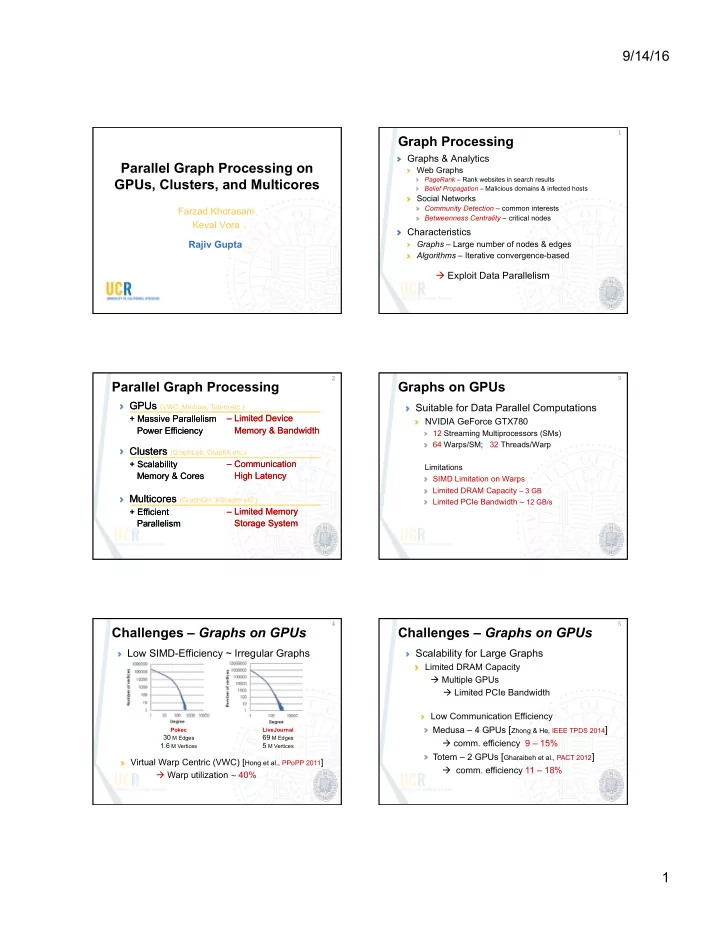

Parallel Graph Processing on GPUs, Clusters, and Multicores

9/14/16

Farzad Khorasani, Keval Vora, Rajiv Gupta

Graph Processing

Graphs & Analytics

Web Graphs

PageRank – Rank websites in search results

Belief Propagation – Malicious domains & infected hosts

Social Networks

Community Detection – common interests

Betweenness Centrality – critical nodes

Characteristics

Graphs – Large number of nodes & edges

Algorithms – Iterative convergence-based

→ Exploit Data Parallelism
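To make "iterative convergence-based" concrete, here is a minimal PageRank sketch. It is an illustration only, not taken from the slides: the function name `pagerank`, the damping factor 0.85, the tolerance, and the adjacency-list input are all assumptions. Each vertex's new rank depends only on the previous iteration's ranks, which is the data parallelism a GPU, cluster, or multicore can exploit.

```python
def pagerank(out_edges, damping=0.85, tol=1e-6, max_iters=100):
    """Iterate PageRank until the largest per-vertex change drops below tol.

    out_edges[v] lists the vertices that v links to (hypothetical input format).
    Returns the final ranks and the number of iterations performed.
    """
    n = len(out_edges)
    # Build the reverse adjacency so each vertex can pull from its in-neighbors.
    in_edges = [[] for _ in range(n)]
    for src, targets in enumerate(out_edges):
        for dst in targets:
            in_edges[dst].append(src)

    ranks = [1.0 / n] * n
    iteration = 0
    for iteration in range(1, max_iters + 1):
        # Rank held by vertices without out-edges is spread evenly over all vertices.
        dangling = sum(ranks[v] for v in range(n) if not out_edges[v])
        base = (1.0 - damping) / n + damping * dangling / n
        # Every entry reads only the old ranks, so the entries are independent
        # and could each be computed by a separate thread.
        new_ranks = [
            base + damping * sum(ranks[u] / len(out_edges[u]) for u in in_edges[v])
            for v in range(n)
        ]
        delta = max(abs(a - b) for a, b in zip(new_ranks, ranks))
        ranks = new_ranks
        if delta < tol:  # convergence test
            break
    return ranks, iteration


if __name__ == "__main__":
    # Tiny example graph: vertex 2 receives links from vertices 0, 1, and 3.
    graph = [[1, 2], [2], [0], [2]]
    final_ranks, iters = pagerank(graph)
    print(final_ranks, iters)
```

The loop stops when no rank moves by more than `tol`, so the iteration count depends on the graph rather than being fixed in advance.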


Parallel Graph Processing


GPUs (VWC, Medusa, Totem etc.)

+ Massive Parallelism, Power Efficiency

– Limited Device Memory & Bandwidth

Clusters (GraphLab, GraphX etc.)

+ Scalability (Memory & Cores)

– High Communication Latency

Multicores (GraphChi, XStream etc.)

+ Efficient Parallelism

– Limited Memory / Storage System

Graphs on GPUs

Suitable for Data Parallel Computations

NVIDIA GeForce GTX 780

12 Streaming Multiprocessors (SMs)

64 Warps/SM; 32 Threads/Warp

Limitations

SIMD Limitation on Warps

Limited DRAM Capacity – 3 GB

Limited PCIe Bandwidth – 12 GB/s
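The figures above imply two back-of-the-envelope numbers, worked out in the short sketch below. The constants come from the slide. The function name `gpu_budget` is hypothetical, and the calculation assumes all warps are resident and the PCIe link runs at its stated rate.

```python
SMS = 12                 # streaming multiprocessors (from the slide)
WARPS_PER_SM = 64        # resident warps per SM (from the slide)
THREADS_PER_WARP = 32    # threads per warp (from the slide)
DRAM_GB = 3              # device memory capacity in GB (from the slide)
PCIE_GB_PER_S = 12       # host-to-device bandwidth in GB/s (from the slide)


def gpu_budget():
    """Return (maximum resident threads, seconds to fill device memory over PCIe)."""
    resident_threads = SMS * WARPS_PER_SM * THREADS_PER_WARP
    fill_time_s = DRAM_GB / PCIE_GB_PER_S
    return resident_threads, fill_time_s


if __name__ == "__main__":
    threads, seconds = gpu_budget()
    print(threads)   # 24576
    print(seconds)   # 0.25
```

So the device can keep up to 24,576 threads in flight, while merely filling its 3 GB of memory once over PCIe takes about a quarter of a second. This is why graphs that exceed device memory become transfer-bound.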


Challenges – Graphs on GPUs

Low SIMD-Efficiency ~ Irregular Graphs

Pokec – 30 M Edges, 1.6 M Vertices

LiveJournal – 69 M Edges, 5 M Vertices

Virtual Warp Centric (VWC) [Hong et al., PPoPP 2011] → Warp utilization ~40%
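A simplified model can illustrate why irregular degree distributions lower SIMD efficiency. It assumes a plain one-vertex-per-thread mapping in which every lane of a warp stays occupied until the warp's highest-degree vertex finishes. This is not VWC's scheme, nor how the utilization figure on the slide was measured, and the function name `warp_utilization` is hypothetical.

```python
WARP_SIZE = 32  # threads per warp


def warp_utilization(degrees, warp_size=WARP_SIZE):
    """Fraction of issued lane-steps that do useful edge work.

    degrees[i] is the number of edges processed by thread i. Threads are
    grouped into consecutive warps; each warp runs for as many steps as its
    largest degree, and lanes that finish early (or are unassigned) sit idle.
    """
    useful = 0
    issued = 0
    for start in range(0, len(degrees), warp_size):
        warp = degrees[start:start + warp_size]
        steps = max(warp)              # the warp waits for its slowest lane
        useful += sum(warp)            # lane-steps that process an edge
        issued += steps * warp_size    # lane-steps the hardware spends
    return useful / issued if issued else 1.0


if __name__ == "__main__":
    regular = [4] * 64                 # every vertex has the same degree
    skewed = [31] + [1] * 31           # one hub vertex among low-degree ones
    print(warp_utilization(regular))   # 1.0
    print(warp_utilization(skewed))    # 0.0625
```

In the skewed case a single high-degree vertex keeps 31 other lanes idle for most of the warp's lifetime, which is the effect real-world graphs such as Pokec and LiveJournal trigger.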


Challenges – Graphs on GPUs

Scalability for Large Graphs

Limited DRAM Capacity → Multiple GPUs → Limited PCIe Bandwidth

Low Communication Efficiency

Medusa – 4 GPUs [Zhong & He, IEEE TPDS 2014] → comm. efficiency 9 – 15%

Totem – 2 GPUs [Gharaibeh et al., PACT 2012] → comm. efficiency 11 – 18%
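The slide does not define communication efficiency. One plausible reading, assumed here, is the fraction of vertex values transferred between GPUs in an iteration that actually changed since the previous iteration. The sketch below computes that ratio; the function name `communication_efficiency` and the list-based inputs are hypothetical.

```python
def communication_efficiency(old_values, new_values):
    """Fraction of transferred values that differ from the previous iteration.

    Assumed definition: every boundary vertex value is copied each iteration,
    but only values that changed carry useful information.
    """
    if len(old_values) != len(new_values):
        raise ValueError("both snapshots must cover the same vertices")
    if not new_values:
        return 1.0
    changed = sum(1 for a, b in zip(old_values, new_values) if a != b)
    return changed / len(new_values)


if __name__ == "__main__":
    before = [0] * 10
    after = [0, 0, 7, 0, 0, 0, 0, 0, 0, 0]   # only one boundary vertex updated
    print(communication_efficiency(before, after))   # 0.1
```

Under this reading, an efficiency of 10% means nine out of ten transferred values were redundant copies of data the receiving GPU already had.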
