A bit of background

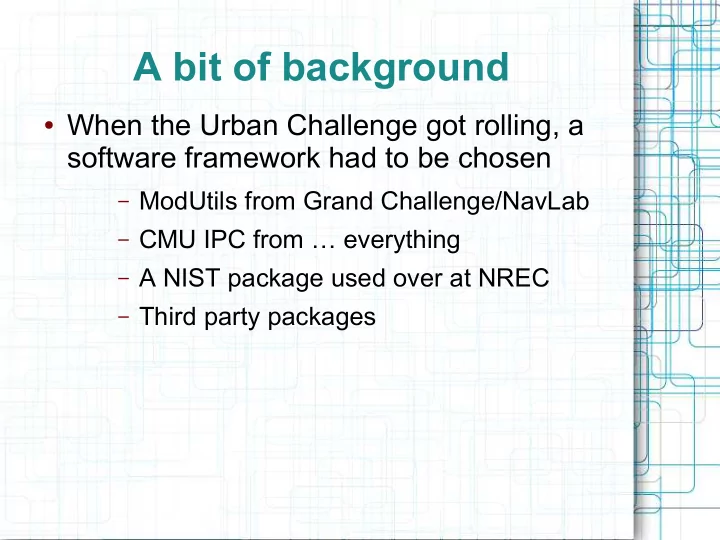

- When the Urban Challenge got rolling, a software framework had to be chosen

– ModUtils from Grand Challenge/NavLab

– CMU IPC from … everything

– A NIST package used over at NREC

– Third party packages


– Grand Challenge/NavLab provide

– It was written in the wild west

– Internal implementations of marshalling,

– Tracking down bugs is intimidating

– Developed reputation for having a steep

– Ad-hoc, simple infrastructure for relatively

– Support packages like MicroRaptor (a

– Lots of arcane text files to configure minor

– No evidence of high performance uses

– Design choices that are obviously not

– Limited capabilities

– First have to understand system, then

– Not shopping for a new model, just an

– Config files: Ruby

– Marshalling: boost::serialization

– Comms: CMU IPC

– Log file format: Berkeley DB

– UI: Qt integrated with interface

– task library to glue it all together

– Performs common functions all tasks

– Ruby can either contain static values, or

– C++ virtual classes, implementations

– Not every interface uses the channel, as

– It internally marshalls or unmarshalls T to

– Readily available

– Simple to use

– Multiple trips across network

– Serializes everything through one bottleneck

[Diagram: Central Mode. Machine One, Machine Two, and Machine Three each run tasks (T1 to T6); a single Central node is shown.]

– It *cough* works *cough*

– Still necessitates multiple network trips

[Diagram: Direct Mode. Machine One, Machine Two, and Machine Three each run tasks (T1 to T6); the Central node is still shown.]

[Diagram: Dead Lock. Labels: Task, Central.]

– Frees tasks from network comms/multiple

– One delivery per host

[Diagram: SimpleComms. Machine One runs T1 and T2, Machine Two runs T3 and T4, Machine Three runs T5 and T6; each machine has its own SCS.]
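The "one delivery per host" point can be made concrete with a small count. The sketch below is not taken from SimpleComms itself: the `Subscriber` struct, the host names, and both function names are hypothetical, and the per-subscriber baseline is only an assumption about what a scheme without a per-host daemon would do.

```cpp
#include <cstddef>
#include <set>
#include <string>
#include <vector>

// Hypothetical record: which task subscribes, and on which host it runs.
struct Subscriber {
    std::string task;
    std::string host;
};

// Baseline (assumed): one network send for every subscriber on another host.
std::size_t perSubscriberSends(const std::string& publisherHost,
                               const std::vector<Subscriber>& subscribers) {
    std::size_t sends = 0;
    for (const auto& s : subscribers) {
        if (s.host != publisherHost) ++sends;
    }
    return sends;
}

// "One delivery per host": one network send for every distinct remote host
// that has at least one subscriber; local fan-out happens on that host.
std::size_t perHostSends(const std::string& publisherHost,
                         const std::vector<Subscriber>& subscribers) {
    std::set<std::string> remoteHosts;
    for (const auto& s : subscribers) {
        if (s.host != publisherHost) remoteHosts.insert(s.host);
    }
    return remoteHosts.size();
}
```

With a publisher on one machine and two subscribers on each of two other machines, the baseline needs four network sends while per-host delivery needs two.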

– Trade off between throughput and

– Compatible with UDP

– Originally selected for the simplicity of having

– Each task and SCS binds to a socket in Linux's

– Down side with datagrams is discrepancy in

– Blocking I/O is used to avoid spinning

– The workaround was sufficient, otherwise SCS
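As a rough illustration of datagrams over Unix sockets with blocking I/O, the sketch below sends a few messages through a connected pair of `AF_UNIX` datagram sockets and reads them back with blocking `recv`. It is not the SCS code: `roundTripDatagrams` and the 256-byte buffer are assumptions made for the example, and it uses `socketpair` rather than binding named sockets as the slides describe.

```cpp
#include <sys/socket.h>
#include <unistd.h>

#include <cstddef>
#include <string>
#include <vector>

// Sends each message as its own datagram, then reads them back.
// Each recv returns exactly one datagram, so message boundaries survive.
std::vector<std::string> roundTripDatagrams(const std::vector<std::string>& messages) {
    std::vector<std::string> received;
    int fds[2];
    if (socketpair(AF_UNIX, SOCK_DGRAM, 0, fds) != 0) return received;

    std::size_t sent = 0;
    for (const auto& m : messages) {
        if (send(fds[0], m.data(), m.size(), 0) != static_cast<ssize_t>(m.size())) break;
        ++sent;
    }

    char buffer[256];  // assumed maximum; longer datagrams would be truncated
    for (std::size_t i = 0; i < sent; ++i) {
        // Blocking call: the thread sleeps here instead of spinning.
        ssize_t n = recv(fds[1], buffer, sizeof buffer, 0);
        if (n < 0) break;
        received.emplace_back(buffer, static_cast<std::size_t>(n));
    }

    close(fds[0]);
    close(fds[1]);
    return received;
}
```

The example only suits a handful of small messages, since everything is sent before anything is read and must fit in the socket buffer.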

– “zero conf” as the SCS daemons discover

– Default port, and ethernet interface used

– eth0 is masked /16, the facility network

– eth0:1 is masked /24, limited to robot
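For readers less familiar with prefix notation, the arithmetic behind /16 and /24 can be sketched as follows. The helper names and the example addresses in the usage note are hypothetical and are not the actual facility or robot addresses.

```cpp
#include <cstdint>

// Netmask for a prefix length between 0 and 32 (other values are not handled).
constexpr std::uint32_t maskFromPrefix(unsigned prefixLength) {
    return prefixLength == 0 ? 0u : 0xFFFFFFFFu << (32 - prefixLength);
}

// Packs four octets into one 32-bit IPv4 address.
constexpr std::uint32_t ipv4(unsigned a, unsigned b, unsigned c, unsigned d) {
    return (a << 24) | (b << 16) | (c << 8) | d;
}

// Two addresses share a subnet when their masked network parts are equal.
constexpr bool sameSubnet(std::uint32_t lhs, std::uint32_t rhs, unsigned prefixLength) {
    return (lhs & maskFromPrefix(prefixLength)) == (rhs & maskFromPrefix(prefixLength));
}
```

A /16 mask is 255.255.0.0 and a /24 mask is 255.255.255.0, so two made-up addresses such as 10.1.2.3 and 10.1.9.9 share a /16 network but fall into different /24 networks.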

– Concerns about TCP delays from dropped

– Losing messages to UDP, or developing

– A pair of TCP connections between each

– A reading thread polls for input and distributes messages to subscribed queues

– A writing thread empties the queues when sockets flag available

[Diagram: Dedicated threads servicing buffers. Labels: Task, SCS, Read, Write, Unix Client.]

– Services the incoming messages even when the task is busy

– Messages are sent immediately
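The reading-thread idea can be sketched with standard C++ threading primitives. This is a minimal illustration under stated assumptions, not the SCS implementation: `MessageQueue`, `distribute`, `demoReaderThread`, and the channel names are all hypothetical, and the incoming messages are a fixed list rather than data polled from a socket.

```cpp
#include <condition_variable>
#include <map>
#include <mutex>
#include <queue>
#include <string>
#include <thread>
#include <utility>
#include <vector>

// Thread-safe queue: the reader thread pushes, the task pops when it is ready.
class MessageQueue {
public:
    void push(std::string message) {
        {
            std::lock_guard<std::mutex> lock(mutex_);
            items_.push(std::move(message));
        }
        ready_.notify_one();
    }

    std::string pop() {
        std::unique_lock<std::mutex> lock(mutex_);
        ready_.wait(lock, [this] { return !items_.empty(); });
        std::string message = std::move(items_.front());
        items_.pop();
        return message;
    }

private:
    std::mutex mutex_;
    std::condition_variable ready_;
    std::queue<std::string> items_;
};

using Incoming = std::vector<std::pair<std::string, std::string>>;  // (channel, payload)
using Subscriptions = std::map<std::string, std::vector<MessageQueue*>>;

// Reader-thread body: hand each message to every queue subscribed to its channel.
void distribute(const Incoming& incoming, const Subscriptions& subscriptions) {
    for (const auto& entry : incoming) {
        auto it = subscriptions.find(entry.first);
        if (it == subscriptions.end()) continue;  // nobody subscribed
        for (MessageQueue* queue : it->second) queue->push(entry.second);
    }
}

// The task subscribes to "pose" only and drains its queue at its own pace.
std::vector<std::string> demoReaderThread() {
    MessageQueue poseQueue;
    Subscriptions subscriptions;
    subscriptions["pose"].push_back(&poseQueue);
    Incoming incoming = {{"pose", "p1"}, {"lidar", "l1"}, {"pose", "p2"}};

    std::thread reader([&] { distribute(incoming, subscriptions); });
    std::vector<std::string> seen;
    seen.push_back(poseQueue.pop());
    seen.push_back(poseQueue.pop());
    reader.join();
    return seen;
}
```

Because the reader owns the input side, messages keep landing in the queue even while the task is busy elsewhere; the task only pays for a `pop` when it wants the next message.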


– Use vectored I/O to deliver directly to

[Diagram: Vectored I/O. Labels: Peek Header, recvmsg, Fragment, One Copy, sendmsg, recv, Header, Fragment, Copy #1, Copy #2.]
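The peek-then-`recvmsg` pattern named in the diagram can be sketched with POSIX calls. This is an illustration under stated assumptions rather than the SimpleComms code: the `Header` layout, the function names, and the use of a local `socketpair` are all hypothetical. The sender gathers header and payload with `sendmsg`; the receiver peeks at the header to learn the size, sizes the destination buffer, and lets `recvmsg` scatter the payload straight into it.

```cpp
#include <sys/socket.h>
#include <sys/uio.h>
#include <unistd.h>

#include <cstdint>
#include <string>
#include <vector>

// Hypothetical wire header; the real format is not given in the slides.
struct Header {
    std::uint32_t messageId;
    std::uint32_t fragmentSize;
};

// Gather header and payload into one datagram without building a joined buffer.
bool sendFragment(int fd, const Header& header, const void* payload) {
    iovec parts[2];
    parts[0].iov_base = const_cast<Header*>(&header);
    parts[0].iov_len = sizeof header;
    parts[1].iov_base = const_cast<void*>(payload);
    parts[1].iov_len = header.fragmentSize;
    msghdr message{};
    message.msg_iov = parts;
    message.msg_iovlen = 2;
    return sendmsg(fd, &message, 0) ==
           static_cast<ssize_t>(sizeof header + header.fragmentSize);
}

// Peek at the header, then scatter the payload directly into its destination.
bool receiveFragment(int fd, Header& header, std::vector<char>& destination) {
    // MSG_PEEK leaves the datagram queued, so the read below still sees it.
    if (recv(fd, &header, sizeof header, MSG_PEEK) != static_cast<ssize_t>(sizeof header)) {
        return false;
    }
    destination.resize(header.fragmentSize);
    iovec parts[2];
    parts[0].iov_base = &header;
    parts[0].iov_len = sizeof header;
    parts[1].iov_base = destination.data();
    parts[1].iov_len = destination.size();
    msghdr message{};
    message.msg_iov = parts;
    message.msg_iovlen = 2;
    return recvmsg(fd, &message, 0) ==
           static_cast<ssize_t>(sizeof header + destination.size());
}

// Sends one payload across a local datagram socket pair and returns what arrived.
std::string vectoredRoundTrip(const std::string& payload) {
    int fds[2];
    if (socketpair(AF_UNIX, SOCK_DGRAM, 0, fds) != 0) return std::string();
    Header out{7, static_cast<std::uint32_t>(payload.size())};
    Header in{};
    std::vector<char> buffer;
    bool ok = sendFragment(fds[0], out, payload.data()) &&
              receiveFragment(fds[1], in, buffer);
    close(fds[0]);
    close(fds[1]);
    return ok ? std::string(buffer.begin(), buffer.end()) : std::string();
}
```

The alternative of reading header and payload into a scratch buffer with plain `recv` and then copying the payload to its final location costs an extra user-space copy, which appears to be the contrast the diagram draws.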