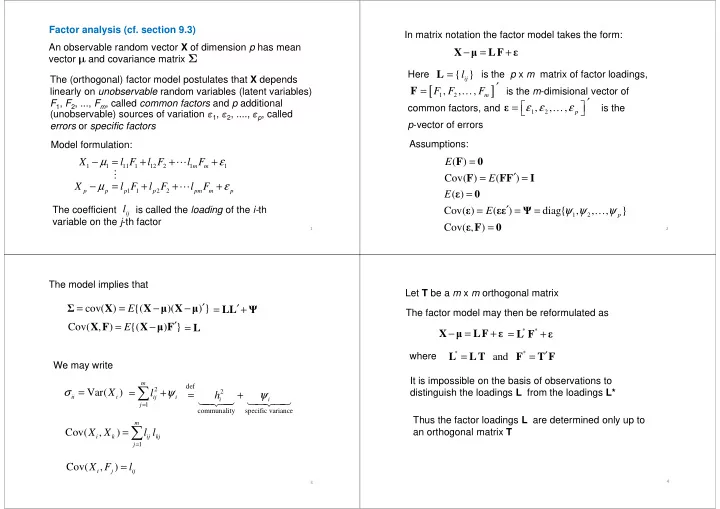

An observable random vector X of dimension p has mean vector and covariance matrix Σ The (orthogonal) factor model postulates that X depends linearly on unobservable random variables (latent variables) F1, F2, ..., Fm, called common factors and p additional (unobservable) sources of variation ε1, ε2, ...., εp, called errors or specific factors Factor analysis (cf. section 9.3)

1

Model formulation:

1 1 11 1 12 2 1 1 m m

X l F l F l F µ ε − = + + + ⋯

errors or specific factors

1 1 2 2 p p p p pm m p

X l F l F l F µ ε − = + + + ⋯

The coefficient is called the loading of the i-th variable on the j-th factor

ij

l ⋮ − = + X

- LF

ε

In matrix notation the factor model takes the form: Here is the p x m matrix of factor loadings, is the m-dimisional vector of common factors, and is the p-vector of errors

{ }

ij

l = L

[ ]

1 2

, , ,

m

F F F ′ = F …

1 2

, , ,

p

ε ε ε ′ = ε …

2

( ) Cov( ) ( ) E E = ′ = = F F FF I

p-vector of errors Assumptions:

1 2

( ) Cov( ) ( ) diag{ , , , }

p

E E ψ ψ ψ = ′ = = = ε ε εε Ψ … Cov( , ) = ε F

The model implies that

cov( ) {( )( ) } E ′ = = − − Σ X X X

- ′

= + LL Ψ

We may write

m

Cov( , ) {( ) } E ′ = − X F X F = L

3

Var( )

ii i

X σ =

2 1 m ij i j

l ψ

=

= +

∑

2

def communality specific variance

i i

h ψ = +

- 1

Cov( , )

m i k ij kj j

X X l l

=

=∑ Cov( , )

i j ij

X F l =

Let T be a m x m orthogonal matrix

− = + X

- LF

ε

The factor model may then be reformulated as

* *

= + L F ε

where

* *

and ′ = = L LT F T F

It is impossible on the basis of observations to

4