
Recognizing object categories

Kristen Grauman UT-Austin Wed Sept 13, 2017

Announcements

- Reminders:

- Assignment 1 due Sept 22 11:59 pm on Canvas

- No laptops, phones, tablets, etc. in class

- Thoughts on review sharing?

- Questions about presentations, experiments, discussion proponent/opponent?

Last time: Recognizing instances

- 1. Basics in feature extraction: filtering

- 2. Invariant local features

- 3. Recognizing object instances

Instance recognition: remaining issues

- How to summarize the content of an entire image? And gauge overall similarity?

- How large should the vocabulary be? How to perform quantization efficiently?

- Is having the same set of visual words enough to identify the object/scene? How to verify spatial agreement?
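To make the first two questions concrete, here is a minimal sketch of one common answer: quantize each local descriptor to its nearest visual word, summarize the image as a normalized word histogram, and compare histograms with a dot product. The toy 2-D descriptors, the three-word vocabulary, and the function names (`quantize`, `bow_histogram`, `bow_similarity`) are assumptions made for illustration, not part of the lecture; brute-force nearest-word search is used here only because the vocabulary is tiny.

```python
import numpy as np

def quantize(descriptors, vocabulary):
    """Assign each descriptor to the index of its nearest visual word (brute force)."""
    # Squared Euclidean distance between every descriptor and every word.
    d2 = ((descriptors[:, None, :] - vocabulary[None, :, :]) ** 2).sum(axis=2)
    return d2.argmin(axis=1)

def bow_histogram(word_ids, vocab_size):
    """Count word occurrences and L2-normalize the resulting histogram."""
    hist = np.bincount(word_ids, minlength=vocab_size).astype(float)
    norm = np.linalg.norm(hist)
    return hist / norm if norm > 0 else hist

def bow_similarity(h1, h2):
    """Cosine similarity of two L2-normalized histograms."""
    return float(np.dot(h1, h2))

# Hypothetical toy data: 3 visual words and 4 descriptors per image.
vocabulary = np.array([[0.0, 0.0], [10.0, 0.0], [0.0, 10.0]])
image_a = np.array([[0.5, 0.2], [9.8, 0.1], [0.1, 9.7], [10.2, -0.3]])
image_b = np.array([[9.9, 0.4], [-0.2, 0.1], [10.1, 0.2], [0.3, 10.4]])

words_a = quantize(image_a, vocabulary)
words_b = quantize(image_b, vocabulary)
hist_a = bow_histogram(words_a, len(vocabulary))
hist_b = bow_histogram(words_b, len(vocabulary))
similarity = bow_similarity(hist_a, hist_b)
print(words_a, words_b, similarity)
```

Both toy images contain the same words with the same counts, so their histograms match even though the words occur in a different order. The histogram discards position entirely, which is why the third question about spatial agreement arises.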


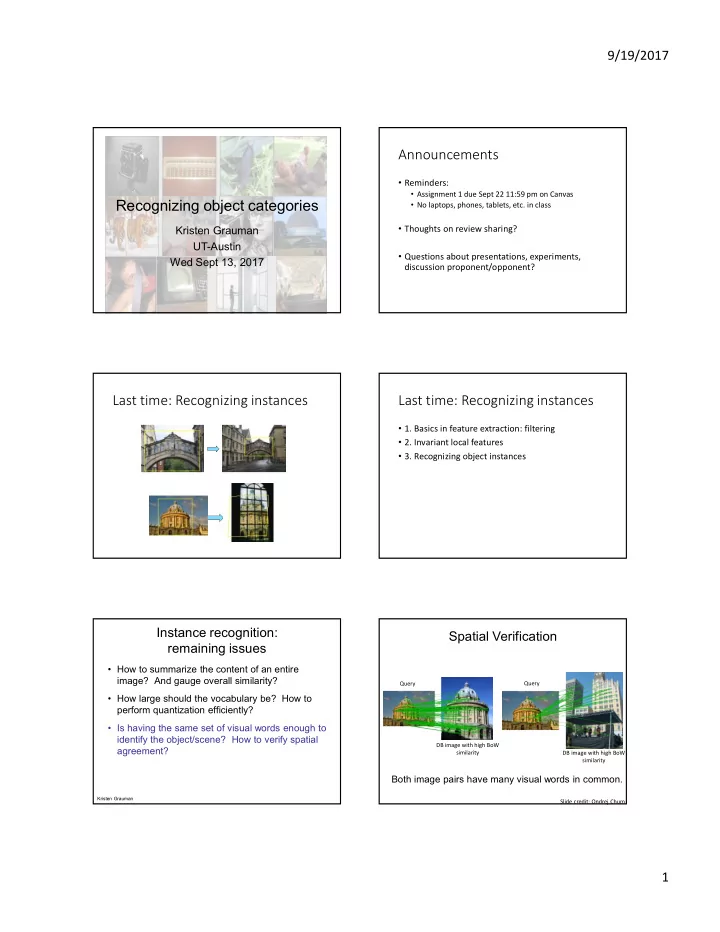

Spatial Verification

Both image pairs have many visual words in common.

[Figure: two query images, each shown next to a DB image with high BoW similarity]

Slide credit: Ondrej Chum
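A rough sketch of why shared visual words alone are not enough: given matched features (same visual word in query and DB image), check how many matches agree on a single geometric transformation. The version below assumes a translation-only model and tries every match as a hypothesis; this is a simplification chosen for brevity, and practical systems typically fit richer models (for example affine transformations) with RANSAC. The function name `count_spatial_inliers`, the tolerance, and the coordinates are all hypothetical.

```python
import numpy as np

def count_spatial_inliers(query_xy, db_xy, tol=5.0):
    """Largest number of matches that agree on one translation (within tol pixels)."""
    displacements = db_xy - query_xy  # one candidate translation per match
    best = 0
    for t in displacements:
        # Matches whose displacement is close to this hypothesis are inliers.
        residuals = np.linalg.norm(displacements - t, axis=1)
        best = max(best, int((residuals <= tol).sum()))
    return best

# Hypothetical positions of four matched visual words in the query image.
query_xy = np.array([[10.0, 10.0], [50.0, 20.0], [30.0, 60.0], [70.0, 80.0]])

# DB image 1: same layout, shifted by (100, 40) plus small localization noise.
noise = np.array([[1.0, -1.0], [0.0, 2.0], [-2.0, 0.0], [1.0, 1.0]])
consistent_db = query_xy + np.array([100.0, 40.0]) + noise

# DB image 2: the same words appear, but at unrelated positions.
scrambled_db = np.array([[300.0, 5.0], [12.0, 240.0], [180.0, 90.0], [40.0, 400.0]])

consistent_inliers = count_spatial_inliers(query_xy, consistent_db)
scrambled_inliers = count_spatial_inliers(query_xy, scrambled_db)
print(consistent_inliers, scrambled_inliers)
```

Both DB images share all four words with the query, so a bag-of-words score cannot separate them; only the first has matches that agree on a common displacement.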