4/18/2013 1

Assessment and Grading

Emad Mansour

eam0028@auburn.edu

26 Feb., 2013

In 2-3 minutes, list all the words you know that are related to assessment (whether you know them well or not).

Goals for Today

- Differentiate between Assessment & Evaluation

- Differentiate between formative and summative

assessment of students’ learning

- Identify and apply some classroom assessment

techniques (CATs)

- Identify different types of test items

- Differentiate between validity and reliability

- Contrast grading policies

- Create and use rubrics for grading

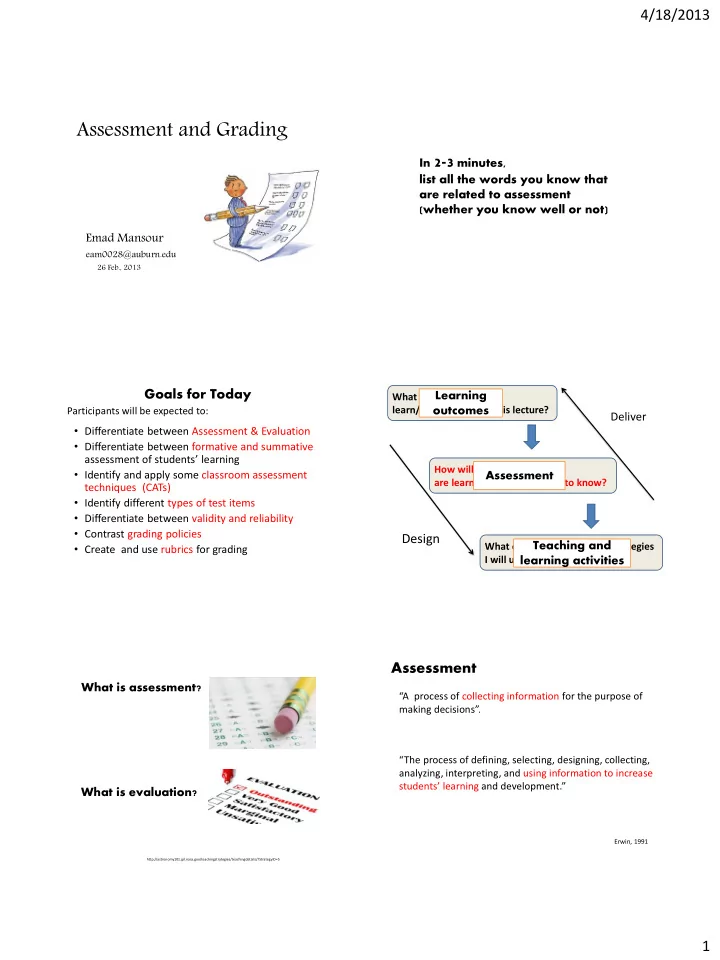

Participants will be expected to: What should students learn/take away from this lecture? How will I know if students are learning what they need to know? What content and teaching strategies I will use?

Design

Deliver

- Learning outcomes

- Assessment

- Teaching and learning activities

What is assessment?

http://astronomy101.jpl.nasa.gov/teachingstrategies/teachingdetails/?StrategyID=5

What is evaluation?

“A process of collecting information for the purpose of making decisions.”

Assessment

“The process of defining, selecting, designing, collecting, analyzing, interpreting, and using information to increase students’ learning and development.”

Erwin, 1991