Asymptotic Analysis

Pedro Ribeiro

DCC/FCUP

2018/2019

Pedro Ribeiro (DCC/FCUP) Asymptotic Analysis 2018/2019 1 / 79


Motivational Example - TSP

Traveling Salesman Problem (Euclidean TSP version)

◮ Input: a set S of n points in the plane
◮ Output: the shortest tour that visits every point of S exactly once and returns to its starting point
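A minimal brute-force sketch of the problem, assuming exhaustive search is the baseline being discussed (the function names are hypothetical). It fixes point 0 as the start and tries all (n − 1)! orderings of the remaining points. That factorial number of candidate tours is what makes the running times quoted for this example explode.

```python
import itertools
import math

def tour_length(points, order):
    """Total Euclidean length of the closed tour visiting points in `order`."""
    return sum(
        math.dist(points[order[i]], points[order[(i + 1) % len(order)]])
        for i in range(len(order))
    )

def brute_force_tsp(points):
    """Return (best_order, best_length) by trying all (n-1)! tours from point 0."""
    n = len(points)
    if n <= 1:
        return list(range(n)), 0.0
    best_order, best_len = None, math.inf
    for perm in itertools.permutations(range(1, n)):
        order = (0,) + perm          # every tour starts (and ends) at point 0
        length = tour_length(points, order)
        if length < best_len:
            best_order, best_len = list(order), length
    return best_order, best_len
```

For the four corners of a unit square, `brute_force_tsp([(0, 0), (0, 1), (1, 1), (1, 0)])` finds a tour of length 4.0 after checking 3! = 6 candidate orderings.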


◮ 10x faster would still take 500 years
◮ 5,000x faster would still take 1 year
◮ 1,000,000x faster would still take two days, but n = 21 would take more than a month and n = 22 would take more than a year!
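These figures fit together under two assumptions inferred from the bullets themselves: the original machine needs about 5,000 years for n = 20 (since 10x faster still takes 500 years), and the work grows like n!, so each extra point multiplies the time by roughly n. A small sketch checking the arithmetic under those assumptions:

```python
import math

DAYS_PER_YEAR = 365.25
# Assumption inferred from "10x faster would still take 500 years":
# the original machine needs about 5,000 years for n = 20.
BASE_DAYS_N20 = 5_000 * DAYS_PER_YEAR

def days_needed(n, speedup):
    """Days to solve size n on a machine `speedup` times faster,
    assuming the work grows like n! (n = 21 costs 21x more than n = 20)."""
    growth = math.factorial(n) / math.factorial(20)
    return BASE_DAYS_N20 * growth / speedup

for speedup in (10, 5_000, 1_000_000):
    years = days_needed(20, speedup) / DAYS_PER_YEAR
    print(f"n = 20, {speedup:,}x faster: {years:.3f} years")
for n in (21, 22):
    print(f"n = {n}, 1,000,000x faster: {days_needed(n, 1_000_000):.1f} days")
```

With a 1,000,000x speedup, n = 20 needs under two days, n = 21 about 38 days and n = 22 well over 800 days: a million-fold faster machine buys only two extra points.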


◮ Each simple operation (e.g. +, −, ←, If) takes 1 step
◮ Loops and procedures, for example, are not simple instructions!
◮ Each memory access also takes 1 step
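To illustrate these rules, here is a hypothetical instrumented function that sums an array while counting steps. Exactly which operations are charged is a modelling choice; this sketch assumes every assignment, addition, comparison and array read costs 1 step, and treats `len()` as free.

```python
def sum_with_step_count(values):
    """Return (sum of values, number of steps) under a toy RAM cost model.

    Assumed cost model (for illustration only): every assignment, addition,
    comparison and array read costs 1 step; len() is not charged."""
    steps = 0
    total = 0
    steps += 1                     # total <- 0
    i = 0
    steps += 1                     # i <- 0
    n = len(values)                # not charged in this model
    while True:
        steps += 1                 # comparison i < n
        if i >= n:
            break
        total = total + values[i]
        steps += 3                 # array read + addition + assignment
        i = i + 1
        steps += 2                 # addition + assignment
    return total, steps
```

The count comes out as 6n + 3 steps (for example `sum_with_step_count([1, 2, 3])` returns `(6, 21)`). A different but equally reasonable cost model would change the constants 6 and 3, but not the linear growth, which is exactly the information asymptotic analysis keeps.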


A counting example


Definitions


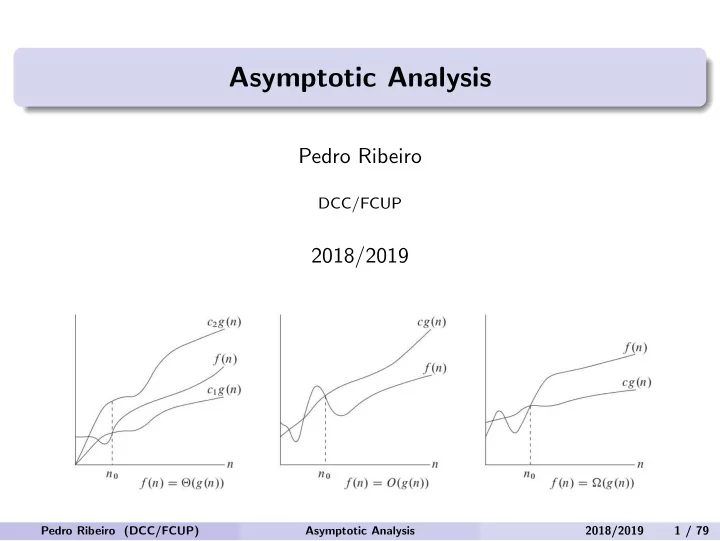

A graphical depiction


Formalization
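For reference, the standard textbook definitions, with c a positive constant and n₀ the threshold beyond which the inequality must hold (a summary in common notation, not necessarily the slide's exact wording):

```latex
\begin{aligned}
f(n) \in O(g(n))      &\iff \exists\, c > 0,\ n_0 > 0 :\ \forall n \ge n_0,\ 0 \le f(n) \le c\,g(n) \\
f(n) \in \Omega(g(n)) &\iff \exists\, c > 0,\ n_0 > 0 :\ \forall n \ge n_0,\ 0 \le c\,g(n) \le f(n) \\
f(n) \in \Theta(g(n)) &\iff f(n) \in O(g(n)) \ \text{and}\ f(n) \in \Omega(g(n))
\end{aligned}
```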


Analogy


A few consequences


A few practical rules


Dominance


A practical view

| n | log n | n | n log n | n² | n³ | 2ⁿ | n! |
|---|---|---|---|---|---|---|---|
| 10 | < 0.01s | < 0.01s | < 0.01s | < 0.01s | < 0.01s | < 0.01s | < 0.01s |
| 20 | < 0.01s | < 0.01s | < 0.01s | < 0.01s | < 0.01s | < 0.01s | 77 years |
| 30 | < 0.01s | < 0.01s | < 0.01s | < 0.01s | < 0.01s | 1.07s | |
| 40 | < 0.01s | < 0.01s | < 0.01s | < 0.01s | < 0.01s | 18.3 min | |
| 50 | < 0.01s | < 0.01s | < 0.01s | < 0.01s | < 0.01s | 13 days | |
| 100 | < 0.01s | < 0.01s | < 0.01s | < 0.01s | < 0.01s | 10¹³ years | |
| 10³ | < 0.01s | < 0.01s | < 0.01s | < 0.01s | 1s | | |
| 10⁴ | < 0.01s | < 0.01s | < 0.01s | 0.1s | 16.7 min | | |
| 10⁵ | < 0.01s | < 0.01s | < 0.01s | 10s | 11 days | | |
| 10⁶ | < 0.01s | < 0.01s | 0.02s | 16.7 min | 31 years | | |
| 10⁷ | < 0.01s | 0.01s | 0.23s | 1.16 days | | | |
| 10⁸ | < 0.01s | 0.1s | 2.66s | 115 days | | | |
| 10⁹ | < 0.01s | 1s | 29.9s | 31 years | | | |
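The entries are consistent with a machine executing about 10⁹ basic operations per second and with base-2 logarithms (both inferred from the numbers, e.g. n = 10⁹ taking 1s and n log n taking 29.9s). Under those assumptions, a small sketch reproduces individual cells; the helper names are illustrative.

```python
import math

OPS_PER_SECOND = 1e9   # assumption: one basic step per nanosecond

GROWTH = {
    "log n": lambda n: math.log2(n),
    "n": lambda n: n,
    "n log n": lambda n: n * math.log2(n),
    "n^2": lambda n: n ** 2,
    "n^3": lambda n: n ** 3,
    "2^n": lambda n: 2 ** n,
    "n!": lambda n: math.factorial(n),   # only evaluate for small n
}

def seconds(func_name, n):
    """Running time in seconds for `func_name` steps on input size n."""
    return GROWTH[func_name](n) / OPS_PER_SECOND

def format_duration(t):
    """Format a duration in seconds roughly the way the table does."""
    for unit, size in (("years", 365.25 * 86400), ("days", 86400), ("min", 60)):
        if t >= size:
            return f"{t / size:.3g} {unit}"
    return "< 0.01s" if t < 0.01 else f"{t:.3g}s"
```

For instance, `format_duration(seconds("2^n", 40))` gives `"18.3 min"` and `format_duration(seconds("n log n", 10**9))` gives `"29.9s"`, matching the corresponding cells.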


Common Functions


Drawing functions


A few more examples


MergeSort
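A compact top-down MergeSort sketch in Python (the slides' own pseudocode is not reproduced here, so names and details are assumptions). Splitting and the two recursive calls account for 2T(n/2), and merging the two sorted halves costs Θ(n), giving the recurrence T(n) = 2T(n/2) + Θ(n).

```python
def merge_sort(a):
    """Return a new sorted list; running time obeys T(n) = 2T(n/2) + Theta(n)."""
    if len(a) <= 1:
        return list(a)
    mid = len(a) // 2
    left = merge_sort(a[:mid])
    right = merge_sort(a[mid:])
    # Merge the two sorted halves in linear time
    merged, i, j = [], 0, 0
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:      # <= keeps equal elements in order (stable)
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    merged.extend(left[i:])
    merged.extend(right[j:])
    return merged
```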


Technicalities


Solving


Unrolling Method
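As an illustration, assuming a MergeSort-style recurrence T(n) = 2T(n/2) + n with T(1) = 1 and n a power of 2 (an example chosen here, not necessarily the slide's), unrolling substitutes the recurrence into itself until the pattern is visible:

```latex
\begin{aligned}
T(n) &= 2T(n/2) + n \\
     &= 2\bigl(2T(n/4) + n/2\bigr) + n = 4T(n/4) + 2n \\
     &= 4\bigl(2T(n/8) + n/4\bigr) + 2n = 8T(n/8) + 3n \\
     &\;\;\vdots \\
     &= 2^i\,T(n/2^i) + i\,n \\
     &= n\,T(1) + n\log_2 n \qquad (\text{taking } i = \log_2 n) \\
     &= n\log_2 n + n \in \Theta(n \log n)
\end{aligned}
```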


Substitution method

◮ With weak induction we assume the result holds for n and then prove it for n + 1
◮ With strong induction we assume it holds for all n′ < n and then prove it for n
◮ We have an exact guess, with no "unknowns" (e.g. 3n² − n)
◮ We only have an idea of the class it belongs to (e.g. cn²)
◮ If we have an exact formula, just use it
◮ Otherwise, it may be "easier" to prove O and Ω separately
  ⋆ E.g. to prove O we can show it is at most c·g(n)
  ⋆ E.g. to prove Ω we can show it is at least c·g(n)
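A finite numerical check can catch a bad choice of constants before attempting the proof. A minimal sketch (the helper name `check_bounds` is hypothetical) for the exact guess 3n² − n, with candidate constants c₁ = 2 and c₂ = 3 chosen here for illustration:

```python
def check_bounds(f, g, c_low, c_high, n0, n_max=10_000):
    """Check c_low*g(n) <= f(n) <= c_high*g(n) for every n0 <= n <= n_max.

    A finite sanity check only: it can refute a guessed pair of constants,
    but it does not prove the asymptotic bound (that still needs induction
    or algebra)."""
    return all(c_low * g(n) <= f(n) <= c_high * g(n) for n in range(n0, n_max + 1))

def f(n):
    return 3 * n * n - n    # the exact guess used as an example

def g(n):
    return n * n

print(check_bounds(f, g, c_low=2, c_high=3, n0=1))   # True
```

Here the algebra confirms the check: 3n² − n ≥ 2n² exactly when n² ≥ n, i.e. for n ≥ 1, and 3n² − n ≤ 3n² always, so 3n² − n ∈ Θ(n²) with c₁ = 2, c₂ = 3, n₀ = 1.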

Substitution method

$\frac{(n+1)n}{2}$


$$\frac{n(n-1)}{2} + n = \frac{n^2-n}{2} + n = \frac{n^2-n+2n}{2} = \frac{n^2+n}{2} = \frac{(n+1)n}{2}$$
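The algebra above is the inductive step for the guess (n + 1)n/2: assuming the formula for n − 1 and adding n yields it for n, which suggests the recurrence T(n) = T(n − 1) + n. Assuming that recurrence with T(1) = 1 (the base case is an assumption), a quick numeric comparison against the closed form:

```python
def t_by_recurrence(n):
    """T(1) = 1, T(n) = T(n-1) + n (assumed recurrence), evaluated iteratively."""
    total = 1
    for k in range(2, n + 1):
        total += k
    return total

def t_closed_form(n):
    """The guessed closed form (n + 1) * n / 2."""
    return (n + 1) * n // 2

assert all(t_by_recurrence(n) == t_closed_form(n) for n in range(1, 2_000))
```

Agreement on finitely many n is evidence, not proof; the induction is what establishes the formula for all n.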


Substitution Method


Substitution Method - Subtleties


Recursion Tree Method


Master Theorem
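A minimal helper for the common special case T(n) = aT(n/b) + Θ(n^d) with a ≥ 1, b > 1, d ≥ 0. This is an assumption: the general theorem is stated for an arbitrary f(n) and needs a regularity condition in its third case, which this polynomial version does not check. The helper compares d with the critical exponent log_b a.

```python
import math

def master_theorem(a, b, d):
    """Solve T(n) = a*T(n/b) + Theta(n^d) for a >= 1, b > 1, d >= 0.

    Covers only the polynomial special case of the Master Theorem."""
    if a < 1 or b <= 1 or d < 0:
        raise ValueError("need a >= 1, b > 1, d >= 0")
    critical = math.log(a, b)                  # log_b a
    if math.isclose(d, critical):
        return f"Theta(n^{d:g} log n)"         # balanced: every level costs the same
    if d > critical:
        return f"Theta(n^{d:g})"               # root (top-level work) dominates
    return f"Theta(n^{critical:.3f})"          # leaves dominate
```

For example, MergeSort (a = 2, b = 2, d = 1) gives `"Theta(n^1 log n)"`, binary search (a = 1, b = 2, d = 0) gives `"Theta(n^0 log n)"`, i.e. Θ(log n), and Strassen (a = 7, b = 2, d = 2) gives `"Theta(n^2.807)"`, i.e. Θ(n^{log₂ 7}).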


Intuition behind it


Revisiting the examples


Matrix Multiplication
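The straightforward method computes each entry as C[i][j] = Σₖ A[i][k]·B[k][j], using n multiplications for each of the n² entries, hence Θ(n³) time. A minimal sketch with lists of lists (the function name is illustrative):

```python
def matmul_naive(A, B):
    """Multiply two n x n matrices (lists of lists) with the Theta(n^3) triple loop."""
    n = len(A)
    C = [[0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            s = 0
            for k in range(n):          # n multiplications per entry
                s += A[i][k] * B[k][j]
            C[i][j] = s
    return C
```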


S1 = B12 − B22, S2 = A11 + A12, S3 = A21 + A22, S4 = B21 − B11, S5 = A11 + A22,
S6 = B11 + B22, S7 = A12 − A22, S8 = B21 + B22, S9 = A11 − A21, S10 = B11 + B12
10x add/subtract matrices of size n/2: Θ(n²)

P1 = A11 · S1, P2 = S2 · B22, P3 = S3 · B11,
P4 = A22 · S4, P5 = S5 · S6, P6 = S7 · S8, P7 = S9 · S10
7 multiplications of matrices of size n/2: 7T(n/2)

C11 = P5 + P4 − P2 + P6
C12 = P1 + P2
C21 = P3 + P4
C22 = P5 + P1 − P3 − P7
8x add/subtract matrices of size n/2: Θ(n²)
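The block formulas above translate directly into a recursive sketch. Assumptions: pure-Python lists of lists, n a power of 2, and a base case of n = 1 (practical implementations switch to the naive method below some cutoff size). Helper names are illustrative.

```python
def _add(X, Y):
    return [[x + y for x, y in zip(rx, ry)] for rx, ry in zip(X, Y)]

def _sub(X, Y):
    return [[x - y for x, y in zip(rx, ry)] for rx, ry in zip(X, Y)]

def _split(M):
    """Split an n x n matrix (n even) into four n/2 x n/2 blocks."""
    h = len(M) // 2
    return ([row[:h] for row in M[:h]], [row[h:] for row in M[:h]],
            [row[:h] for row in M[h:]], [row[h:] for row in M[h:]])

def strassen(A, B):
    """Multiply n x n matrices (n a power of 2) using 7 recursive products."""
    n = len(A)
    if n == 1:
        return [[A[0][0] * B[0][0]]]
    A11, A12, A21, A22 = _split(A)
    B11, B12, B21, B22 = _split(B)
    # 10 additions/subtractions of n/2 x n/2 matrices: Theta(n^2)
    S1, S2, S3 = _sub(B12, B22), _add(A11, A12), _add(A21, A22)
    S4, S5, S6 = _sub(B21, B11), _add(A11, A22), _add(B11, B22)
    S7, S8 = _sub(A12, A22), _add(B21, B22)
    S9, S10 = _sub(A11, A21), _add(B11, B12)
    # 7 recursive multiplications: 7T(n/2)
    P1, P2, P3 = strassen(A11, S1), strassen(S2, B22), strassen(S3, B11)
    P4, P5, P6 = strassen(A22, S4), strassen(S5, S6), strassen(S7, S8)
    P7 = strassen(S9, S10)
    # 8 additions/subtractions to assemble the result: Theta(n^2)
    C11 = _add(_sub(_add(P5, P4), P2), P6)
    C12 = _add(P1, P2)
    C21 = _add(P3, P4)
    C22 = _sub(_sub(_add(P5, P1), P3), P7)
    top = [r1 + r2 for r1, r2 in zip(C11, C12)]
    bottom = [r1 + r2 for r1, r2 in zip(C21, C22)]
    return top + bottom
```

The structure mirrors the recurrence T(n) = 7T(n/2) + Θ(n²), which solves to Θ(n^{log₂ 7}) ≈ Θ(n^2.81).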


A Puzzle
