Bio-Inspired Computing for Music

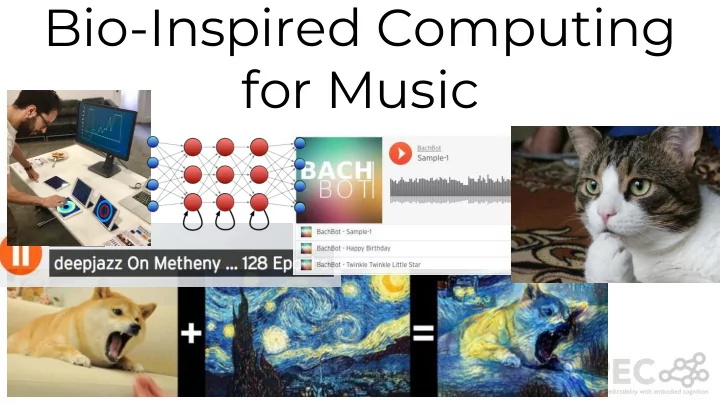

Charles Martin - Univ. Oslo, Dept. Informatics

https://folk.uio.no/charlepm https://charlesmartin.com.au

But why??

Predictive Musical Interaction

- How can musical instruments be more “intelligent”?

- What would that mean for musicians, music making, and music?

microjam.info

Music and Research

We make new musical instruments, measure musical experiences, and find new ways to express ourselves with technology.

Why?

- Expression and creativity are important.

- Music is everywhere; people care about it.

- Music is hard; realtime, high standards.

- It’s fun.

You don’t have to be a professional musician to do a great musical project!

Space: the final frontier. These are the voyages

Models

Then... Now...

Mozer (1994) Eck & Schmidhuber (2003)

Music Generation: Predicting note-by-note

Magenta (Google) 2016-2017
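
The note-by-note idea can be illustrated with a much simpler stand-in than the recurrent networks named on these slides. The sketch below is not any of those models: it uses a first-order Markov chain, which only looks at the previous note, but it shows the same generation loop in which each predicted note is fed back in as the context for the next one. The training melody and the function names are hypothetical.

```python
import random
from collections import defaultdict

def train_transitions(melody):
    """Count which note follows which in a training melody."""
    counts = defaultdict(lambda: defaultdict(int))
    for current, following in zip(melody, melody[1:]):
        counts[current][following] += 1
    return counts

def generate(counts, start, length, rng):
    """Generate a melody one note at a time, feeding each prediction back in."""
    notes = [start]
    while len(notes) < length:
        options = counts.get(notes[-1])
        if not options:  # no continuation was seen for this note
            break
        choices, weights = zip(*options.items())
        notes.append(rng.choices(choices, weights=weights)[0])
    return notes

# Hypothetical training melody as MIDI note numbers (a C major fragment).
melody = [60, 62, 64, 65, 67, 65, 64, 62, 60, 64, 67, 64, 60]
counts = train_transitions(melody)
generated = generate(counts, start=60, length=16, rng=random.Random(0))
print(generated)
```

An RNN replaces the lookup table with a learned function of the whole history so far, but the sampling loop stays essentially the same.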

Representations of Music

Thin Medium? Thick

folkRNN Performance RNN WaveNet

Theme: Creative Machine Learning

- Using Deep Learning to represent and create music and art!

- Compose music for video games?

- Mobile apps that generate music endlessly!

- MIREX Competition Tasks - music information retrieval

- Techniques: Deep Learning, Recurrent Networks, Convolutional Networks,

Theme: Intelligent Instruments

How can we integrate ML/AI into NIMEs?

- Help users make better music.

- “Guess” intentions (key, scale, harmony).

- Generate extra sounds (ensemble experience) using ANNs or evolution.

- Make sound/music in response to sensors/cameras (sonification).

- Challenge/fun here is interacting with ML algorithms.

Data → ML → Interaction!
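
As one very small illustration of "guessing" a player's intentions, the sketch below estimates a major key from the notes played so far by counting how many of them fall inside each candidate scale. This is a plain rule-based heuristic, not a method from the slides, and not an ML model; `guess_major_key` and the example notes are hypothetical.

```python
MAJOR_SCALE = {0, 2, 4, 5, 7, 9, 11}  # semitone offsets from the tonic
NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]

def guess_major_key(midi_notes):
    """Return the tonic whose major scale contains the most played notes."""
    def score(tonic):
        return sum((note - tonic) % 12 in MAJOR_SCALE for note in midi_notes)
    best = max(range(12), key=score)  # ties resolve to the lowest tonic
    return NOTE_NAMES[best]

# Hypothetical input: notes received from a touchscreen or MIDI instrument.
played = [62, 64, 66, 67, 69, 71, 73]
print(guess_major_key(played))
```

An instrument could use such an estimate to constrain or harmonise what the performer plays next; a learned model would replace the counting rule with one trained on data.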

Theme: Embedded Instruments

- Use microcontrollers / single-board computers to make stand-alone NIMEs

- Includes prototyping, hardware creation, programming.

- Could be for augmented instruments as well?

- e.g.,

Theme: Social Music Making

How can music bring people together?

- Make “ensemble” playing easier.

- Instruments that change to support “parts” in a group.

- Asynchronous music making: performing as a group via Facebook or the web

Mixture Density RNN
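
A mixture density network outputs the parameters of a mixture distribution rather than a single predicted value, and predictions are drawn by sampling from that mixture. The sketch below shows only this sampling step for a one-dimensional Gaussian mixture. The weights, means and standard deviations are made-up numbers standing in for what a network might output at one time step, and the function name is hypothetical.

```python
import random

def sample_mixture(weights, means, stddevs, rng):
    """Draw one value from a 1-D Gaussian mixture."""
    # Pick a component in proportion to its mixture weight...
    k = rng.choices(range(len(weights)), weights=weights)[0]
    # ...then sample from that component's Gaussian.
    return rng.gauss(means[k], stddevs[k])

# Hypothetical mixture parameters for one time step (e.g. time until next touch).
rng = random.Random(1)
weights, means, stddevs = [0.7, 0.3], [0.1, 0.5], [0.02, 0.05]
samples = [sample_mixture(weights, means, stddevs, rng) for _ in range(5)]
print(samples)
```

Sampling from a mixture lets the model keep several plausible continuations open instead of averaging them into one.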

Neural Touchscreen Ensemble

Neural iPad Ensemble

Embodied Musical Predictions

EMPI: Embodied Musical Predictive Instrument

RITMO: Centre for Interdisciplinary Studies in Rhythm, Time and Motion

MusicLab vol. 3: Rhythm

MusicLab Vol. 3 explores the phenomenon of rhythm - in music and in the body - all within MusicLab’s unique blend of research and edutainment through an intellectual warm-up with world-leading experts, music-dance performances and data jockeying with our house DJ and anyone else interested.

Time and place: Nov. 15, 2018 7:00 PM, Escape, Ole Johan Dahls hus

https://www.hf.uio.no/ritmo/english/news-and-events/events/musiclab/2018/rhythm/index.html