Slides adapted with thanks from: Dr. Marie desJardin

Artificial Intelligence

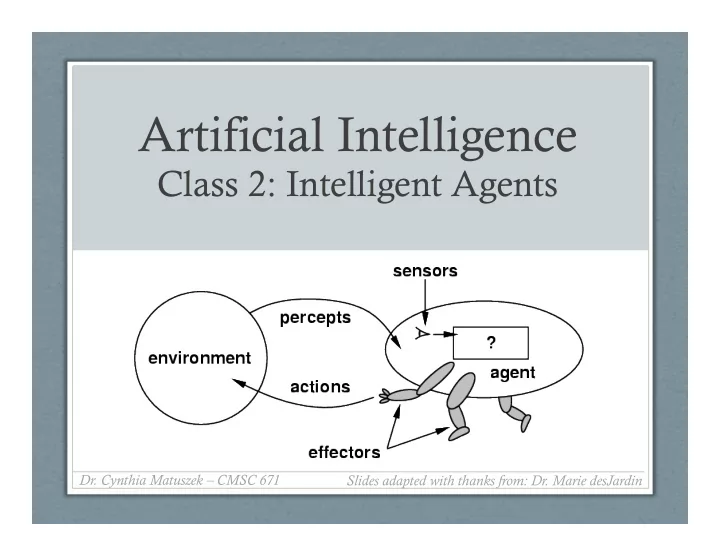

Class 2: Intelligent Agents

- Dr. Cynthia Matuszek – CMSC 671

Bookkeeping

- Due last night:
  - Introduction survey
  - Academic integrity
- If you haven’t done these, do!

use to characterize different problem spaces?

environment

via the environment

(olfaction), neuromuscular system (proprioception), …

…), auditory streams (pitch, loudness, direction), …

to be carefully defined

levels of abstraction!

input, microphone, GPS, …

customers, …

agent programs!

action(s) that maximize its expected performance

resources required, effect on environment, constraints met, user satisfaction, …
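The idea of choosing the action(s) that maximize expected performance can be sketched in a few lines. This is only an illustration under stated assumptions: the outcome model, the action names, and the performance scores below are hypothetical and do not come from the slides.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical outcome model: each action maps to (probability, outcome) pairs.
OutcomeModel = Dict[str, List[Tuple[float, str]]]


def expected_performance(
    outcomes: List[Tuple[float, str]],
    performance: Callable[[str], float],
) -> float:
    """Probability-weighted average of the performance measure."""
    return sum(p * performance(outcome) for p, outcome in outcomes)


def rational_action(model: OutcomeModel, performance: Callable[[str], float]) -> str:
    """Return the action with the highest expected performance."""
    return max(model, key=lambda a: expected_performance(model[a], performance))


# Toy numbers, assumed purely for illustration.
model: OutcomeModel = {
    "highway": [(0.8, "on_time"), (0.2, "late")],
    "side_streets": [(0.5, "on_time"), (0.5, "slightly_late")],
}
scores = {"on_time": 10.0, "slightly_late": 4.0, "late": 0.0}

best = rational_action(model, scores.__getitem__)
print(best)  # highway (expected 8.0 versus 7.0 for side_streets)
```

Swapping in a different performance measure (for example, one that penalizes resources used) can change which action counts as rational, even though the outcome model stays the same.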

priori decisions

the environment, the environment is fully observable

unpredictable outcomes.

need not deal with uncertainty.

depend on what actions occurred in previous episodes.

connected episodes.

environment is discrete, otherwise it is continuous.

needs to be concerned about strategic, game-theoretic aspects of the environment (for either cooperative or competitive agents)

properties, whereas most social and economic systems get their complexity from the interactions of (more or less) rational agents.

| | Fully observable? | Deterministic? | Episodic? | Static? | Discrete? | Single agent? |
|---|---|---|---|---|---|---|
| Solitaire | No | Yes | Yes | Yes | Yes | Yes |
| Backgammon | Yes | No | No | Yes | Yes | No |
| Taxi driving | No | No | No | No | No | No |
| Internet shopping | No | No | No | No | Yes | No |
| Medical diagnosis | No | No | No | No | No | Yes |

→ Lots of (most?) real-world domains fall into the hardest case! ←
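To make the "hardest case" concrete, the environment table above can be encoded as data and queried. This is a rough sketch: the Yes/No values are copied from the table, while counting "No" answers as a crude difficulty score is an assumption made here for illustration, not something the slides define.

```python
# Columns of the environment table, in order.
PROPERTIES = [
    "fully observable", "deterministic", "episodic",
    "static", "discrete", "single agent",
]

# True = "Yes", False = "No", as given in the table.
TABLE = {
    "Solitaire":         [False, True,  True,  True,  True,  True],
    "Backgammon":        [True,  False, False, True,  True,  False],
    "Taxi driving":      [False, False, False, False, False, False],
    "Internet shopping": [False, False, False, False, True,  False],
    "Medical diagnosis": [False, False, False, False, False, True],
}


def hard_property_count(domain: str) -> int:
    """Number of properties answered 'No' (assumed difficulty score)."""
    return sum(1 for value in TABLE[domain] if not value)


# Domains where every property is 'No': the hardest case.
hardest = [d for d in TABLE if hard_property_count(d) == len(PROPERTIES)]

# Domains ordered from most to fewest 'No' answers.
ranked = sorted(TABLE, key=hard_property_count, reverse=True)

print(hardest)  # ['Taxi driving']
for domain in ranked:
    print(domain, hard_property_count(domain))
```

Only taxi driving is "No" on all six properties, but Internet shopping and medical diagnosis each miss just one, which matches the point that many real-world domains sit at or near the hardest case.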
