
20070322 chap4 1

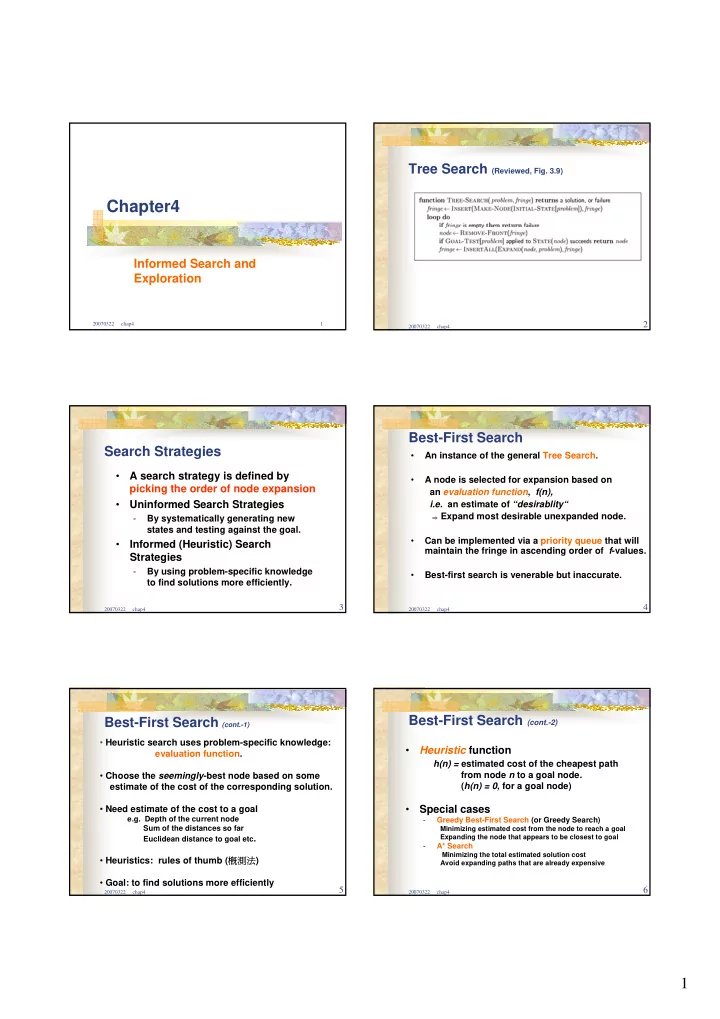

Chapter 4

Informed Search and Exploration


Tree Search (Reviewed, Fig. 3.9)


Search Strategies

- A search strategy is defined by picking the order of node expansion.

- Uninformed Search Strategies
  - By systematically generating new states and testing them against the goal.

- Informed (Heuristic) Search Strategies
  - By using problem-specific knowledge to find solutions more efficiently.
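Because a strategy is just the order of node expansion, the tree-search loop can be written once with the expansion order passed in as a parameter. This is a minimal Python sketch under assumptions not in the slides: the state space is a hypothetical dict of successor lists, the fringe stores whole paths, and the names `tree_search`, `fifo`, and `lifo` are illustrative. A FIFO order gives breadth-first search and a LIFO order gives depth-first search, both uninformed.

```python
from typing import Callable, Dict, List, Optional

# Hypothetical state space: each state maps to its successor states.
Graph = Dict[str, List[str]]
Path = List[str]


def tree_search(graph: Graph, start: str, goal: str,
                pick: Callable[[List[Path]], int]) -> Optional[Path]:
    """Generic tree search; the strategy is fully determined by `pick`,
    which returns the index of the fringe entry to expand next."""
    fringe: List[Path] = [[start]]  # each entry is a path from the start state
    while fringe:
        path = fringe.pop(pick(fringe))  # strategy = order of node expansion
        if path[-1] == goal:  # goal test when the node is selected
            return path
        for child in graph.get(path[-1], []):  # expand: generate successors
            fringe.append(path + [child])
    return None  # fringe exhausted without reaching the goal


def fifo(fringe: List[Path]) -> int:
    return 0  # oldest entry first -> breadth-first search


def lifo(fringe: List[Path]) -> int:
    return len(fringe) - 1  # newest entry first -> depth-first search


demo: Graph = {"S": ["A", "B"], "A": ["C", "G"], "B": ["G"], "C": [], "G": []}
print(tree_search(demo, "S", "G", fifo))  # ['S', 'A', 'G']
print(tree_search(demo, "S", "G", lifo))  # ['S', 'B', 'G']
```

Like plain tree search, this sketch does not detect repeated states, so on a graph with cycles the LIFO order can loop forever.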


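Best-First Search

- An instance of the general Tree Search.

- A node is selected for expansion based on an evaluation function, f(n), i.e., an estimate of “desirability”.
  ⇒ Expand the most desirable unexpanded node.

- Can be implemented via a priority queue that maintains the fringe in ascending order of f-values, so the node with the lowest f(n) (the most desirable one) is expanded first.

- The name “best-first search” is venerable but inaccurate: if we could really expand the best node first, there would be no search at all, just a straight march to the goal. We can only expand the node that appears best according to f(n).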
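The priority-queue implementation of the fringe can be illustrated with Python's standard `heapq` module, which maintains a binary min-heap, so popping always returns the entry with the smallest f-value. This is a minimal sketch; the class name `PriorityFringe` and the insertion-order tie-breaking are illustrative choices, not something the slides specify.

```python
import heapq
import itertools
from typing import Any, List, Tuple


class PriorityFringe:
    """Fringe kept in ascending order of f-values (a binary min-heap)."""

    def __init__(self) -> None:
        self._heap: List[Tuple[float, int, Any]] = []
        self._tie = itertools.count()  # equal f-values pop in insertion order

    def push(self, node: Any, f_value: float) -> None:
        heapq.heappush(self._heap, (f_value, next(self._tie), node))

    def pop(self) -> Any:
        """Remove and return the most desirable node (lowest f-value)."""
        return heapq.heappop(self._heap)[2]

    def __len__(self) -> int:
        return len(self._heap)


fringe = PriorityFringe()
for node, f_value in [("A", 7.0), ("B", 3.0), ("C", 5.0)]:
    fringe.push(node, f_value)
print([fringe.pop() for _ in range(len(fringe))])  # ['B', 'C', 'A']
```

The counter in each heap entry means two nodes with the same f-value are never compared directly, so nodes need not be orderable themselves.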


Best-First Search (cont.-1)

- Heuristic search uses problem-specific knowledge in the form of an evaluation function.

- Choose the seemingly best node based on some estimate of the cost of the corresponding solution.

- Need an estimate of the cost to a goal, e.g.:
  - depth of the current node
  - sum of the distances so far
  - Euclidean distance to the goal
  - etc.

- Heuristics: rules of thumb (approximate estimation methods)

- Goal: to find solutions more efficiently
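As an example of the last estimate in the list, the straight-line (Euclidean) distance to the goal can be computed whenever every state has coordinates. This sketch assumes a hypothetical three-state map; `COORDS` and `euclidean_h` are illustrative names, not from the slides. The estimate is 0 at the goal itself.

```python
import math
from typing import Dict, Tuple

# Hypothetical map: each state is a point in the plane.
COORDS: Dict[str, Tuple[float, float]] = {
    "S": (0.0, 0.0),
    "A": (3.0, 4.0),
    "G": (6.0, 8.0),
}


def euclidean_h(state: str, goal: str = "G") -> float:
    """Straight-line (Euclidean) distance from `state` to `goal`."""
    (x1, y1), (x2, y2) = COORDS[state], COORDS[goal]
    return math.hypot(x2 - x1, y2 - y1)


print(euclidean_h("S"), euclidean_h("A"), euclidean_h("G"))  # 10.0 5.0 0.0
```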


- Heuristic function
  h(n) = estimated cost of the cheapest path from node n to a goal node (h(n) = 0 for a goal node).

- Special cases
  - Greedy Best-First Search (or Greedy Search)
    - Minimizes the estimated cost from the node to reach a goal.
    - Expands the node that appears to be closest to the goal.
  - A* Search
    - Minimizes the total estimated solution cost.
    - Avoids expanding paths that are already expensive.
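The two special cases can be compared with one best-first loop that differs only in its evaluation function. Following the standard textbook formulation (an assumption here, since these slides do not define it), greedy search uses f(n) = h(n), while A* uses f(n) = g(n) + h(n), where g(n) is the path cost from the start to n. The graph, the h-values, and the names `GRAPH`, `H`, `best_first`, `greedy`, and `a_star` are hypothetical, chosen so that the two strategies return different paths.

```python
import heapq
import itertools
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical weighted graph: state -> [(successor, step cost), ...]
GRAPH: Dict[str, List[Tuple[str, float]]] = {
    "S": [("A", 1.0), ("B", 4.0)],
    "A": [("G", 10.0)],
    "B": [("G", 2.0)],
    "G": [],
}
# Hypothetical heuristic values h(n); h(G) = 0 at the goal.
H: Dict[str, float] = {"S": 5.0, "A": 1.0, "B": 2.0, "G": 0.0}


def best_first(start: str, goal: str,
               f: Callable[[float, float], float]) -> Optional[Tuple[List[str], float]]:
    """Tree-search best-first: always expand the fringe node with the lowest f(g, h)."""
    tie = itertools.count()  # breaks ties without comparing paths
    fringe = [(f(0.0, H[start]), next(tie), 0.0, [start])]
    while fringe:
        _, _, g, path = heapq.heappop(fringe)
        node = path[-1]
        if node == goal:  # goal test on expansion, not on generation
            return path, g
        for child, cost in GRAPH[node]:
            g_child = g + cost  # path cost so far, g(child)
            entry = (f(g_child, H[child]), next(tie), g_child, path + [child])
            heapq.heappush(fringe, entry)
    return None


def greedy(g: float, h: float) -> float:
    return h  # f(n) = h(n): ignore the cost already spent


def a_star(g: float, h: float) -> float:
    return g + h  # f(n) = g(n) + h(n)


print(best_first("S", "G", greedy))  # (['S', 'A', 'G'], 11.0)
print(best_first("S", "G", a_star))  # (['S', 'B', 'G'], 6.0)
```

Greedy search follows A because h(A) is the smallest estimate and then reaches the goal through the expensive edge A→G, returning a path of cost 11. A* adds the cost already spent, g(n), so the goal entry reached through A (f = 11) loses to the one reached through B (f = 6), giving the cheaper path.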