Class of

Computer Networks M

Antonio Corradi Academic year 2015/2016 Group issues and policies

University of Bologna Dipartimento di Informatica – Scienza e Ingegneria (DISI) Engineering Bologna Campus

Groups issues & policies 1

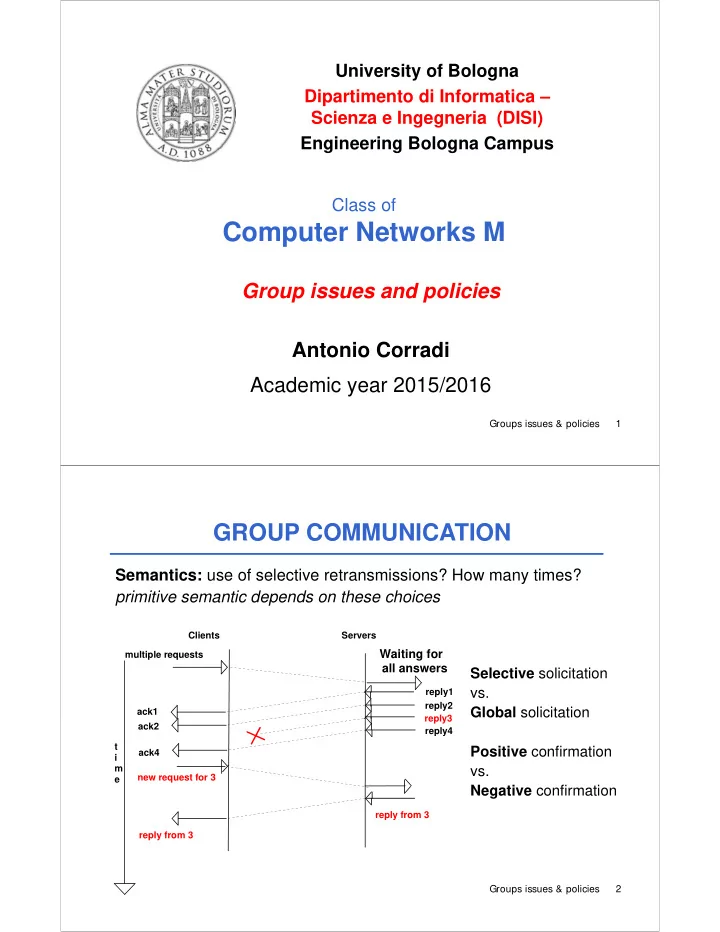

Semantics: use of selective retransmissions? How many times? primitive semantic depends on these choices

GROUP COMMUNICATION

Waiting for

Servers t i m e Clients multiple requests

all answers

ack1 ack2 ack4 new request for 3 reply from 3 reply from 3 reply1 reply2 reply3 reply4

Selective solicitation vs. Global solicitation Positive confirmation vs. Negative confirmation

Groups issues & policies 2