CPU Scheduling

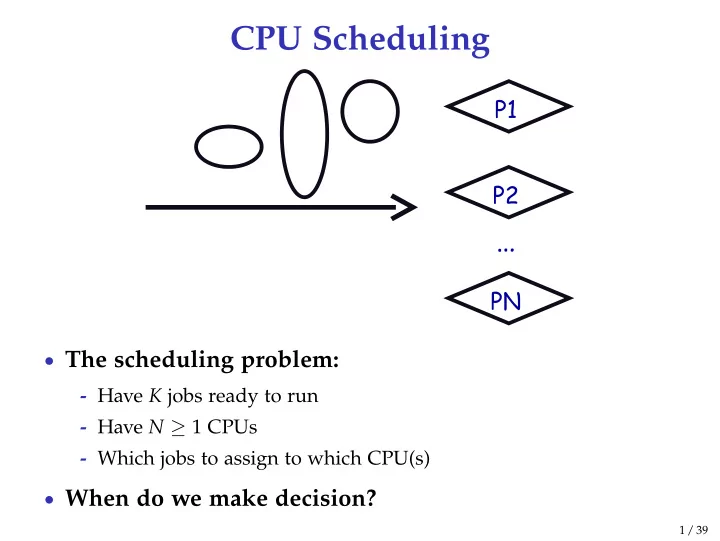

- The scheduling problem:
  - Have K jobs ready to run
  - Have N ≥ 1 CPUs
  - Which jobs to assign to which CPU(s)?
- When do we make the decision?
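As a concrete framing of the problem above, the following minimal sketch assigns ready jobs to idle CPUs in arrival order. The first-come-first-served choice, the `assign_jobs` name, and the job labels are illustrative assumptions, not something the slides prescribe; any policy that decides which job goes to which CPU would fit the same shape.

```python
from collections import deque


def assign_jobs(ready_jobs, num_cpus):
    """Assign up to num_cpus ready jobs to CPUs in arrival order.

    Arrival order is an assumed policy, chosen only for illustration.
    Returns (assignment, still_ready): a dict mapping CPU index to job,
    and the list of jobs left in the ready queue.
    """
    if num_cpus < 1:
        raise ValueError("need N >= 1 CPUs")
    queue = deque(ready_jobs)
    assignment = {}
    for cpu in range(num_cpus):
        if not queue:
            break  # fewer ready jobs than CPUs: remaining CPUs stay idle
        assignment[cpu] = queue.popleft()
    return assignment, list(queue)


# K = 3 ready jobs, N = 2 CPUs (hypothetical job names)
assignment, still_ready = assign_jobs(["A", "B", "C"], 2)
print(assignment)   # {0: 'A', 1: 'B'}
print(still_ready)  # ['C']
```

With K > N, some jobs necessarily stay in the ready queue, which is why the question of when to revisit the decision matters.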


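Process state diagram:

- States: new, ready, running, waiting, terminated
- Transitions: admitted (new → ready), scheduler dispatch (ready → running), interrupt (running → ready), I/O or event wait (running → waiting), I/O or event completion (waiting → ready), exit (running → terminated)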
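The state and transition labels above can be sketched as a small transition table. The extracted text only lists the labels, so the pairing of each transition with its source and destination state follows the conventional process state diagram and should be read as an assumption; `TRANSITIONS` and `next_state` are hypothetical names.

```python
# Transition table: (current state, event) -> next state.
# The pairing follows the conventional process state diagram (assumed).
TRANSITIONS = {
    ("new", "admitted"): "ready",
    ("ready", "scheduler dispatch"): "running",
    ("running", "interrupt"): "ready",
    ("running", "I/O or event wait"): "waiting",
    ("waiting", "I/O or event completion"): "ready",
    ("running", "exit"): "terminated",
}


def next_state(state, event):
    """Return the state reached from `state` on `event`, or raise ValueError."""
    try:
        return TRANSITIONS[(state, event)]
    except KeyError:
        raise ValueError(f"no transition from {state!r} on {event!r}") from None


# Walk one hypothetical job through its lifetime.
state = "new"
for event in [
    "admitted",
    "scheduler dispatch",
    "I/O or event wait",
    "I/O or event completion",
    "scheduler dispatch",
    "exit",
]:
    state = next_state(state, event)
print(state)  # terminated
```

Only the ready → running edge is taken by the scheduler itself; the other edges change which jobs are eligible to be picked.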
