CS4100 Outline

- We're done with Part I: Search and Planning!
- Part II: Probabilistic Reasoning
  - Diagnosis
  - Speech recognition
  - Tracking objects
  - Robot mapping
  - Genetics
  - Error correcting codes
  - … lots more!
- Part III: Machine Learning

CS 4100: Artificial Intelligence

Probability

Jan-Willem van de Meent, Northeastern University

[These slides were created by Dan Klein and Pieter Abbeel for CS188 Intro to AI at UC Berkeley. All CS188 materials are available at http://ai.berkeley.edu.]

Today

- Probability
  - Random Variables
  - Joint and Marginal Distributions
  - Conditional Distribution
  - Product Rule, Chain Rule, Bayes' Rule
  - Inference
  - Independence
- You'll need all this stuff A LOT for the next few weeks, so make sure you go over it now!

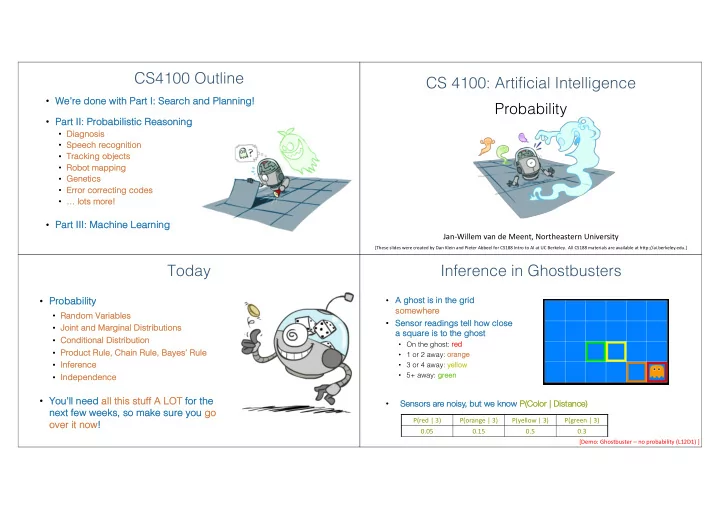

Inference in Ghostbusters

- A ghost is in the grid somewhere
- Sensor readings tell how close a square is to the ghost
  - On the ghost: red
  - 1 or 2 away: orange
  - 3 or 4 away: yellow
  - 5+ away: green
- Sensors are noisy, but we know P(Color | Distance)
  - P(red | 3) = 0.05
  - P(orange | 3) = 0.15
  - P(yellow | 3) = 0.5
  - P(green | 3) = 0.3
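As a minimal sketch of what "noisy sensor" means here, the distance-3 row of P(Color | Distance) can be stored as a dictionary and sampled. The four probabilities come from the slide. The function names (`is_distribution`, `sample_reading`, `empirical_frequencies`) and the use of a seeded `random.Random` are illustrative assumptions, not part of the Ghostbusters demo code.

```python
import random

# Sensor model for a square at distance 3 from the ghost (values from the slide).
P_COLOR_GIVEN_DIST_3 = {"red": 0.05, "orange": 0.15, "yellow": 0.5, "green": 0.3}


def is_distribution(dist, tol=1e-9):
    """Check that all entries are non-negative and sum to 1 (within tol)."""
    return all(p >= 0 for p in dist.values()) and abs(sum(dist.values()) - 1.0) < tol


def sample_reading(dist, rng):
    """Draw one noisy color reading according to the given distribution."""
    colors = list(dist)
    weights = [dist[c] for c in colors]
    return rng.choices(colors, weights=weights, k=1)[0]


def empirical_frequencies(dist, n, seed=0):
    """Sample n readings and return the observed fraction of each color."""
    rng = random.Random(seed)  # hypothetical fixed seed, for repeatability only
    counts = {c: 0 for c in dist}
    for _ in range(n):
        counts[sample_reading(dist, rng)] += 1
    return {c: counts[c] / n for c in counts}


if __name__ == "__main__":
    print(is_distribution(P_COLOR_GIVEN_DIST_3))
    print(empirical_frequencies(P_COLOR_GIVEN_DIST_3, 20000))
```

Yellow is the most likely reading at distance 3, but the other three colors together still appear about half the time, which is why a single reading cannot pin down the ghost's distance.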

[Demo: Ghostbuster – no probability (L12D1) ]