SLIDE 1

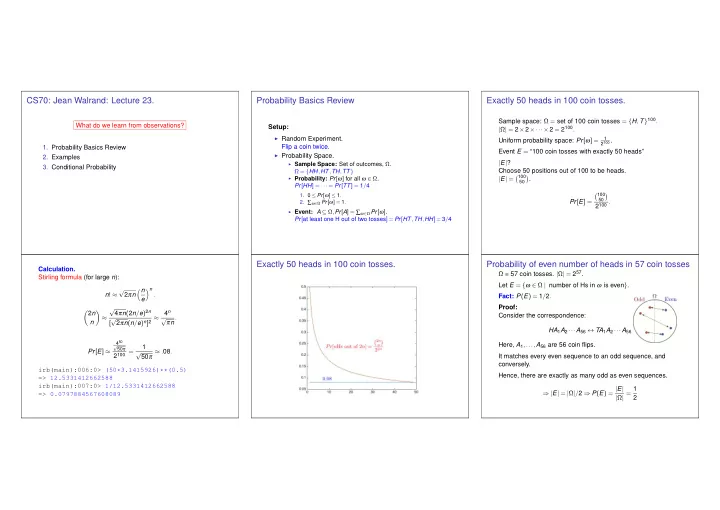

CS70: Jean Walrand: Lecture 23.

What do we learn from observations?

1. Probability Basics Review

2. Examples

3. Conditional Probability

Probability Basics Review

Setup:

◮ Random Experiment.

Flip a coin twice.

◮ Probability Space.

◮ Sample Space: Set of outcomes, Ω.

Ω = {HH,HT,TH,TT}

◮ Probability: Pr[ω] for all ω ∈ Ω.

Pr[HH] = ··· = Pr[TT] = 1/4

1. 0 ≤ Pr[ω] ≤ 1.

2. ∑ω∈Ω Pr[ω] = 1.

◮ Event: A ⊆ Ω, Pr[A] = ∑ω∈A Pr[ω].

Pr[at least one H out of two tosses] = Pr[HT,TH,HH] = 3/4
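The setup above can be checked by brute-force enumeration. The following is a minimal Python sketch, not part of the original slides; the names `omega`, `pr`, and `prob` are illustrative assumptions. It builds the sample space for two coin flips, assigns the uniform probability 1/4 to each outcome, and sums over the outcomes in an event.

```python
from fractions import Fraction
from itertools import product

# Sample space for two coin flips: Ω = {HH, HT, TH, TT}
omega = ["".join(flips) for flips in product("HT", repeat=2)]

# Uniform probability: Pr[ω] = 1/|Ω| = 1/4 for every outcome
pr = {w: Fraction(1, len(omega)) for w in omega}

def prob(event):
    """Pr[A] = sum of Pr[ω] over the outcomes ω in the event A."""
    return sum(pr[w] for w in event)

# Event: at least one H out of two tosses
at_least_one_head = {w for w in omega if "H" in w}

print(sorted(at_least_one_head))  # ['HH', 'HT', 'TH']
print(prob(at_least_one_head))    # 3/4
print(prob(omega))                # 1 (the probabilities sum to one)
```

Using `Fraction` keeps the arithmetic exact, so the result prints as 3/4 rather than a rounded float.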

Exactly 50 heads in 100 coin tosses.

Sample space: $\Omega$ = set of 100 coin tosses = $\{H,T\}^{100}$. $|\Omega| = 2 \times 2 \times \cdots \times 2 = 2^{100}$. Uniform probability space: $\Pr[\omega] = \frac{1}{2^{100}}$.

Event E = “100 coin tosses with exactly 50 heads”. |E|? Choose 50 positions out of 100 to be heads. $|E| = \binom{100}{50}$.

$\Pr[E] = \dfrac{\binom{100}{50}}{2^{100}}$.
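The counting argument can be evaluated numerically. The following is a small Python sketch, not taken from the slides; the variable names are assumptions chosen for illustration. It computes |Ω|, |E|, and their ratio with exact integer arithmetic, using `math.comb` for the binomial coefficient.

```python
from fractions import Fraction
from math import comb

n_tosses = 100
n_heads = 50

size_omega = 2 ** n_tosses        # |Ω| = 2^100 equally likely outcomes
size_e = comb(n_tosses, n_heads)  # |E| = C(100, 50) ways to place the heads

# Uniform space: Pr[E] = |E| / |Ω|, kept exact as a fraction
pr_e = Fraction(size_e, size_omega)

print(float(pr_e))  # roughly 0.0796
```

So exactly 50 heads, although the single most likely count, occurs only about 8% of the time.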

Calculation. Stirling formula (for large n): $n! \approx \sqrt{2\pi n}\,\left(\frac{n}{e}\right)^{n}$. $\binom{2n}{n} \approx$