204312 PROBABILITY AND RANDOM PROCESSES FOR COMPUTER ENGINEERS

Lecture 12: Chapter 10

1st Semester, 2007 Monchai Sopitkamon, Ph.D.

Outline: Chapter 10 Stochastic Processes

Definitions and Examples (10.1)

Types of Stochastic Processes (10.2)

Random Variables from Random Processes (10.3)

Independent, Identically Distributed Random Sequences (10.4)

The Poisson Process (10.5)

Properties of Poisson Process (10.6)

Expected Value and Correlation (10.8)

Definitions and Examples I (10.1)

Stochastic = random

Process = function of time

Stochastic process = random function of time

Probability theory focuses on the frequency of an event from an experiment.

Stochastic processes are also concerned with the time sequence of the events.

Definitions and Examples II

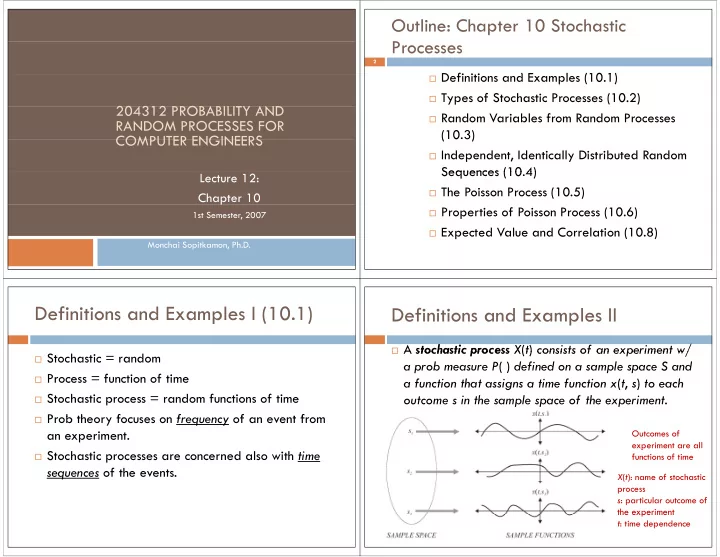

A stochastic process X(t) consists of an experiment with a probability measure P(·) defined on a sample space S and a function that assigns a time function x(t, s) to each outcome s in the sample space of the experiment.

Outcomes of the experiment are all functions of time.

X(t): name of the stochastic process

s: particular outcome of the experiment

t: time dependence
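
The definition above can be illustrated with a minimal Python sketch. The process used here, x(t, s) = cos(2πt + s) with the outcome s a uniformly drawn phase, is an assumed example and does not come from the lecture; the names `draw_outcome` and `sample_function` are likewise hypothetical. The sketch only shows the two ingredients of the definition: an experiment that produces an outcome s, and a rule that assigns a whole time function x(t, s) to that outcome.

```python
import math
import random


def draw_outcome(rng):
    """Perform the experiment once and return one outcome s.

    Assumption for illustration: s is a phase drawn uniformly
    between 0 and 2*pi.
    """
    return rng.uniform(0.0, 2.0 * math.pi)


def sample_function(s):
    """Return the time function x(t, s) assigned to outcome s."""
    def x(t):
        # Assumed example process: a cosine with random phase s.
        return math.cos(2.0 * math.pi * t + s)
    return x


rng = random.Random(0)  # fixed seed so repeated runs give the same outcomes
times = [0.0, 0.25, 0.5, 0.75]

# Each repetition of the experiment yields a different function of time.
for _ in range(3):
    s = draw_outcome(rng)
    x = sample_function(s)
    print(f"s = {s:.3f}:", [round(x(t), 3) for t in times])
```

Once s is fixed, `x` is an ordinary deterministic function of t; the randomness lies entirely in which function the experiment selects.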