
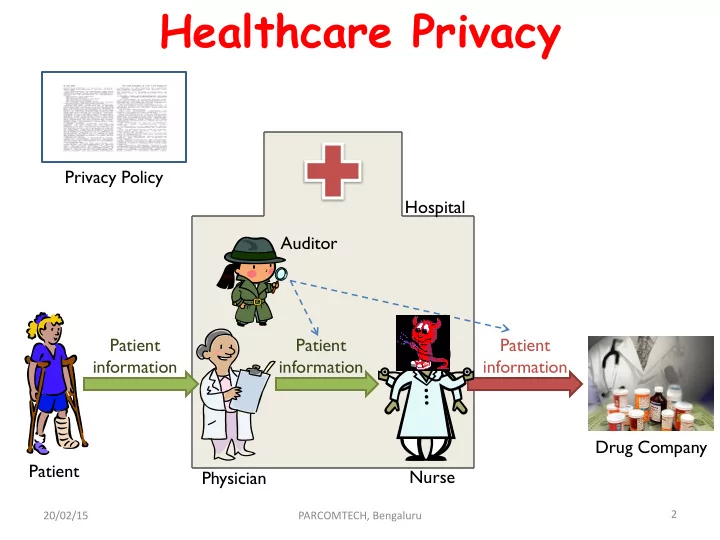

Healthcare Privacy

[Figure: Hospital, Drug Company, Patient, Auditor, Physician, Nurse; labels: Patient information, Privacy Policy]

20/02/15 PARCOMTECH, Bengaluru


Why is it being formalized? A patient goes to


Request (Rq), Response (Rsp)

(a)

Initial state: C(C,{C,S},{}) S(S,{C,S},{})

After C creates Rq: C(C,{C,S},{}) S(S,{C,S},{}) Rq(C,{C,S},{C})

After S reads Rq: C(C,{C,S},{}) S(S,{C,S},{C}) Rq(C,{C,S},{C})

After S creates Rsp: C(C,{C,S},{}) S(S,{C,S},{C}) Rq(C,{C,S},{C}) Rsp(S,{C,S},{C,S})

After C reads Rsp: C(C,{C,S},{C,S}) S(S,{C,S},{C}) Rq(C,{C,S},{C}) Rsp(S,{C,S},{C,S})

(b)

Initial state: C(C,{C,S1},{}) S1(S1,{C,S1},{}) C(C,{C,S2},{}) S2(S2,{C,S2},{})

After C creates Rq1: C(C,{C,S1},{}) S1(S1,{C,S1},{}) C(C,{C,S2},{}) S2(S2,{C,S2},{}) Rq1(C,{C,S1},{C})

After S1 reads Rq1: C(C,{C,S1},{}) S1(S1,{C,S1},{C}) C(C,{C,S2},{}) S2(S2,{C,S2},{}) Rq1(C,{C,S1},{C})

After S1 creates Rsp1: C(C,{C,S1},{}) S1(S1,{C,S1},{C}) C(C,{C,S2},{}) S2(S2,{C,S2},{}) Rq1(C,{C,S1},{C}) Rsp1(S1,{C,S1},{C,S1})

After C reads Rsp1: C(C,{C,S1},{C,S1}) S1(S1,{C,S1},{C}) C(C,{C,S2},{}) S2(S2,{C,S2},{}) Rq1(C,{C,S1},{C}) Rsp1(S1,{C,S1},{C,S1})

After C creates Rq2: C(C,{C,S1},{C,S1}) S1(S1,{C,S1},{C}) C(C,{C,S2},{}) S2(S2,{C,S2},{}) Rq1(C,{C,S1},{C}) Rsp1(S1,{C,S1},{C,S1}) Rq2(C,{C,S2},{C})

After S2 reads Rq2: C(C,{C,S1},{C,S1}) S1(S1,{C,S1},{C}) C(C,{C,S2},{}) S2(S2,{C,S2},{C}) Rq1(C,{C,S1},{C}) Rsp1(S1,{C,S1},{C,S1}) Rq2(C,{C,S2},{C})

After S2 creates Rsp2: C(C,{C,S1},{C,S1}) S1(S1,{C,S1},{C}) C(C,{C,S2},{}) S2(S2,{C,S2},{C}) Rq1(C,{C,S1},{C}) Rsp1(S1,{C,S1},{C,S1}) Rq2(C,{C,S2},{C}) Rsp2(S2,{C,S2},{C,S2})

After C reads Rsp2: C(C,{C,S1},{C,S1}) S1(S1,{C,S1},{C}) C(C,{C,S2},{C,S2}) S2(S2,{C,S2},{C}) Rq1(C,{C,S1},{C}) Rsp1(S1,{C,S1},{C,S1}) Rq2(C,{C,S2},{C}) Rsp2(S2,{C,S2},{C,S2})

After C creates Rq3: C(C,{C,S1},{C,S1}) S1(S1,{C,S1},{C}) C(C,{C,S2},{C,S2}) S2(S2,{C,S2},{C}) Rq1(C,{C,S1},{C}) Rsp1(S1,{C,S1},{C,S1}) Rq2(C,{C,S2},{C}) Rsp2(S2,{C,S2},{C,S2}) Rq3(C,{C,S2},{C,S2})
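The slides do not state the rules behind these transitions, but the trace in panel (a) appears consistent with a simple label-propagation scheme. The Python sketch below replays that trace under the following assumptions, none of which come from the slides: each triple is read as (owner, readers, writers); an empty third component is the empty writer set; creating an object copies the creator's readers and adds the creator to its writers; reading is allowed only if the subject is among the object's readers, and it intersects readers and unions writers. The names `Label`, `create`, `read`, and `run_panel_a` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Label:
    """Assumed reading of a triple such as C(C,{C,S},{C})."""
    owner: str
    readers: frozenset
    writers: frozenset


def create(subject: Label) -> Label:
    """Assumed rule: a new object takes the creator's readers,
    and its writers are the creator's writers plus the creator."""
    return Label(subject.owner, subject.readers,
                 subject.writers | {subject.owner})


def read(subject: Label, obj: Label) -> Label:
    """Assumed rule: reading requires the subject to be a reader of the
    object; afterwards readers are intersected and writers are unioned."""
    if subject.owner not in obj.readers:
        raise PermissionError(f"{subject.owner} may not read this object")
    return Label(subject.owner,
                 subject.readers & obj.readers,
                 subject.writers | obj.writers)


def run_panel_a():
    """Replay the four transitions of panel (a) and return the final labels."""
    both = frozenset({"C", "S"})
    c = Label("C", both, frozenset())   # C(C,{C,S},{})
    s = Label("S", both, frozenset())   # S(S,{C,S},{})
    rq = create(c)                      # C creates Rq
    s = read(s, rq)                     # S reads Rq
    rsp = create(s)                     # S creates Rsp
    c = read(c, rsp)                    # C reads Rsp
    return c, s, rq, rsp


if __name__ == "__main__":
    for name, label in zip(("C", "S", "Rq", "Rsp"), run_panel_a()):
        print(name, label.owner, sorted(label.readers), sorted(label.writers))
```

Under the same assumed rules, a subject labelled S2(S2,{C,S2},{}) would be refused a read of Rq1(C,{C,S1},{C}), because S2 is not among the readers of Rq1. This matches panel (b), where S2 only ever reads Rq2.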

[2] Shayak Sen, Saikat Guha, Anupam Datta, Sriram K. Rajamani, Janice Tsai, and Jeannette M.