DM811 HEURISTICS AND LOCAL SEARCH ALGORITHMS FOR COMBINATORIAL OPTIMIZATION

Lecture 7

Local Search

Marco Chiarandini

Slides in part based on http://www.sls-book.net/ (H. Hoos and T. Stützle, 2005)

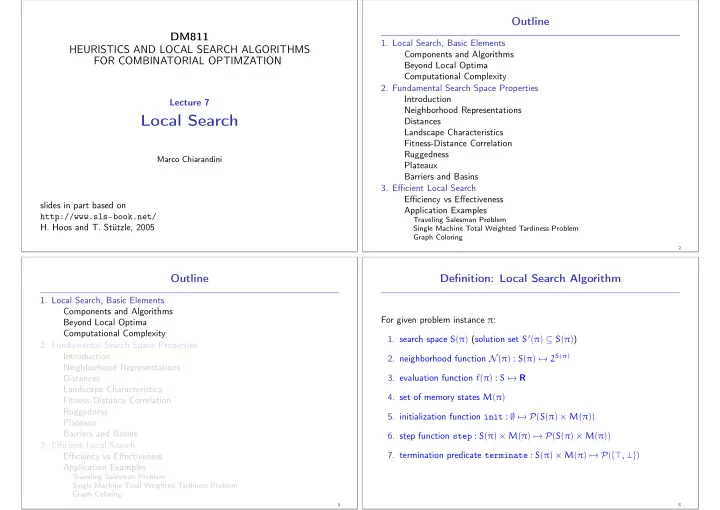

Outline

- 1. Local Search, Basic Elements
  - Components and Algorithms
  - Beyond Local Optima
  - Computational Complexity

- 2. Fundamental Search Space Properties
  - Introduction
  - Neighborhood Representations
  - Distances
  - Landscape Characteristics
  - Fitness-Distance Correlation
  - Ruggedness
  - Plateaux
  - Barriers and Basins

- 3. Efficient Local Search
  - Efficiency vs Effectiveness
  - Application Examples
    - Traveling Salesman Problem
    - Single Machine Total Weighted Tardiness Problem
    - Graph Coloring


Definition: Local Search Algorithm

For given problem instance π:

- 1. search space S(π) (solution set S′(π) ⊆ S(π))

- 2. neighborhood function N(π) : S(π) → 2^{S(π)}

- 3. evaluation function f(π) : S(π) → ℝ

- 4. set of memory states M(π)

- 5. initialization function init : ∅ → P(S(π) × M(π))

- 6. step function step : S(π) × M(π) → P(S(π) × M(π))

- 7. termination predicate terminate : S(π) × M(π) → P({⊤, ⊥})
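The seven components above can be illustrated with a minimal sketch on a toy problem. Everything concrete here is an assumption made for illustration and does not come from the slides: the search space is bit strings of length `N_BITS`, the neighborhood is the one-flip neighborhood, the evaluation function counts 1 bits (to be minimized), the memory state is a plain step counter, and the step function is a randomized iterative improvement. The sketch also reads P(·) as a probability distribution, so `init` and `step` draw one sample using a random number generator.

```python
import random

N_BITS = 8  # hypothetical instance size; S is the set of all 0/1 tuples of this length


def neighbors(s):
    """Neighborhood function N: all bit strings at Hamming distance 1 from s."""
    return [s[:i] + (1 - s[i],) + s[i + 1:] for i in range(len(s))]


def f(s):
    """Evaluation function f: S -> R (toy choice: number of 1 bits, minimized)."""
    return sum(s)


def init(rng):
    """Initialization: sample a starting pair (candidate solution, memory state)."""
    s = tuple(rng.randint(0, 1) for _ in range(N_BITS))
    return s, 0  # memory state is a step counter (an assumption of this sketch)


def step(s, m, rng):
    """Step function: move to a randomly chosen improving neighbor, if one exists."""
    improving = [t for t in neighbors(s) if f(t) < f(s)]
    if improving:
        s = rng.choice(improving)
    return s, m + 1


def terminate(s, m, max_steps=100):
    """Termination predicate: stop at a local optimum or when the step budget is spent."""
    return m >= max_steps or all(f(t) >= f(s) for t in neighbors(s))


def local_search(rng):
    """Generic loop: initialize, then apply step until terminate holds."""
    s, m = init(rng)
    while not terminate(s, m):
        s, m = step(s, m, rng)
    return s, m


if __name__ == "__main__":
    solution, steps = local_search(random.Random(0))
    print(solution, f(solution), steps)
```

In this toy landscape the all-zero string is the only local optimum, so the loop always ends there. On a real problem, only `neighbors`, `f`, and the three functions `init`, `step`, and `terminate` would change, while `local_search` stays the same.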
