1

Logistic Regression

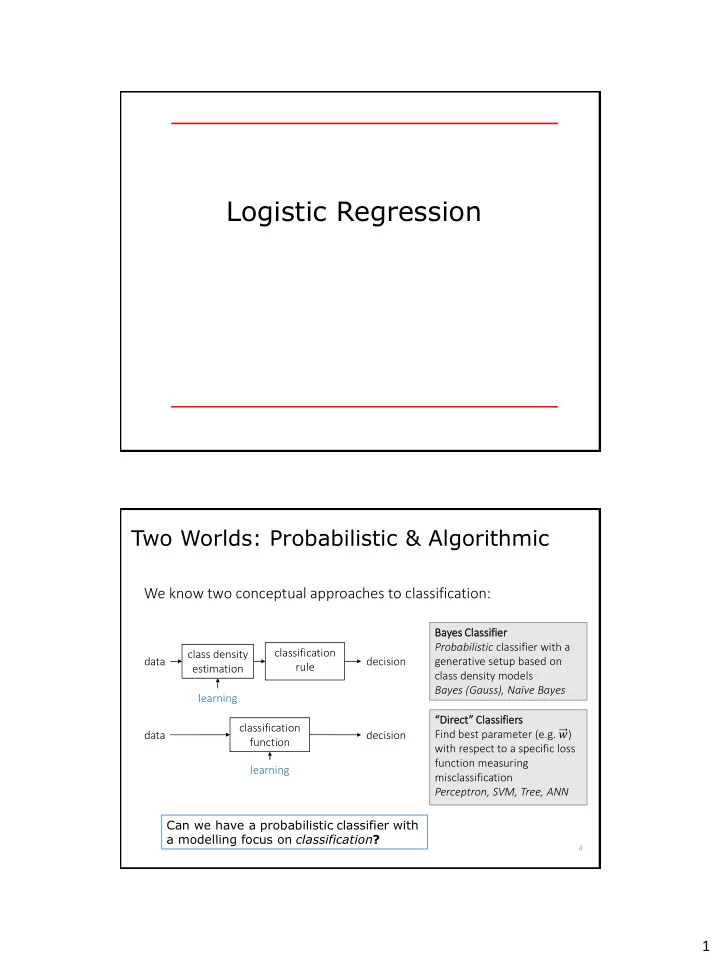

Two Worlds: Probabilistic & Algorithmic

Can we have a probabilistic classifier with a modelling focus on classification? Bayes es Classi ssifier er Probabilistic classifier with a generative setup based on class density models Bayes (Gauss), Naïve Bayes “Direct” Classifiers Find best parameter (e.g. 𝑥) with respect to a specific loss function measuring misclassification Perceptron, SVM, Tree, ANN

We know two conceptual approaches to classification:

data class density estimation classification rule decision learning data classification function decision learning

4