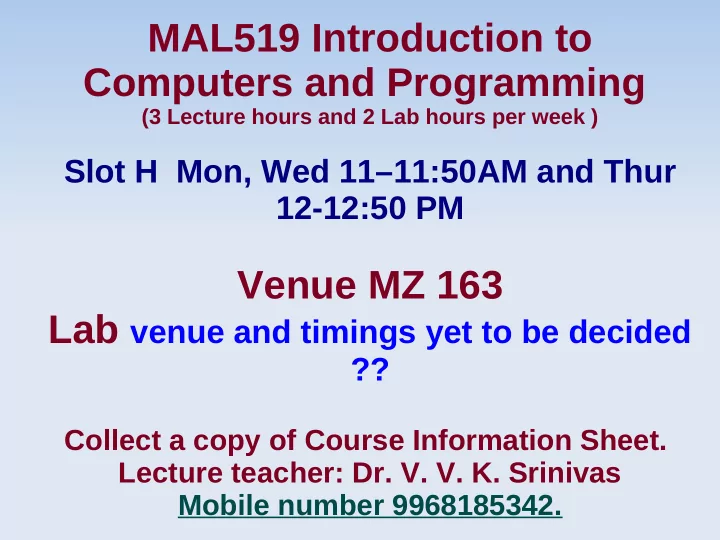

MAL519 Introduction to Computers and Programming

(3 Lecture hours and 2 Lab hours per week)


Slot H: Mon, Wed 11-11:50 AM and Thur 12-12:50 PM. Venue: MZ 163. Lab venue and timings are yet to be decided. Collect a copy of the Course Information.


Although quantities such as π, e and √7 represent specific quantities, they cannot be expressed exactly by a limited number of digits. For example, π = 3.141592653589793238462643... As of January 2010, the record for computed digits of π was almost 2.7 trillion. This beat the previous record of 2,576,980,370,000 decimals, set by Daisuke Takahashi on the T2K-Tsukuba System, a supercomputer at the University of Tsukuba northeast of Tokyo. π is an irrational number, which means that its value cannot be expressed exactly as a fraction whose numerator and denominator are integers. Consequently, its decimal representation never ends or repeats.

The decimal representation of π truncated to 50 decimal places is π = 3.14159265358979323846264338327950288419716939937510... Various websites provide π to many more digits. While the decimal representation of π has been computed to more than a trillion (10^12) digits, elementary applications, such as estimating the circumference of a circle, will rarely require more than a dozen decimal places. For example, the decimal representation of π truncated to 11 decimal places is good enough to estimate the circumference of any circle that fits inside the Earth with an error of less than one millimetre, and the decimal representation of π truncated to 39 decimal places is sufficient to estimate the circumference of any circle that fits in the observable universe with precision comparable to the radius of a hydrogen atom.
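The Earth-sized claim can be checked with a few lines of arithmetic. The sketch below uses Python's standard decimal module; the Earth diameter of 12,742 km is an assumed round figure, not a value taken from these slides.

```python
from decimal import Decimal, ROUND_DOWN

# pi to 50 decimal places, as quoted above.
PI_50 = Decimal("3.14159265358979323846264338327950288419716939937510")

# Truncate (do not round) to 11 decimal places.
pi_11 = PI_50.quantize(Decimal("1e-11"), rounding=ROUND_DOWN)

# Assumption: mean Earth diameter of about 12,742 km, expressed in metres.
EARTH_DIAMETER_M = Decimal(12_742_000)

# Circumference is pi * d, so the error is (pi - pi_11) * d.
error_m = (PI_50 - pi_11) * EARTH_DIAMETER_M
print(pi_11, error_m)
```

Under that assumed diameter the error comes out well below one millimetre.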

Round-off error is a side-effect of the way that numbers are stored and manipulated on a computer. Most commonly, when we use a computer to do arithmetic with real numbers we will want to make use

arithmetic with real numbers is that we have to use the CPU's native format for representing real numbers. On most modern CPUs, the native data type for real-valued arithmetic is the IEEE 754 double precision floating point data type, which has a 1 bit sign, an 11 bit exponent, and a 52 bit mantissa. For our purposes, the most relevant fact about this structure is that it can represent only about 16 significant decimal digits in a number.

Any digits beyond that are simply rounded away.

The main problem with this digit limit is that it is very easy to exceed that limit. For example, consider the following multiplication problem:

12.003438125 * 14.459303453 = 173.561354328684345625

The problem with this result is that although both of the

double we will have to simply round off or
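The multiplication above can be reproduced to see what a double actually keeps. This is a minimal Python sketch: the decimal module computes the 21-digit product exactly, while the ordinary float product is limited to double precision.

```python
from decimal import Decimal

# Exact product: 21 significant digits fit within Decimal's default 28.
exact_product = Decimal("12.003438125") * Decimal("14.459303453")

# The same product in IEEE 754 double precision.
double_product = 12.003438125 * 14.459303453

# Decimal(float) shows the exact value the double really stores.
stored_value = Decimal(double_product)

print(exact_product)
print(double_product)
print(stored_value - exact_product)
```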

Round-off errors get more severe when we do

57.983/102.54 =

1762.0345 + 0.0023993825 =

Note in this case that both of the operands have 8 decimal digits of precision, yet to fully represent the result requires 14

we would be in effect losing all but 2 of the original 8 digits from the second operand. This is a fairly severe round-off error.
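The loss described here can be imitated with Python's decimal module. The 8-digit arithmetic context below is a hypothetical stand-in for a format that keeps only 8 significant digits; it is not a format named in the slides.

```python
from decimal import Context, Decimal

a = Decimal("1762.0345")
b = Decimal("0.0023993825")

# The default context keeps 28 digits, enough for the full 14-digit sum.
exact_sum = a + b

# Hypothetical format that can hold only 8 significant digits.
eight_digits = Context(prec=8)
rounded_sum = eight_digits.add(a, b)

print(exact_sum)
print(rounded_sum)
```

Only the leading two digits of the second operand influence the 8-digit result.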

All of the examples shown above prove that

Forward sum: 15.4037 Reverse sum: 16.686

When we do the sum in the forward direction

The situation gets better with the reverse sum.
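The series behind the two sums quoted above did not survive in this transcript, so the following Python sketch uses made-up data to show the same effect: adding many small terms to a large running total loses them, while adding the small terms first preserves their contribution.

```python
import math

# Illustrative data (an assumption): one large term and a million tiny ones.
values = [1.0] + [1e-16] * 1_000_000

# Forward: the tiny terms are each below half an ulp of 1.0 and vanish.
forward = 0.0
for v in values:
    forward += v

# Reverse: the tiny terms accumulate before meeting the large one.
reverse = 0.0
for v in reversed(values):
    reverse += v

# math.fsum tracks the lost low-order bits and returns a correctly rounded sum.
accurate = math.fsum(values)
print(forward, reverse, accurate)
```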

Round-off error is a pervasive problem in

Roundoff error is the difference between an approximation of a number used in computation and its exact (correct) value. In certain types of computation, roundoff error can be magnified as any initial errors are carried through one or more intermediate steps.

EXAMPLE 1: An egregious (extraordinarily bad) example of roundoff error is provided by a short-lived index devised at the Vancouver stock exchange (McCullough and Vinod 1999). At its inception in 1982, the index was given a value of 1000.000. After 22 months of recomputing the index and truncating to three decimal places at each change in market value, the index stood at 524.881, despite the fact that its "true" value should have been 1009.811.
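Why truncation drifts downward while rounding does not can be shown with synthetic updates. The Python sketch below is not the exchange's data: the alternating changes of +0.0004 and -0.0004 are invented so that the true value stays at 1000.

```python
from decimal import Decimal, ROUND_DOWN, ROUND_HALF_EVEN

THREE_PLACES = Decimal("0.001")
exact = truncated = rounded = Decimal("1000.000")

# Synthetic updates (an assumption): +0.0004, -0.0004, +0.0004, ...
for step in range(2000):
    change = Decimal("0.0004") if step % 2 == 0 else Decimal("-0.0004")
    exact += change
    # Truncating after every update always discards value.
    truncated = (truncated + change).quantize(THREE_PLACES, rounding=ROUND_DOWN)
    # Rounding to nearest lets the errors cancel.
    rounded = (rounded + change).quantize(THREE_PLACES, rounding=ROUND_HALF_EVEN)

print(exact, truncated, rounded)
```

In this toy run the truncated index loses 0.001 on every pair of updates even though nothing has really changed.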

EXAMPLE 2: A notorious example is the fate of the Ariane 5 rocket launched on June 4, 1996 (European Space Agency 1996). In the 37th second of flight, the inertial reference system attempted to convert a 64-bit floating-point number to a 16-bit number, but instead triggered an overflow error which was interpreted by the guidance system as flight data, causing the rocket to veer off course and be destroyed.

EXAMPLE 3: The Patriot missile defense system used during the Gulf War was also rendered ineffective due to roundoff error (Skeel 1992, U.S. GAO 1992). The system used an integer timing register which was incremented at intervals of 0.1 s. However, the integers were converted to decimal numbers by multiplying by the binary approximation of 0.1, 0.00011001100110011001100_2 = 209715/2097152.

As a result, after 100 hours (3.6×10^6 ticks), an

had accumulated. This discrepancy caused the
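The size of the accumulated timing error follows from the two figures given above, the stored approximation 209715/2097152 and the 3.6×10^6 ticks. A small Python sketch with exact fractions, using only those two numbers:

```python
from fractions import Fraction

TRUE_TICK = Fraction(1, 10)              # 0.1 s, the intended interval
STORED_TICK = Fraction(209715, 2097152)  # binary approximation quoted above
TICKS = 3_600_000                        # 100 hours of 0.1 s ticks

# Error introduced on every tick, and its total after 100 hours.
error_per_tick = TRUE_TICK - STORED_TICK
accumulated_error_s = error_per_tick * TICKS

print(float(error_per_tick))
print(float(accumulated_error_s))
```

With these figures the clock drifts by roughly a third of a second.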

Floating-point numbers, like all data, are represented in binary. Just as there are numbers with periodic decimal representations (e.g., 1/3 = 0.333333...), there are also numbers with periodic binary representations (e.g., 1/10 = 0.0001100110011... in binary). Since these numbers cannot be exactly represented in (a finite amount of) computer memory, any calculations involving them will introduce error.
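To see what is actually stored for 1/10, the following Python sketch converts the double 0.1 back into an exact fraction. The variable names are illustrative only.

```python
from fractions import Fraction

# Fraction(float) recovers the exact rational value of the stored double.
stored = Fraction(0.1)

# A binary float always has a power-of-two denominator.
denominator = stored.denominator
is_power_of_two = denominator & (denominator - 1) == 0

# The gap between the stored value and the true 1/10.
gap = stored - Fraction(1, 10)
print(stored, float(gap))
```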

This can be worked around by using high-precision math libraries, which represent the numbers either as the two numbers of a fraction (i.e., "numerator = 1, denominator = 10")

However, because of the extra work involved in doing any calculations on numbers that are being stored as something else, these libraries necessarily slow down any math that has to go through them.
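As one illustration of the fraction-based approach, Python's standard fractions module stores a numerator and a denominator. The sketch below compares it with ordinary doubles; it is an example of the idea, not the specific library the slides have in mind.

```python
from fractions import Fraction

# Ten additions of the double nearest to 0.1 do not land exactly on 1.0.
float_total = sum([0.1] * 10)

# The same sum with numerator = 1, denominator = 10 is exact.
exact_total = sum([Fraction(1, 10)] * 10)

print(float_total, exact_total)
```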

When you do scientific programming, you'll always have to worry about rounding errors, no matter which programming language

Proof: Say you want to track the movement of a molecule near the border of the universe. The size of the universe is about 93 billion light-years (as far as we know), or roughly 8.8×10^26 m. A molecule is pretty tiny, so you'll want at least nanometer precision (10^-9 m). That's about 36 orders of magnitude, far more than the roughly 16 digits a double can hold. For some reason, you need to rotate that molecule. That involves sin() and cos() operations and a multiply. The multiply is not an issue since the number of valid digits is simply the sum of the length of both operands. But how about sin()?
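A quick check of the scale involved, as a Python sketch. It assumes a light-year of about 9.4607×10^15 m and takes a nanometre as 10^-9 m; both constants are supplied here, not by the slides.

```python
import math

METRES_PER_LIGHT_YEAR = 9.4607e15      # assumed conversion factor
universe_m = 93e9 * METRES_PER_LIGHT_YEAR
nanometre_m = 1e-9

# How many decimal orders of magnitude separate the two scales.
orders_of_magnitude = math.log10(universe_m / nanometre_m)

# In double precision a nanometre step at this distance is lost entirely.
moved = universe_m + nanometre_m
print(orders_of_magnitude, moved == universe_m)
```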

Double or multiple precision; single precision; IEEE 754. Dynamic languages and scientific computing: Python, Perl, Ruby.

Consider this C# example. Below, pretend we've got a bank account with $100,000 and we withdraw $99,999.67. How much money is left? $0.33, right? Let's see what C# calculates:

If you've ever written floating point code, you can probably predict the first two lines:

float result = 0.328125
double result = 0.330000000001746
decimal result = 0.33
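The C# listing itself is missing from this transcript. As a rough stand-in, the same three results can be reproduced in Python: struct rounds a value to IEEE single precision, Python's float is a double, and decimal.Decimal plays the role of C#'s decimal. The helper to_single is a name introduced here for illustration.

```python
import struct
from decimal import Decimal

def to_single(x: float) -> float:
    """Round a double to the nearest IEEE 754 single-precision value."""
    return struct.unpack("f", struct.pack("f", x))[0]

# Single precision: 99999.67 is stored as 99999.671875.
single_result = to_single(to_single(100000.0) - to_single(99999.67))

# Double precision: closer, but still not exactly 0.33.
double_result = 100000.0 - 99999.67

# Base-10 arithmetic: exact.
decimal_result = Decimal("100000") - Decimal("99999.67")

print(single_result, double_result, decimal_result)
```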

Both the float and double calculations have roundoff error, even though both perform calculations within the limits of their significant digits. The integer value 100,000 can be represented precisely, but neither float nor double can precisely represent the fractional part of 99,999.67. In binary, ".67" would require an infinitely repeating series of bits. C# and Java must round the value to the width of the data type. This representational error then appears after the subtraction operation.

But how come the decimal type nailed it exactly? Shouldn't the decimal type still have some error, albeit with far less magnitude than the double? Java BigDecimal will hit it on the nose, too. The reason for this is a thing of beauty.

The gory details of floating point representation are a bit much to cover here, especially when they have already seen a great discussion in Bill Venners' article Floating-point Arithmetic. Although the article is Java-specific, the basic details are completely valid for C#. It's a must read if words like "mantissa" and "radix" are unfamiliar to you.

Floating point numbers are essentially represented as a fixed length integer (the "mantissa") combined with a scale that shifts the decimal point left or right (can we still call it a "decimal" point when we're dealing in base 2?). This shifting is determined by a radix (the "base" of the representation) raised to an exponent.

float and double both use a radix of 2. Hence, the number 3.125 could be represented with an integral mantissa of 25 and an exponent of -3:

25 * 2^-3 = 3.125
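The mantissa-and-exponent reading of 3.125 can be confirmed directly. A small Python sketch using only built-in float methods:

```python
import math

# as_integer_ratio returns the exact fraction a float stores.
ratio = (3.125).as_integer_ratio()

# Rebuild the value from an integral mantissa of 25 and an exponent of -3.
rebuilt = math.ldexp(25, -3)   # computes 25 * 2**-3

print(ratio, rebuilt)
```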

Okay, remember this tidbit from high school? How can you tell if the decimal representation of a fraction will produce a repeating decimal? If the denominator of the fraction in lowest terms has any prime factors other than 2 or 5, the decimal representation will repeat infinitely. So, fractions like 1/2, 2/5, and 3/10 may be represented exactly as 0.5, 0.4, and 0.3. But 1/3 is an infinite series of 3s (0.33333 . . .).

When representing fractions in binary, the representation of a fraction repeats infinitely if the denominator has factors other than only 2. So, 1/2 and 3/4 are fine, but not 1/10 (the number 10 includes 5 as a factor). So, the non-repeating decimal 0.1 cannot be represented precisely in a computer.
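The factor rule can be turned into a short test. The function terminates below is a hypothetical helper written for this note; it strips from the reduced denominator every factor it shares with the base and checks whether anything is left.

```python
from fractions import Fraction
from math import gcd

def terminates(numerator: int, denominator: int, base: int) -> bool:
    """True if numerator/denominator has a finite expansion in the given base."""
    d = Fraction(numerator, denominator).denominator  # reduce to lowest terms
    common = gcd(d, base)
    while common > 1:
        d //= common            # remove factors shared with the base
        common = gcd(d, base)
    return d == 1               # leftover factors mean a repeating expansion

print(terminates(1, 10, 10), terminates(1, 10, 2))
```

For example, 1/10 terminates in base 10 but not in base 2, while 3/4 terminates in both.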

In a typical application, we enter numbers in

Floating point numbers have traditionally been represented in base 2 because that's a natural representation for computers. The arithmetic is fast and efficient.

The genius in decimal and BigDecimal is they both use base 10 internally for both storage and arithmetic. When you enter 0.1 in an application, you get precisely 0.1. These new floating point types understand numbers the same way you do, so there's no inexact internal representation. You can add and subtract financial values all day and not lose a thing. No need to round

What about performance? The answer here is predictable: base 10 arithmetic is slow, very slow. It has to be done in software, whereas base 2 floating point arithmetic can be offloaded to a specialized floating point processor. For hard performance comparison numbers, check out Wesner Moise's Decimal

is a non-issue, especially when compared against the cost of programmers trying to correct roundoff errors manually.

Some calculations will still result in repeating decimals. Calculating 1/3 will still result in a repeating decimal. But these issues have always needed a business solution. On interest calculations, banks have always had to address whether fractional pennies in interest calculations round to the benefit of the bank or the depositor. Those problems are easily handled, but esoteric problems introduced in the black magic of the CPU aren't. Using decimal and BigDecimal for financial calculations allows programmers to worry only about the easy problems.
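Both points can be sketched with Python's decimal module, which is also base 10: 1/3 is still cut off at the working precision, and the fractional-penny question becomes an explicit choice of rounding mode. The interest figure of 10.005 is a made-up value.

```python
from decimal import Decimal, ROUND_DOWN, ROUND_HALF_UP

# 1/3 still repeats in base 10, so it is cut off at 28 digits by default.
third = Decimal(1) / Decimal(3)
back = third * 3

# Hypothetical interest amount with a fractional penny.
CENT = Decimal("0.01")
interest = Decimal("10.005")
favour_depositor = interest.quantize(CENT, rounding=ROUND_HALF_UP)
favour_bank = interest.quantize(CENT, rounding=ROUND_DOWN)

print(back, favour_depositor, favour_bank)
```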

deduction amount = 558.71 there are two children CS1 and CS2 both of

during calculation am using ( compute) values

i.e., it is actually exceeding the deduction amount: 279.36 + 279.36 = 558.72

It doesn't matter how many you divide by, what matters is you know where the "balancing" part is to go.

Why do you think you can't get rid of the rounding? If you have to keep it, you have to work "upside down", in that you have to subtract your balancing item, and the specific place you want it to go will be less than all the others, not greater.

Divide Money-amount by Number-of-dependents giving Amounts-which-are-equal-per-dependent remainder Balancing-amount-which-is-not-equally-divisible (might be zero at times, won't ever be very big).

Each dependent gets Amounts-which-are-equal-per-dependent and one (your choice which) gets Amounts-which-are-equal-per-dependent plus Balancing-amount-which-is-not-equally-divisible.
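The divide-with-remainder recipe can be sketched in Python by working in whole cents. The function name split_amount and the choice to give the balancing amount to the first dependent are assumptions made for this illustration.

```python
from decimal import Decimal

def split_amount(total: Decimal, dependents: int) -> list:
    """Split a money amount so that the shares add up to the total exactly."""
    total_cents = int(total * 100)
    # Equal share per dependent, plus the part that does not divide evenly.
    share, balancing = divmod(total_cents, dependents)
    shares = [share] * dependents
    shares[0] += balancing          # assumption: first dependent absorbs it
    return [Decimal(cents) / 100 for cents in shares]

shares = split_amount(Decimal("558.71"), 2)
print(shares, sum(shares))
```

For the deduction of 558.71 between two children this yields 279.36 and 279.35, which add back to the original amount.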

You have encountered a round-off error that demonstrates a fundamental problem with the way computers deal with fractional numbers. Some numbers (in fact, "most" of them) cannot be represented exactly in binary form: specifically, fractional numbers that are not finite sums of powers of two. This will occur in any computer program using ANSI/IEEE standard math.

MATLAB uses the ANSI/IEEE Standard 754, double precision, for Binary Floating-Point Arithmetic, so any non-zero number is written in the following form with 0<=f<1 and integer e:

+or- (1+f)*2^e

The quantity f is the fraction, or less officially, the mantissa. The quantity e is the exponent. Both f and e must have finite expansions in base 2. The finite nature of f means that numbers have a limited accuracy and so arithmetic may involve roundoff error. The finite nature of e means that numbers have a limited range and so arithmetic may involve underflow and overflow. As a result, you will sometimes get roundoff errors that will be noticeable, but usually not significant. For example, most of the numbers you are dealing with may be near 1.0e-01, whereas your error is around 1.0e-16.
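The (1+f)*2^e form can be recovered for any ordinary double. The sketch below is Python, not MATLAB; decompose is a hypothetical helper built on math.frexp, and it assumes a non-zero, normal (not subnormal) input.

```python
import math

def decompose(x: float):
    """Return (sign, f, e) with x == sign * (1 + f) * 2**e and 0 <= f < 1."""
    # frexp gives abs(x) == mantissa * 2**exponent with 0.5 <= mantissa < 1.
    mantissa, exponent = math.frexp(abs(x))
    f = 2 * mantissa - 1        # rescale so the leading factor is 1 + f
    e = exponent - 1
    sign = -1 if x < 0 else 1
    return sign, f, e

sign, f, e = decompose(0.1)
rebuilt = sign * math.ldexp(1 + f, e)   # (1 + f) * 2**e
print(sign, f, e, rebuilt)
```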

If you would like to get a closer look at the way the computer sees these numbers, try using the command FORMAT HEX. You will see that numbers like 1/10 have repeating digits. This demonstrates inaccuracy the same way 0.333... represents 1/3. Numbers like 1/8, however, are accurate, as indicated by the zeros in the mantissa.
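Outside MATLAB, a similar view of the raw bits is available in Python. The helper ieee_hex below is a name invented here; it packs a double into its 8 big-endian bytes and prints them as hexadecimal.

```python
import struct

def ieee_hex(x: float) -> str:
    """Hexadecimal form of the 64 bits that store the double x."""
    return struct.pack(">d", x).hex()

tenth = ieee_hex(0.1)      # repeating 9s in the mantissa, rounded at the end
eighth = ieee_hex(1 / 8)   # mantissa is all zeros: an exact power of two
print(tenth, eighth)
```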

Most problems caused by roundoff error can be resolved by avoiding direct comparisons between numbers. For example, if you have a test for equality that fails due to roundoff error, you should instead compare the difference of the operands and check against a tolerance. Instead of:

A==B

try this:

abs(A-B) < 1e-12*ones(size(A))
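The same tolerance idea carries over to other languages. A minimal Python sketch using math.isclose from the standard library; the tolerance of 1e-12 simply mirrors the MATLAB line above and should be chosen to suit the data.

```python
import math

a = 0.1 + 0.2
b = 0.3

# Direct comparison fails because of roundoff in a.
exactly_equal = a == b

# Comparing against a tolerance succeeds.
within_abs = abs(a - b) < 1e-12
close_enough = math.isclose(a, b, rel_tol=1e-12)

print(exactly_equal, within_abs, close_enough)
```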

Furthermore, whenever you run any mathematical software, including MATLAB, the software does various computations. These computations are done by calling low-level math routines for operations like *, +, and ^, not to mention sin, cos, sqrt. The math libraries are supplied with the system's compiler and are therefore system dependent. Thus, you could get different answers on an HP than on a Sun just doing multiplication, but you are unlikely to notice since it will just change one digit, if any. However, after doing a series of computations these differences add up and then you would notice a difference. As stated above, these differences are due to the underlying math libraries, compilers, architecture and how they are implemented.

If you need more precision than MATLAB's

#include <stdio.h>

void sp_to_dash(const char *str);

int main(void)
{
    sp_to_dash("this is a test");
    return 0;
}

/* Print str with every space shown as a dash. */
void sp_to_dash(const char *str)
{
    while (*str) {
        /* Wrong code: "*str = '-';" would try to modify a const string. */
        if (*str == ' ')
            printf("%c", '-');
        else
            printf("%c", *str);
        str++;
    }
}

A parity bit, or check bit, is a bit added to the end of a string of binary code that indicates whether the number of bits in the string with the value one is even or odd. Parity bits are used as the simplest form of error detecting code. In mathematics, parity refers to the evenness or oddness of an integer, which for a binary number is determined only by the least significant bit. In telecommunications and computing, parity refers to the evenness or oddness of the number of bits with value one within a given set of bits, and is thus determined by the value of all the

for odd parity. This property of being dependent upon all the bits and changing value if any one bit changes allows for its use in error detection schemes.
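A parity bit is simple to compute. The Python sketch below uses the 7-bit code for the letter A (100 0001); the function names parity_bit and check_even are made up for this example.

```python
def parity_bit(bits: str, odd: bool = False) -> str:
    """Parity bit that makes the total number of 1s even (or odd)."""
    bit = bits.count("1") % 2   # even parity: 1 only if the count is odd
    if odd:
        bit ^= 1                # odd parity is the complement
    return str(bit)

def check_even(word: str) -> bool:
    """True if a received word still has an even number of 1s."""
    return word.count("1") % 2 == 0

letter_a = "1000001"
sent = letter_a + parity_bit(letter_a)   # append the even parity bit
corrupted = "1100001" + sent[-1]         # one data bit flipped in transit

print(sent, check_even(sent), check_even(corrupted))
```

A single flipped bit changes the parity and is detected; two flipped bits would cancel out and go unnoticed.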

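| Binary | Octal | Decimal | Hex | Glyph |
|---|---|---|---|---|
| 100 0001 | 101 | 65 | 41 | A |
| 100 0010 | 102 | 66 | 42 | B |
| 100 0011 | 103 | 67 | 43 | C |
| 100 0100 | 104 | 68 | 44 | D |
| 100 0101 | 105 | 69 | 45 | E |
| 100 0110 | 106 | 70 | 46 | F |
| 100 0111 | 107 | 71 | 47 | G |
| 100 1000 | 110 | 72 | 48 | H |
| 100 1001 | 111 | 73 | 49 | I |
| 100 1010 | 112 | 74 | 4A | J |
| 100 1011 | 113 | 75 | 4B | K |
| 100 1100 | 114 | 76 | 4C | L |
| 100 1101 | 115 | 77 | 4D | M |
| 100 1110 | 116 | 78 | 4E | N |
| 100 1111 | 117 | 79 | 4F | O |
| 101 0000 | 120 | 80 | 50 | P |
| 101 0001 | 121 | 81 | 51 | Q |
| 101 0010 | 122 | 82 | 52 | R |
| 101 0011 | 123 | 83 | 53 | S |
| 101 0100 | 124 | 84 | 54 | T |
| 101 0101 | 125 | 85 | 55 | U |
| 101 0110 | 126 | 86 | 56 | V |
| 101 0111 | 127 | 87 | 57 | W |
| 101 1000 | 130 | 88 | 58 | X |
| 101 1001 | 131 | 89 | 59 | Y |
| 101 1010 | 132 | 90 | 5A | Z |
| 110 0001 | 141 | 97 | 61 | a |
| 110 0010 | 142 | 98 | 62 | b |
| 110 0011 | 143 | 99 | 63 | c |
| 110 0100 | 144 | 100 | 64 | d |
| 110 0101 | 145 | 101 | 65 | e |
| 110 0110 | 146 | 102 | 66 | f |
| 110 0111 | 147 | 103 | 67 | g |
| 110 1000 | 150 | 104 | 68 | h |
| 110 1001 | 151 | 105 | 69 | i |
| 110 1010 | 152 | 106 | 6A | j |
| 110 1011 | 153 | 107 | 6B | k |
| 110 1100 | 154 | 108 | 6C | l |
| 110 1101 | 155 | 109 | 6D | m |
| 110 1110 | 156 | 110 | 6E | n |
| 110 1111 | 157 | 111 | 6F | o |
| 111 0000 | 160 | 112 | 70 | p |
| 111 0001 | 161 | 113 | 71 | q |
| 111 0010 | 162 | 114 | 72 | r |
| 111 0011 | 163 | 115 | 73 | s |
| 111 0100 | 164 | 116 | 74 | t |
| 111 0101 | 165 | 117 | 75 | u |
| 111 0110 | 166 | 118 | 76 | v |
| 111 0111 | 167 | 119 | 77 | w |
| 111 1000 | 170 | 120 | 78 | x |
| 111 1001 | 171 | 121 | 79 | y |
| 111 1010 | 172 | 122 | 7A | z |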