SLIDE 1

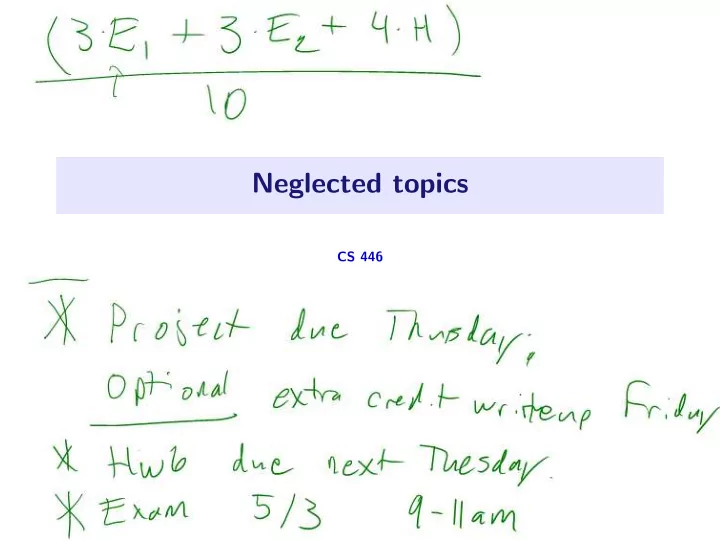

Neglected topics

CS 446


Adversarial examples and deep networks

Adversarial examples?

Standard ML setup: We have training data; try to do well on withheld testing data.

Adversarial/robust ML setup: We have training

$$\max_{\|p\|_\infty \le \delta} \ell(f(x + p), y)$$
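
The worst-case loss over perturbations p with ‖p‖∞ ≤ δ can be computed exactly in one simple case. The following is a minimal sketch, assuming a linear predictor f(x) = ⟨w, x⟩, labels y ∈ {−1, +1}, and the logistic loss; none of these choices come from the slides, and all function names are hypothetical. Under those assumptions the maximizing perturbation is p = −δ · y · sign(w), which lowers the margin by δ‖w‖₁.

```python
import math


def logistic_loss(margin):
    # log(1 + exp(-margin)), written to stay stable for either sign of margin.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


def predict(w, x):
    # Assumed model: linear predictor f(x) = <w, x>.
    return sum(wi * xi for wi, xi in zip(w, x))


def sign(v):
    return (v > 0) - (v < 0)


def worst_case_perturbation(w, y, delta):
    # Each coordinate moves by delta in the direction that lowers y * <w, x + p>.
    return [-delta * y * sign(wi) for wi in w]


def adversarial_loss(w, x, y, delta):
    # Loss at the worst perturbation with sup-norm at most delta.
    p = worst_case_perturbation(w, y, delta)
    x_adv = [xi + pi for xi, pi in zip(x, p)]
    return logistic_loss(y * predict(w, x_adv))


if __name__ == "__main__":
    w, x, y, delta = [2.0, -1.0, 0.5], [1.0, 0.5, -1.0], 1, 0.1
    print("clean loss:      ", logistic_loss(y * predict(w, x)))
    print("adversarial loss:", adversarial_loss(w, x, y, delta))
```

In the usage example the clean margin is 1.0 and ‖w‖₁ = 3.5, so the perturbation with δ = 0.1 lowers the margin to roughly 0.65. For a deep network no such closed form exists, and the maximization is typically only approximated.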

$$\min_{f \in \mathcal{F}} \frac{1}{n} \sum_{i=1}^{n} \max_{\|p_i\|_\infty \le \delta} \ell(f(x_i + p_i), y_i)$$
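
Minimizing over f ∈ F the average worst-case loss, with each perturbation p_i constrained by ‖p_i‖∞ ≤ δ, is usually called adversarial (robust) training. The following is a rough sketch, assuming a linear predictor with logistic loss and labels in {−1, +1}, where the inner maximum has the closed form margin y⟨w, x⟩ − δ‖w‖₁; the optimizer (plain subgradient descent), step size, and all names are illustrative assumptions, not taken from the slides.

```python
import math


def sign(v):
    return (v > 0) - (v < 0)


def logistic_loss(margin):
    # log(1 + exp(-margin)), stable for either sign of margin.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


def robust_margin(w, x, y, delta):
    # Worst-case margin of a linear model over ||p||_inf <= delta:
    # y * <w, x> - delta * ||w||_1.
    clean = y * sum(wi * xi for wi, xi in zip(w, x))
    return clean - delta * sum(abs(wi) for wi in w)


def robust_risk(w, data, delta):
    # Average worst-case logistic loss over the training set.
    return sum(logistic_loss(robust_margin(w, x, y, delta)) for x, y in data) / len(data)


def robust_risk_subgradient(w, data, delta):
    grad = [0.0] * len(w)
    for x, y in data:
        m = robust_margin(w, x, y, delta)
        # Derivative of log(1 + exp(-m)) with respect to m, computed stably.
        if m >= 0:
            e = math.exp(-m)
            coeff = -e / (1.0 + e)
        else:
            coeff = -1.0 / (1.0 + math.exp(m))
        for k in range(len(w)):
            # Subgradient of the robust margin with respect to w[k].
            grad[k] += coeff * (y * x[k] - delta * sign(w[k]))
    return [g / len(data) for g in grad]


def adversarial_train(data, delta, steps=200, lr=0.1):
    # Subgradient descent on the robust risk, starting from w = 0.
    w = [0.0] * len(data[0][0])
    for _ in range(steps):
        g = robust_risk_subgradient(w, data, delta)
        w = [wi - lr * gi for wi, gi in zip(w, g)]
    return w


if __name__ == "__main__":
    # Hypothetical toy data: (features, label) pairs.
    data = [([2.0, 1.0], 1), ([1.5, 2.0], 1), ([-2.0, -1.0], -1), ([-1.0, -2.5], -1)]
    w = adversarial_train(data, delta=0.2)
    print("weights:", w)
    print("robust risk:", robust_risk(w, data, 0.2))
```

With a deep network the inner maximum has no closed form, so practical adversarial training typically replaces it with an approximate attack at each step; the sketch only illustrates the min-max structure.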

$$(x_i^{(j)}, y_i^{(j)}), \quad i = 1, \dots, \quad j = 1, \dots$$
