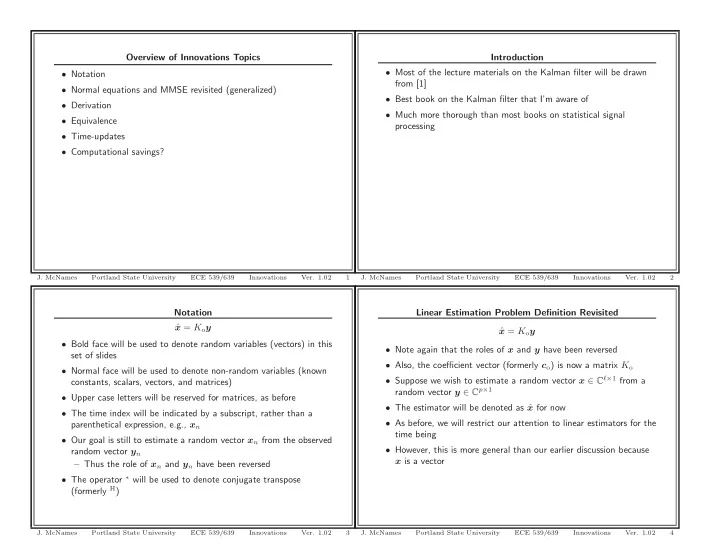

Notation

- Bold face will be used to denote random variables (vectors) in this set of slides

- Normal face will be used to denote non-random variables (known constants, scalars, vectors, and matrices)

- Upper case letters will be reserved for matrices, as before

- The time index will be indicated by a subscript, rather than a parenthetical expression, e.g., $\mathbf{x}_n$

- Our goal is still to estimate a random vector $\mathbf{x}_n$ from the observed random vector $\mathbf{y}_n$
  - Thus the roles of $\mathbf{x}_n$ and $\mathbf{y}_n$ have been reversed

- The operator ∗ will be used to denote conjugate transpose (formerly H)
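As a quick illustration of the ∗ (conjugate transpose) notation, here is a minimal sketch in plain Python. The helper name `conj_transpose` and the matrix entries are assumptions made up for this example; they do not come from the slides.

```python
def conj_transpose(A):
    """Return the conjugate transpose A* of a matrix stored as a list of rows."""
    rows, cols = len(A), len(A[0])
    # Entry (j, i) of A* is the complex conjugate of entry (i, j) of A.
    return [[A[i][j].conjugate() for i in range(rows)] for j in range(cols)]


# Arbitrary 3x2 complex matrix, chosen only for illustration.
A = [[1 + 2j, 3 - 1j],
     [0 + 1j, 4 + 0j],
     [2 - 2j, 5 + 5j]]

A_star = conj_transpose(A)  # 2x3 matrix
print(A_star)
```

Applying the operation twice returns the original matrix, which is a convenient sanity check.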

J. McNames, Portland State University, ECE 539/639, Innovations, Ver. 1.02

Overview of Innovations Topics

- Notation

- Normal equations and MMSE revisited (generalized)

- Derivation

- Equivalence

- Time-updates

- Computational savings?


Linear Estimation Problem Definition Revisited

$$\hat{\mathbf{x}} = K_o \mathbf{y}$$

- Note again that the roles of x and y have been reversed

- Also, the coefficient vector (formerly $c_o$) is now a matrix $K_o$

- Suppose we wish to estimate a random vector $\mathbf{x} \in \mathbb{C}^{\ell \times 1}$ from a random vector $\mathbf{y} \in \mathbb{C}^{p \times 1}$

- The estimator will be denoted as $\hat{\mathbf{x}}$ for now

- As before, we will restrict our attention to linear estimators for the time being

- However, this is more general than our earlier discussion because $\mathbf{x}$ is a vector
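To make the dimensions concrete: with $\mathbf{x} \in \mathbb{C}^{\ell \times 1}$ and $\mathbf{y} \in \mathbb{C}^{p \times 1}$, a linear estimator of the form $\hat{\mathbf{x}} = K\mathbf{y}$ requires $K \in \mathbb{C}^{\ell \times p}$. The following is a minimal sketch in plain Python that applies such a matrix to one observed realization of $\mathbf{y}$. The function name `apply_linear_estimator`, the sizes ($\ell = 2$, $p = 3$), and all numeric values are hypothetical; in particular, this `K` is an arbitrary matrix, not the optimal $K_o$.

```python
def apply_linear_estimator(K, y):
    """Compute x_hat = K y, with K stored as a list of rows and y as a list."""
    if any(len(row) != len(y) for row in K):
        raise ValueError("each row of K must have len(y) entries")
    # Each entry of x_hat is the inner product of one row of K with y.
    return [sum(k * v for k, v in zip(row, y)) for row in K]


# Hypothetical sizes: l = 2 quantities to estimate, p = 3 observations.
K = [[0.5 + 0j, 0.25 - 0.25j, 0j],
     [0j, 1j, 1 + 0j]]       # arbitrary l x p matrix, NOT the optimal K_o
y = [2 + 0j, 4j, 1 - 1j]     # one observed realization of y (length p)

x_hat = apply_linear_estimator(K, y)  # estimate of x (length l)
print(x_hat)
```

The shape check mirrors the requirement that $K$ map a length-$p$ observation to a length-$\ell$ estimate.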


Introduction

- Most of the lecture materials on the Kalman filter will be drawn from [1]

- Best book on the Kalman filter that I’m aware of

- Much more thorough than most books on statistical signal processing
