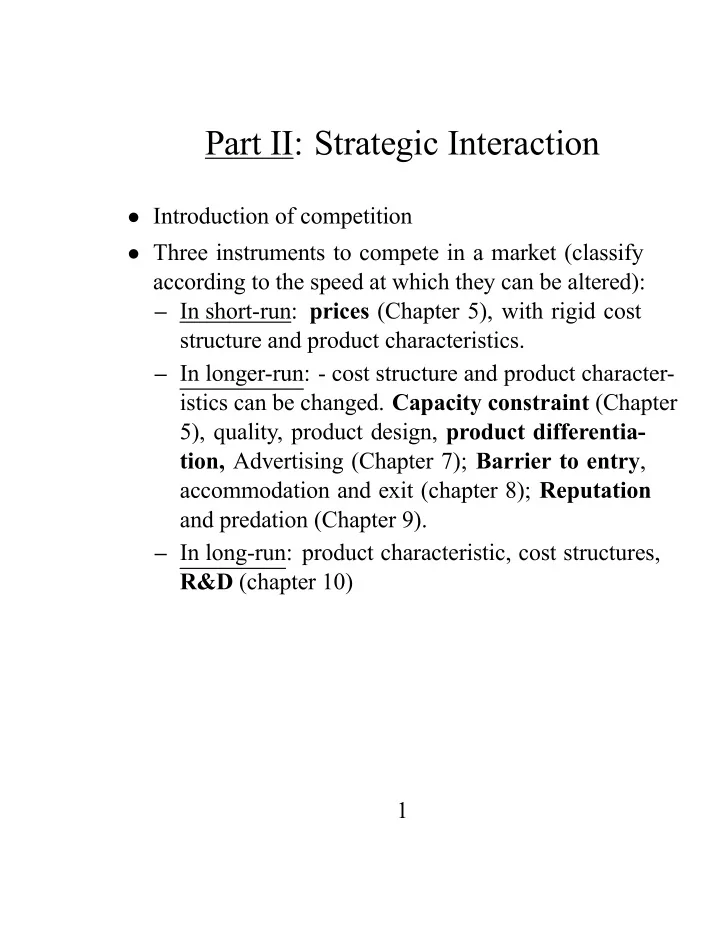

Part II: Strategic Interaction

- Introduction of competition

- Three instruments to compete in a market, classified according to the speed at which they can be altered:
  - In the short run: prices (Chapter 5), with rigid cost structure and product …

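Let $s_i'$ and $s_i''$ be feasible strategies for player $i$ (i.e., $s_i', s_i'' \in S_i$). Strategy $s_i'$ is strictly dominated by strategy $s_i''$ if, for each feasible combination of the other players' strategies, player $i$'s payoff from playing $s_i'$ is strictly less than the payoff from playing $s_i''$:

$$\pi_i(s_1, \ldots, s_{i-1}, s_i', s_{i+1}, \ldots, s_n) < \pi_i(s_1, \ldots, s_{i-1}, s_i'', s_{i+1}, \ldots, s_n)$$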
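For two players, the definition can be checked directly: compare the two strategies against every strategy of the opponent. The sketch below uses an illustrative Prisoners' Dilemma whose strategy names and payoffs are assumptions, not part of the notes; `pi` and `strictly_dominated` are hypothetical helper names.

```python
# Hypothetical two-player example (a Prisoners' Dilemma); payoffs are illustrative.
# payoffs[(s1, s2)] = (pi_1, pi_2)
S1 = ["Mum", "Fink"]
S2 = ["Mum", "Fink"]
payoffs = {
    ("Mum", "Mum"): (-1, -1),
    ("Mum", "Fink"): (-9, 0),
    ("Fink", "Mum"): (0, -9),
    ("Fink", "Fink"): (-6, -6),
}

def pi(i, s1, s2):
    """Payoff of player i (0 = player 1, 1 = player 2) at the profile (s1, s2)."""
    return payoffs[(s1, s2)][i]

def strictly_dominated(i, s_prime, s_double, S_other):
    """True if s_prime is strictly dominated by s_double for player i, i.e.
    s_prime earns strictly less against every feasible opponent strategy."""
    if i == 0:
        return all(pi(0, s_prime, s) < pi(0, s_double, s) for s in S_other)
    return all(pi(1, s, s_prime) < pi(1, s, s_double) for s in S_other)

print(strictly_dominated(0, "Mum", "Fink", S2))  # True: -1 < 0 and -9 < -6
```

Here "Mum" is strictly dominated by "Fink" for both players, because the strict inequality holds against each of the opponent's strategies.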

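The strategies $(s_1^*, \ldots, s_n^*)$ are a Nash equilibrium if, for each player $i$, $s_i^*$ is player $i$'s best response to the strategies specified for the $n-1$ other players, $(s_1^*, \ldots, s_{i-1}^*, s_{i+1}^*, \ldots, s_n^*)$:

$$\pi_i(s_1^*, \ldots, s_{i-1}^*, s_i^*, s_{i+1}^*, \ldots, s_n^*) \ge \pi_i(s_1^*, \ldots, s_{i-1}^*, s_i, s_{i+1}^*, \ldots, s_n^*)$$

for every feasible strategy $s_i \in S_i$. That is, $s_i^*$ solves

$$\max_{s_i \in S_i} \pi_i(s_1^*, \ldots, s_{i-1}^*, s_i, s_{i+1}^*, \ldots, s_n^*).$$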
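In a finite two-player game, the definition of Nash equilibrium can be checked by brute force: a profile is an equilibrium if neither player gains from a unilateral deviation. This is a minimal sketch; the Battle of the Sexes payoffs and the function name `pure_nash_equilibria` are illustrative assumptions.

```python
def pure_nash_equilibria(S1, S2, payoffs):
    """Return every profile (s1, s2) in which each strategy is a best response
    to the other, i.e. no player gains from a unilateral deviation.
    payoffs[(s1, s2)] = (pi_1, pi_2)."""
    equilibria = []
    for s1 in S1:
        for s2 in S2:
            u1, u2 = payoffs[(s1, s2)]
            best1 = all(u1 >= payoffs[(d, s2)][0] for d in S1)  # player 1 cannot improve
            best2 = all(u2 >= payoffs[(s1, d)][1] for d in S2)  # player 2 cannot improve
            if best1 and best2:
                equilibria.append((s1, s2))
    return equilibria

# Hypothetical example: Battle of the Sexes, which has two pure-strategy equilibria.
S = ["Opera", "Fight"]
bos = {
    ("Opera", "Opera"): (2, 1),
    ("Opera", "Fight"): (0, 0),
    ("Fight", "Opera"): (0, 0),
    ("Fight", "Fight"): (1, 2),
}
print(pure_nash_equilibria(S, S, bos))  # [('Opera', 'Opera'), ('Fight', 'Fight')]
```

The weak inequality `>=` mirrors the "≥" in the definition, so ties do not break an equilibrium.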

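If iterated elimination of strictly dominated strategies eliminates all but the strategies $(s_1^*, \ldots, s_n^*)$, then these strategies are the unique Nash equilibrium of the game.

If the strategies $(s_1^*, \ldots, s_n^*)$ are a Nash equilibrium, then they survive iterated elimination of strictly dominated strategies.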
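Iterated elimination of strictly dominated strategies (restricted here to domination by another pure strategy) can be sketched as a loop that removes dominated strategies until nothing changes. The 2×3 game below and the name `iesds` are illustrative assumptions; in this game only one profile survives, which by the first result above is the unique Nash equilibrium.

```python
def iesds(S1, S2, payoffs):
    """Iterated elimination of strictly dominated pure strategies (two players).
    payoffs[(s1, s2)] = (pi_1, pi_2). Returns the surviving strategy lists."""
    S1, S2 = list(S1), list(S2)
    changed = True
    while changed:
        changed = False
        # Player 1: remove s if some remaining t does strictly better against every remaining s2.
        for s in list(S1):
            if any(all(payoffs[(s, o)][0] < payoffs[(t, o)][0] for o in S2)
                   for t in S1 if t != s):
                S1.remove(s)
                changed = True
        # Player 2: same test against every remaining s1.
        for s in list(S2):
            if any(all(payoffs[(o, s)][1] < payoffs[(o, t)][1] for o in S1)
                   for t in S2 if t != s):
                S2.remove(s)
                changed = True
    return S1, S2

# Hypothetical 2x3 game.
game = {
    ("Up", "Left"): (1, 0), ("Up", "Middle"): (1, 2), ("Up", "Right"): (0, 1),
    ("Down", "Left"): (0, 3), ("Down", "Middle"): (0, 1), ("Down", "Right"): (2, 0),
}
print(iesds(["Up", "Down"], ["Left", "Middle", "Right"], game))  # (['Up'], ['Middle'])
```

The elimination order is Right (dominated by Middle), then Down (dominated by Up once Right is gone), then Left (dominated by Middle once Down is gone).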

With prices $p_i$ as strategies, the equilibrium prices are $p_1^* = p_2^* = 1 + cb$.

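Backward-induction outcome: $(a_1^*, R_2(a_1^*))$.

Subgame-perfect Nash equilibrium: $(a_1^*, R_2(a_1))$.

The backward-induction outcome is $(a_1^*, R_2(a_1^*))$, but the subgame-perfect Nash equilibrium is $(a_1^*, R_2(a_1))$: player 2's equilibrium strategy is the whole function $R_2(\cdot)$, which specifies a response to every feasible $a_1$, not only the response to $a_1^*$.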
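Backward induction can be illustrated with a Stackelberg quantity game. This is a sketch under assumed functional forms: linear inverse demand $P = A - q_1 - q_2$ and constant marginal cost $C$, with hypothetical values $A = 10$, $C = 1$. The follower's strategy is the function `R2`; the leader maximizes profit anticipating `R2`.

```python
A, C = 10.0, 1.0  # hypothetical demand intercept and marginal cost

def R2(q1):
    """Follower's best response: argmax over q2 of q2 * (A - q1 - q2 - C)."""
    return max(0.0, (A - C - q1) / 2)

def leader_profit(q1):
    # Backward induction: the leader anticipates the follower's reply R2(q1).
    return q1 * (A - q1 - R2(q1) - C)

def argmax_concave(f, lo, hi, tol=1e-10):
    """Ternary search for the maximizer of a concave function on [lo, hi]."""
    while hi - lo > tol:
        m1 = lo + (hi - lo) / 3
        m2 = hi - (hi - lo) / 3
        if f(m1) < f(m2):
            lo = m1
        else:
            hi = m2
    return (lo + hi) / 2

q1_star = argmax_concave(leader_profit, 0.0, A - C)
outcome = (q1_star, R2(q1_star))  # backward-induction outcome (a1*, R2(a1*))
spne = (q1_star, R2)              # SPNE: player 2's strategy is the whole function R2
print(outcome)                    # approximately (4.5, 2.25)
```

The distinction in the text shows up in the types: `outcome` holds two numbers, while the second component of `spne` is a function that also says what the follower would do off the equilibrium path, e.g. `R2(0.0)`.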

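Suppose that, for each feasible $(a_1, a_2)$, the second-stage game between players 3 and 4 has a unique Nash equilibrium, denoted $a_3^*(a_1, a_2)$ and $a_4^*(a_1, a_2)$. Then the first stage reduces to a simultaneous-move game in which players 1 and 2 choose $a_1$ and $a_2$ with payoffs

$$\pi_i(a_1, a_2, a_3^*(a_1, a_2), a_4^*(a_1, a_2)) \quad \text{for } i = 1, 2.$$

If $(a_1^*, a_2^*)$ is a Nash equilibrium of this first-stage game, then $(a_1^*, a_2^*, a_3^*(a_1, a_2), a_4^*(a_1, a_2))$ is a subgame-perfect Nash equilibrium.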
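The two-step procedure can be sketched by brute force on finite action sets: first solve the second-stage game for every $(a_1, a_2)$, then solve the reduced first-stage game. The action set `A` and the payoff functions `pi1` to `pi4` are hypothetical toy choices made only so that each second-stage game has a unique equilibrium.

```python
from itertools import product

A = [0, 1, 2]  # hypothetical common action set for all four players

# Hypothetical payoffs pi_i(a1, a2, a3, a4), chosen only for illustration.
def pi1(a1, a2, a3, a4): return 2 * a3 - a1 ** 2 - a4
def pi2(a1, a2, a3, a4): return 2 * a4 - a2 ** 2 - a3
def pi3(a1, a2, a3, a4): return -(a3 - a1) ** 2
def pi4(a1, a2, a3, a4): return -(a4 - a2) ** 2

def pure_ne(acts_x, acts_y, ux, uy):
    """Pure Nash equilibria of a two-player simultaneous-move game (brute force)."""
    return [(x, y) for x, y in product(acts_x, acts_y)
            if all(ux(x, y) >= ux(d, y) for d in acts_x)
            and all(uy(x, y) >= uy(x, d) for d in acts_y)]

# Stage 2: for every (a1, a2), solve the game between players 3 and 4.
stage2 = {}
for a1, a2 in product(A, A):
    eq = pure_ne(A, A,
                 lambda a3, a4: pi3(a1, a2, a3, a4),
                 lambda a3, a4: pi4(a1, a2, a3, a4))
    assert len(eq) == 1, "this sketch assumes a unique second-stage equilibrium"
    stage2[(a1, a2)] = eq[0]  # (a3*(a1, a2), a4*(a1, a2))

# Stage 1: players 1 and 2 play the reduced game with payoffs
# pi_i(a1, a2, a3*(a1, a2), a4*(a1, a2)).
stage1 = pure_ne(A, A,
                 lambda a1, a2: pi1(a1, a2, *stage2[(a1, a2)]),
                 lambda a1, a2: pi2(a1, a2, *stage2[(a1, a2)]))
print(stage1)              # [(1, 1)]
print(stage2[stage1[0]])   # (1, 1): second-stage actions on the equilibrium path
```

The subgame-perfect equilibrium is the first-stage pair together with the whole table `stage2`, which plays the role of the functions $a_3^*(\cdot)$ and $a_4^*(\cdot)$.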

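$\delta = \frac{1}{1+r}$ is the discount factor ($r$ is the interest rate). The present value of the payoff stream $\pi_1, \pi_2, \pi_3, \ldots$ is

$$\sum_{t=1}^{\infty} \delta^{t-1} \pi_t.$$

If $\delta \ge \frac{1}{4}$, the trigger strategy is a Nash equilibrium.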
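Discounting and the trigger-strategy condition can be checked with exact fractions. The stage-game payoffs below are assumptions: mutual cooperation pays `R = 4` per period, a one-shot deviation pays `T = 5`, and reverting to the stage-game equilibrium pays `P = 1` per period. Under these values, cooperating forever is worth $R/(1-\delta)$ and deviating is worth $T + \delta P/(1-\delta)$, giving the threshold $\delta \ge (T-R)/(T-P)$.

```python
from fractions import Fraction

def discount_factor(r):
    """delta = 1 / (1 + r), with r the per-period interest rate."""
    return 1 / (1 + r)

def present_value(stream, delta):
    """Sum over t = 1..T of delta^(t-1) * payoff_t for a finite payoff stream."""
    return sum(delta ** (t - 1) * payoff for t, payoff in enumerate(stream, start=1))

# Hypothetical stage-game payoffs (assumption, not taken from the notes).
R, T, P = 4, 5, 1

def trigger_is_nash(delta):
    cooperate = R / (1 - delta)            # R + delta*R + delta^2*R + ...
    deviate = T + delta * P / (1 - delta)  # T today, then P in every later period
    return cooperate >= deviate

delta_min = Fraction(T - R, T - P)  # solves R/(1-d) = T + d*P/(1-d)
print(delta_min)                    # 1/4
```

For example, `trigger_is_nash(Fraction(1, 4))` holds with equality, while `trigger_is_nash(Fraction(1, 5))` is false. Since $\delta = 1/(1+r)$, the condition $\delta \ge 1/4$ is equivalent to $r \le 3$ under these assumed payoffs.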

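The strategies $s^* = (s_1^*, \ldots, s_n^*)$ are a (pure-strategy) Bayesian Nash equilibrium if, for each player $i$ and for each of $i$'s types $t_i \in T_i$, $s_i^*(t_i)$ solves

$$\max_{s_i \in S_i} \sum_{t_{-i} \in T_{-i}} \pi_i\big(s_1^*(t_1), \ldots, s_{i-1}^*(t_{i-1}), s_i, s_{i+1}^*(t_{i+1}), \ldots, s_n^*(t_n); t\big)\, p_i(t_{-i} \mid t_i),$$

where $p_i(t_{-i} \mid t_i)$ is player $i$'s belief about the other players' types given her own type $t_i$.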

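Each type of player 2 occurs with probability $\frac{1}{2}$. Player 2's equilibrium strategy is $s_2^*(t_2) = \ldots$ and $s_2^*(t_2') = R$. Against it, player 1's expected payoffs are

$$\tfrac{1}{2} \cdot 3 + \tfrac{1}{2} \cdot 2 = \tfrac{5}{2} \quad \text{and} \quad \tfrac{1}{2} \cdot 0 + \tfrac{1}{2} \cdot 4 = 2,$$

so $s_1^* = U$.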
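Player 1's best response in the Bayesian game weights each payoff by her belief over player 2's types. This sketch reuses the numbers from the worked example. Two assumptions are made: the label `"D"` for player 1's other action, and the assignment of each payoff to type `t2` or `t2'` in the order the terms appear.

```python
from fractions import Fraction

# Player 1's beliefs about player 2's type (each type equally likely).
belief = {"t2": Fraction(1, 2), "t2'": Fraction(1, 2)}

# Player 1's payoff from each of her actions against each type of player 2,
# given player 2's type-contingent strategy s2*(.). "D" is an assumed label.
payoff_vs_type = {
    "U": {"t2": 3, "t2'": 2},
    "D": {"t2": 0, "t2'": 4},
}

def expected_payoff(action):
    """Sum over types of p(t2) * pi_1(action, s2*(t2))."""
    return sum(belief[t] * payoff_vs_type[action][t] for t in belief)

best = max(payoff_vs_type, key=expected_payoff)
print(expected_payoff("U"), expected_payoff("D"), best)  # 5/2 2 U
```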

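Player $i$'s reaction (best-response) function is

$$R_i(a_j) = \arg\max_{a_i \in A_i} \Pi_i(a_i, a_j),$$

assuming the second-order condition $\frac{\partial^2 \Pi_i(a_i, a_j)}{\partial a_i^2} < 0$.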

A Nash equilibrium is a pair $(a_i^*, a_j^*)$ such that $a_i^* = R_i(a_j^*)$ and $a_j^* = R_j(a_i^*)$.
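A Nash equilibrium is a fixed point of the two reaction functions, so one way to find it is to iterate best responses. This is a sketch under assumed Cournot payoffs $\Pi_i = a_i(\alpha - a_i - a_j - c)$ with hypothetical values $\alpha = 10$, $c = 1$, so that $R_i(a_j) = (\alpha - c - a_j)/2$ and the equilibrium is $a_i^* = a_j^* = (\alpha - c)/3 = 3$.

```python
ALPHA, C = 10.0, 1.0  # hypothetical demand intercept and marginal cost

def R(a_other):
    """Reaction function R_i(a_j) = argmax over a_i of a_i * (ALPHA - a_i - a_j - C)."""
    return max(0.0, (ALPHA - C - a_other) / 2)

def nash_by_best_response(a1=0.0, a2=0.0, tol=1e-12, max_iter=1000):
    """Iterate a_i <- R_i(a_j) until a_i* = R_i(a_j*) and a_j* = R_j(a_i*)."""
    for _ in range(max_iter):
        n1, n2 = R(a2), R(a1)
        if abs(n1 - a1) < tol and abs(n2 - a2) < tol:
            return n1, n2
        a1, a2 = n1, n2
    raise RuntimeError("best-response iteration did not converge")

print(nash_by_best_response())  # approximately (3.0, 3.0)
```

The iteration converges here because each reaction function has slope $-\tfrac{1}{2}$, so the distance to the fixed point halves each round. With steeper reaction functions, best-response iteration need not converge even when an equilibrium exists.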

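Differentiating the first-order condition $\frac{\partial \Pi_i(R_i(a_j), a_j)}{\partial a_i} = 0$ with respect to $a_j$ gives

$$\frac{\partial^2 \Pi_i(R_i(a_j), a_j)}{\partial a_i^2} \, \frac{\partial R_i(a_j)}{\partial a_j} + \frac{\partial^2 \Pi_i(R_i(a_j), a_j)}{\partial a_i \partial a_j} = 0,$$

so

$$\frac{\partial R_i(a_j)}{\partial a_j} = -\frac{\partial^2 \Pi_i(R_i(a_j), a_j) / \partial a_i \partial a_j}{\partial^2 \Pi_i(R_i(a_j), a_j) / \partial a_i^2}.$$

Since the denominator is negative by the second-order condition,

$$\operatorname{sign}\left(\frac{\partial R_i(a_j)}{\partial a_j}\right) = \operatorname{sign}\left(\frac{\partial^2 \Pi_i(R_i(a_j), a_j)}{\partial a_i \partial a_j}\right).$$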

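Actions are strategic complements if $\frac{\partial^2 \Pi_i}{\partial a_i \partial a_j} > 0$ (upward-sloping reaction functions) and strategic substitutes if $\frac{\partial^2 \Pi_i}{\partial a_i \partial a_j} < 0$ (downward-sloping reaction functions).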
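The sign result can be checked numerically: estimate the cross-partial $\partial^2 \Pi_i / \partial a_i \partial a_j$ and the slope $\partial R_i / \partial a_j$ by finite differences and compare signs. The two profit functions are hypothetical. One is a Cournot quantity game, with cross-partial $-1$ (strategic substitutes). The other is a differentiated-products price game, with cross-partial $0.5$ (strategic complements). Both are strictly concave in $a_i$, as the second-order condition requires.

```python
def cross_partial(profit, ai, aj, h=1e-4):
    """Central finite-difference estimate of d^2 Pi_i / (d a_i d a_j)."""
    return (profit(ai + h, aj + h) - profit(ai + h, aj - h)
            - profit(ai - h, aj + h) + profit(ai - h, aj - h)) / (4 * h * h)

def best_response(profit, aj, lo=0.0, hi=100.0, tol=1e-10):
    """R_i(a_j) by ternary search, assuming Pi_i is strictly concave in a_i."""
    while hi - lo > tol:
        m1, m2 = lo + (hi - lo) / 3, hi - (hi - lo) / 3
        if profit(m1, aj) < profit(m2, aj):
            lo = m1
        else:
            hi = m2
    return (lo + hi) / 2

def slope(profit, aj, h=1e-3):
    """Finite-difference estimate of dR_i / da_j."""
    return (best_response(profit, aj + h) - best_response(profit, aj - h)) / (2 * h)

# Hypothetical profit functions Pi_i(a_i, a_j):
def cournot(q_i, q_j):
    return q_i * (10 - q_i - q_j - 1)          # cross-partial -1: substitutes

def bertrand(p_i, p_j):
    return (p_i - 1) * (10 - p_i + 0.5 * p_j)  # cross-partial 0.5: complements

for name, f, ai, aj in [("Cournot", cournot, 3.0, 2.0), ("Bertrand", bertrand, 6.0, 5.0)]:
    print(name, round(cross_partial(f, ai, aj), 3), round(slope(f, aj), 3))
```

Analytically, the slopes are $-(-1)/(-2) = -\tfrac{1}{2}$ for the quantity game and $-(0.5)/(-2) = \tfrac{1}{4}$ for the price game. In each case the slope has the same sign as the cross-partial, as the derivation predicts.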