SLIDE 1 Linköping University 2011-03-28 Department of Computer and Information Science (IDA) Concurrent programming, Operating systems and Real-time operating systems (TDDI04) 17

Process and thread answers

- 1. There is no exact answer, but from reasoning you should be able to come up with

something very similar: a) mp3 player, X-window system, terminal, bash, top, clock, word processor b) user threads in mp3: mixer chart, rolling text, playback; user threads in word processor: writing, spell checking, animation; kernel threads: at least one per process, at most one per user thread. c) Discussed on lesson. d) Discussed on lesson. e) Discussed on lesson.

- 2. Efficient use of resources, Convenience, Standard programming interface

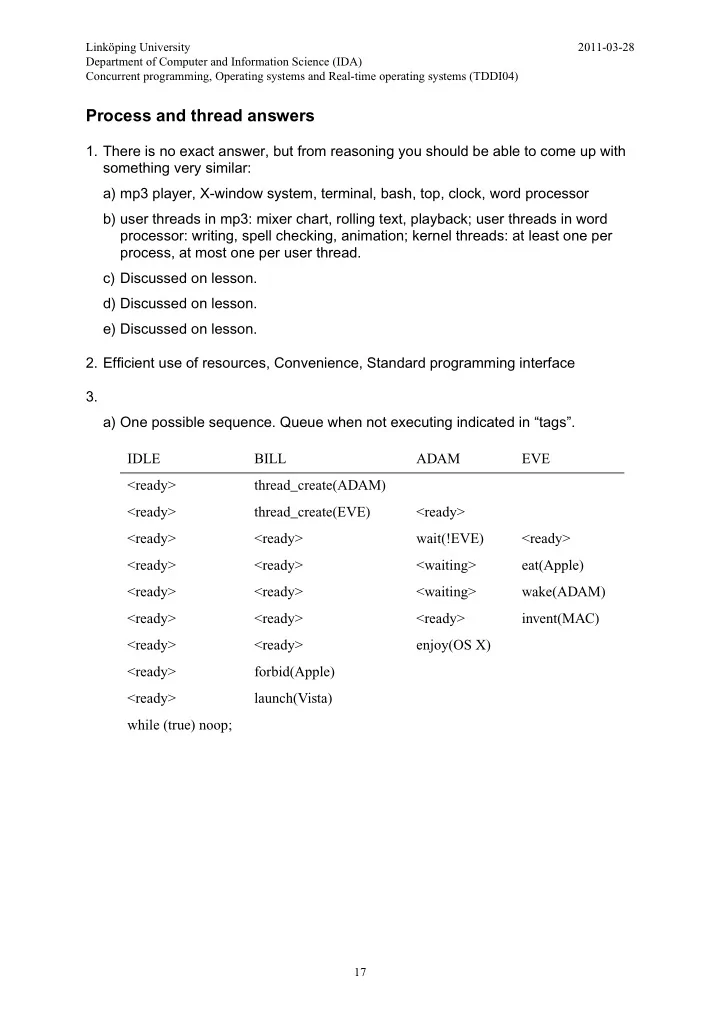

3. a) One possible sequence. Queue when not executing indicated in “tags”. IDLE BILL ADAM EVE <ready> thread_create(ADAM) <ready> thread_create(EVE) <ready> <ready> <ready> wait(!EVE) <ready> <ready> <ready> <waiting> eat(Apple) <ready> <ready> <waiting> wake(ADAM) <ready> <ready> <ready> invent(MAC) <ready> <ready> enjoy(OS X) <ready> forbid(Apple) <ready> launch(Vista) while (true) noop;

SLIDE 2

TDDI04 2011-03-25 18

b) One possible sequence. Queue when not executing indicated in “tags”. c) That you run thread create does not mean the thread starts immediately. the creator may even exit before the created thread start execution. You also see that the order of execution between threads may vary, even though the order of execution within one single thread is sequential. 4. a) A program during execution and all information about that executing program: id, memory, space, thread info, open files, execution time, owner, priority, etc. Executes in user mode. Have one address space protected by OS - no process can access the memory of another process. May share it’s execution environment among several user threads. Thread(s) keep track of executing stack and saved CPU context. b) A structure that keeps track of the execution of one code sequence, to be able to switch between different code sequences, obtaining time-sharing of the CPU - seemingly simultaneous execution of the code sequences. Need to save id, execution stack and saved CPU context of each sequence. c) They enable seemingly simultaneous execution of different code, making programs tasks that should go on in parallel easier to manage. They allow efficient use of both uniprocessors (other threads use the CPU when one must wait) and multiprocessors (need enough tasks to occupy every CPU). d) It will keep track of all things (and more details) listed in a). e) It can be ready, executing or waiting. In addition it can be new - just created - but then immediately transition to ready. And it can be terminated - scheduled for deletion by the next thread. The queues that exists are the ready queue and many waiting queues. IDLE BILL ADAM EVE <ready> thread_create(ADAM) <ready> <ready> <ready> wait(!EVE) <ready> thread_create(EVE) <waiting> <ready> forbid(Apple) <waiting> <ready> <ready> launch(Vista) <waiting> <ready> <ready> <waiting> eat(Apple) <ready> <waiting> wake(ADAM) <ready> enjoy(OS X) <ready> <ready> invent(MAC) while (true) noop;

SLIDE 3 TDDI04 2011-03-25 19

- 5. Require that you think twice! The answer here assume

a) P processes can execute - one per real CPU-core. b) Only processes in RAM are ready. M processes can be held in memory, but if they are ready P of them will execute. The OS will keep the CPU busy when possible. Thus M - P processes can be ready. c) D I/O devices probably mean D wait queues - one per device. All M processes can be queued here. 6. a) See figure 4.10 on page 180 in Stallings book. In “Dinosaur” book, see section 2.7.2 - 2.7.3 on page 59 - 62. b) See figure 4.10 (a) on page 180 in Stallings book: thread/process management - > memory management -> disk management. When starting a program the OS must first create thread and process structures, then allocate memory for the program, and then load the program from disk. 7. a) The OS will in fact only execute as a response to an interrupt! Interrupts provide a means of requesting service from the OS - system Call. They provide the OS means to respond to events - not wait for them. b) Mainly for system-call and automatic thread switches. Also to handle virtual

- memory. And of course to handle other hardware-device events.

- 8. One possible solution:

void* content[128]; /* every pos initiated to NULL in main */ struct lock mutex[128]; /* every lock initiated in main */ int array_add(void* data) { bool found = false; for (int i = 0; i < 128 && !found; ++i) { lock_acquire(&mutex[i]); if (content[i] == NULL) /* determine if index is free */ { content[i] = data; /* save data pointer in free index */ found = true; } lock_release(&mutex[i]); } return i; /* return index used */ } void* array_remove(int id) /* STUDY: Can any sequence go wrong here? */ { void* ret = content[id]; /* temporarily save the index’s pointer */ content[id] = NULL; /* mark the index as free */ return ret; /* return the pointer that was removed */ }

SLIDE 4 TDDI04 2011-03-25 20

9. a) Processes operate in limited user mode and OS operate in unlimited kernel

- mode. This separation allow the OS to protect OS code from process

modification, to prevent processes from stealing resources (CPU, memory, disk, etc.), and processes from interfering with each other. System calls is needed to allow processes (user mode) to request that the OS perform some action (kernel mode). Since it is the OS that perform the kernel-mode action it will still be in

- control. Allowed actions, or services, are predefined by the OS and names

system calls. The system calls also give the application programmer a standardized interface to functionality. System call details needed to request the transition are normally hidden in a normal (user mode) function to make it easy to use. b) See a) and c). c) Steps to perform a system call bool create(char* filename) WHAT WHO MODE enter create(“kalle”) process user place parameter on process stack process user place CREATE label on process stack process user execute system call interrupt process user switch to kernel mode CPU user save CPU context to kernel thread stack CPU kernel switch to kernel mode thread execution stack CPU kernel jump to interrupt handler CPU kernel start system call handler OS kernel verify that the call is valid OS kernel determine service to execute (CREATE) OS kernel locate parameters and check that they are valid OS kernel execute service (create file “kalle”) OS kernel place result where the process will look for it later (in EAX) OS kernel return from interrupt OS kernel switch to user mode CPU kernel restore saved CPU context from kernel stack (including instruction pointer and stack pointer) CPU user read return value (from EAX) process user return from create(“kalle“) process user

SLIDE 5 TDDI04 2011-03-25 21

- 10. Discussion including answers to assignment 15 a) - c).

The following code is in question:

/* sum: A pointer to the variable containing the final sum. start: A pointer to the first value in an array to sum. stop: A pointer which the summation should stop just before. */ void sum_array(int* sum, int* start, int* stop) { while (start < stop) { *sum = *sum + *start; ++start; } } /* Some code in the main function is omitted for brewity. */ int main() { int sum = 0; int array[512]; load_interesting_data_to_array(array, 512); /* Start four threads to sum different parts of the array. * * thread_create schedules (places in ready queue) a new thread * to execute the sum_array function. As this shecdule is just a * list insert it will return quickly, probably (but not always) * before the new thread even staret execution. */ for (int i = 0; i < 4; ++i) thread_create(sum_array, &sum, array + 128*i, array + 128*(i+1)); printf("The sum is: %d\n", sum); }

In the code above we can identify several variables. In main:

sum

locally declared integer, address passed to sum_array, printed

array

address to 512 integers, loaded with data, passed to sum_array

i

local loop variable In sum_array:

sum

address to external integer start start address of array stop address just after the array

SLIDE 6 TDDI04 2011-03-25 22

Further we start four threads, each of them executing sum_array. Thus sum_array code will execute concurrently and may cause error if the code in different thread reference the same memory. Is that the case? sum: always refer to the same variable in main start, stop: refer to different sections of the array in each thread The problem would then be if the memory location referred to by sum simultaneously would be modified or read. Is that the case? Yes, we can see that sum is always de- referenced (*sum) to get the sum variable in main. It is both read and written. If we decompose the relevant line to machine instructions we have:

load *sum to register A load *start to register B add to register A the value in register B store register A to *sum

When two threads, T1 and T2, execute this code correctly. We assume initial values:

*sum = 100; (T1)*start = 40; (T2)*start = 70. T1:A = 100; load *sum to register A T1:B = 40; load *start to register B T1:A = 140; add to register A the value in register B T1:*sum = 140; store register A to *sum T2:A = 140; load *sum to register A T2:B = 70; load *start to register B T2:A = 210; add to register A the value in register B T2:*sum = 210; store register A to *sum

A sequence that become wrong when two threads execute this. We assume initial values: *sum = 100; (T1)*start = 40; (T2)*start = 70.

T1:A = 100; load *sum to register A T1:B = 40; load *start to register B T2:A = 100; load *sum to register A T2:B = 70; load *start to register B T2:A = 170; add to register A the value in register B T2:*sum = 170; store register A to *sum T1:A = 140; add to register A the value in register B T1:*sum = 140; store register A to *sum

The work of T2 is lost! From the start of the operation (*sum is loaded) until the

- peration is complete (*sum is stored) we can not allow *sum to be accessed: the *sum

may not be read, because we are about to give *sum a new value - any read operation would be unaware of our coming change, and the *sum may not be written, because we have already decided to do our operation on the current value of *sum. It become a critical section. To prevent other threads to enter code that access the *sum we must place all such code inside a locking mechanism. The locking mechanism must ensure mutual exclusion - only one thread can enter code that modify our shared *sum.

SLIDE 7 TDDI04 2011-03-25 23

I now assume we have the mechanisms available in PintOS and write an initial attempt to correct the problem in sum_array:

lock sum_lock; /* lock_init(&sum_lock) before thread createing in main */ void sum_array(int* sum, int* start, int* stop) { lock_acquire(&sum_lock); while (start < stop) { *sum = *sum + *start; ++start; } lock_release(&sum_lock); }

Looking at the solution we can identify several problems. The first thread acquire the lock and enters the while loop with the access to *sum. Now no other thread can enter the while loop and thus not access *sum. Mutual exclusion is obtained. However, the second thread can not start it’s summation until the first thread is done and release the

- lock. The threads are forced to execute sequential, and all concurrency and potential

speed-up is gone. The problem is that more than only the critical section is locked. A new attempt:

void sum_array(int* sum, int* start, int* stop) { while (start < stop) { lock_acquire(&sum_lock); *sum = *sum + *start; lock_release(&sum_lock); ++start; } }

In this solution we lock only the critical section, no extra code. The threads can now

- perate concurrently. But we are now forced to acquire and release the lock every

iteration in the loop, the overhead of this solution is severe. Do we really need to do all calculations on the shared *sum? A third solution:

void sum_array(int* sum, int* start, int* stop) { int local_sum = 0; while (start < stop) { local_sum = local_sum + *start; ++start; } lock_acquire(&sum_lock); *sum = *sum + local_sum; lock_release(&sum_lock); }

SLIDE 8 TDDI04 2011-03-25 24

In this solution we add a local variable for each thread to keep it’s sum in. No protection

- f this variable is needed, because it is local to the function and use different memory

in each executing thread. Only in the end, one time, we transfer the local sum to the final sum. Looking at the solution again reveals a design problem. Using global variables (sum_lock) is bad practice. Would we like to create a second array, second_sum, and another four threads to sum the second array to second_sum in the main program they would now use the same global lock. Concurrent access to sum and second_sum would now be mutually exclusive. But sum and second_sum is different variables and do not need mutual exclusion. The problem of removing the global variable is solved after we take a look at the next

- problem. The completed sum is printed last in the main program. That assumes that all

threads are done summing, but that assumption is wrong. Nothing guarantees that the threads are done. If printf is executed before any thread is scheduled the printed value will be 0. How can we guarantee this? First we try with a semaphore. To avoid global variables we pass it to each thread on thread creation.

struct total_sum { int sum; struct lock mutex; struct semaphore ready; } void sum_array(struct total_sum* com, int* start, int* stop) { int local_sum = 0; while (start < stop) { local_sum = local_sum + *start; ++start; } lock_acquire(&com->mutex); com->sum = com->sum + local_sum; lock_release(&com->mutex); sema_up(&com->ready); } int main() { struct total_sum com; int array[512]; com.sum = 0; lock_init(&com.mutex); sema_init(&com.ready, 0); load_interesting_data_to_array(array, 512);

SLIDE 9 TDDI04 2011-03-25 25 /* Start four threads to sum different parts of the array. * * thread_create schedules (places in ready queue) a new thread * to execute the sum_array function. As this shecdule is just a * list insert it will return quickly, probably (but not always) * before the new thread even staret execution. */ for (int i = 0; i < 4; ++i) thread_create(sum_array, &com, array + 128*i, array + 128*(i+1)); for (int i = 0; i < 4; ++i) sema_down(&com.ready); printf("The sum is: %d\n", com.sum); }

Now to some questions on the solution: Why do we initiate the semaphore to 0? We have four threads, why do we not initiate the semaphore to 4 then? A semaphore counts available resources. In this case the resource, or what we are waiting for, is “ready summations”. Why do not have any ready summations from start, so it is correct to initialize to zero. We make resources (“ready summations”) available later by executing sema_up. There is no limit on how many free resources a semaphore can have, and the initial value does not have to be maintained. Should not sema_down and sema_up be swapped?

- No. We obtain one resource (“ready summation”) after the completion of each sum,

and should thus call sema_up add that resource to the count. Can we not call sema_up just after the while loop, immediately when we have the sum? The main program need the sum added to com->sum, the resource, the summation, is not completely available until we have added it to com->sum. If we do sema_up before the resource is completely ready it may also be used before it is ready (the main program may print the sum before it is added to com->sum). Why do we have an extra loop to do sema_down, can we not do thread_create and

sema_down in the same loop?

Then the program would wait for one resource to become available after each thread creation, forcing the threads to execute sequentially. We want to start all threads before waiting for any, to get concurrent execution. Why do we do sema_down four times? We must wait for all four threads to complete the summation. sema_down only consume one resource, and we need all four to continue. Can we solve the problem with locks? Conditions? Certainly we can:

SLIDE 10

TDDI04 2011-03-25 26 struct total_sum { int sum; struct lock mutex; int done_counter; struct condition done_cond; }; void sum_array(struct total_sum* com, int* start, int* stop) { int local_sum = 0; while (start < stop) { local_sum = local_sum + *start; ++start; } lock_acquire(&com->mutex); com->sum = com->sum + local_sum; com->done_counter = com->done_counter + 1; cond_signal(&com->done_cond, &com->mutex); lock_release(&com->mutex); } int main() { struct total_sum com; int array[512]; com.sum = 0; com.done_counter = 0; lock_init(&com.mutex); cond_init(&com.done); load_interesting_data_to_array(array, 512); /* Start four threads to sum different parts of the array. * * thread_create schedules (places in ready queue) a new thread * to execute the sum_array function. As this shecdule is just a * list insert it will return quickly, probably (but not always) * before the new thread even staret execution. */ for (int i = 0; i < 4; ++i) thread_create(sum_array, &com, array + 128*i, array + 128*(i+1)); lock_acquire(&com->mutex); while (com.done_counter < 4) cond_wait(&com->done_cond, &com->mutex); lock_release(&com->mutex); printf("The sum is: %d\n", com.sum); }

How do we arrive at this solution? First we create a variable to count the number of threads ready with the calculation:

com->done_counter = com->done_counter + 1;

SLIDE 11 TDDI04 2011-03-25 27

Then one way to wait is a loop:

while (com.done_counter < 4) ; /* empty loop */

This solution have two problems. done_counter is accesses concurrently from the

- threads. Thus it must be protected by a lock. Since done_counter and sum is always

updated together by the same threads we can reuse the same mutex lock in this

- case. The second problem is that the busy-loop waste CPU time by only waiting,

and after acquiring the mutex this wait would be indefinite, since no-one can update

done_counter while it is locked. Thus we must employ cond_wait function to do the

waiting in the loop - it is designed specifically to solve both of those problem.

SLIDE 12 TDDI04 2011-03-25 28

- 11. One possible solution:

struct message { bool bad; struct semaphore ready; }; /* Open the file as a zip file and scan each file inside for viruses. * Set the output parameter bad to true if the zip is invalid. */ void unpack_and_scan(const char* filename, struct message* msg) { zip = open_zip(filename); > msg->bad = open_failed(zip); > sema_up(&msg->ready); > > if ( msg.bad ) > return; /* Iterate each file contained in the zip and scan it. */ for (file = zip_first(zip); file != NULL; file = zip_next(zip)) { scan_regular_file(file); } } /* Scan all files given as argument to main. * > indicate lines important for the assignemnt. */ int main(int argc, char* argv[]) { int i; for (i = 0; i < argc; ++argc) { switch ( file_extension(argv[i]) ) { case ZIP: { > struct message msg; > > msg.bad = false; > sema_init(&bad_file.ready, 0); /* thread_create schedules a new thread to execute * unpack_and_scan. As schedule is just a list insert it * will return quickly, probably before the new thread even * stared execution. */ thread_create(unpack_and_scan, argv[i], &msg); > sema_down(&msg.ready); > > if ( ! msg.bad ) > { > break; /* break switch and continue for loop. */ > } /* Fall through to COM,EXE,DLL action since break is missing.*/ } case COM,EXE,DLL: ...

SLIDE 13 TDDI04 2011-03-25 29

- 12. One possible solution:

struct stack_node /* Represents one item on the stack. */ { struct stack_node* next; int data; }; struct stack /* Represents the entire stack. */ { struct stack_node* top; struct lock mutex; struct semaphore available_items; }; /* Initiate the stack to become empty. * Called once before any threads are started. */ void stack_init(struct stack* s) { s->top = NULL; lock_init(&s->mutex); sema_init(&s->available_items, 0); /* no items from start */ } /* Pop one item from the stack if possible. The stack is specified as * parameter. Many threads use this function simultaneously. */ int stack_pop(struct stack* s) { sema_down(&s->available_items); lock_acquire(&s->mutex); struct stack_node* popped = s->top; /* Get the top element */ if (popped != NULL) /* Was it anything there? */ s->top = popped->next; /* Remove the top element */ lock_release(&s->mutex); if (popped == NULL) /* Was it anything there? */ return -1; /* The stack was empty */ int data = popped->data; /* Save the data we want */ free(popped); /* Free removed element memory */ return data; /* Return the saved data */ } /* Push one item to the stack. The stack and the integer data to push * are specified as parameters. Many threads use this function. */ void stack_push(struct stack* s, int x) { struct stack_node* new_node = malloc(sizeof(struct stack_node)); new_node->data = x; lock_acquire(&s->mutex); new_node->next = s->top; s->top = new_node; lock_release(&s->mutex); sema_up(&s->available_items); } ...

SLIDE 14 TDDI04 2011-03-25 30

- 13. One possible solution:

#define SIZE 256 /* With this size and 8-bit read- and write positions the position * counters will automatically go from 255 around to 0. * Else we must add code to wrap around (position % SIZE).*/ /* This structure represent a bounded buffer (queue) */ struct bounded { int buffer[SIZE]; int8 read_pos; int8 write_pos; struct semaphore free_slots; /* the available resourse is free slots */ struct semaphore busy_slots; /* the available resourse is busy slots */ struct lock read_mutex; struct lock write_mutex; }; void bounded_init(struct bounded* b) { b->read_pos = 0; b->write_pos = 0; lock_init(&b->read_mutex); lock_init(&b->write_mutex); sema_init(&b->free_slots, SIZE); sema_init(&b->busy_slots, 0); } /* Read the oldest value in the queue. */ int bounded_read(struct bounded* b) { /* We must wait until the queue have at least one value. */ sema_down(&b->busy_slots); /* Read the value from the read position. */ lock_acquire(&b->read_mutex); int ret = b->buffer[b->read_pos++]; lock_release(&b->read_mutex); sema_up(&b->free_slots); return ret; } /* Write a new value to the next free position in the queue. */ void bounded_write(struct bounded* b, int x) { /* We must wait until at least one slot is available. */ sema_down(&b->free_slots); lock_acquire(&b->write_mutex); b->buffer[b->write++] = x; lock_release(&b->write_mutex); sema_up(&b->busy_slots); } ...

SLIDE 15 TDDI04 2011-03-25 31

- 14. One possible solution:

bool seats[ROWS][COLUMNS]; /* true mean occupied */ struct lock row_lock[ROWS]; /* different rows can be used in parallell */ /* The main program may be an internet server for reservation of * movie seats. It sets all seats to free initially, and starts * one reservation thread each time a user connects to the server. */ /* Reserve ‘n’ consecutive seats in ‘row’ */ bool reserve_seat(int n, int row) { int max_start = 0; int max_count = 0; int c = 0; lock_acquire(&row_lock[row]); while (c < COLUMNS) { int start; int end; while (c < COLUMNS && seats[row][c] == true) ++c; start = c; /* first free seat in row */ while (c < COLUMNS && seats[row][c] == false) ++c; end = c; /* column of the next occupies seat */ /* Is it the longest sequence of free seats in row? */ if (max_count < (end - start)) { max_start = start; max_count = end - start; } } /* Was the longest free sequence long enough? */ if (max_count < n) { lock_release(&row_lock[row]); return false; } /* Allocate the seats. We must prevent access to any of the seats in * the sequence found from the point of first checking if the seat is * free up to the point the entire sequence is reserved. */ for (c = max_start; c < (max_start + n); ++c) { seats[row][c] = true; } lock_release(&row_lock[row]); return true; }

- 15. See assignments 10-14.

SLIDE 16

TDDI04 2011-03-25 32