26/05/2016 1

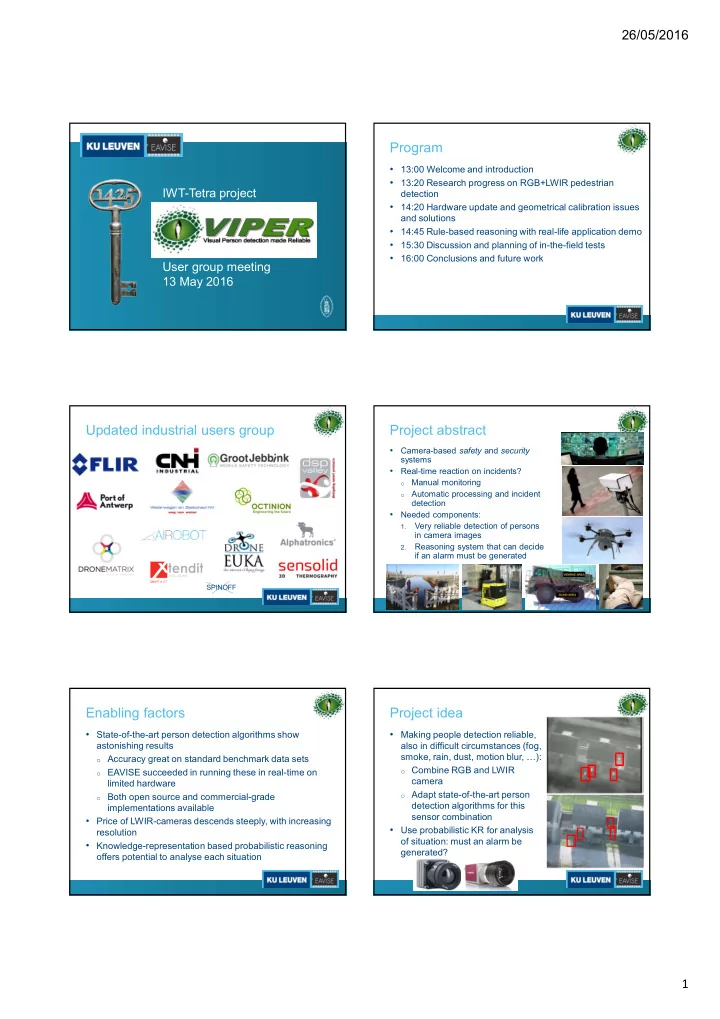

IWT-Tetra project User group meeting 13 May 2016

Program

- 13:00 Welcome and introduction

- 13:20 Research progress on RGB+LWIR pedestrian detection

- 14:20 Hardware update and geometrical calibration issues and solutions

- 14:45 Rule-based reasoning with real-life application demo

- 15:30 Discussion and planning of in-the-field tests

- 16:00 Conclusions and future work

Updated industrial users group

SPINOFF

Project abstract

- Camera-based safety and security systems

- Real-time reaction to incidents?

- Manual monitoring

- Automatic processing and incident detection

- Needed components:

1. Very reliable detection of persons in camera images

2. Reasoning system that can decide if an alarm must be generated

Enabling factors

- State-of-the-art person detection algorithms show astonishing results

- High accuracy on standard benchmark data sets

- EAVISE succeeded in running these in real-time on limited hardware

- Both open source and commercial-grade implementations available

- Price of LWIR cameras is dropping steeply while resolution increases

- Knowledge-representation based probabilistic reasoning offers potential to analyse each situation

Project idea

- Making people detection reliable, also in difficult circumstances (fog, smoke, rain, dust, motion blur, …):

- Combine RGB and LWIR camera

- Adapt state-of-the-art person detection algorithms for this sensor combination

- Use probabilistic KR for analysis of the situation: must an alarm be generated?
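The two-stage idea above (fuse RGB and LWIR detections, then decide on an alarm) could look roughly like the sketch below. It is only an illustration under stated assumptions: the noisy-OR fusion rule, the `in_restricted_zone` flag, the 0.8 threshold and all names are hypothetical and are not taken from the project's actual detector or reasoning system.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    """Hypothetical per-person detector confidences, each in [0, 1]."""
    rgb_score: float   # confidence from the RGB image
    lwir_score: float  # confidence from the LWIR (thermal) image


def fuse_scores(det: Detection) -> float:
    """Noisy-OR fusion (an assumed rule): a person is taken to be
    present unless both sensors miss them."""
    return 1.0 - (1.0 - det.rgb_score) * (1.0 - det.lwir_score)


def alarm_needed(det: Detection, in_restricted_zone: bool,
                 threshold: float = 0.8) -> bool:
    """Toy stand-in for the reasoning step: raise an alarm only when a
    person is likely present AND the context makes that a problem."""
    return in_restricted_zone and fuse_scores(det) >= threshold


if __name__ == "__main__":
    # Smoke scenario: RGB is nearly blind, LWIR still sees the person.
    smoky = Detection(rgb_score=0.2, lwir_score=0.9)
    print(fuse_scores(smoky))
    print(alarm_needed(smoky, in_restricted_zone=True))
```

The point of the sketch is that a weak RGB response in fog or smoke does not suppress a strong LWIR response, while the alarm decision still depends on context rather than on detection alone.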