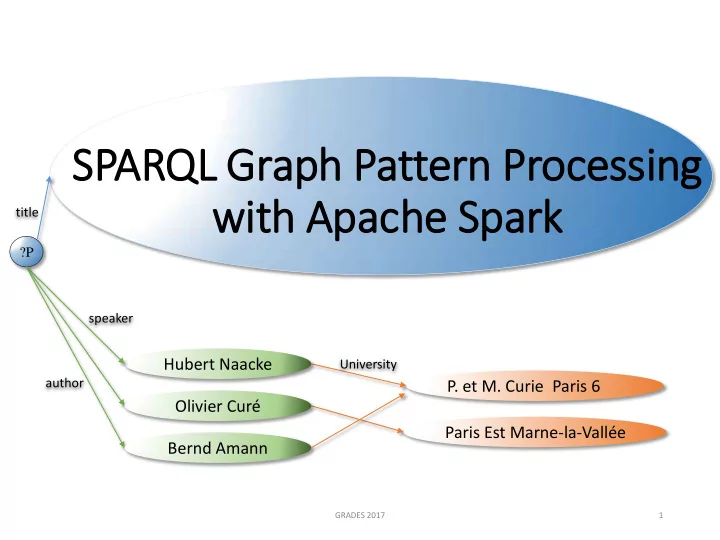

SPARQL Graph Pattern Processing with Apache Spark

GRADES 2017 1

Hubert Naacke (speaker), Olivier Curé, Bernd Amann

University P. et M. Curie Paris 6; University Paris Est Marne-la-Vallée


Context: Big RDF data, Linked Open Data


Example graph patterns:

Chain pattern from the LUBM benchmark: t1 = ?x advisor ?y, t2 = ?y teacherOf ?z, t3 = ?z type Course

Pattern from the WatDiv benchmark (figure labels: includes, ?x, ?u, Retail0)

➭ Leverage an existing platform


Architecture: a SPARQL graph pattern query is evaluated over RDF triples, stored with no compression or with data compression.

Spark layers: cluster resource management, distributed file system, Resilient Distributed Datasets (RDD), and on top of them DataFrame (DF), SQL and GraphX

Query processing methods: SPARQL SQL, SPARQL DF, SPARQL RDD; our solutions: Hybrid DF, Hybrid RDD

➭ Favor local computation
➭ Reduce data transfers


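Plan cost:

$$\mathrm{cost}(Q9_1) = C_{t_1} + C_{t_2} + C_{t_2 \bowtie t_3}$$
$$\mathrm{cost}(Q9_2) = m\,(C_{t_2} + C_{t_3})$$
$$\mathrm{cost}(Q9_3) = C_{t_1} + m\,C_{t_3}$$

with $C_{pattern} = \mathrm{transferCost}(pattern)$, $\theta_{comm}$ the unit transfer cost, and $m = \#\mathrm{computeNodes} - 1$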

Q9: SELECT * WHERE { ?x advisor ?y . ?y teacherOf ?z . ?z type Course }

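Triple patterns of Q9: t1 = ?x advisor ?y, t2 = ?y teacherOf ?z, t3 = ?z type Course

SPARQL RDD plan (Q9₁): partitioned joins (P) on ?y and on ?z
SPARQL DF plan (Q9₂): broadcast joins (B) on ?y and on ?z
SPARQL Hybrid plan (Q9₃): partitioned join (P) on ?y, then broadcast join (B) on ?z

Legend: P = partitioned join (distribute), B = broadcast join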
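The three plan-cost formulas for Q9 can be compared numerically. The sketch below is a minimal one that applies them with $C_p = \theta_{comm} \cdot \mathrm{size}(p)$. The pattern sizes, the size of t2 ⋈ t3 and the node count are invented for illustration, and `plan_costs` is a hypothetical helper rather than part of the authors' system.

```python
def plan_costs(sizes, size_t2_join_t3, n_nodes, theta_comm=1.0):
    """Transfer cost of the three Q9 plans, following the slide's formulas.

    sizes: hypothetical estimated size of each triple pattern, keyed "t1", "t2", "t3".
    """
    m = n_nodes - 1  # m = #computeNodes - 1
    c = {t: theta_comm * s for t, s in sizes.items()}  # C_p = theta_comm * size(p)
    c_t2_t3 = theta_comm * size_t2_join_t3
    return {
        "Q9_1": c["t1"] + c["t2"] + c_t2_t3,  # SPARQL RDD: partitioned joins only
        "Q9_2": m * (c["t2"] + c["t3"]),      # SPARQL DF: broadcast joins only
        "Q9_3": c["t1"] + m * c["t3"],        # Hybrid: Pjoin on ?y, BrJoin on ?z
    }

# Invented statistics: t3 (?z type Course) is small, t2 is large.
costs = plan_costs({"t1": 1000, "t2": 5000, "t3": 50}, size_t2_join_t3=200, n_nodes=10)
best = min(costs, key=costs.get)
print(costs, best)  # the Hybrid plan Q9_3 is cheapest for these numbers
```

With a small t3, broadcasting it costs little. Shuffling t1 once is also cheaper than broadcasting the large t2, which is why the hybrid plan wins here.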


Query shapes: Star, Snowflake, Complex

➭ One dataset, <Subject> partitioning: Hybrid DF accelerates DF by up to 2.4 times
➭ One dataset per property, <Property> and <Subject> partitioning: Hybrid accelerates S2RDF by up to 2.2 times

SPARQL queries at large scale.

More info at the poster session … Thank you. Questions?


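Plans for Q9:

SPARQL RDD plan (Q9₁): partitioned joins (P) on ?y and on ?z
SPARQL SQL plan (Q9₂): broadcast joins (B) on ?y and on ?z
SPARQL Hybrid plan (Q9₃): partitioned join (P) on ?y, then broadcast join (B) on ?z

Plan cost:

$$\mathrm{cost}(Q9_1) = C_{t_1} + C_{t_2} + C_{t_2 \bowtie t_3}$$
$$\mathrm{cost}(Q9_2) = m\,(C_{t_2} + C_{t_3})$$
$$\mathrm{cost}(Q9_3) = C_{t_1} + m\,C_{t_3}$$

with $C_{pattern} = \theta_{comm} \cdot \mathrm{size}(pattern)$, $\theta_{comm}$ the unit transfer cost, and $m = \#\mathrm{computeNodes} - 1$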

Dataset (subject, prop, object), e.g. s3 p1 o2, s2 p1 o2, s2 p3 o4

BDA 2016 15

Triples s1 p1 o1, s1 p2 o3, s1 p1 o1, s2 p1 o2, s3 p1 o2, s1 p2 o3, s2 p3 o4, ... split into Part 1, Part 2, ..., Part N


Resources: compute nodes (node 1, node 2, ..., node N), each with memory and CPU; communication between nodes is expensive.

Legend: piece of data, operation, result


Partitioned dataset (Part 1, Part 2, ..., Part N) on compute nodes 1 to N: each node runs select on its own part and produces Result 1, Result 2, ..., Result N, i.e. a partitioned result.

Examples of local MAP operations: selection, projection, join on subject
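A join on subject counts as a local MAP operation because, under subject hash partitioning, every triple of a given subject sits on the same node. The sketch below is a minimal plain-Python simulation of that idea, not Spark code. It uses the example triples listed on the "Data" slide of this deck, without the slide's trailing "…". Three nodes are assumed, and `node_of`, `partition_by_subject` and `local_star` are hypothetical names. `crc32` stands in for Spark's hash partitioner.

```python
import zlib
from collections import defaultdict

# The triples listed on the "Data" slide (its trailing "…" is not reproduced).
TRIPLES = [
    ("P2", "lab", "L3"), ("P2", "age", "20"), ("P2", "name", "Bob"),
    ("P4", "lab", "L1"), ("P1", "lab", "L1"), ("P1", "name", "Ali"),
    ("P3", "lab", "L2"), ("P3", "name", "Clo"), ("L1", "at", "Poitiers"),
    ("L1", "since", "2000"), ("L3", "at", "Paris"), ("L3", "staff", "200"),
    ("L2", "at", "Aix"), ("L2", "at", "Toulon"), ("L2", "partner", "L1"),
]

def node_of(key, n_nodes):
    """Stable hash (crc32) so the placement does not change between runs."""
    return zlib.crc32(key.encode()) % n_nodes

def partition_by_subject(triples, n_nodes):
    parts = [[] for _ in range(n_nodes)]
    for s, p, o in triples:
        parts[node_of(s, n_nodes)].append((s, p, o))
    return parts

def local_star(part, p1, p2):
    """Local MAP step: join ?s p1 ?a . ?s p2 ?b inside a single partition."""
    first = defaultdict(list)
    for s, p, o in part:
        if p == p1:
            first[s].append(o)
    return [(s, a, o) for s, p, o in part if p == p2 for a in first[s]]

parts = partition_by_subject(TRIPLES, 3)
# Every node works on its own partition; no triple is sent to another node.
result = sorted(row for part in parts for row in local_star(part, "lab", "name"))
print(result)  # [('P1', 'L1', 'Ali'), ('P2', 'L3', 'Bob'), ('P3', 'L2', 'Clo')]
```

The result is the same whichever node each subject lands on. Only the placement changes with the hash function.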


Dataset (Part 1, Part 2, ..., Part n) → data transfers → a global (REDUCE) operation on each node → Result 1, Result 2, ..., Result n

Examples of global REDUCE operations: join, sort, distinct
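A global REDUCE operation such as distinct cannot run on the parts as they are, because equal values may sit on different nodes. The sketch below is a minimal plain-Python illustration, not Spark's implementation. It first shuffles records by a hash of their key and counts how many records change node (the data transfers), and only then deduplicates locally. The input partitions are hypothetical, and `crc32` stands in for Spark's partitioner.

```python
import zlib

def node_of(key, n_nodes):
    return zlib.crc32(key.encode()) % n_nodes

def shuffle(parts, key):
    """Send each record to the node that owns hash(key(record))."""
    n = len(parts)
    out = [[] for _ in range(n)]
    moved = 0  # records that leave their current node = data transfers
    for src, part in enumerate(parts):
        for rec in part:
            dst = node_of(key(rec), n)
            if dst != src:
                moved += 1
            out[dst].append(rec)
    return out, moved

def global_distinct(parts, key=lambda r: r):
    """Global REDUCE: after the shuffle, equal keys meet on the same node,
    so each node can drop its duplicates independently."""
    shuffled, moved = shuffle(parts, key)
    return [sorted({key(r) for r in part}) for part in shuffled], moved

# Hypothetical partitions holding the city of each `at` triple.
parts = [["Poitiers", "Aix"], ["Paris", "Aix"], ["Toulon", "Poitiers"]]
result, moved = global_distinct(parts)
print(result, moved)
```

How many records move depends on the hash. Each distinct value ends up on exactly one node.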

Data: P2 lab L3, P2 age 20, P2 name Bob, P4 lab L1, P1 lab L1, P1 name Ali, P3 lab L2, P3 name Clo, L1 at Poitiers, L1 since 2000, L3 at Paris, L3 staff 200, L2 at Aix, L2 at Toulon, L2 partner L1, …

Star query: patterns lab, name and age on the same subject ?P: no transfer

Chain query: ?P lab ?L . ?L at ?V: transfer lab or at

Snowflake and complex queries: patterns lab ?L, age ?a, name ?n on ?P combined with at ?V, staff ?s, partner ?N on ?L
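Whether a query needs data transfers under subject partitioning depends on how many stars it contains, that is, on how many distinct subjects its triple patterns have. The sketch below is a minimal illustration of that rule. It assumes every subject is a variable, and the names `stars` and `needs_transfer` are hypothetical. The star pattern's age object `?a` and the snowflake query are illustrative readings of the slide figure, not exact copies.

```python
from collections import defaultdict

def stars(bgp):
    """Group triple patterns by subject: each group is a star."""
    groups = defaultdict(list)
    for s, p, o in bgp:
        groups[s].append((s, p, o))
    return dict(groups)

def needs_transfer(bgp):
    """Under subject partitioning, only a single star is evaluated locally."""
    return len(stars(bgp)) > 1

star = [("?P", "lab", "?L"), ("?P", "name", "?N"), ("?P", "age", "?a")]
chain = [("?P", "lab", "?L"), ("?L", "at", "?V")]
# Hypothetical snowflake: a star on ?P linked to a star on ?L.
snowflake = star + [("?L", "at", "?V"), ("?L", "staff", "?s")]
print(needs_transfer(star), needs_transfer(chain), needs_transfer(snowflake))
# False True True
print(sorted(stars(snowflake)))  # ['?L', '?P']
```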


Partitioned join of ?P lab ?L with ?L at ?V (the result is partitioned on L):

Join on L1: P1 lab L1 at Poitiers; P4 lab L1 at Poitiers
Join on L2: P3 lab L2 at Aix; P3 lab L2 at Toulon
Join on L3: P2 lab L3 at Paris

Data transfers = sum of repartitioned datasets
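The rule "data transfers = sum of repartitioned datasets" reduces to a one-line computation once the current partitioning of each input is known. In the sketch below, `Input` and `pjoin_transfers` are hypothetical names. The sizes come from the example: 4 lab triples and 4 at triples.

```python
from dataclasses import dataclass

@dataclass
class Input:
    pattern: str
    size: int            # number of triples
    partitioned_on: str  # variable the input is currently hash-partitioned on

def pjoin_transfers(inputs, join_var):
    """Partitioned join: data transfers = sum of the repartitioned inputs."""
    return sum(i.size for i in inputs if i.partitioned_on != join_var)

lab = Input("?P lab ?L", 4, "?P")  # subject partitioning places lab on ?P
at = Input("?L at ?V", 4, "?L")    # the subject of `at` is ?L: already in place
print(pjoin_transfers([lab, at], "?L"))  # 4: only lab is repartitioned
```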


Repartitioning: every partition of both datasets is hashed on L.

Partitioned dataset (Part 1 ... Part n for each input): P1 lab L1, P3 lab L2, C1 loc L3, C3 loc L1, P2 lab L3, P4 lab L1, C2 loc L1, C4 loc L2

After hashing on L, the partition for L1 holds P1 lab L1, P4 lab L1, C3 loc L1, C2 loc L1


Broadcast join on L:

Smaller broadcast dataset: L1 at Poitiers, L2 at Aix, L2 at Toulon, L3 at Paris
Larger target dataset: Part 1 = P1 lab L1, P3 lab L2; Part n = P2 lab L3, P4 lab L1
Result, which preserves the target partitioning: Part 1 = P1 lab L1 at Poitiers, P3 lab L2 at Aix, P3 lab L2 at Toulon; Part n = P2 lab L3 at Paris, P4 lab L1 at Poitiers

Data transfers = small dataset * nb of compute nodes
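The two transfer formulas show when broadcasting beats repartitioning. The sketch below follows this slide's formula, which counts every compute node; the Q9 cost model uses $m = \#\mathrm{computeNodes} - 1$ instead. The helper names are hypothetical, and the two-node setting and the 1,000,000-triple target are invented for illustration.

```python
def brjoin_transfers(small_size, n_nodes):
    """Broadcast join: data transfers = small dataset * nb of compute nodes."""
    return small_size * n_nodes

def pjoin_transfers(repartitioned_sizes):
    """Partitioned join: data transfers = sum of repartitioned datasets."""
    return sum(repartitioned_sizes)

def cheaper_join(small_size, repartitioned_sizes, n_nodes):
    b = brjoin_transfers(small_size, n_nodes)
    p = pjoin_transfers(repartitioned_sizes)
    return ("broadcast", b) if b < p else ("partitioned", p)

# Broadcasting the 4 `at` triples to 2 nodes vs repartitioning the 4 lab triples:
print(cheaper_join(4, [4], 2))          # ('partitioned', 4)
# Hypothetical: a 1,000,000-triple target that would have to be repartitioned.
print(cheaper_join(4, [1_000_000], 2))  # ('broadcast', 8)
```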

Get a linear join plan of stars:

1) Compute all stars: S1, S2, …
2) Join 2 stars, say Si with Sj: select Si, Sj and a join algorithm
3) Continue with a 3rd star, say Sk, and so on …
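The three steps can be read as a greedy construction of a left-deep plan. The sketch below is one possible reading under stated assumptions, not the authors' optimizer. Join cost uses the transfer rules of the previous slides: Pjoin pays for each input not already partitioned on the join variable, and BrJoin pays m × the smaller input. The result-size estimate (minimum of the inputs) and the star sizes are invented, and `Rel`, `join_options` and `greedy_linear_plan` are hypothetical names.

```python
from dataclasses import dataclass
from itertools import combinations

@dataclass(frozen=True)
class Rel:
    name: str
    variables: frozenset
    size: int
    part: str  # variable on which the relation is hash-partitioned

def join_options(a, b, m):
    """Yield (cost, result) for each join algorithm; m = #computeNodes - 1."""
    shared = a.variables & b.variables
    if not shared:
        return  # no join variable: would be a cross product
    v = sorted(shared)[0]
    out_vars = a.variables | b.variables
    est = min(a.size, b.size)  # ASSUMPTION: naive result-size estimate
    # Pjoin: repartition each input not already partitioned on v.
    cost = sum(r.size for r in (a, b) if r.part != v)
    yield cost, Rel(f"({a.name} P {b.name})", out_vars, est, v)
    # BrJoin: broadcast the smaller input; the result keeps the target partitioning.
    small, big = sorted((a, b), key=lambda r: r.size)
    yield m * small.size, Rel(f"({big.name} B {small.name})", out_vars, est, big.part)

def greedy_linear_plan(stars, m):
    """Step 2: cheapest first pair; step 3: repeatedly add the cheapest star."""
    cands = [(c, res, (a, b)) for a, b in combinations(stars, 2)
             for c, res in join_options(a, b, m)]
    if not cands:
        raise ValueError("no pair of stars shares a variable")
    total, plan, used = min(cands, key=lambda x: x[0])
    remaining = [s for s in stars if s not in used]
    while remaining:
        cands = [(c, res, s) for s in remaining for c, res in join_options(plan, s, m)]
        if not cands:
            raise ValueError("remaining stars are not connected to the plan")
        c, plan, s = min(cands, key=lambda x: x[0])
        remaining.remove(s)
        total += c
    return plan.name, total

# Q9 with one single-pattern star per triple pattern; sizes are hypothetical.
s1 = Rel("S1", frozenset({"?x", "?y"}), 1000, "?x")  # ?x advisor ?y
s2 = Rel("S2", frozenset({"?y", "?z"}), 5000, "?y")  # ?y teacherOf ?z
s3 = Rel("S3", frozenset({"?z"}), 50, "?z")          # ?z type Course
print(greedy_linear_plan([s1, s2, s3], m=9))  # ('(S1 B (S2 B S3))', 900)
```

With a different size estimator or cost rule the greedy choice can change. The sketch shows only the control flow of steps 2 and 3.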


Method            Join plan        Query optimizer
SPARQL RDD        Pjoin
SPARQL DF v1.5    Pjoin, BrJoin1   poor
SPARQL SQL v1.5   Pjoin, BrJoin1   cross prod
Hybrid RDD        Pjoin, BrJoin+   cost based
Hybrid DF         Pjoin, BrJoin+   cost based

Also compared (marked supported / not supported): Spark interface, co-partitioning, merged selection, data compression. Our solutions: Hybrid RDD, Hybrid DF.


Dataset    Nb of triples   Description
DrugBank   500K            Real dataset
LUBM       1.3B            Synthetic data, Lehigh Univ.
WatDiv     1.1B            Synthetic data, Waterloo Univ.


Effect of dataset size: the gain is higher for larger datasets. No compression: 4.7 times faster; compressed data: 3 times faster

Questions?


Partitioned join:

1) Partition data on the join key: check the current data partitioning first
2) Distribute (i.e., shuffle) the partitions: data transfers
3) Compute the join for each key
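The three steps of the partitioned join map onto a short plain-Python simulation. This is a sketch of a generic shuffle hash join, not Spark's implementation. Step 1 is modelled by the `left_ok` / `right_ok` flags, so an input that is already hash-partitioned on its join key is not shuffled. The example joins ?P lab ?L with ?L at ?V over 3 hypothetical nodes. `node_of`, `place`, `shuffle` and `partitioned_join` are hypothetical names, and `crc32` stands in for Spark's partitioner.

```python
import zlib
from collections import defaultdict
from operator import itemgetter

def node_of(key, n_nodes):
    """Stable hash partitioner (crc32), a stand-in for Spark's."""
    return zlib.crc32(key.encode()) % n_nodes

def place(records, key, n_nodes):
    """Hash-partition records on key; used here for the initial layout."""
    parts = [[] for _ in range(n_nodes)]
    for r in records:
        parts[node_of(key(r), n_nodes)].append(r)
    return parts

def shuffle(parts, key):
    """Step 2: send every record to the node that owns hash(join key)."""
    out = [[] for _ in parts]
    moved = 0
    for src, part in enumerate(parts):
        for r in part:
            dst = node_of(key(r), len(parts))
            if dst != src:
                moved += 1
            out[dst].append(r)
    return out, moved

def partitioned_join(left, right, lkey, rkey, left_ok, right_ok):
    """left_ok / right_ok encode step 1: True if that input is already
    hash-partitioned on its join key, so it is not shuffled."""
    moved = 0
    if not left_ok:
        left, k = shuffle(left, lkey)
        moved += k
    if not right_ok:
        right, k = shuffle(right, rkey)
        moved += k
    result = []
    for lp, rp in zip(left, right):  # step 3: join locally on each node
        index = defaultdict(list)
        for r in rp:
            index[rkey(r)].append(r)
        result.append([(x, y) for x in lp for y in index.get(lkey(x), [])])
    return result, moved

lab = [("P2", "lab", "L3"), ("P4", "lab", "L1"), ("P1", "lab", "L1"), ("P3", "lab", "L2")]
at = [("L1", "at", "Poitiers"), ("L3", "at", "Paris"),
      ("L2", "at", "Aix"), ("L2", "at", "Toulon")]
subj, obj = itemgetter(0), itemgetter(2)
n = 3
# Subject partitioning: `at` is already placed on ?L, `lab` (placed on ?P) is not.
result, moved = partitioned_join(place(lab, subj, n), place(at, subj, n),
                                 lkey=obj, rkey=subj, left_ok=False, right_ok=True)
rows = sorted((x[0], x[2], y[2]) for part in result for x, y in part)
print(rows, moved)
```

The joined rows match the example result: P1 and P4 with Poitiers, P3 with Aix and Toulon, P2 with Paris. The number of moved triples depends on the hash.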


Broadcast join:

1) Broadcast the small dataset to every compute node: data transfers
2) Compute the join for each partition of the target
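The two broadcast-join steps can be simulated in plain Python. This is a sketch of a generic broadcast hash join, not Spark's operator. The layout reuses the broadcast-join example, with Part 1 = {P1 lab L1, P3 lab L2} and Part n = {P2 lab L3, P4 lab L1} taken as two nodes, and the `at` triples as the small dataset. `broadcast_join` is a hypothetical name.

```python
from collections import defaultdict

def broadcast_join(target_parts, small, tkey, skey):
    """target_parts: partitions of the larger dataset; small: the dataset to broadcast."""
    # Step 1: build one lookup table from the small dataset and ship it to every node.
    index = defaultdict(list)
    for r in small:
        index[skey(r)].append(r)
    transfers = len(small) * len(target_parts)  # small dataset * nb of compute nodes
    # Step 2: each node joins its own target partition; no target row moves.
    result = [[(t, r) for t in part for r in index.get(tkey(t), [])]
              for part in target_parts]
    return result, transfers

target = [[("P1", "lab", "L1"), ("P3", "lab", "L2")],
          [("P2", "lab", "L3"), ("P4", "lab", "L1")]]
small = [("L1", "at", "Poitiers"), ("L2", "at", "Aix"),
         ("L2", "at", "Toulon"), ("L3", "at", "Paris")]
result, transfers = broadcast_join(target, small, tkey=lambda t: t[2], skey=lambda s: s[0])
for i, part in enumerate(result, 1):
    print(i, [(t[0], t[2], r[2]) for t, r in part])
print("transfers:", transfers)  # 8 = 4 triples * 2 nodes
```

Each output partition stays on the node of its target rows, so the result preserves the target partitioning.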


Query: ?P lab ?L . ?L at ?V


Triple join: ?x memberOf ?y . ?x email ?z over a triple dataset (Part 1, ..., Part n)

Each partition selects the triples matching the patterns (e.g. t2) and hashes them on x; the join for h(x)=1 yields Result 1, ..., the join for h(x)=n yields Result n.


Authors (laboratory, university): Hubert Naacke (LIP6), Olivier Curé (LIGM, Paris Est Marne-la-Vallée), Bernd Amann (LIP6)


Triple dataset (Part 1, Part 2, ..., Part N) → data transfers → one global operation → Result

The global operation is not parallel enough: does it scale?

Data: P2 lab L3, P2 name Bob, P4 lab L1, P1 lab L1, P1 name Ali, P3 lab L2, P3 name Clo, L1 at Poitiers, L1 since 2000, L3 at Paris, L3 staff 200, L2 at Aix, L2 at Toulon, L2 partner L1

Chain: ?P lab ?L . ?L at ?V

Star: patterns lab, name and age on ?P
