Laplace Max-margin Markov Networks

8/6/2009 VLPR 2009 @ Beijing, China


Eric Xing

epxing@cs.cmu.edu Machine Learning Dept./Language Technology Inst./Computer Science Dept.

Carnegie Mellon University


Recent Advances in Learning SPARSE Structured I/O Models: models, algorithms, and applications


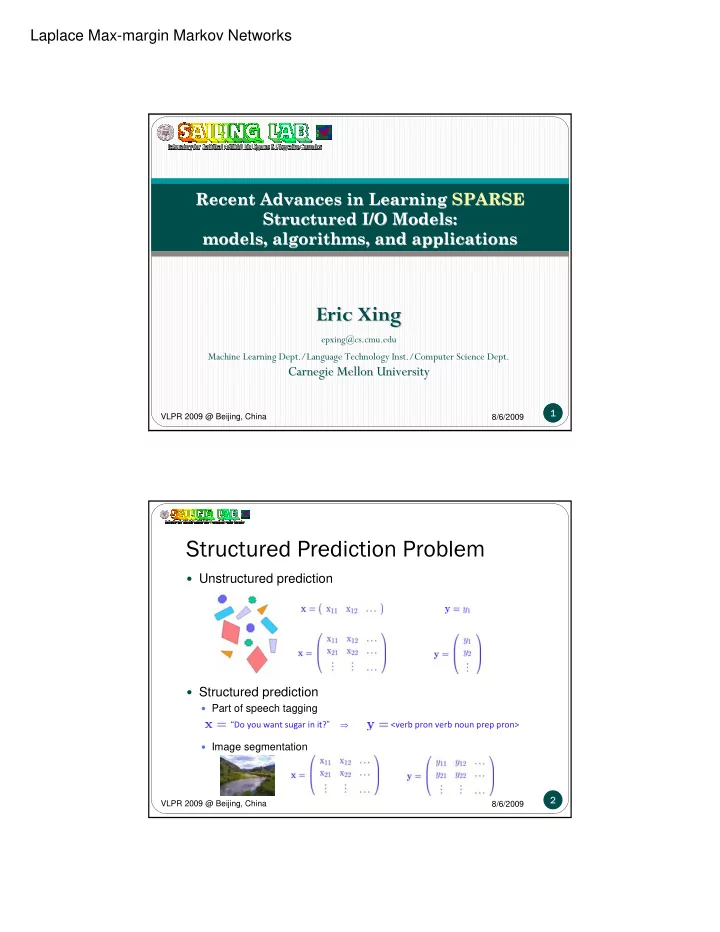

Structured Prediction Problem

“Do you want sugar in it?”

⇒

<verb pron verb noun prep pron>

Unstructured prediction vs. structured prediction

Part-of-speech tagging; image segmentation
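The part-of-speech example above can be sketched as a pair of aligned sequences. This is only an illustration of what makes the output "structured": the token split (with the question mark dropped) and the four-tag inventory are assumptions taken from the single sentence on the slide, not a real tag set.

```python
# Sentence and tag sequence from the slide; the trailing "?" is dropped
# so that the six words line up with the six tags.
tokens = ["Do", "you", "want", "sugar", "in", "it"]
tags = ["verb", "pron", "verb", "noun", "prep", "pron"]

# Assumption: the tag inventory is just the tags seen in this one example.
TAGSET = sorted(set(tags))

# A structured output assigns one tag to every token, chosen jointly.
labeled = list(zip(tokens, tags))

# An unstructured classifier picks one of len(TAGSET) labels per input;
# a structured predictor picks one of len(TAGSET) ** len(tokens) sequences.
num_labelings = len(TAGSET) ** len(tokens)

for word, tag in labeled:
    print(f"{word:>6} -> {tag}")
print("candidate tag sequences:", num_labelings)
```

Even with this toy inventory the number of candidate outputs grows exponentially with sentence length, which is why structured models score whole sequences rather than enumerating them.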