SLIDE 1 19.6.2012 1 ailab.ijs.si

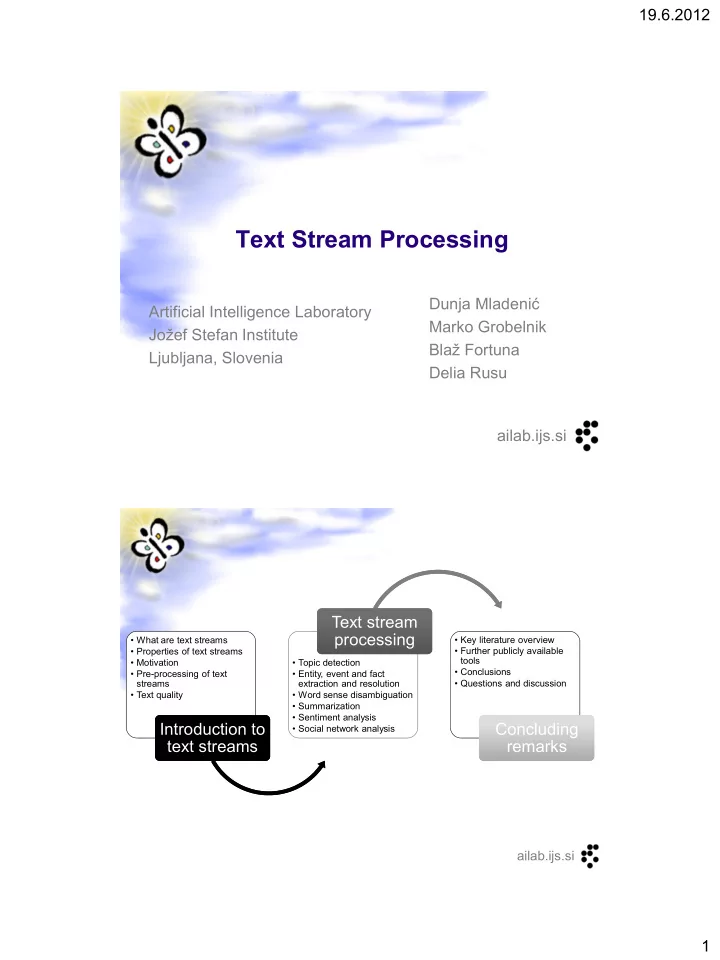

Text Stream Processing

Dunja Mladenić Marko Grobelnik Blaž Fortuna Delia Rusu Artificial Intelligence Laboratory Jožef Stefan Institute Ljubljana, Slovenia


Introduction to text streams

- What are text streams
- Properties of text streams
- Motivation
- Pre-processing of text streams

Text stream processing

- Topic detection
- Entity, event and fact extraction and resolution
- Word sense disambiguation
- Summarization
- Sentiment analysis
- Social network analysis

Concluding remarks

- Key literature overview
- Further publicly available tools
- Conclusions
- Questions and discussion


Introduction to Text Streams

- What are text streams
- Properties of text streams
- Motivation
- Pre-processing of text streams
- Text quality

What are data streams

- Continuously arriving data, usually in real time
- Dealing with streams can often be easy, but it gets hard when we have an intensive data stream and complex operations on the data are required!
- In such situations, usually:
  - the volume of data is too big to be stored
  - the data can be scanned thoroughly only once
  - the data is highly non-stationary (its properties change through time), so approximation and adaptation are key to success
- Therefore, a typical solution is not to store the observed data explicitly, but rather in an aggregate form that allows execution of the required operations
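As an illustration of keeping an aggregate instead of the raw stream, here is a minimal sketch that maintains exponentially decayed term counts. The class name `DecayedTermCounts`, the `half_life` parameter and the decay scheme are assumptions chosen for illustration; the slides do not prescribe a particular aggregate.

```python
import math
from collections import defaultdict


class DecayedTermCounts:
    """Keeps exponentially decayed term counts instead of the documents themselves."""

    def __init__(self, half_life):
        # After `half_life` time units an old observation counts half as much.
        self.decay = math.log(2) / half_life
        self.counts = defaultdict(float)
        self.last_time = None

    def update(self, timestamp, tokens):
        # Timestamps are assumed to arrive in non-decreasing order.
        if self.last_time is not None:
            factor = math.exp(-self.decay * (timestamp - self.last_time))
            for term in self.counts:
                self.counts[term] *= factor
        self.last_time = timestamp
        for term in tokens:
            self.counts[term] += 1.0

    def top(self, k):
        # Terms with the highest decayed count, i.e. the currently "hot" terms.
        return sorted(self.counts, key=self.counts.get, reverse=True)[:k]


agg = DecayedTermCounts(half_life=10.0)
agg.update(0, ["election", "vote"])
agg.update(10, ["football"])
print(agg.top(1))
```

Each document is scanned once and then discarded; only the per-term aggregate survives, and older evidence fades so the summary can follow a non-stationary stream.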


Stream processing

Who works with real-time data processing?

- “Stream Mining” (a subfield of “Data Mining”) deals with mining data streams in different scenarios, in relation to machine learning and databases: http://en.wikipedia.org/wiki/Data_stream_mining
- “Complex Event Processing” is a research area concerned with discovering complex events from simple ones by inference, statistics, etc.: http://en.wikipedia.org/wiki/Complex_Event_Processing

Motivation for stream processing

Why would one need (near) real-time information processing?

- Because Time and Reaction Speed correlate with many target quantities, e.g.:
  - on the stock exchange, with Earnings
  - in controlling, with Quality of Service
  - in fraud detection, with Safety, etc.
- Generally, we can say: Reaction Speed == Value
- If our systems react fast, we create new value!


What are text streams

- Continuous, often rapid, ordered sequence of texts
- Text information arriving continuously over time in the form of a data stream
- News and similar regular reports: news articles, online comments on news, online traffic reports, internal company reports, web searches, scientific papers, patents
- Social media: discussion forums (e.g., Twitter, Facebook), short messages on phones or computers, chat, transcripts of phone conversations, blogs, e-mails

Demo: http://newsfeed.ijs.si


NewsFeed


Properties of text streams

- Produced at a high rate over time
- Can be read only once or a small number of times (due to the rate and/or overall volume)
- Challenging for computing and storage capabilities: efficiency and scalability of the approaches
- Strong temporal dimension
- Modularity over time and sources (topic, sentiment, …)

Example task: evolution of research topics and communities over time

- Based on time-stamped research publication titles and authors
- Observe which topics/communities shrank, which emerged, which split over time, when in time the turning points were, …
- TimeFall: monitoring dynamic, temporally evolving graphs and streams based on Minimum Description Length
  - find good cut-points in time and stitch together the communities: a good cut-point leads to a shorter description length
  - fast and efficient incremental algorithm, scales to large datasets, easily parallelizable


Example task: evolution of research topics and communities over time

- Given: n time-stamped events (e.g., papers), each related to several of m items (e.g., title words and/or author names)
- Find cluster patterns and summarize their evolution in time

[Figure: papers × words matrix with time stamps (1990 to 1992), reordered into word clusters over time]

TimeFall on 12 million medical publications from PubMed MEDLINE over 40 years: scales linearly with the product of the initial time point blocks and the number of non-zeros in the matrix

- J. Ferlez, C. Faloutsos, J. Leskovec, D. Mladenic, M. Grobelnik. Monitoring Network Evolution using MDL. International Conference on Data Engineering (ICDE 2008).


Pre-processing text stream

- Basic text pre-processing, including removing stop-words and applying stemming
- Representing text for internal processing
  - Splitting into units (e.g., sentences or words)
  - Mapping to an internal representation (e.g., feature vectors of words, vectors of ontology concepts)
- Pre-processing for aligning/merging text streams
  - Time-wise alignment of multiple text streams: coordinated text streams (appearing over the same time window, e.g. news)
  - Content alignment, possibly over different languages

Example

The city hosts a great number of religious buildings, many of them dating back to medieval times.

Stop Words


Example

city hosts great number religious buildings, many them dating back medieval times.

Stemming: host, religi, build, date, time, mediev

Example

city host great number religi build, many them date back mediev time.

Splitting into units of words

(city, host, great, number, religi, build, many, them, date, back, mediev, time) Feature vector of words
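The three steps of the example (stop-word removal, stemming, splitting into words) can be sketched as below. The stop-word list is inferred from the words dropped in the example, and `TOY_STEMS` is a hypothetical lookup table that only covers this sentence; it stands in for a real stemmer such as Porter's and is not a general stemming method.

```python
import re
from collections import Counter

# Assumption: exactly the words that disappear in the slide example.
STOP_WORDS = {"the", "a", "of", "to"}

# Hypothetical lookup standing in for a real stemming algorithm.
TOY_STEMS = {
    "hosts": "host", "religious": "religi", "buildings": "build",
    "dating": "date", "medieval": "mediev", "times": "time",
}


def preprocess(text):
    """Lowercase, split into words, drop stop words, then stem."""
    tokens = re.findall(r"[a-z]+", text.lower())
    kept = [t for t in tokens if t not in STOP_WORDS]
    return [TOY_STEMS.get(t, t) for t in kept]


def feature_vector(tokens):
    """Bag-of-words representation: word -> number of occurrences."""
    return Counter(tokens)


sentence = ("The city hosts a great number of religious buildings, "
            "many of them dating back to medieval times.")
tokens = preprocess(sentence)
print(tokens)
print(feature_vector(tokens))
```

In a streaming setting the same function would be applied to each arriving document, so it has to be cheap and must not depend on statistics of the whole collection.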


Text Quality

Factors:

- Vocabulary use
- Grammatical and fluent sentences
- Structure and coherence
- Non-redundant information
- Referential clarity, e.g. proper usage of pronouns

Models of text quality

- Global coherence: overall document organization
- Local coherence: adjacent sentences

Language model based approaches


Text Stream Processing

[Pipeline diagram: WEB, Web Crawler, Text Pre-Processing, then Topic Detection, Information Extraction, Word Sense Disambiguation, Summarization, Sentiment Analysis and Social Network Analysis, leading to Text Stream Processing Results]

Topic Detection

Religion Art


Topic Detection

Supervised techniques

- The data is labeled with predefined topics
- Machine learning algorithms are used to predict the labels of unseen data

Unsupervised techniques

- Identify patterns and structure within the dataset
- Clustering: grouping data sharing similar topics
- Statistical methods: probabilistic topic modeling


Probabilistic Topic Modeling

- Topic: a probability distribution over words in a fixed vocabulary
- Given an input corpus containing a number of documents, each having a sequence of words, the goal is to find useful sets of topics


Latent Dirichlet Allocation

- D. Blei, A. Ng, and M. Jordan. Latent Dirichlet allocation. Journal of Machine Learning Research, 3:993–1022, January 2003.

Documents can have multiple topics

Religion Art


LDA Generative Process

- A topic is a distribution over words
- A document is a mixture of topics (at the level of the corpus)
- Each word is drawn from one of the corpus-level topics

For each document, generate the words:

1. Randomly choose a distribution over the topics
2. For each word in the document:
   a) Randomly choose a topic from the distribution over topics (step 1)
   b) Randomly choose a word from the corresponding distribution
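The generative process above can be sketched with the standard library alone. This is an illustrative sampler, not an inference algorithm: the topic names, the grouping of words into topics and the Dirichlet parameter `alpha` are assumptions, and the word weights are the truncated values shown on the slides, used here as relative (unnormalized) weights.

```python
import random

# Assumed toy topics; weights are relative, not full distributions.
TOPICS = {
    "religion": {"religious": 0.03, "monastery": 0.01, "church": 0.01},
    "art": {"art": 0.02, "painter": 0.02, "sculpture": 0.01},
    "nature": {"park": 0.01, "garden": 0.01},
}


def sample_dirichlet(alpha, rng):
    """Draw from a Dirichlet by normalizing independent Gamma draws."""
    draws = [rng.gammavariate(a, 1.0) for a in alpha]
    total = sum(draws)
    return [d / total for d in draws]


def generate_document(topics, alpha, n_words, rng):
    names = list(topics)
    # Step 1: choose this document's distribution over topics.
    theta = sample_dirichlet(alpha, rng)
    words = []
    for _ in range(n_words):
        # Step 2a: choose a topic from the document's topic distribution.
        topic = rng.choices(names, weights=theta)[0]
        # Step 2b: choose a word from that topic's word distribution.
        vocab = list(topics[topic])
        weights = [topics[topic][w] for w in vocab]
        words.append(rng.choices(vocab, weights=weights)[0])
    return theta, words


rng = random.Random(0)
theta, words = generate_document(TOPICS, [0.5, 0.5, 0.5], 10, rng)
print(theta)
print(words)
```

Fitting LDA runs this story in reverse: given only the words, it estimates the topics and the per-document mixtures that most plausibly generated them.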


LDA Generative Process

Assume a number of topics for the document collection (Craiova guide), e.g.:

- religious 0.03, monastery 0.01, church 0.01
- art 0.02, painter 0.02, sculpture 0.01
- park 0.01, garden 0.01

Choose a distribution over the topics.

For each word:

- choose a topic assignment
- choose the word from the topic

Topic Models - Extensions

Hierarchical Topic Models

- D. Blei, T. Griffiths, and M. Jordan. The nested Chinese restaurant process and Bayesian nonparametric inference of topic hierarchies. Journal of the ACM, 57(2):1–30, 2010.
- Q. Ho, J. Eisenstein, E. P. Xing. Document Hierarchies from Text and Links. WWW 2012.

Dynamic Topic Models

- D. Blei and J. Lafferty. Dynamic topic models. In Proceedings of the 23rd International Conference on Machine Learning, 2006.


Topic Detection in Streams

- Unsupervised methods
  - Simpler approaches, e.g. clustering
  - Probabilistic topic models
- Challenging because of the amount and dynamics of the data
- E.g. online inference for LDA: fits a topic model to random Wikipedia articles

Topic Detection Tools

Available implementations

LDA, HLDA, …

http://www.cs.princeton.edu/~blei/topicmodeling.html

Mallet

Toolkit for statistical NLP http://mallet.cs.umass.edu/


Clustering on text streams

Grouping similar documents while adjusting to changes in the topics over time

- Clusters are generated as the data arrives and are stored in a tree
- Examples are added by adjusting the whole path from the root to the leaf node with the new example: adding, removing, splitting and merging clusters
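To make the idea of clustering documents as they arrive concrete, here is a deliberately simplified sketch: a flat, single-pass clusterer that assigns each new document to the most similar centroid or opens a new cluster. It is not the tree-based algorithm described above (there is no hierarchy, splitting or merging); the class name, the cosine measure and the `threshold` value are assumptions for illustration.

```python
import math
from collections import Counter


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(count * b.get(term, 0) for term, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class OnlineClusterer:
    def __init__(self, threshold=0.3):
        self.threshold = threshold
        self.centroids = []  # one summed word-count vector per cluster

    def add(self, tokens):
        """Assign a document to a cluster and return the cluster index."""
        doc = Counter(tokens)
        best, best_sim = None, 0.0
        for i, centroid in enumerate(self.centroids):
            sim = cosine(doc, centroid)
            if sim > best_sim:
                best, best_sim = i, sim
        if best is not None and best_sim >= self.threshold:
            # Update the aggregate; the document itself is not stored.
            self.centroids[best].update(doc)
            return best
        self.centroids.append(doc)
        return len(self.centroids) - 1


clusterer = OnlineClusterer(threshold=0.3)
print(clusterer.add(["church", "monastery", "religious"]))
print(clusterer.add(["painter", "sculpture", "art"]))
print(clusterer.add(["church", "religious", "building"]))
```

Only the centroids are kept, so memory grows with the number of clusters rather than with the number of documents seen.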


Clustering on Reuters V1 news (colors showing predefined topics)

- B. Novak, Algorithm for identifying topics in text streams, 2008


Topic Detection - DEMOS

A 100-topic browser of the dynamic topic model fit to Science (1882-2001)

http://topics.cs.princeton.edu/Science/

Browsing search results

http://searchpoint.ijs.si/


100-topic browser, Science (1882-2001)

[Screenshot: topic evolution along a timeline marked 1890, 1940, 2000]


Search Point


Entity Extraction

- Subtask of information extraction
- Identifying elements in text which belong to a predefined group of things:
  - Names of people, locations, organizations (most common)
  - Time expressions, quantities, money amounts, percentages
  - Gene and protein names
  - Etc.


Entity Extraction


Entity Extraction Approaches

Lists of entities (gazetteers) and grammar rules

- e.g. GATE (General Architecture for Text Engineering)
- H. Cunningham, et al. Text Processing with GATE (Version 6). University of Sheffield Department of Computer Science. 15 April 2011.

Statistical models

- e.g. Stanford NER: linear chain Conditional Random Field (CRF) sequence models
- J. R. Finkel, T. Grenager, and C. Manning. Incorporating Non-local Information into Information Extraction Systems by Gibbs Sampling. In ACL 2005, pp. 363-370.
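The gazetteer-plus-rules approach can be illustrated with a toy extractor: a small dictionary of known names and one regular-expression rule for percentages. This is only a sketch of the idea and does not use or mimic the GATE API; the gazetteer entries, labels and the `extract_entities` function are made up for illustration.

```python
import re

# Hypothetical gazetteer: known entity strings and their types.
GAZETTEER = {
    "Jožef Stefan Institute": "ORGANIZATION",
    "Ljubljana": "LOCATION",
    "Craiova": "LOCATION",
}

# One hand-written "grammar rule": a number followed by a percent sign.
PERCENT = re.compile(r"\b\d+(?:\.\d+)?\s?%")


def extract_entities(text):
    """Return (entity text, label) pairs in order of appearance."""
    found = []
    for name, label in GAZETTEER.items():
        for match in re.finditer(re.escape(name), text):
            found.append((match.start(), match.group(), label))
    for match in PERCENT.finditer(text):
        found.append((match.start(), match.group(), "PERCENT"))
    return [(entity, label) for _, entity, label in sorted(found)]


print(extract_entities(
    "The Jožef Stefan Institute in Ljubljana grew 12% last year."))
```

Such rules are precise but only find what the lists and patterns anticipate, which is the main motivation for the statistical sequence models mentioned above.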


Collective Entity Resolution

Entity resolution: discover and map entities to corresponding references (e.g., from a database, knowledge base, etc.).

Approaches:

- Pairwise similarity with attributes of references
- Relational clustering using both attribute and relational information
  - I. Bhattacharya, L. Getoor. Collective entity resolution in relational data. ACM Transactions on Knowledge Discovery from Data (TKDD), 2007.
- Topic models for the context of every word in a knowledge base
  - P. Sen. Collective Context-Aware Topic Models for Entity Disambiguation. WWW 2012.


Entity Resolution to Linked Data

- Enhance named entity classification using Linked Data features
  - Y. Ni, L. Zhang, Z. Qiu, C. Wang. Enhancing the Open-Domain Classification of Named Entity using Linked Open Data. ISWC, 2010.
- Type knowledge base from LOD: (name string, type) pairs
  - E.g. from the triplet (dbpedia:Craiova, rdf:type, Place) -> (Craiova, Place)
- Uses WordNet as an intermediate taxonomy to compute the similarity between the LOD type and the target type


Entity Resolution to Linked Data

Finding all possible forms under which an entity can occur in text

- Resource descriptions: most useful are rdfs:label and foaf:name
- Redirect relationship
- Type inference via owl:sameAs:
  - Given (entity1, type1) and (entity2, ?)
  - entity1 has URI1, entity2 has URI2
  - URI1 owl:sameAs URI2
  - Conclude: (entity2, type1)
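The owl:sameAs inference above amounts to propagating a known type across groups of equivalent URIs. A minimal sketch, assuming the sameAs links are given as plain pairs of URI strings and each group has at most one relevant type; the function name and the `ex:` URIs are hypothetical.

```python
def propagate_types(known_types, same_as_pairs):
    """Give every URI the type known for any URI it is owl:sameAs-linked to."""
    parent = {}

    def find(uri):
        # Union-find lookup with path halving.
        parent.setdefault(uri, uri)
        while parent[uri] != uri:
            parent[uri] = parent[parent[uri]]
            uri = parent[uri]
        return uri

    # Merge the equivalence classes induced by the sameAs links.
    for left, right in same_as_pairs:
        parent[find(left)] = find(right)

    # Record one known type per equivalence class.
    group_type = {}
    for uri, entity_type in known_types.items():
        group_type.setdefault(find(uri), entity_type)

    # Every URI in a typed class inherits that type.
    return {uri: group_type[find(uri)]
            for uri in list(parent) if find(uri) in group_type}


types = propagate_types(
    known_types={"ex:URI1": "Place"},
    same_as_pairs=[("ex:URI1", "ex:URI2"), ("ex:URI3", "ex:URI4")],
)
print(types)
```

Because sameAs is treated as an equivalence relation, chains such as URI1 sameAs URI2 sameAs URI5 are handled without extra work.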


Relation Extraction

- Identifying relationships between entities (and more generally phrases)
- Traditional relation extraction
  - The target relation is given, together with corresponding extraction patterns for the relation
  - A specific corpus
- Open relation extraction (and, more generally, open information extraction)
  - Diverse relations, not previously fixed
  - Corpus: the Web
- M. Banko, O. Etzioni. The Tradeoffs Between Open and Traditional Relation Extraction. ACL, 2008.


Identifying Relations for Open IE

3-step method:

1. Label: automatically label sentences with extractions (arg1, relation phrase, arg2)
2. Learn: learn a relation phrase extractor (e.g., using CRF)
3. Extract: given a sentence, identify (arg1, arg2) and the relation phrase (based on the learned relation extractor)

Examples

- TextRunner: M. Banko, M. Cafarella, S. Soderland, M. Broadhead, O. Etzioni. Open Information Extraction from the Web. IJCAI 2007.
- WOE: F. Wu, D.S. Weld. Open Information Extraction using Wikipedia. ACL 2010.

Identifying Relations for Open IE

REVERB

- Input: POS-tagged and NP-chunked sentence
- Identify relation phrases using syntactic and lexical constraints
- Find a pair of NP arguments for each relation phrase and assign a confidence score (logistic regression classifier)
- Output: (x, y, z) extraction triplets
- A. Fader, S. Soderland, O. Etzioni. Identifying Relations for Open Information Extraction. EMNLP 2011.
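A syntactic constraint on relation phrases can be expressed as a regular expression over POS tags. The sketch below is a simplified approximation and not the ReVerb implementation: it assumes Penn Treebank tags, maps them to coarse symbols (V = verb, W = noun/adjective/adverb/pronoun/determiner, P = preposition/particle/infinitive marker), and accepts phrases of the form verb(s), optionally followed by any W words and a closing P. The lexical constraint and the argument-finding step are omitted.

```python
import re


def tag_symbol(pos):
    """Collapse a Penn Treebank tag into one coarse symbol."""
    if pos.startswith("VB"):
        return "V"
    if pos in {"IN", "RP", "TO"}:
        return "P"
    if pos.startswith(("NN", "JJ", "RB", "PRP")) or pos == "DT":
        return "W"
    return "O"


# Simplified pattern: verbs, optionally followed by words and a preposition.
RELATION = re.compile(r"V+(?:W*P)?")


def relation_phrases(tagged):
    """tagged: list of (word, POS tag) pairs; returns candidate phrases."""
    symbols = "".join(tag_symbol(pos) for _, pos in tagged)
    return [" ".join(word for word, _ in tagged[m.start():m.end()])
            for m in RELATION.finditer(symbols)]


sentence = [("Faust", "NNP"), ("made", "VBD"), ("a", "DT"),
            ("deal", "NN"), ("with", "IN"), ("the", "DT"), ("devil", "NN")]
print(relation_phrases(sentence))
```

Matching the longest phrase that satisfies the pattern keeps "made a deal with" together instead of extracting "made" with "a deal" as an argument.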


Identifying Relations for Open IE

Key points:

- Relation phrases are identified holistically
- Potential phrases are filtered based on statistics (lexical constraints)
- Relation first, as opposed to arguments first: the relation phrase is not confused for arguments (e.g. “made a deal with”)

DEMO

http://openie.cs.washington.edu/#


REVERB


Never Ending Language Learning

NELL – Never Ending Language Learning

Addressed tasks

- Reading task: read the Web and extract a knowledge base of structured facts and knowledge.
- Learning task: improved (and updated) reading, i.e. extract past information more accurately
- A. Carlson, J. Betteridge, B. Kisiel, B. Settles, E.R. Hruschka Jr. and T.M. Mitchell. Toward an Architecture for Never-Ending Language Learning. AAAI, 2010.

Never Ending Language Learning


Never Ending Language Learning

Coupled Pattern Learner (CPL)

Extracts instances of categories and relations (using contextual patterns)

Coupled SEAL (CSEAL)

Queries the Web with beliefs from each category or relation, mines lists and tables to extract new instances

Coupled Morphological Classifier (CMC)

One regression model per category – classifies noun phrases

Rule Learner (RL)

Infer new relation instances

DEMO

http://rtw.ml.cmu.edu/rtw/


NELL


Domain and Summary Templates

Domain templates

- Event-centric: the focus is on events described with verbs
- E. Filatova, V. Hatzivassiloglou, K. McKeown. Automatic creation of domain templates. In Proceedings of COLING/ACL 2006.

Summary templates

- Entity-centric: the focus is on summarizing entity categories
- P. Li, J. Jiang, Y. Wang. Generating templates of entity summaries with an entity-aspect model and pattern mining. ACL 2010.


Domain Templates

- A domain is a set of events of a particular type
  - E.g. presidential elections, football championships
- Domains can be instantiated: instances of events of a particular type
  - E.g. Euro Championship 2012
- Different levels of granularity
- Hierarchical structure for domains
- Template: a set of attribute-value pairs
  - The attributes specify functional roles characteristic for the domain events


Domain Templates

- Use a corpus describing instances of events within a domain and learn the domain templates (general characteristics of the domain)
- The verbs are used as a starting point: estimate the importance of each verb given the domain
- The sentences containing the top X verbs are parsed
- The most frequent subtrees (FREQuent Tree miner) are kept
- The named entities are substituted with more generic constructs, e.g. POS tags
- The frequent subtrees are merged together

Domain Templates

E.g. terrorist attack domain

Top verbs: killed, told, found, injured, reported, happened, blamed, arrested, died, linked

Frequent subtrees:

(VP(ADVP(NP))(VBD killed)(NP(CD 34)(NNS people)))

(VP(ADVP)(VBD killed)(NP(CD 34)(NNS people)))

After substituting generic constructs:

(VBD killed)(NP(NUMBER)(NNS people))
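The substitution step in this example, replacing a concrete value with a generic construct, can be sketched as a rewrite over the bracketed tree string. The mapping `GENERIC` contains only the CD to NUMBER case shown above; any further entries would be assumptions, and a real system would operate on parse tree objects rather than strings.

```python
import re

# Leaf tags whose concrete value is replaced by a generic placeholder.
# Only the CD -> NUMBER case comes from the example above.
GENERIC = {"CD": "NUMBER"}

# A leaf node looks like "(TAG value)" with no nested brackets.
LEAF = re.compile(r"\((\w+) [^()]*\)")


def generalize(subtree):
    """Replace leaves with a generic construct where a mapping exists."""
    def replace(match):
        tag = match.group(1)
        return "(%s)" % GENERIC[tag] if tag in GENERIC else match.group(0)
    return LEAF.sub(replace, subtree)


print(generalize("(VBD killed)(NP(CD 34)(NNS people))"))
```

After this step, subtrees that differ only in the concrete number become identical, so they are counted together and can be merged into one template.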


Summary Templates

- Starting point: a collection of entity summaries for a given entity category
- Goal: to obtain a summary template for the entity category

E.g. the physicist category:

- ENTITY received his phd from ? university
- ENTITY studied ? under ?
- ENTITY earned his ? in physics from university of ?
- ENTITY was awarded the medal in ?
- ENTITY won the ? award
- ENTITY received the Nobel prize in physics in ?

Summary Templates

Identify subtopics (aspects) of the summary collection

- Using LDA (see Topic Detection)
- Each word is labeled as one of: a stop word, a background word, a document word, an aspect word


Summary Templates

Sentence patterns are generated for each aspect

frequent subtree pattern mining

Fixed structure of a sentence pattern

Aspect words, background words, stop words

Template slots – vary between documents

Document words


Summary Templates

Sentence pattern generation

1. Locate subject entities (using heuristics), e.g. pronouns in a biography
2. Generate parse trees (using Stanford Parser) and label stop, background, aspect, document and entity words given by the topic model
3. Mine frequent subtree patterns (using FREQT)
4. Prune patterns without entities or aspect words
5. Convert subtree patterns to sentence patterns (find the sentences that generated the pattern)


Word sense disambiguation

Identifying the meaning of words in context

Supervised WSD

- Words labeled with their senses are required
- Classification task

Unsupervised WSD

- Known as word sense induction
- Clustering task

Knowledge-based WSD

- Relies on knowledge resources: WordNet, Wikipedia, OpenCyc, etc.

- R. Navigli. Word sense disambiguation: A survey. ACM Computing Surveys, 41(2), 2009.


Word Sense Disambiguation

Ponzetto, S.P. and Navigli, R. Knowledge-rich Word Sense Disambiguation Rivaling Supervised

- Extend WordNet with Wikipedia relations
- Apply simple knowledge-based approaches
- Performance was comparable to state-of-the-art supervised approaches
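One classic example of a simple knowledge-based approach is gloss overlap (simplified Lesk): pick the sense whose dictionary gloss shares the most words with the context. The sketch below is only an illustration of that idea and is not claimed to be the method evaluated in the cited work; the tiny sense inventory `SENSES`, its glosses and the stop list are invented stand-ins for a resource such as WordNet.

```python
# Hypothetical two-sense inventory with made-up glosses.
SENSES = {
    "bank": {
        "bank#finance": "institution that accepts deposits and lends money",
        "bank#river": "sloping land beside a river or lake",
    }
}

STOP = {"the", "of", "a", "and", "or", "on", "to", "that"}


def content_words(text):
    return set(text.lower().split()) - STOP


def simplified_lesk(word, context):
    """Return the sense whose gloss overlaps most with the context words."""
    context_words = content_words(context)
    best_sense, best_overlap = None, -1
    for sense, gloss in SENSES[word].items():
        overlap = len(context_words & content_words(gloss))
        if overlap > best_overlap:
            best_sense, best_overlap = sense, overlap
    return best_sense


print(simplified_lesk("bank", "she sat on the bank of the river"))
print(simplified_lesk("bank", "the bank lends money to customers"))
```

The quality of such a method depends almost entirely on how rich the glosses and relations are, which is why enriching the knowledge base can make a simple algorithm competitive.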


WSD Evaluation

- Evaluation workshops: Senseval, SemEval, …
- WSD evaluation topics (SemEval 2010)
  - Cross-lingual WSD
  - WSD on a specific domain
  - Word sense induction
  - Disambiguating sentiment-ambiguous adjectives
- Evaluation topics related to WSD (SemEval 2012)
  - Semantic textual similarity: similarity between two sentences
  - Relational similarity: between pairs of words

Summarization

Extractive

Identifying relevant sentences that belong to the summary

Abstractive

- Identifying/paraphrasing sections of the document to be summarized
- E.g. summarization as phrase extraction: K. Woodsend, M. Lapata. Automatic Generation of Story
  - Joint content selection and compression model
  - ILP model to determine phrases that form the highlights
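For the extractive case, a very small baseline is to score each sentence by the average corpus frequency of its words and keep the top-scoring ones. This is a generic frequency heuristic chosen for illustration, not the ILP model cited above; the stop list and the function name are assumptions.

```python
import re
from collections import Counter

STOP = {"the", "to", "is", "a", "of"}


def content_words(sentence):
    return [w for w in re.findall(r"[a-z]+", sentence.lower())
            if w not in STOP]


def summarize(text, n_sentences=1):
    """Return the n highest-scoring sentences, in their original order."""
    sentences = [s.strip()
                 for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    freq = Counter(w for s in sentences for w in content_words(s))

    def score(sentence):
        words = content_words(sentence)
        return sum(freq[w] for w in words) / len(words) if words else 0.0

    ranked = sorted(range(len(sentences)),
                    key=lambda i: score(sentences[i]), reverse=True)
    return [sentences[i] for i in sorted(ranked[:n_sentences])]


text = ("The city hosts many churches. "
        "The churches date back to medieval times. "
        "Parking is scarce.")
print(summarize(text, n_sentences=2))
```

Extractive output is always made of original sentences, so it stays grammatical but cannot compress or merge information across sentences.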


Summarization Evaluation

Several evaluation workshops

- Document Understanding Conferences (DUC), Text Analysis Conferences (TAC)
- Metrics: ROUGE (n-gram based)

Linguistic quality

- Grammaticality, non-redundancy, referential clarity, focus, structure and coherence
- E. Pitler, A. Louis, A. Nenkova. Automatic Evaluation of Linguistic Quality in Multi-Document Summarization. ACL 2010.
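The n-gram idea behind ROUGE can be shown with a minimal recall computation: the fraction of reference n-grams that also occur in the candidate summary, with clipped counts. This sketch assumes a single reference and whitespace tokenization; the official toolkit adds stemming, multiple references and further variants, none of which are reproduced here.

```python
from collections import Counter


def ngrams(tokens, n):
    """Multiset of n-grams in a token list."""
    return Counter(tuple(tokens[i:i + n])
                   for i in range(len(tokens) - n + 1))


def rouge_n_recall(candidate, reference, n=1):
    """Clipped n-gram overlap divided by the number of reference n-grams."""
    cand = ngrams(candidate.lower().split(), n)
    ref = ngrams(reference.lower().split(), n)
    total = sum(ref.values())
    if total == 0:
        return 0.0
    overlap = sum(min(count, cand[gram]) for gram, count in ref.items())
    return overlap / total


reference = "the city hosts many churches"
candidate = "the city has many churches"
print(rouge_n_recall(candidate, reference, n=1))
print(rouge_n_recall(candidate, reference, n=2))
```

Because it only counts surface overlap, a score like this says little about grammaticality or coherence, which is why linguistic quality is assessed separately.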


Sentiment analysis

- Broad sense: sentiment analysis ~ opinion mining
- “computational treatment of opinion, sentiment, and subjectivity in text” (B. Pang, L. Lee, 2008)

Surveys, book chapters:

- B. Pang, L. Lee. Opinion mining and sentiment analysis. Foundations and Trends in Information Retrieval 2(1-2), pp. 1–135, 2008.
- B. Liu. Sentiment Analysis and Subjectivity. Handbook of Natural Language Processing, Second Edition (editors: N. Indurkhya and F. J. Damerau), 2010.
- B. Liu. Web Data Mining - Exploring Hyperlinks, Contents and Usage Data, Ch. 11: Opinion Mining, Second Edition, Springer, 2011.


Interactive Approach to Sentiment Analysis

http://aidemo.ijs.si/render/index.html

Interface elements:

- Model and task selection
- Query
- Model-based filters
- Query result, grouped by predicted label
- Examples retrieved by uncertainty or class-margin sampling

- T. Stajner and I. Novalija. Managing Diversity through Social Media, ESWC 2012 Workshop on Common value management.

Architecture

- Indexed documents
- Active learning for modeling topic, sentiment (diversity analysis)
- Interactive user interface

Example: stream of social media posts relevant to brand management
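Uncertainty sampling, one of the retrieval strategies used in such an active learning loop, can be sketched in a few lines: ask the user to label the examples on which the current model is least sure. The input format (a dict from document id to the predicted probability of the positive class) and the function name are assumptions; how the demo system implements this is not stated in the slides.

```python
def uncertainty_sample(probabilities, k):
    """Return the k document ids whose predicted probability is closest to 0.5.

    For a binary classifier this coincides with margin sampling, because
    the margin |p - (1 - p)| equals 2 * |p - 0.5|.
    """
    ranked = sorted(probabilities,
                    key=lambda doc: abs(probabilities[doc] - 0.5))
    return ranked[:k]


predicted = {"post-a": 0.95, "post-b": 0.52, "post-c": 0.10, "post-d": 0.40}
print(uncertainty_sample(predicted, k=2))
```

Labeling the borderline posts first tends to move the decision boundary more than labeling posts the model already classifies confidently, which matters when a user can only label a handful of items from a fast stream.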


Social Network Analysis

- Modeling social relationships
- Network theory concepts
  - Nodes: individuals within the network
  - Edges: relationships between individuals
- Mario Karlovčec, Dunja Mladenić, Marko Grobelnik, Mitja Jermol. Visualizations of Business and Research Collaboration in Slovenia, Proc. of the Information Technology Interfaces 2012.

Influence and Passivity in Social Media

- The majority of Twitter users are passive information consumers: they do not forward the content to the network
- Influence and passivity are based on information forwarding activity
- Passivity
  - User retweeting rate and audience retweeting rate
  - How difficult it is for other users to influence the user
- Algorithm ~ HITS
  - Passivity score ~ authority score
    - Most passive: robot users, who follow many users but retweet a small percentage
  - Influence score ~ hub score
    - Most influential: news services, which post many links forwarded by other users
- D.M. Romero, W. Galuba, S. Asur, and B.A. Huberman. Influence and Passivity in Social Media. ECML PKDD, 2011.
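Since the algorithm is described as analogous to HITS, a plain HITS iteration is sketched below to show the mutual reinforcement between the two scores. This is standard HITS on a directed graph, not the Influence-Passivity algorithm from the cited paper (which additionally weights edges by acceptance and rejection rates); the edge list and node names are invented.

```python
import math


def hits(edges, iterations=50):
    """Plain HITS: returns (hub, authority) score dicts for a directed graph."""
    nodes = {node for edge in edges for node in edge}
    hub = {node: 1.0 for node in nodes}
    auth = {node: 1.0 for node in nodes}
    for _ in range(iterations):
        # Authority of a node: sum of hub scores of nodes pointing to it.
        auth = {node: 0.0 for node in nodes}
        for src, dst in edges:
            auth[dst] += hub[src]
        norm = math.sqrt(sum(v * v for v in auth.values())) or 1.0
        auth = {node: v / norm for node, v in auth.items()}
        # Hub score of a node: sum of authority scores of nodes it points to.
        hub = {node: 0.0 for node in nodes}
        for src, dst in edges:
            hub[src] += auth[dst]
        norm = math.sqrt(sum(v * v for v in hub.values())) or 1.0
        hub = {node: v / norm for node, v in hub.items()}
    return hub, auth


# Hypothetical edges (source, target).
edges = [("a", "c"), ("b", "c"), ("a", "d")]
hub, auth = hits(edges)
print(sorted(hub, key=hub.get, reverse=True))
print(sorted(auth, key=auth.get, reverse=True))
```

In the analogy above, the hub score plays the role of influence and the authority score the role of passivity; how edges are oriented and weighted for that purpose is defined in the paper, not here.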


Key literature


Further publicly available tools

Topic detection

- David Blei’s homepage: http://www.cs.princeton.edu/~blei/topicmodeling.html
- Mallet: http://mallet.cs.umass.edu/

Natural language toolkits

- GATE: http://gate.ac.uk/
- OpenNLP: http://opennlp.apache.org/
- NLTK: http://nltk.org/

Entity extraction

- Stanford NER: http://nlp.stanford.edu/ner/index.shtml

Relation extraction

- NELL: http://rtw.ml.cmu.edu/rtw/
- REVERB: http://openie.cs.washington.edu/

WSD

- WordNet::SenseRelate: http://senserelate.sourceforge.net/

Conclusions

- Dealing with streams can often be easy, but it gets hard when we have an intensive data stream and complex operations on the data are required!
- Topic detection: online inference (e.g. for LDA) is currently a new direction
- Entity, relationship and template extraction, sentiment analysis and social network analysis are already applied to streams
- Word sense disambiguation: complex knowledge bases (e.g. WordNet + Wikipedia) coupled with simple disambiguation algorithms work well
- Summarization: abstractive summaries are more suited for text streams


Questions and discussion