2010-09-11

Multicore Real-Time Systems

Challenges & Solutions

Wang Yi, Uppsala University

VTSA Summer School, Luxembourg, Sept 2010

Part 2

Thanks

Guan Nan, Martin Stigge, Mingsong Lv, Zhang Yi, Erik Hagersten, Bengt Jonsson and Alexander Medvedev


OUTLINE

Multicore Challenges (Real-Time Applications?)

- Why and what are multicores?

- What we are doing in Uppsala: CoDeR-MP

- The timing analysis problem

Possible Solutions – Partition/Isolation

- Dealing with Cache Contention [EMSOFT 2009]

- Dealing with Bus Interference [RTSS 2010]

- Dealing with Core Sharing [RTAS 2010]

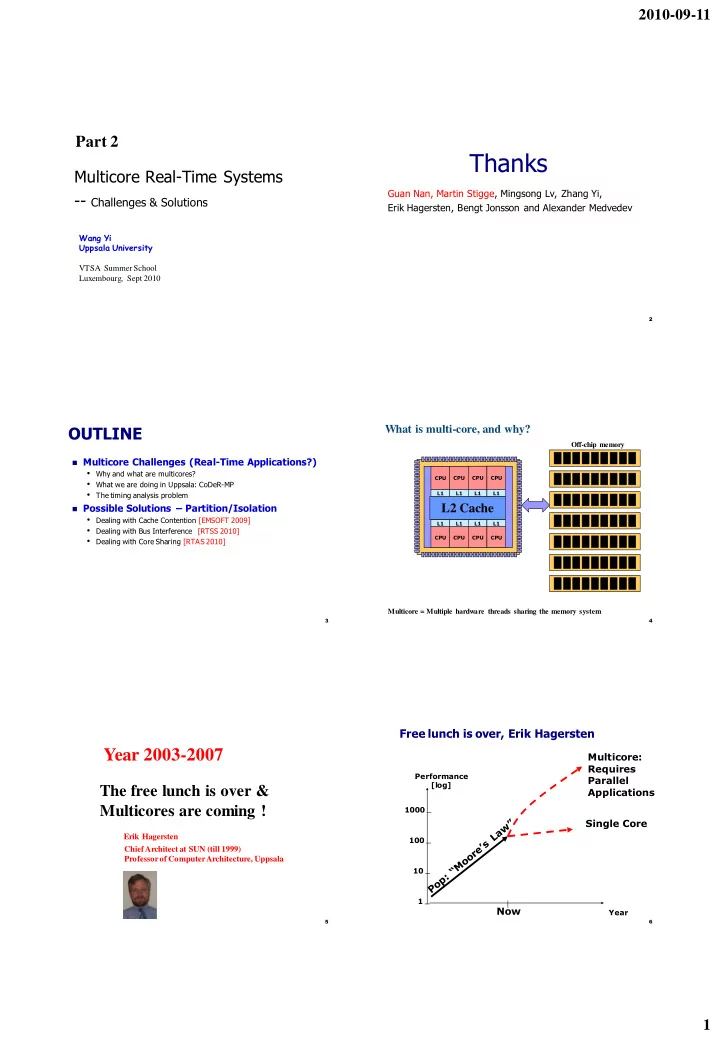

What is multi-core, and why?

Figure: eight CPUs, each with an L1 cache, above an L2 cache and off-chip memory.

Multicore = Multiple hardware threads sharing the memory system

The free lunch is over & multicores are coming!

Erik Hagersten, Chief Architect at SUN (till 1999), Professor of Computer Architecture, Uppsala

Year 2003-2007

Figure: performance (log scale, 1 to 1000) versus year, with "Now" marked. Curves: "Single Core" and "Multicore: Requires Parallel Applications".

Free lunch is over, Erik Hagersten
