Distributed Computing Group Roger Wattenhofer 113

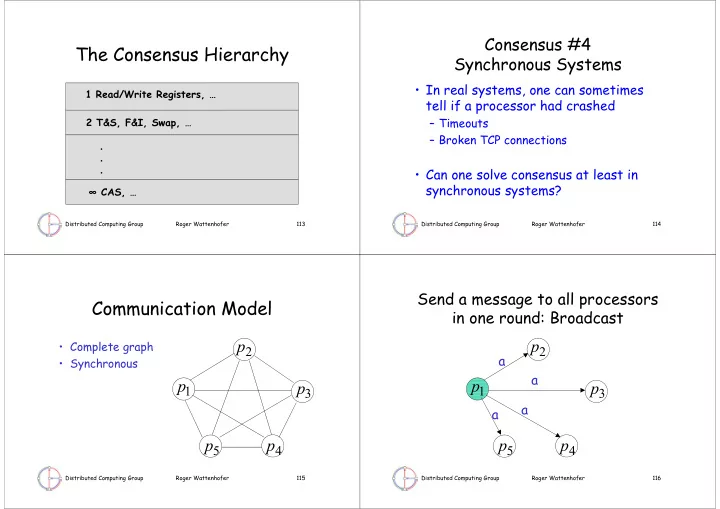

The Consensus Hierarchy

1 Read/Write Registers, … 2 T&S, F&I, Swap, … ∞ CAS, … . . .

Distributed Computing Group Roger Wattenhofer 114

Consensus #4 Synchronous Systems

- In real systems, one can sometimes

tell if a processor had crashed

– Timeouts – Broken TCP connections

- Can one solve consensus at least in

synchronous systems?

Distributed Computing Group Roger Wattenhofer 115

Communication Model

- Complete graph

- Synchronous

1

p

2

p

3

p

4

p

5

p

Distributed Computing Group Roger Wattenhofer 116