The Geometry of Least Squares

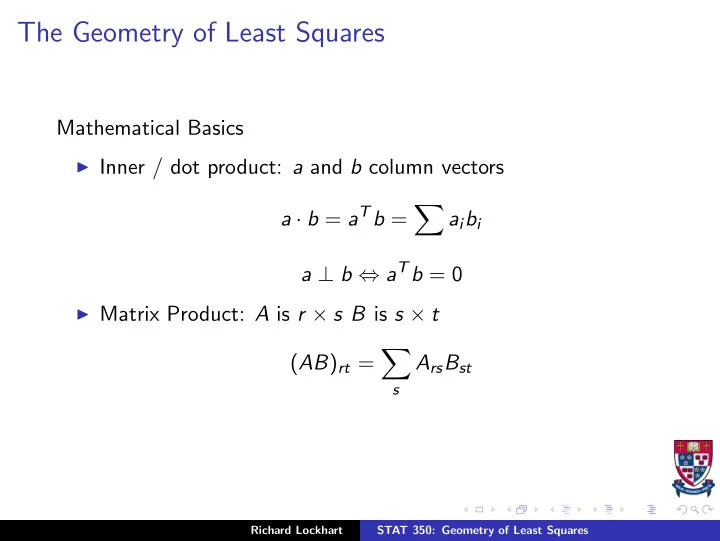

Mathematical Basics

◮ Inner (dot) product of column vectors a and b of the same length:

$$a \cdot b = a^T b = \sum_i a_i b_i$$

$$a \perp b \iff a^T b = 0$$
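The inner product and the orthogonality test can be sketched in plain Python. The helper names `dot` and `is_orthogonal`, and the tolerance `tol` used to absorb floating-point rounding, are illustrative assumptions and do not appear in the notes.

```python
def dot(a, b):
    """Inner product a . b = a^T b = sum_i a_i b_i for equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    return sum(ai * bi for ai, bi in zip(a, b))


def is_orthogonal(a, b, tol=1e-12):
    """a is perpendicular to b exactly when a^T b = 0.

    tol is an assumed tolerance so that float inputs with tiny rounding
    error are still treated as orthogonal.
    """
    return abs(dot(a, b)) <= tol


print(dot([1, 2, 3], [4, 5, 6]))              # 32
print(is_orthogonal([1, 1, 0], [1, -1, 5]))   # True
```

With integer entries the tolerance plays no role; it only matters when the vectors come from floating-point computations.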

◮ Matrix product: if A is r × s and B is s × t, then AB is r × t with entries

$$(AB)_{rt} = \sum_s A_{rs} B_{st}$$
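The entry formula says that (AB)_{rt} is the inner product of row r of A with column t of B. A minimal sketch of that rule in plain Python, treating a matrix as a list of rows; the name `matmul` is hypothetical, and the loop indices i, k, j stand in for the slide's r, s, t because the slide also uses those letters for the dimensions.

```python
def matmul(A, B):
    """Multiply an r x s matrix A by an s x t matrix B (lists of rows).

    Entry (i, j) of the result is sum over k of A[i][k] * B[k][j],
    i.e. the inner product of row i of A with column j of B.
    """
    r, s = len(A), len(A[0])
    if len(B) != s:
        raise ValueError(f"A has {s} columns but B has {len(B)} rows")
    t = len(B[0])
    return [[sum(A[i][k] * B[k][j] for k in range(s)) for j in range(t)]
            for i in range(r)]


A = [[1, 2, 3],
     [4, 5, 6]]        # 2 x 3
B = [[7, 8],
     [9, 10],
     [11, 12]]         # 3 x 2
print(matmul(A, B))    # [[58, 64], [139, 154]], a 2 x 2 result
```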

Richard Lockhart STAT 350: Geometry of Least Squares

$$
\begin{bmatrix}
62 & 63 & 68 & 56 \\
60 & 67 & 66 & 62 \\
63 & 71 & 71 & 60 \\
59 & 64 & 67 & 61 \\
   & 65 & 68 & 63 \\
   & 66 & 68 & 64 \\
   &    &    & 63 \\
   &    &    & 59
\end{bmatrix}
=
\begin{bmatrix}
64 & 64 & 64 & 64 \\
64 & 64 & 64 & 64 \\
64 & 64 & 64 & 64 \\
64 & 64 & 64 & 64 \\
   & 64 & 64 & 64 \\
   & 64 & 64 & 64 \\
   &    &    & 64 \\
   &    &    & 64
\end{bmatrix}
+
\begin{bmatrix}
-3 & 2 & 4 & -3 \\
-3 & 2 & 4 & -3 \\
-3 & 2 & 4 & -3 \\
-3 & 2 & 4 & -3 \\
   & 2 & 4 & -3 \\
   & 2 & 4 & -3 \\
   &   &   & -3 \\
   &   &   & -3
\end{bmatrix}
+
\begin{bmatrix}
 1 & -3 &  0 & -5 \\
-1 &  1 & -2 &  1 \\
 2 &  5 &  3 & -1 \\
-2 & -2 & -1 &  0 \\
   & -1 &  0 &  2 \\
   &  0 &  0 &  3 \\
   &    &    &  2 \\
   &    &    & -2
\end{bmatrix}
$$
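The decomposition above can be checked numerically. This is a minimal sketch, assuming (as the ragged layout of the arrays suggests) that each column is one group of observations, so the second term is the grand mean, the third is group mean minus grand mean, and the last is the residual. The three component vectors should be mutually orthogonal, which is why their squared lengths add up to the squared length of the data vector. The names `groups`, `decompose` and `dot` are illustrative, not from the notes.

```python
from fractions import Fraction

# Assumption: each column of the slide's array is one group (sizes differ).
groups = [
    [62, 60, 63, 59],
    [63, 67, 71, 64, 65, 66],
    [68, 66, 71, 67, 68, 68],
    [56, 62, 60, 61, 63, 64, 63, 59],
]


def decompose(groups):
    """Split each observation into grand mean + group effect + residual.

    Returns four flat vectors (data, mean, effect, residual) in the same
    order as the data, using exact fractions.
    """
    y = [Fraction(v) for g in groups for v in g]
    grand = sum(y) / len(y)
    mean_vec, effect_vec, resid_vec = [], [], []
    for g in groups:
        g_mean = sum(Fraction(v) for v in g) / len(g)
        for v in g:
            mean_vec.append(grand)
            effect_vec.append(g_mean - grand)
            resid_vec.append(Fraction(v) - g_mean)
    return y, mean_vec, effect_vec, resid_vec


def dot(a, b):
    """Inner product a^T b."""
    return sum(x * z for x, z in zip(a, b))


y, m, e, r = decompose(groups)
# The three pieces add back to the data vector ...
assert all(yi == mi + ei + ri for yi, mi, ei, ri in zip(y, m, e, r))
# ... they are mutually orthogonal ...
print(dot(m, e), dot(m, r), dot(e, r))              # 0 0 0
# ... so the squared lengths add (Pythagoras): 98644 = 98304 + 228 + 112.
print(dot(y, y), dot(m, m), dot(e, e), dot(r, r))   # 98644 98304 228 112
```

Exact `Fraction` arithmetic is used so that the orthogonality checks come out exactly zero rather than merely within rounding error.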
