Background

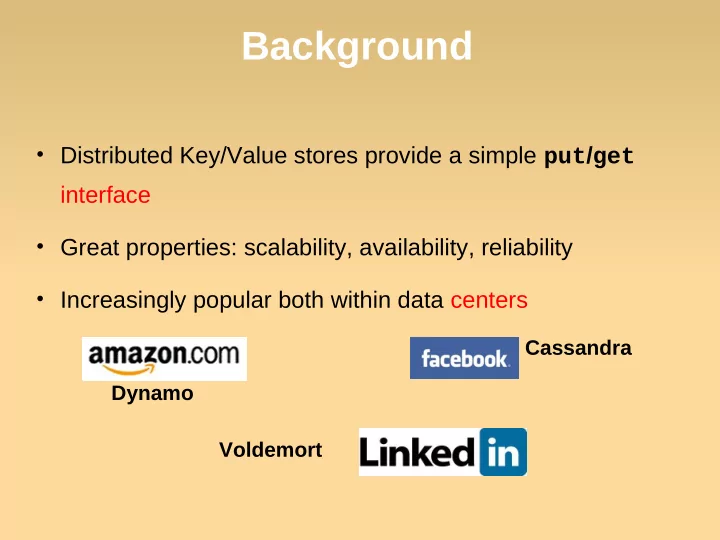

- Distributed key/value stores provide a simple put/get interface

- Great properties: scalability, availability, reliability

- Increasingly popular within data centers

- Examples: Cassandra, Dynamo, Voldemort

Dynamo: Amazon's Highly


Highly scalable and reliable. Tight control over the trade-offs between

Flexible enough to let designers make trade-

Simple primary-key access to data store.

Best seller list, shopping carts, customer


Query Model

Simple read and write operations to a data item that is uniquely identified by a key.

Small objects, usually less than 1 MB.

ACID (Atomicity, Consistency, Isolation, Durability)

Trade consistency for availability.

Does not provide any isolation guarantees.

Efficiency

Stringent SLA requirement.

Assumed non-hostile environment.

No authentication or authorization.

Conflict resolution is executed during reads instead of writes, so the store is always writable.

Conflict resolution is performed either by the data store or by the application.


| Problem | Technique | Advantage |
| --- | --- | --- |
| Partitioning | Consistent hashing | Incremental scalability |
| High availability for writes | Vector clocks with reconciliation during reads | Version size is decoupled from update rates. |
| Handling temporary failures | Sloppy quorum and hinted handoff | Provides high availability and durability guarantee when some of the replicas are not available. |
| Recovering from permanent failures | Anti-entropy using Merkle trees | Synchronizes divergent replicas in the background. |
| Membership and failure detection | Gossip-based membership protocol and failure detection | Preserves symmetry and avoids having a centralized registry for storing membership and node liveness information. |


Consistent hashing: the output range of a hash function is treated as a fixed circular space or “ring”.

“Virtual nodes”: each node can be responsible for more than one virtual node.

Node fails: its load is evenly dispersed across the rest.

Node joins: its virtual nodes accept a roughly equivalent amount of load from the rest.

Heterogeneity: the number of virtual nodes assigned to a node can reflect its capacity.
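A minimal Python sketch of a hash ring with virtual nodes, to make the slide above concrete. The `HashRing` class, the `vnodes` parameter, the `node#i` token labels, and the `cart:` keys are illustrative assumptions, not Dynamo's actual code.

```python
import bisect
import hashlib
from collections import Counter


def ring_position(label: str) -> int:
    """Map a string to a position on the ring (MD5 gives a 128-bit space)."""
    return int(hashlib.md5(label.encode("utf-8")).hexdigest(), 16)


class HashRing:
    def __init__(self):
        self._tokens = []   # sorted ring positions of all virtual nodes
        self._owner = {}    # ring position -> physical node name

    def add_node(self, node: str, vnodes: int = 64) -> None:
        # A larger vnodes value gives this node a larger share of the ring.
        for i in range(vnodes):
            token = ring_position(f"{node}#{i}")
            bisect.insort(self._tokens, token)
            self._owner[token] = node

    def remove_node(self, node: str) -> None:
        self._tokens = [t for t in self._tokens if self._owner[t] != node]
        self._owner = {t: n for t, n in self._owner.items() if n != node}

    def lookup(self, key: str) -> str:
        # Walk clockwise to the first virtual node at or after the key.
        idx = bisect.bisect_left(self._tokens, ring_position(key))
        return self._owner[self._tokens[idx % len(self._tokens)]]


ring = HashRing()
for name in ("A", "B", "C"):
    ring.add_node(name)

keys = [f"cart:{i}" for i in range(1000)]
before = {k: ring.lookup(k) for k in keys}
ring.remove_node("B")                      # simulate node B failing
after = {k: ring.lookup(k) for k in keys}
moved = [k for k in keys if before[k] != after[k]]
print(Counter(after.values()))             # how B's load was spread over A and C
```

Only keys that B owned change owner. Keys on A and C stay put, which is the incremental-scalability property claimed for consistent hashing.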


Strategy 1: T random tokens per node and

Ranges vary in size and frequently change. Long bootstrapping. Difficult to take a snapshot.


Strategy 2: T random tokens per node, equal-sized partitions. Turns out to be the worst. Why?

Strategy 3: Q/S tokens per node, equal-sized partitions.

Best load balancing configuration. Drawback: Changing node membership requires coordination.


Each data item is

“preference list”: The list

Improvement: The


A vector clock is a list of

Every version of every

Client perform
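A small Python sketch of vector clocks, treating a clock as a mapping from node name to counter. The function names and the node names `Sx`, `Sy`, `Sz` are assumptions made for illustration. It shows how one version can supersede another and how two concurrent versions surface as a conflict that must be reconciled.

```python
def increment(clock: dict, node: str) -> dict:
    """Return a copy of the clock with this node's counter advanced by one."""
    updated = dict(clock)
    updated[node] = updated.get(node, 0) + 1
    return updated


def descends(a: dict, b: dict) -> bool:
    """True if version a has seen every update recorded in b."""
    return all(a.get(node, 0) >= count for node, count in b.items())


def compare(a: dict, b: dict) -> str:
    if a == b:
        return "equal"
    if descends(a, b):
        return "a supersedes b"
    if descends(b, a):
        return "b supersedes a"
    return "conflict"  # concurrent versions: neither has seen the other


def merge(a: dict, b: dict) -> dict:
    """Element-wise maximum, used once conflicting versions are reconciled."""
    return {n: max(a.get(n, 0), b.get(n, 0)) for n in a.keys() | b.keys()}


d1 = increment({}, "Sx")              # first write, handled by Sx
d2 = increment(d1, "Sx")              # second write, also handled by Sx
d3 = increment(d2, "Sy")              # one client's update goes through Sy
d4 = increment(d2, "Sz")              # a concurrent update goes through Sz

print(compare(d2, d1))                # a supersedes b
print(compare(d3, d4))                # conflict
d5 = increment(merge(d3, d4), "Sx")   # reconciled version written via Sx
print(d5)
```

The reconciled version `d5` descends from both `d3` and `d4`, so both can be discarded afterwards.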


R: minimum number of nodes that must participate in a successful read.

W: minimum number of nodes that must participate in a successful write.

N: number of replicas of each data item.

Different combinations of R and W result in


Consistency Insurance

Always writable, but high risk of inconsistency. Write: 1, Read: ?

Read engine. Write: 3, Read: 1

Normally. Write: 2, Read: 2
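The configurations above can be checked against the usual quorum overlap condition R + W > N. The Python sketch below assumes N = 3 and uses hypothetical helper names. It does not guess the missing read value for the Write: 1 case; it only computes the smallest R that would still overlap every write quorum.

```python
def quorums_overlap(n: int, r: int, w: int) -> bool:
    """True when every read quorum shares at least one replica with every write quorum."""
    return r + w > n


def min_overlapping_read_quorum(n: int, w: int) -> int:
    """Smallest R that still satisfies R + W > N."""
    return n - w + 1


N = 3  # assumed replication factor for this example
for w in (1, 2, 3):
    r = min_overlapping_read_quorum(N, w)
    print(f"W={w}: smallest overlapping R={r}, overlap={quorums_overlap(N, r, w)}")
```

With N = 3 this reproduces the Write: 3, Read: 1 and Write: 2, Read: 2 pairs from the slide. A Write: 1 configuration needs Read: 3 to keep the overlap, and any smaller R trades consistency for read latency.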


Assume N = 3. When A is

D is hinted that the

What if A never

What if D fails before
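One way the sloppy quorum and hinted handoff idea could look in code, assuming a four-node ring A, B, C, D with N = 3 and node A temporarily down. The `Node` class, `sloppy_write`, and `deliver_hints` are hypothetical names for illustration. Hinted replicas are kept apart from regular data in this sketch.

```python
class Node:
    def __init__(self, name: str):
        self.name = name
        self.up = True
        self.store = {}   # key -> value for replicas this node owns
        self.hints = []   # (intended owner, key, value) held for others


def sloppy_write(ring: list, start: int, n: int, key: str, value: str) -> list:
    """Write to the first n healthy nodes found walking the ring from start."""
    intended = [ring[(start + i) % len(ring)] for i in range(n)]
    skipped = [node for node in intended if not node.up]
    written = []
    for offset in range(len(ring)):
        node = ring[(start + offset) % len(ring)]
        if not node.up:
            continue
        if node in intended:
            node.store[key] = value
        else:
            # Fallback node: keep the replica with a hint naming its real owner.
            node.hints.append((skipped.pop(0), key, value))
        written.append(node.name)
        if len(written) == n:
            break
    return written


def deliver_hints(holder: Node) -> None:
    """Hand hinted replicas back to owners that are reachable again."""
    remaining = []
    for owner, key, value in holder.hints:
        if owner.up:
            owner.store[key] = value
        else:
            remaining.append((owner, key, value))
    holder.hints = remaining


a, b, c, d = (Node(name) for name in "ABCD")
ring = [a, b, c, d]

a.up = False                                  # A is temporarily unreachable
written = sloppy_write(ring, 0, 3, "cart:42", "v1")
print(written)                                # ['B', 'C', 'D']

a.up = True                                   # A recovers
deliver_hints(d)                              # D hands the replica back to A
print(a.store, d.hints)
```

The two open questions on the slide correspond to cases this sketch does not handle: hints that can never be delivered, and a fallback node that fails while still holding them.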


Merkle trees:

Hash tree. Leaves are hashes of individual

Parent nodes are hashes of their

Reduce amount of data required
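A compact Python sketch of comparing two replicas with Merkle trees. It assumes both replicas hold the same set of keys, so the two trees have the same shape, and that the key range is not empty. The function names, the use of SHA-256, and the `key=value` leaf encoding are illustrative choices.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def build_levels(items: dict) -> list:
    """Return the tree as a list of levels, leaves first and root last."""
    leaves = [h(f"{k}={v}".encode("utf-8")) for k, v in sorted(items.items())]
    levels = [leaves]
    while len(levels[-1]) > 1:
        prev = levels[-1]
        # Each parent hashes the concatenation of its (one or two) children.
        levels.append([h(b"".join(prev[i:i + 2])) for i in range(0, len(prev), 2)])
    return levels


def differing_leaves(a_levels: list, b_levels: list) -> list:
    """Descend from the root, following only subtrees whose hashes differ."""
    suspects = [0]
    for depth in range(len(a_levels) - 1, 0, -1):
        next_suspects = []
        for idx in suspects:
            if a_levels[depth][idx] != b_levels[depth][idx]:
                for child in (2 * idx, 2 * idx + 1):
                    if child < len(a_levels[depth - 1]):
                        next_suspects.append(child)
        suspects = next_suspects
    return [i for i in suspects if a_levels[0][i] != b_levels[0][i]]


replica_a = {f"key{i}": "v1" for i in range(8)}
replica_b = dict(replica_a, key5="v2")        # one divergent value

out_of_sync = differing_leaves(build_levels(replica_a), build_levels(replica_b))
print(out_of_sync)                            # [5]
```

When the roots match, no further hashes are exchanged. When they differ, only the hashes along the divergent branch are compared, which is how the tree reduces the data needed for synchronization.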


Manually signal membership change. Gossip-based protocol propagates membership

Some Dynamo nodes as seed nodes for

Potential single point of failure?

Local detection of neighbor failure

Gossip style protocol to propagate failure
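A toy Python simulation of gossip-based membership propagation, under several assumptions: six nodes named A to F, node A acting as the only seed, a version number per membership entry, and a fixed random seed for repeatability. None of these names or values come from Dynamo itself.

```python
import random


def merge_views(mine: dict, theirs: dict) -> dict:
    """Keep, for every member, the entry with the higher version number."""
    merged = dict(mine)
    for member, (version, status) in theirs.items():
        if member not in merged or version > merged[member][0]:
            merged[member] = (version, status)
    return merged


rng = random.Random(7)
names = ["A", "B", "C", "D", "E", "F"]

# Every node starts out knowing only itself and the seed node A.
views = {n: {n: (1, "up"), "A": (1, "up")} for n in names}

# A membership change is signalled manually at one node: F records that C left.
views["F"]["C"] = (2, "removed")

rounds = 0
while any(views[n] != views["A"] for n in names) and rounds < 1000:
    for n in names:
        # Each round, every node reconciles its view with one random peer.
        peer = rng.choice([p for p in names if p != n])
        merged = merge_views(views[n], views[peer])
        views[n] = merged
        views[peer] = dict(merged)
    rounds += 1

print(rounds, views["A"])
```

Every node ends up with the same view, including the removal recorded only at F, without any central registry. The seed node only helps nodes discover each other at the start.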


What applications are suitable for Dynamo?

What applications are NOT suitable for Dynamo?

How can you adapt Dynamo to store large

How can you make Dynamo secure?


Roxana Geambasu, Amit Levy, Yoshi Kohno, Arvind Krishnamurthy, and Hank Levy

OSDI'10. Presented by Shen Li


put/get interface

popular in data centers


Amazon S3


- Fixed 8-hour data timeout
- Overly aggressive replication, which hurts security

- Need Vuze engineer
- Long deployment cycle
- Hard to evaluate before deployment

[Figure labels: Vuze, Vuze App, Vanish, Future app]

Vanish: Enhancing the Privacy of the Web with Self-Destructing Data. USENIX Security '09


Active object Comet node


- The code is a set of handlers and user-defined functions

ASO: data, code

    function onGet()
      […]
    end


Categories: Intercept accesses, Periodic Tasks, Host Interaction, DHT Interaction

Host Interaction: getSystemTime(), getNodeIP(), getNodeID(), getASOKey(), deleteSelf()

DHT Interaction: get(key, nodes), put(key, data, nodes), lookup(key)


    -- Application-defined policy: choose which neighbors should hold replicas.
    function aso:selectReplicas(neighbors)
      [...]
    end

    -- Runs periodically: re-replicate this object onto the chosen neighbors.
    function aso:onTimer()
      neighbors = comet.lookup()
      -- Colon call so that self is passed to the method defined with aso:
      replicas = self:selectReplicas(neighbors)
      comet.put(self, replicas)
    end


Comet tracker Random tracker


    aso.neighbors = {}

    -- Runs periodically: record the current neighbor set, keyed by time.
    function aso:onTimer()
      neighbors = comet.lookup()
      self.neighbors[comet.systemTime()] = neighbors
    end


Vuze Node Lifetime (hours)
