
Credit-Based vs. Xon/Xoff Flow Control

Both schemes can fully utilize buffers

Restart latency is lower for credit-based schemes, and therefore:

Credit-based flow control has higher average buffer occupancy at high loads

Credit-based flow control leads to higher throughput at high loads

Smaller inter-packet gap

Control traffic is higher for credit schemes

Block credits can be used to tune link behavior

Buffer sizes are independent of round trip latency for credit schemes (at the expense of performance)

Credit schemes have higher information content useful for QoS schemes
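The comparison above can be explored with a small cycle-level sketch of one link. Everything in it is an assumption made for illustration and is not taken from the slides: one flit per cycle, the same wire latency in both directions, a receiver that drops flits on overflow, and the hypothetical names `simulate_credit`, `simulate_xon_xoff`, and `stall`.

```python
from collections import deque


def always(_t):
    """Default drain pattern: the receiver can forward a flit every cycle."""
    return True


def simulate_credit(buffer_size, latency, cycles, can_drain=always):
    """Credit-based link. Returns (delivered, max_occupancy)."""
    credits = buffer_size                 # one credit per free receiver slot
    data_wire = deque([0] * latency)      # flits in flight to the receiver
    credit_wire = deque([0] * latency)    # credits in flight to the sender
    occupancy = delivered = max_occupancy = 0
    for t in range(cycles):
        occupancy += data_wire.popleft()  # flit sent `latency` cycles ago
        credits += credit_wire.popleft()  # credit sent `latency` cycles ago
        max_occupancy = max(max_occupancy, occupancy)
        if occupancy > 0 and can_drain(t):
            occupancy -= 1
            delivered += 1
            credit_wire.append(1)         # freed slot returns a credit
        else:
            credit_wire.append(0)
        if credits > 0:                   # send only when a slot is guaranteed
            credits -= 1
            data_wire.append(1)
        else:
            data_wire.append(0)
    return delivered, max_occupancy


def simulate_xon_xoff(buffer_size, xoff_at, xon_at, latency, cycles,
                      can_drain=always):
    """Xon/Xoff link. Returns (delivered, dropped)."""
    data_wire = deque([0] * latency)
    ctrl_wire = deque([None] * latency)   # None = no message, else True/False
    sending = True                        # sender's view: is Xon in effect?
    told_on = True                        # last state the receiver signalled
    occupancy = delivered = dropped = 0
    for t in range(cycles):
        arriving = data_wire.popleft()
        msg = ctrl_wire.popleft()
        if msg is not None:
            sending = msg
        if arriving:
            if occupancy < buffer_size:
                occupancy += 1
            else:
                dropped += 1              # overflow: no slot was reserved
        if occupancy > 0 and can_drain(t):
            occupancy -= 1
            delivered += 1
        if told_on and occupancy >= xoff_at:
            told_on = False
            ctrl_wire.append(False)       # Xoff
        elif not told_on and occupancy <= xon_at:
            told_on = True
            ctrl_wire.append(True)        # Xon
        else:
            ctrl_wire.append(None)
        data_wire.append(1 if sending else 0)
    return delivered, dropped


def stall(t):
    """Hypothetical congestion: receiver blocked during cycles 20..59."""
    return not (20 <= t < 60)


if __name__ == "__main__":
    for size in (4, 8):
        print("credit", size, simulate_credit(size, 4, 1000))
    print("credit, stalled", simulate_credit(4, 4, 200, stall))
    for size in (8, 12):
        print("xon/xoff", size, simulate_xon_xoff(size, 4, 2, 4, 200, stall))
```

In this model the credit loop takes `2 * latency` cycles, so a credit link with a buffer smaller than the round trip never overflows but its throughput falls to roughly `buffer_size / (2 * latency)`. The Xon/Xoff link needs about one round trip of headroom above the Xoff threshold, otherwise flits already in flight are dropped.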

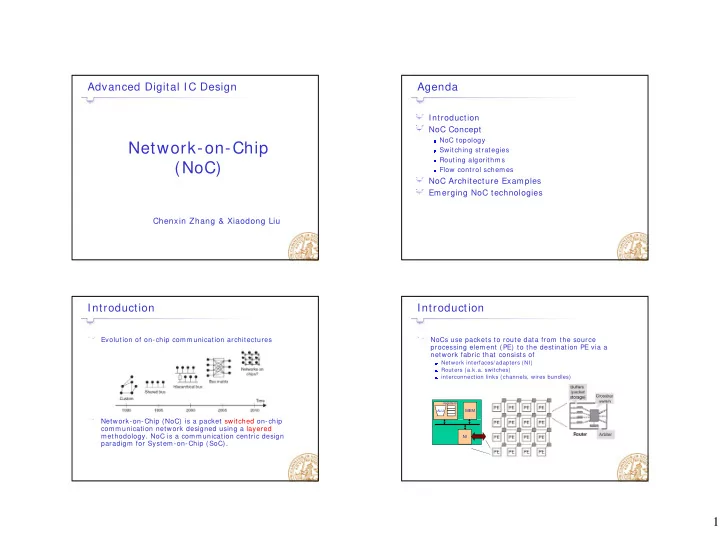

NoC Architecture Examples

Intel’s Teraflops Research Processor

Deliver Tera-scale performance

Single precision TFLOP at desktop power

Frequency target 5GHz

Bi-section B/W order of Terabits/s

Link bandwidth in hundreds of GB/s

Prototype two key technologies

On-die interconnect fabric

3D stacked memory

Develop a scalable design methodology

Tiled design approach

Mesochronous clocking

Power-aware capability

[Die photo: 21.72 mm × 12.64 mm die with I/O areas, PLL, and TAP; single tile 1.5 mm × 2.0 mm]

| Parameter | Value |
| --- | --- |
| Technology | 65nm, 1 poly, 8 metal (Cu) |
| Transistors | 100 Million (full-chip), 1.2 Million (tile) |
| Die Area | 275mm² (full-chip), 3mm² (tile) |
| C4 bumps # | 8390 |

[Vangal08]
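As a quick consistency check, the die-photo dimensions can be multiplied out and compared with the areas in the table. This is only a sketch: it reads the figure labels as 21.72 mm × 12.64 mm for the die and 1.5 mm × 2.0 mm for a tile, and the tile-count bounds it prints are rough upper limits derived here, not figures from the slide.

```python
# Figures as read from the die photo labels and the technology table.
die_sides_mm = (21.72, 12.64)
tile_sides_mm = (1.5, 2.0)
transistors_full_chip = 100e6
transistors_per_tile = 1.2e6

die_area_mm2 = die_sides_mm[0] * die_sides_mm[1]
tile_area_mm2 = tile_sides_mm[0] * tile_sides_mm[1]

print(f"die area  = {die_area_mm2:.1f} mm^2 (table: 275 mm^2)")
print(f"tile area = {tile_area_mm2:.1f} mm^2 (table: 3 mm^2)")

# Upper bounds only: I/O, PLL and TAP also take area and transistors.
max_tiles_by_area = int(die_area_mm2 / tile_area_mm2)
max_tiles_by_transistors = int(transistors_full_chip / transistors_per_tile)
print("tiles by area        <=", max_tiles_by_area)
print("tiles by transistors <=", max_tiles_by_transistors)
```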

Main Building Blocks

Special Purpose Cores

2D Mesh Interconnect

Mesochronous Clocking

Workload-aware Power Management

[Tile block diagram: Processing Engine (PE) with 2KB data memory (DMEM), 3KB instruction memory (IMEM), 6-read, 4-write 32-entry register file (RF), FPMAC0 and FPMAC1 each followed by Normalize, and RIB; Crossbar Router (40 GB/s) with mesochronous interfaces (MSINT)]
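A back-of-the-envelope estimate relates the two FPMAC units per tile to the single-precision TFLOP goal. It assumes, which the slides do not state, that each FPMAC sustains one multiply-accumulate per cycle and that this counts as two floating-point operations. The names below are illustrative.

```python
import math

FREQ_HZ = 5e9              # frequency target from the slides
FPMACS_PER_TILE = 2        # FPMAC0 and FPMAC1 in the tile diagram
FLOPS_PER_FPMAC_CYCLE = 2  # ASSUMPTION: one multiply + one add per cycle


def peak_tile_flops(freq_hz=FREQ_HZ):
    """Peak single-precision FLOP/s of one tile under the assumptions above."""
    return freq_hz * FPMACS_PER_TILE * FLOPS_PER_FPMAC_CYCLE


def tiles_needed(target_flops, freq_hz=FREQ_HZ):
    """Smallest number of tiles whose combined peak reaches target_flops."""
    return math.ceil(target_flops / peak_tile_flops(freq_hz))


if __name__ == "__main__":
    print("peak per tile (GFLOP/s):", peak_tile_flops() / 1e9)
    print("tiles needed for 1 TFLOP/s peak:", tiles_needed(1e12))
```

Peak numbers of this kind ignore memory and interconnect stalls, so they bound the achievable rate from above.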