Parallel Computing Portable Software & Cost-Effective Hardware

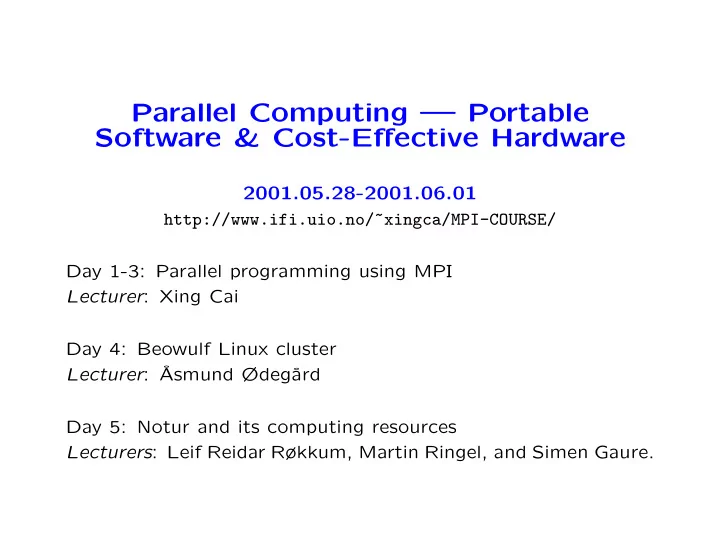

Parallel Computing: Portable Software & Cost-Effective Hardware

2001.05.28-2001.06.01

http://www.ifi.uio.no/~xingca/MPI-COURSE/

Day 1-3: Parallel programming using MPI. Lecturer: Xing Cai

Day 4: Beowulf Linux cluster. Lecturer:

Work form

- Lectures 9:15-12:00
- On-site exercise/demo 13:00-16:00, Data-lab VB 207

Parallel Computing Using MPI

Xing Cai Department of Informatics University of Oslo

Contents

- Introduction to parallel computing
- Getting started with MPI
- Exercises for Day 1
- Basic MPI programming
- Exercises for Day 2
- Advanced MPI programming
- Exercises for Day 3

Introduction to Parallel Computing

Parallel computing

In scientific computing there is a constant wish to run larger simulations in less computer time. The capacity of single-processor computers can hardly keep pace with the demands of scientific computing, with respect to:

- processor speed
- memory size/speed

Hence the need for parallel computing!

[Figures: statistics from the Top500 list, which is based on the Linpack Benchmark, including the average number of processors per system from Nov 1993 to Nov 2000. Source: http://www.top500.org/slides/2000/11/]

The basic ideas of parallel computing

The pursuit of shorter computation time and larger simulation size gives rise to parallel computing. Multiple processors work together to solve one global problem. The essence is to divide the entire computation evenly among the collaborating processors.

A rough classification of hardware models

- Conventional single-processor computers can be called SISD (single-instruction-single-data) machines.
- SIMD (single-instruction-multiple-data) machines incorporate the idea of parallel processing: a large number of processing units execute the same instruction on different data.
- Modern parallel computers are so-called MIMD (multiple-instruction-multiple-data) machines, which can execute different instruction streams in parallel on different data.

Shared memory & distributed memory

One way of categorizing modern parallel computers is to look at the memory configuration.

- In shared memory systems the CPUs share the same address space. Any CPU can access any data in the global memory.
- In distributed memory systems each CPU has its own memory. The CPUs are connected by some network and may exchange messages.
- A new trend is ccNUMA (cache-coherent-non-uniform-memory-access) systems, which are clusters of SMP (symmetric multi-processing) machines and have a virtual shared memory.

Different parallel programming paradigms

Task parallelism: the work of a global problem can be divided into a number of independent tasks, which rarely need to synchronize. Monte Carlo simulation is one example. However, this paradigm is of limited use.

Data parallelism: multiple threads (e.g. one thread per processor) are used to dissect loops over arrays etc. This paradigm requires a single memory address space. Communication and synchronization between processors are often hidden, which makes programming easy. However, the user surrenders much control to a specialized compiler. Examples of data parallelism are compiler-based parallelization and OpenMP directives.

Different parallel programming paradigms (cont'd)

Message-passing: all involved processors have an independent memory address space. The user is responsible for partitioning the data/work of a global problem and distributing the sub-problems to the processors. Collaboration between processors is achieved by explicit message passing, which is used for data transfer plus synchronization.

This paradigm is the most general one, and the user has full control. Better parallel efficiency is usually achieved by explicit message passing. However, message-passing programming is more difficult.

SPMD

Although message-passing programming supports MIMD, an SPMD (single-program-multiple-data) model suffices and is flexible enough for practical cases:

- Same executable for all the processors.
- Each processor works primarily with its assigned local data.
- Progression of code is allowed to differ between synchronization points.
- Possible to have a master/slave model.

Today's situation of parallel computing

Distributed memory is the dominant hardware configuration. There is a large diversity in these machines, from MPP (massively parallel processing) systems to clusters of off-the-shelf PCs, which are very cost-effective.

Message-passing is a mature and widely accepted programming paradigm. It often provides an efficient match to the hardware. It is primarily for distributed memory systems, but can also be used on shared memory systems.

We therefore consider here only message-passing for writing parallel programs.

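Some useful concepts

- Speed-up: $S(P) = \frac{T(1)}{T(P)}$, where $T(P)$ is the computing time on $P$ processors.
- Efficiency: $\eta(P) = \frac{S(P)}{P}$.
- Latency and bandwidth: the cost model for sending a message of length $L$ between two processors is $t_C(L) = \tau + \beta L$, where $\tau$ is the latency and $\beta$ is the cost per unit of message length (the inverse bandwidth).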
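The three definitions above translate directly into code. The following is a minimal sketch, not part of the original course material; the function names are hypothetical and chosen only for illustration.

```c
#include <assert.h>

/* Speed-up S(P) = T(1) / T(P). */
double speedup(double t1, double tp)
{
    assert(tp > 0.0);
    return t1 / tp;
}

/* Parallel efficiency eta(P) = S(P) / P. */
double efficiency(double t1, double tp, int p)
{
    assert(p > 0);
    return speedup(t1, tp) / p;
}

/* Cost of sending a message of length L: t_C(L) = tau + beta * L. */
double message_cost(double tau, double beta, double length)
{
    return tau + beta * length;
}
```

For instance, a computation that takes 100 seconds on one processor and 25 seconds on 8 processors has a speed-up of 4 and an efficiency of 0.5.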

Overhead present in parallel computing

- Uneven load balance: not all the processors can perform useful work at all times.
- Overhead of synchronization.
- Overhead of communication.
- Extra computation due to parallelization.

Due to the above overhead, and because certain parts of a sequential algorithm cannot be parallelized, $T(P) \ge \frac{T(1)}{P} \Rightarrow S(P) \le P$. However, super-linear speed-up ($S(P) > P$) may sometimes occur, e.g. due to cache effects.

Parallelizing a sequential algorithm

- Identify the part(s) of a sequential algorithm that can be executed in parallel.
- Distribute the global work and data among P processors.
- Insert communication and synchronization functions where necessary.

Example 1: Addition of two vectors.

$w = a x + b y$, where $a$ and $b$ are two real scalars and the vectors $x$, $y$ and $w$ are all of length $M$.

Suppose $P$ is a factor of $M$ and let $I = M/P$. Every processor $p$, $1 \le p \le P$, is assigned the sub-vectors $w_p$, $x_p$ and $y_p$, where e.g.

$w_p = (w_{(p-1)I+1}, \ldots, w_{pI})$.

When each processor has the sub-vectors ready, it does its portion of the global computation:

$w_p = a x_p + b y_p$.

No inter-processor communication is necessary. Perfect speed-up:

$S(P) = \frac{T(1)}{T(P)} = \frac{3M t_A}{3I t_A} = \frac{M}{I} = P$.

($t_A$ is the time for one floating-point operation.)
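The block partition of Example 1 can be sketched in plain C as follows. This is an illustrative serial sketch under the slide's assumption that P divides M; the function name `local_axpy` is hypothetical, and the vectors are stored whole here, whereas a distributed-memory program would store only the sub-vectors of its own processor.

```c
#include <assert.h>
#include <stddef.h>

/* Compute the block of w = a*x + b*y owned by processor p (1 <= p <= P).
   The vectors have length M, and P is assumed to divide M, so each block
   holds I = M/P entries with 0-based global indices (p-1)*I .. p*I - 1. */
void local_axpy(double a, const double *x, double b, const double *y,
                double *w, size_t M, size_t P, size_t p)
{
    assert(P > 0 && M % P == 0 && p >= 1 && p <= P);
    size_t I = M / P;            /* block length            */
    size_t first = (p - 1) * I;  /* first index of block p  */
    for (size_t i = first; i < first + I; i++)
        w[i] = a * x[i] + b * y[i];
}
```

Each call touches only the entries of one block, which is why the P blocks can be processed independently without any communication.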

Example 2: Inner-product of two vectors.

$c = x \cdot y = \sum_{i=1}^{M} x_i y_i$.

Again suppose $P$ is a factor of $M$, $I = M/P$. Each processor is assigned the sub-vectors $x_p$ and $y_p$. The local computation is thus: $c_p = x_p \cdot y_p$.

To obtain the global result, each processor has to send its local result $c_p$ to all the other processors. This takes about $(P-1)\,t_C(1)$ as the communication cost. Finally we get $c = \sum_p c_p$.

The speed-up is

$$S(P) = \frac{T(1)}{T(P)} = \frac{(2M-1)\,t_A}{(2I-1)\,t_A + (P-1)\,t_C(1) + (P-1)\,t_A} = \frac{2M-1}{(2I+P-2) + (P-1)\gamma} \approx \frac{P}{1 + \gamma\frac{P^2}{2M}},$$

which depends on $\gamma = t_C(1)/t_A$ and the factor $P^2/M$.
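To get a feeling for how the speed-up of the inner-product example behaves, the exact model and its approximation can be evaluated numerically. The sketch below is only an illustration of the two formulas; the function names are hypothetical and the values of M, P and γ passed in are the caller's assumptions.

```c
#include <assert.h>

/* Exact model:
   S(P) = (2M - 1) / ((2I + P - 2) + (P - 1) * gamma), with I = M / P. */
double inner_product_speedup(double M, double P, double gamma)
{
    assert(M > 0.0 && P >= 1.0);
    double I = M / P;
    return (2.0 * M - 1.0) / ((2.0 * I + P - 2.0) + (P - 1.0) * gamma);
}

/* Approximation for large M: S(P) ~ P / (1 + gamma * P^2 / (2M)). */
double inner_product_speedup_approx(double M, double P, double gamma)
{
    assert(M > 0.0 && P >= 1.0);
    return P / (1.0 + gamma * P * P / (2.0 * M));
}
```

With M = 1000, P = 10 and γ = 100, the exact model gives a speed-up below 2, because the communication term (P − 1)γ dominates the local work 2I. For M = 10^6 with the same P and γ, both formulas give a speed-up close to 10.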

Need a standard for writing message-passing programs

Distributed memory systems span a wide spectrum of hardware architecture designs. Each vendor used to have its own special routines for writing message-passing programs. To promote software portability and allow efficient implementation across a range of architectures, there was an obvious need for a standard in the early 90's.

Getting Started With MPI

MPI

MPI is a library specification for the message passing interface, proposed as a standard.

- independent of hardware;
- not a language or compiler specification;
- not a specific implementation or product.

A message passing standard for portability and ease-of-use. Primarily for SPMD/MIMD. Designed for high performance.

Two phases

- MPI-1: Traditional message-passing. Standard finalized in 1995. Widely available, many implementations.
- MPI-2: Remote memory, parallel I/O, dynamic process, threads. Standard finalized in 1997. However, implementations are not yet wide-spread.

We will only learn MPI-1 in this course.

What's MPI-1?

- Point-to-point message passing
- Collective communication
- Support for process groups & communication contexts
- Support for process topologies
- Environmental inquiry routines
- Profiling interface

Process and processor

We refer to a process as a logical unit which executes its own code, in an MIMD style. A processor is a physical device on which one or several processes are executed.

The MPI standard uses the concept of process consistently throughout its documentation. However, we only consider situations where one processor is responsible for one process and therefore use the two terms interchangeably.

Bindings to MPI routines

MPI comprises a message-passing library where every routine has a corresponding

- C-binding: MPI_Command_name
- Fortran-binding (routine names are in uppercase): MPI_COMMAND_NAME

(We focus on the C-bindings in the lecture notes.)

Communicator

- A group of MPI processes with a name (context).
- Any process is identified by its rank. The rank is only meaningful within a particular communicator.
- By default, the communicator MPI_COMM_WORLD contains all the MPI processes.
- Mechanism to identify a subset of processes.
- Promotes modular design of parallel libraries.

The 6 most important MPI routines

- MPI_Init - initiate an MPI computation
- MPI_Finalize - terminate the MPI computation and clean up
- MPI_Comm_size - how many processes participate in a given MPI communicator?
- MPI_Comm_rank - which one am I? (A number between 0 and size-1.)
- MPI_Send - send a message to a particular process within an MPI communicator
- MPI_Recv - receive a message from a particular process within an MPI communicator

The first MPI program

Let every process write "Hello world" on the standard output.

    #include <stdio.h>
    #include <mpi.h>

    int main (int nargs, char** args)
    {
      int size, my_rank;
      MPI_Init (&nargs, &args);
      MPI_Comm_size (MPI_COMM_WORLD, &size);
      MPI_Comm_rank (MPI_COMM_WORLD, &my_rank);
      printf("Hello world, I've rank %d out of %d procs.\n", my_rank, size);
      MPI_Finalize ();
      return 0;
    }

The Fortran program

          program hello
          include "mpif.h"
          integer size, my_rank, ierr
    !
          call MPI_INIT(ierr)
          call MPI_COMM_SIZE(MPI_COMM_WORLD, size, ierr)
          call MPI_COMM_RANK(MPI_COMM_WORLD, my_rank, ierr)
    !
          print *,"Hello world, I've rank ",my_rank," out of ",size
    !
          call MPI_FINALIZE(ierr)
          end

Compilation by e.g.,

    > mpicc -c hello.c
    > mpicc -o hello hello.o

Execution

    > mpirun -np 4 ./hello

Example running result (non-deterministic)

    Hello world, I've rank 2 out of 4 procs.
    Hello world, I've rank 1 out of 4 procs.
    Hello world, I've rank 3 out of 4 procs.
    Hello world, I've rank 0 out of 4 procs.

Parallel execution

[Figure: the same "Hello world" program runs on Process 0, Process 1, ..., Process P-1.]

No inter-processor communication, no synchronization.

Synchronization

Many parallel algorithms require that no process proceeds before all the processes have reached the same state at certain points of a program.

Explicit synchronization

    int MPI_Barrier (MPI_Comm comm)

Implicit synchronization through use of e.g. pairs of MPI_Send and MPI_Recv.

Ask yourself the following question: “If Process 1 progresses 100 times faster than Process 2, will the final result still be correct?”

Example: ordered output

Explicit synchronization

    #include <stdio.h>
    #include <mpi.h>

    int main (int nargs, char** args)
    {
      int size, my_rank, i;
      MPI_Init (&nargs, &args);
      MPI_Comm_size (MPI_COMM_WORLD, &size);
      MPI_Comm_rank (MPI_COMM_WORLD, &my_rank);
      for (i=0; i<size; i++) {
        MPI_Barrier (MPI_COMM_WORLD);
        if (i==my_rank) {
          printf("Hello world, I've rank %d out of %d procs.\n", my_rank, size);
          fflush (stdout);
        }
      }
      MPI_Finalize ();
      return 0;
    }

[Figure: the same program runs on Process 0, Process 1, ..., Process P-1, with arrows marking the synchronization between the processes.]

The processes synchronize between themselves P times.

Parallel execution result:

    Hello world, I've rank 0 out of 4 procs.
    Hello world, I've rank 1 out of 4 procs.
    Hello world, I've rank 2 out of 4 procs.
    Hello world, I've rank 3 out of 4 procs.

Point-to-point communication

An MPI message is an array of elements of a particular MPI datatype.

- predefined standard types
- derived types

MPI message = “data inside an envelope”

- Data: start address of the message buffer, count of elements in the buffer, data type.
- Envelope: source/destination process, message tag, communicator.

To send a message

    int MPI_Send(void *buf, int count, MPI_Datatype datatype,
                 int dest, int tag, MPI_Comm comm);

This blocking send function returns when the data has been delivered to the system and the buffer can be reused. The message may not have been received by the destination process.

To receive a message

    int MPI_Recv(void *buf, int count, MPI_Datatype datatype,
                 int source, int tag, MPI_Comm comm, MPI_Status *status);

This blocking receive function waits until a matching message is received from the system, so that the buffer contains the incoming message. A message matches when the data type, the source process (or MPI_ANY_SOURCE) and the message tag (or MPI_ANY_TAG) agree. Receiving fewer datatype elements than count is ok, but receiving more is an error.

MPI_Status

The source or tag of a received message may not be known if wildcard values were used in the receive function. In C, MPI_Status is a structure that contains further information. It can be queried as follows:

    status.MPI_SOURCE
    status.MPI_TAG
    MPI_Get_count (MPI_Status *status, MPI_Datatype datatype, int *count);

Another solution to “ordered output”

Passing semaphore from process to process, in a ring

    #include <stdio.h>
    #include <mpi.h>

    int main (int nargs, char** args)
    {
      int size, my_rank, flag;
      MPI_Status status;
      MPI_Init (&nargs, &args);
      MPI_Comm_size (MPI_COMM_WORLD, &size);
      MPI_Comm_rank (MPI_COMM_WORLD, &my_rank);
      /* wait for the go-ahead from the previous process */
      if (my_rank>0)
        MPI_Recv (&flag, 1, MPI_INT, my_rank-1, 100, MPI_COMM_WORLD, &status);
      printf("Hello world, I've rank %d out of %d procs.\n",my_rank,size);
      /* pass the go-ahead on to the next process */
      if (my_rank<size-1)
        MPI_Send (&my_rank, 1, MPI_INT, my_rank+1, 100, MPI_COMM_WORLD);
      MPI_Finalize ();
      return 0;
    }

[Figure: the same program runs on Process 0, Process 1, ..., Process P-1, with arrows marking the message passed from each process to the next.]

Process 0 sends a message to Process 1, which forwards it further to Process 2, and so on.

MPI timer

    double MPI_Wtime(void)

This function returns the number of wall-clock seconds elapsed since some time in the past. Example usage:

    double starttime, endtime;
    starttime = MPI_Wtime();
    /* .... work to be timed ... */
    endtime = MPI_Wtime();
    printf("That took %f seconds\n",endtime-starttime);

Timing point-to-point communication (ping-pong)

    #include <stdio.h>
    #include <stdlib.h>
    #include "mpi.h"
    #define NUMBER_OF_TESTS 10

    int main( int argc, char **argv )
    {
      double *buf;
      int rank;
      int n;
      double t1, t2, tmin;
      int i, j, k, nloop;
      MPI_Status status;

      MPI_Init( &argc, &argv );
      MPI_Comm_rank( MPI_COMM_WORLD, &rank );
      if (rank == 0)
        printf( "Kind\t\tn\ttime (sec)\tRate (MB/sec)\n" );
      for (n=1; n<1100000; n*=2) {
        nloop = 1000;
        buf = (double *) malloc( n * sizeof(double) );
        if (!buf) {
          fprintf( stderr, "Could not allocate send/recv buffer of size %d\n", n );
          MPI_Abort( MPI_COMM_WORLD, 1 );
        }
        tmin = 1000;
        for (k=0; k<NUMBER_OF_TESTS; k++) {
          if (rank == 0) {
            /* Make sure both processes are ready */
            MPI_Sendrecv( MPI_BOTTOM, 0, MPI_INT, 1, 14,
                          MPI_BOTTOM, 0, MPI_INT, 1, 14,
                          MPI_COMM_WORLD, &status );
            t1 = MPI_Wtime();
            for (j=0; j<nloop; j++) {
              MPI_Send( buf, n, MPI_DOUBLE, 1, k, MPI_COMM_WORLD );
              MPI_Recv( buf, n, MPI_DOUBLE, 1, k, MPI_COMM_WORLD, &status );
            }
            t2 = (MPI_Wtime() - t1) / nloop;
            if (t2 < tmin) tmin = t2;
          }
          else if (rank == 1) {
            /* Make sure both processes are ready */
            MPI_Sendrecv( MPI_BOTTOM, 0, MPI_INT, 0, 14,
                          MPI_BOTTOM, 0, MPI_INT, 0, 14,
                          MPI_COMM_WORLD, &status );
            for (j=0; j<nloop; j++) {
              MPI_Recv( buf, n, MPI_DOUBLE, 0, k, MPI_COMM_WORLD, &status );
              MPI_Send( buf, n, MPI_DOUBLE, 0, k, MPI_COMM_WORLD );
            }
          }
        }
        /* Convert to half the round-trip time */
        tmin = tmin / 2.0;
        if (rank == 0) {
          double rate;
          if (tmin > 0) rate = n * sizeof(double) * 1.0e-6 /tmin;
          else          rate = 0.0;
          printf( "Send/Recv\t%d\t%f\t%f\n", n, tmin, rate );
        }
        free( buf );
      }
      MPI_Finalize( );
      return 0;
    }

Measurements of pingpong.c on a Linux cluster

    Kind            n        time (sec)   Rate (MB/sec)
    Send/Recv       1        0.000154     0.051985
    Send/Recv       2        0.000155     0.103559
    Send/Recv       4        0.000158     0.202938
    Send/Recv       8        0.000162     0.394915
    Send/Recv       16       0.000173     0.739092
    Send/Recv       32       0.000193     1.323439
    Send/Recv       64       0.000244     2.097787
    Send/Recv       128      0.000339     3.018741
    Send/Recv       256      0.000473     4.329810
    Send/Recv       512      0.000671     6.104322
    Send/Recv       1024     0.001056     7.757576
    Send/Recv       2048     0.001797     9.114882
    Send/Recv       4096     0.003232     10.137046
    Send/Recv       8192     0.006121     10.706747
    Send/Recv       16384    0.012293     10.662762
    Send/Recv       32768    0.024315     10.781164
    Send/Recv       65536    0.048755     10.753412
    Send/Recv       131072   0.097074     10.801766
    Send/Recv       262144   0.194003     10.809867
    Send/Recv       524288   0.386721     10.845800
    Send/Recv       1048576  0.771487     10.873298
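The measurements can be related to the cost model t_C(L) = τ + βL introduced earlier. The sketch below is a rough illustration only: it assumes that τ can be read off the smallest message (n = 1) and that 1/β equals the rate of the largest message, and it assumes 8-byte doubles. The names `TAU`, `RATE` and `predicted_time` are hypothetical.

```c
#include <assert.h>

/* Assumed fit of t_C(L) = tau + beta * L to the measurements:
   tau from the smallest message, 1/beta from the largest message. */
static const double TAU  = 0.000154;     /* latency in seconds          */
static const double RATE = 10.873298e6;  /* bandwidth in bytes/second   */

/* Predicted one-way time for a message of n doubles (8 bytes each assumed). */
double predicted_time(long n)
{
    assert(n >= 0);
    return TAU + (8.0 * (double)n) / RATE;
}
```

For short messages the latency term dominates, which matches the nearly constant times in the first rows of the measurements. For long messages the bandwidth term dominates and the time roughly doubles with n. The fit is crude for intermediate sizes: for n = 1024 this model predicts about 0.0009 seconds, whereas 0.001056 seconds was measured.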

Exercises for Day 1

Exercise One: Write a new “Hello World” program, where all the processes first generate a text message using sprintf and then send it to Process 0 (you may use strlen(message)+1 to find out the length of the message). Afterwards, Process 0 is responsible for writing out all the messages on the standard output.

Exercise Two: Modify the “ping-pong” program to involve more than 2 processes in the measurement. That is, Process 0 sends a message to Process 1, which forwards the message further to Process 2, and so on, until Process P-1 returns the message back to Process 0. Run the test for some different choices of P.

Exercise Three: Write a parallel program for calculating π, using the formula:

$$\pi = \int_0^1 \frac{4}{1+x^2}\,dx .$$

(Hint: use numerical integration and divide the interval [0, 1] into n intervals.)

How to use the PBS scheduling system?

- 1. mpicc program.c
- 2. qsub -o res.txt pbs.sh, where the script pbs.sh may be:

    #! /bin/sh
    #PBS -e stderr.txt
    #PBS -l walltime=0:05:00,ncpus=4
    cd $PBS_O_WORKDIR
    mpirun -np $NCPUS ./a.out
    exit $?

Basic MPI Programming

Example of send & receive: sum of random numbers

Write an MPI program in which each process generates a random number and then Process 0 calculates the sum of these numbers.

    #include <stdio.h>
    #include <stdlib.h>   /* srand, rand */
    #include <mpi.h>

    int main (int nargs, char** args)
    {
      int size, my_rank, i, a, sum;
      MPI_Init (&nargs, &args);
      MPI_Comm_size (MPI_COMM_WORLD, &size);
      MPI_Comm_rank (MPI_COMM_WORLD, &my_rank);
      srand (7654321*(my_rank+1));
      a = rand()%100;
      printf("<%02d> a=%d\n",my_rank,a); fflush (stdout);
      if (my_rank==0) {
        MPI_Status status;
        sum = a;
        /* receive one number from each of the other processes, in rank order */
        for (i=1; i<size; i++) {
          MPI_Recv (&a, 1, MPI_INT, i, 500, MPI_COMM_WORLD, &status);
          sum += a;
        }
        printf("<%02d> sum=%d\n",my_rank,sum);
      }
      else
        MPI_Send (&a, 1, MPI_INT, 0, 500, MPI_COMM_WORLD);
      MPI_Finalize ();
      return 0;
    }

Rules for point-to-point communication

Message order preservation: if Process A sends two messages to Process B, which posts two matching receive calls, then the two messages are guaranteed to be received in the order they were sent.

Progress: it is not possible for a matching send and receive pair to remain permanently outstanding. That is, if one process posts a send and a second process posts a matching receive, then either the send or the receive will eventually complete.

Probing in MPI

In MPI it is possible to read only the envelope of a message before choosing whether or not to read the actual message.

    int MPI_Probe(int source, int tag, MPI_Comm comm, MPI_Status *status)

The function blocks until a message with the given source and/or tag is available. The result of probing is returned in an MPI_Status data structure.

Sum of random numbers: Alternative 1

Use of MPI_Probe

    #include <stdio.h>
    #include <stdlib.h>   /* srand, rand */
    #include <mpi.h>

    int main (int nargs, char** args)
    {
      int size, my_rank, i, a, sum;
      MPI_Init (&nargs, &args);
      MPI_Comm_size (MPI_COMM_WORLD, &size);
      MPI_Comm_rank (MPI_COMM_WORLD, &my_rank);
      srand (7654321*(my_rank+1));
      a = rand()%100;
      printf("<%02d> a=%d\n",my_rank,a); fflush (stdout);
      if (my_rank==0) {
        MPI_Status status;
        sum = a;
        for (i=1; i<size; i++) {
          /* find out which message has arrived, then receive from that source */
          MPI_Probe (MPI_ANY_SOURCE,500,MPI_COMM_WORLD,&status);
          MPI_Recv (&a, 1, MPI_INT,
                    status.MPI_SOURCE,500,MPI_COMM_WORLD,&status);
          sum += a;
        }
        printf("<%02d> sum=%d\n",my_rank,sum);
      }
      else
        MPI_Send (&a, 1, MPI_INT, 0, 500, MPI_COMM_WORLD);
      MPI_Finalize ();
      return 0;
    }

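Collective communication

Communication carried out by all processes in a communicator.

[Figure: layout of the data items across the processes before and after each operation.]

- One-to-all broadcast (MPI_BCAST): the item A0 of one process is copied to all processes.
- One-to-all scatter (MPI_SCATTER): the items A0, A1, A2, A3 of one process are distributed, one item to each process.
- All-to-one gather (MPI_GATHER): the items A0, A1, A2, A3, one from each process, are collected on one process.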

Collective communication (Cont'd)

[Figure: four processes each hold two integers as initial data.]

- MPI_REDUCE with MPI_MIN, root = 0: process 0 receives the element-wise minima (0, 2).
- MPI_ALLREDUCE with MPI_MIN: every process receives the element-wise minima (0, 2).
- MPI_REDUCE with MPI_SUM, root = 1: process 1 receives the element-wise sums (13, 16).

Sum of random numbers: Alternative 2

Use of MPI_Gather

    #include <stdio.h>
    #include <stdlib.h>   /* srand, rand, malloc, free */
    #include <mpi.h>

    int main (int nargs, char** args)
    {
      int size, my_rank, i, a, sum, *array = NULL;
      MPI_Init (&nargs, &args);
      MPI_Comm_size (MPI_COMM_WORLD, &size);
      MPI_Comm_rank (MPI_COMM_WORLD, &my_rank);
      srand (7654321*(my_rank+1));
      a = rand()%100;
      printf("<%02d> a=%d\n",my_rank,a); fflush (stdout);
      /* only the root needs a receive buffer */
      if (my_rank==0)
        array = (int*) malloc(size*sizeof(int));
      MPI_Gather (&a, 1, MPI_INT, array, 1, MPI_INT, 0, MPI_COMM_WORLD);
      if (my_rank==0) {
        sum = a;
        for (i=1; i<size; i++)
          sum += array[i];
        printf("<%02d> sum=%d\n",my_rank,sum);
        free (array);
      }
      MPI_Finalize ();
      return 0;
    }

Sum of random numbers: Alternative 3

Use of MPI_Reduce (MPI_Allreduce)

    #include <stdio.h>
    #include <stdlib.h>   /* srand, rand */
    #include <mpi.h>

    int main (int nargs, char** args)
    {
      int my_rank, a, sum;
      MPI_Init (&nargs, &args);
      MPI_Comm_rank (MPI_COMM_WORLD, &my_rank);
      srand (7654321*(my_rank+1));
      a = rand()%100;
      printf("<%02d> a=%d\n",my_rank,a); fflush (stdout);
      MPI_Reduce (&a,&sum,1,MPI_INT,MPI_SUM,0,MPI_COMM_WORLD);
      if (my_rank==0)
        printf("<%02d> sum=%d\n",my_rank,sum);
      MPI_Finalize ();
      return 0;
    }

Example: inner-product

Write an MPI program that calculates the inner-product between two vectors $u = (u_1, u_2, \ldots, u_M)$ and $v = (v_1, v_2, \ldots, v_M)$, where

$$u_k = x_k, \quad v_k = x_k^2, \quad x_k = \frac{k}{M}, \quad 1 \le k \le M.$$

Partition $u$ and $v$ into $P$ segments (load balancing) with sub-length $m_i$:

$$m_i = \begin{cases} \lfloor M/P \rfloor + 1, & 0 \le i < \mathrm{mod}(M, P), \\ \lfloor M/P \rfloor, & \mathrm{mod}(M, P) \le i < P. \end{cases}$$

First find the local result. Then compute the global result.
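The partition rule can be checked in isolation before it is embedded in an MPI program. The following sketch uses hypothetical helper names and integer division for ⌊M/P⌋; it computes the length of segment i and the global 0-based index at which the segment starts.

```c
#include <assert.h>

/* Length m_i of segment i (0 <= i < P) when M entries are split into P
   segments: the first mod(M, P) segments get one extra entry. */
int segment_length(int M, int P, int i)
{
    assert(P > 0 && i >= 0 && i < P);
    return M / P + (i < M % P ? 1 : 0);
}

/* Global 0-based index of the first entry of segment i: all earlier
   segments contribute M/P entries, plus one extra for each of the
   first mod(M, P) segments that precede segment i. */
int segment_offset(int M, int P, int i)
{
    assert(P > 0 && i >= 0 && i < P);
    int res = M % P;
    return i * (M / P) + (i < res ? i : res);
}
```

For M = 10 and P = 4 the segment lengths are 3, 3, 2, 2 and the offsets are 0, 3, 6, 8, so the segments cover all 10 entries without overlap and differ in length by at most one.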

    #include <stdio.h>
    #include <stdlib.h>   /* atoi, malloc, free */
    #include <mpi.h>

    int main (int nargs, char** args)
    {
      int size, my_rank, i, m = 1000, m_i, lower_bound, res;
      double l_sum, g_sum, time, x, dx, *u, *v;
      MPI_Init (&nargs, &args);
      MPI_Comm_size (MPI_COMM_WORLD, &size);
      MPI_Comm_rank (MPI_COMM_WORLD, &my_rank);
      if (my_rank==0 && nargs>1)
        m = atoi(args[1]);
      MPI_Bcast (&m, 1, MPI_INT, 0, MPI_COMM_WORLD);
      dx = 1.0/m;
      time = MPI_Wtime();
      /* data partition & load balancing */
      m_i = m/size;
      res = m%size;
      lower_bound = my_rank*m_i;
      lower_bound += (my_rank<=res) ? my_rank : res;
      if (my_rank+1<=res)
        ++m_i;
      /* allocation of data storage */
      u = (double*) malloc (m_i*sizeof(double));
      v = (double*) malloc (m_i*sizeof(double));
      /* fill out the u- and v-vectors */
      x = lower_bound*dx;
      for (i=0; i<m_i; i++) {
        x += dx;
        u[i] = x;
        v[i] = x*x;
      }
      /* calculate the local result */
      l_sum = 0.;
      for (i=0; i<m_i; i++)
        l_sum += u[i]*v[i];
      MPI_Allreduce (&l_sum,&g_sum,1,MPI_DOUBLE,MPI_SUM,MPI_COMM_WORLD);
      time = MPI_Wtime()-time;
      /* output the global result */
      printf("<%d>m=%d, lower_bound=%d, m_i=%d, u*v=%g, time=%g\n",
             my_rank, m, lower_bound, m_i, g_sum*dx, time);
      free (u); free (v);
      MPI_Finalize ();
      return 0;
    }

Parallelizing explicit finite element schemes

Consider the following 1D wave equation:

$$\frac{\partial^2 u}{\partial t^2} = \gamma^2 \frac{\partial^2 u}{\partial x^2}, \quad x \in (0, 1),\ t > 0,$$

$$u(0, t) = U_L, \quad u(1, t) = U_R, \quad u(x, 0) = f(x), \quad \frac{\partial}{\partial t} u(x, 0) = 0 .$$

Discretization

Let $u_i^k$ denote the numerical approximation to $u(x, t)$ at the spatial grid point $x_i$ and the temporal grid point $t_k$, where $\Delta x = \frac{1}{n+1}$, $x_i = i\Delta x$ and $t_k = k\Delta t$. Defining $C = \gamma\Delta t/\Delta x$,

$$u_i^0 = f(x_i), \quad i = 0, \ldots, n+1,$$

$$u_i^{k+1} = 2u_i^k - u_i^{k-1} + C^2\left(u_{i+1}^k - 2u_i^k + u_{i-1}^k\right), \quad i = 1, \ldots, n,\ k \ge 0,$$

$$u_0^{k+1} = U_L, \quad k \ge 0,$$

$$u_{n+1}^{k+1} = U_R, \quad k \ge 0,$$

$$u_i^{-1} = u_i^0 + \frac{1}{2} C^2\left(u_{i+1}^0 - 2u_i^0 + u_{i-1}^0\right), \quad i = 1, \ldots, n.$$
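Before distributing the grid, it can help to see one time step of the scheme on a single process. The sketch below is plain C without MPI; the function name `wave_step` and its signature are assumptions made for illustration. The arrays hold n+2 values, with indices 0 and n+1 being the boundary points.

```c
/* Hypothetical serial helper (not part of the course code).
   Advances the explicit scheme by one time level:
     up[i] = 2*u[i] - um[i] + C^2 * (u[i+1] - 2*u[i] + u[i-1])
   for the interior points, then applies the Dirichlet values. */
void wave_step(int n, double C, double UL, double UR,
               const double *um, const double *u, double *up)
{
    int i;

    for (i = 1; i <= n; i++)
        up[i] = 2.0*u[i] - um[i] + C*C*(u[i+1] - 2.0*u[i] + u[i-1]);

    up[0]   = UL;   /* left boundary condition  */
    up[n+1] = UR;   /* right boundary condition */
}
```

A profile that is linear in x and identical at the two previous time levels is a steady state of the scheme, so one step should return it unchanged; this makes a convenient sanity check.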

Domain partition

Each subdomain has a number of computational points, plus 2 “ghost points” that are used to contain values from neighboring subdomains. It is only on those computational points that $u_i^{k+1}$ is updated based on $u_{i-1}^k$, $u_i^k$, $u_{i+1}^k$, and $u_i^{k-1}$. After local computation at each time level, values on the leftmost and rightmost computational points are sent to the left and right neighbors, respectively. Values from neighbors are received into the left and right ghost points.

    #include <stdio.h>
    #include <stdlib.h>   /* atoi, atof, malloc, free */
    #include <mpi.h>

    int main (int nargs, char** args)
    {
      int size, my_rank, i, n = 999, n_i;
      double h, x, t, dt, gamma = 1.0, C = 0.9, tstop = 1.0;
      double *up, *u, *um, *tmp, umax = 0.05, UL = 0., UR = 0., time;
      MPI_Status status;

      MPI_Init (&nargs, &args);
      MPI_Comm_size (MPI_COMM_WORLD, &size);
      MPI_Comm_rank (MPI_COMM_WORLD, &my_rank);

      if (my_rank==0 && nargs>1)   /* total number of points in (0,1) */
        n = atoi(args[1]);
      if (my_rank==0 && nargs>2)   /* read in Courant number */
        C = atof(args[2]);
      if (my_rank==0 && nargs>3)   /* length of simulation */
        tstop = atof(args[3]);

      MPI_Bcast (&n, 1, MPI_INT, 0, MPI_COMM_WORLD);
      MPI_Bcast (&C, 1, MPI_DOUBLE, 0, MPI_COMM_WORLD);
      MPI_Bcast (&tstop, 1, MPI_DOUBLE, 0, MPI_COMM_WORLD);
      h = 1.0/(n+1);   /* distance between two points */

      time = MPI_Wtime();

      /* find number of subdomain computational points,
         assume mod(n,size)=0 */
      n_i = n/size;

      up = (double*) malloc ((n_i+2)*sizeof(double));
      u  = (double*) malloc ((n_i+2)*sizeof(double));
      um = (double*) malloc ((n_i+2)*sizeof(double));

      /* set initial conditions */
      x = my_rank*n_i*h;
      for (i=0; i<=n_i+1; i++) {
        u[i] = (x<0.7) ? umax/0.7*x : umax/0.3*(1.0-x);
        x += h;
      }
      if (my_rank==0)
        u[0] = UL;
      if (my_rank==size-1)
        u[n_i+1] = UR;

      for (i=1; i<=n_i; i++)   /* artificial condition */
        um[i] = u[i]+0.5*C*C*(u[i+1]-2*u[i]+u[i-1]);

      dt = C/gamma*h;
      t = 0.;
      while (t < tstop) {   /* time stepping loop */
        t += dt;
        for (i=1; i<=n_i; i++)
          up[i] = 2*u[i]-um[i]+C*C*(u[i+1]-2*u[i]+u[i-1]);

        if (my_rank>0) {
          /* receive from left neighbor */
          MPI_Recv (&(up[0]),1,MPI_DOUBLE,my_rank-1,501,MPI_COMM_WORLD,&status);
          /* send to left neighbor */
          MPI_Send (&(up[1]),1,MPI_DOUBLE,my_rank-1,502,MPI_COMM_WORLD);
        }
        else
          up[0] = UL;

        if (my_rank<size-1) {
          /* send to right neighbor */
          MPI_Send (&(up[n_i]),1,MPI_DOUBLE,my_rank+1,501,MPI_COMM_WORLD);
          /* receive from right neighbor */
          MPI_Recv (&(up[n_i+1]),1,MPI_DOUBLE,my_rank+1,502,MPI_COMM_WORLD,&status);
        }
        else
          up[n_i+1] = UR;

        /* prepare for next time step */
        tmp = um;
        um = u;
        u = up;
        up = tmp;
      }

      time = MPI_Wtime()-time;
      printf("<%d> time=%g\n", my_rank, time);

      free (um);
      free (u);
      free (up);
      MPI_Finalize ();
      return 0;
    }

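Two-point boundary value problem

$$-u''(x) = f(x), \quad 0 < x < 1, \quad u(0) = u(1) = 0.$$

Uniform 1D grid: $x_0, x_1, \ldots, x_{n+1}$, $\Delta x = \frac{1}{n+1}$.

Finite difference discretization:

$$\frac{-u_{i-1} + 2u_i - u_{i+1}}{\Delta x^2} = f_i, \quad 1 \le i \le n.$$

$$\underbrace{\begin{pmatrix} 2 & -1 & & \\ -1 & 2 & -1 & \\ & \ddots & \ddots & \ddots \\ & & -1 & 2 \end{pmatrix}}_{A} \underbrace{\begin{pmatrix} u_1 \\ u_2 \\ \vdots \\ u_n \end{pmatrix}}_{u} = \underbrace{\Delta x^2 \begin{pmatrix} f_1 \\ f_2 \\ \vdots \\ f_n \end{pmatrix}}_{f}$$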

A simple iterative method for Au = f

Start with an initial guess $u^0$.

Jacobi iteration:

$$u_i^k = \left(\Delta x^2 f_i + u_{i-1}^{k-1} + u_{i+1}^{k-1}\right)/2.$$

Calculate the residual $r = f - Au^k$. Repeat Jacobi iterations until the norm of the residual is small enough.
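A serial version of one Jacobi sweep and of the residual check may make the parallel routines easier to follow. This is only a sketch in plain C; the names `jacobi_sweep` and `residual_sq` are assumptions made for illustration. As in the course programs, `rhs[i]` is assumed to already contain the factor Δx², and the arrays have n+2 entries with the boundary values stored at indices 0 and n+1.

```c
/* Hypothetical serial helpers (not part of the course code). */

/* One Jacobi sweep: x_new[i] = (rhs[i] + x_old[i-1] + x_old[i+1]) / 2. */
void jacobi_sweep(int n, const double *rhs,
                  const double *x_old, double *x_new)
{
    int i;

    for (i = 1; i <= n; i++)
        x_new[i] = 0.5*(rhs[i] + x_old[i-1] + x_old[i+1]);

    /* boundary values are carried over unchanged */
    x_new[0]   = x_old[0];
    x_new[n+1] = x_old[n+1];
}

/* Squared Euclidean norm of the residual r = rhs - A*x,
   where (A*x)[i] = -x[i-1] + 2*x[i] - x[i+1]. */
double residual_sq(int n, const double *rhs, const double *x)
{
    double sum = 0.0;
    int i;

    for (i = 1; i <= n; i++) {
        double r = rhs[i] - 2.0*x[i] + x[i-1] + x[i+1];
        sum += r*r;
    }
    return sum;
}
```

In a parallel setting each process would apply the same two loops to its own points and combine the local sums with a global reduction before taking the square root.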

Partition of work load

Divide the interior points $x_1, x_2, \ldots, x_n$ among the $P$ processors: $x_{i,1}, x_{i,2}, \ldots, x_{i,n_i}$. We need two “ghost” boundary nodes $x_{i,0}$ and $x_{i,n_i+1}$. Those two ghost boundary nodes need to be updated after each Jacobi iteration by receiving data from the neighbors.

The main program

    #include <stdio.h>
    #include <stdlib.h>   /* atoi, malloc, free */
    #include <math.h>
    #include <mpi.h>

    extern void jacobi_iteration (int my_rank, int size, int n_i,
                                  double* rhs, double* x_k1, double* x_k);
    extern void calc_residual (int my_rank, int size, int n_i,
                               double* rhs, double* x, double* res);
    extern double norm (int n_i, double* x);

    int main (int nargs, char** args)
    {
      int size, my_rank, i, j, n = 99999, n_i, lower_bound;
      double time, base, r_norm, x, dx, *rhs, *res, *x_k, *x_k1;

      MPI_Init (&nargs, &args);
      MPI_Comm_size (MPI_COMM_WORLD, &size);
      MPI_Comm_rank (MPI_COMM_WORLD, &my_rank);

      if (my_rank==0 && nargs>1)
        n = atoi(args[1]);
      MPI_Bcast (&n, 1, MPI_INT, 0, MPI_COMM_WORLD);
      dx = 1.0/(n+1);

      time = MPI_Wtime();

      /* data partition & load balancing */
      n_i = n/size;
      i = n%size;
      lower_bound = my_rank*n_i;
      lower_bound += (my_rank<=i) ? my_rank : i;
      if (my_rank+1<=i)
        ++n_i;

      /* allocation of data storage, plus 2 (ghost) boundary points */
      x_k  = (double*) malloc ((n_i+2)*sizeof(double));
      x_k1 = (double*) malloc ((n_i+2)*sizeof(double));
      rhs  = (double*) malloc ((n_i+2)*sizeof(double));
      res  = (double*) malloc ((n_i+2)*sizeof(double));

      /* fill the rhs and x_k vectors */
      x = lower_bound*dx;
      for (i=1; i<=n_i; i++) {
        x += dx;
        rhs[i] = dx*dx*M_PI*M_PI*sin(M_PI*x);
        x_k[i] = x_k1[i] = 0.;
      }
      x_k[0]=x_k[n_i+1]=x_k1[0]=x_k1[n_i+1]=0.;

      base = norm(n_i,rhs);
      r_norm = 1.0;
      i = 0;
      while (i<1000 && (r_norm/base)>1.0e-4) {
        ++i;
        jacobi_iteration(my_rank,size,n_i,rhs,x_k1,x_k);
        calc_residual(my_rank,size,n_i,rhs,x_k,res);
        r_norm = norm(n_i,res);
        for (j=0; j<n_i+2; j++)
          x_k1[j] = x_k[j];
      }

      time = MPI_Wtime()-time;
      printf("<%d> n+1=%d, iters=%d, time=%g\n",my_rank,n+1,i,time);

      free (x_k);
      free (x_k1);
      free (rhs);
      free (res);
      MPI_Finalize ();
      return 0;
    }

The routine for Jacobi iteration

    #include <mpi.h>

    void jacobi_iteration (int my_rank, int size, int n_i,
                           double* rhs, double* x_k1, double* x_k)
    {
      MPI_Status status;

      for (int i=1; i<=n_i; i++)
        x_k[i] = 0.5*(rhs[i]+x_k1[i-1]+x_k1[i+1]);

      if (my_rank>0) {
        /* receive from left neighbor */
        MPI_Recv (&(x_k[0]),1,MPI_DOUBLE,my_rank-1,501,MPI_COMM_WORLD,&status);
        /* send to left neighbor */
        MPI_Send (&(x_k[1]),1,MPI_DOUBLE,my_rank-1,502,MPI_COMM_WORLD);
      }

      if (my_rank<size-1) {
        /* send to right neighbor */
        MPI_Send (&(x_k[n_i]),1,MPI_DOUBLE,my_rank+1,501,MPI_COMM_WORLD);
        /* receive from right neighbor */
        MPI_Recv (&(x_k[n_i+1]),1,MPI_DOUBLE,my_rank+1,502,MPI_COMM_WORLD,&status);
      }
    }

The routine for calculating the residual

    #include <mpi.h>

    void calc_residual (int my_rank, int size, int n_i,
                        double* rhs, double* x, double* res)
    {
      /* no communication is necessary */
      int i;

      for (i=1; i<=n_i; i++)
        res[i] = rhs[i]-2.0*x[i]+x[i-1]+x[i+1];
    }

Note that no communication is necessary, because the latest solution vector is already correctly duplicated between neighboring processes after the Jacobi iteration.

The routine for calculating the norm of a vector

    #include <mpi.h>
    #include <math.h>

    double norm (int n_i, double* x)
    {
      double l_sum=0., g_sum;

      for (int i=1; i<=n_i; i++)
        l_sum += x[i]*x[i];

      MPI_Allreduce (&l_sum,&g_sum,1,MPI_DOUBLE,MPI_SUM,MPI_COMM_WORLD);
      return sqrt(g_sum);
    }

Source of deadlocks

Send a large message from one process to another:

- If there is insufficient storage at the destination, the send must wait for the user to provide the memory space (through a receive).

    Process 0    Process 1
    Send(1)      Send(0)
    Recv(1)      Recv(0)

Unsafe because it depends on the availability of system buffers.

Solutions to deadlocks

- Order the operations more carefully
- Use MPI_Sendrecv
- Use MPI_Bsend
- Use non-blocking operations

Performance may be improved on many systems by overlapping communication and computation. This is especially true on systems where communication can be executed autonomously by an intelligent communication controller. Use of non-blocking and completion routines allows computation and communication to be overlapped. (Not guaranteed, though.)

Non-blocking send and receive

Non-blocking send: returns “immediately”; after return, the message buffer should not be written to until the send has completed; must check for local completion.

    int MPI_Isend(void* buf, int count, MPI_Datatype datatype,
                  int dest, int tag, MPI_Comm comm, MPI_Request *request)

Non-blocking receive: returns “immediately”; after return, the message buffer should not be read from until the receive has completed; must check for local completion.

    int MPI_Irecv(void* buf, int count, MPI_Datatype datatype,
                  int source, int tag, MPI_Comm comm, MPI_Request *request)

The use of non-blocking receives may also avoid system buffering and memory-to-memory copying, as information is provided early on the location of the receive buffer.

MPI_Request

A request object identifies various properties of a communication operation. In addition, this object stores information about the status of the pending communication operation.

Local completion

Two basic ways of checking on non-blocking sends and receives:

- MPI_Wait blocks until the communication is complete.

    MPI_Wait(MPI_Request *request, MPI_Status *status)

- MPI_Test returns “immediately”, and sets flag to true if the communication is complete.

    MPI_Test(MPI_Request *request, int *flag, MPI_Status *status)

Using non-blocking send & receive

    #include <mpi.h>

    extern MPI_Request* req;
    extern MPI_Status* status;

    void jacobi_iteration_nb (int my_rank, int size, int n_i,
                              double* rhs, double* x_k1, double* x_k)
    {
      int i;

      /* calculate values on the end-points */
      x_k[1] = 0.5*(rhs[1]+x_k1[0]+x_k1[2]);
      x_k[n_i] = 0.5*(rhs[n_i]+x_k1[n_i-1]+x_k1[n_i+1]);

      if (my_rank>0) {
        /* receive from left neighbor */
        MPI_Irecv (&(x_k[0]),1,MPI_DOUBLE,
                   my_rank-1,501,MPI_COMM_WORLD,&req[0]);
        /* send to left neighbor */
        MPI_Isend (&(x_k[1]),1,MPI_DOUBLE,
                   my_rank-1,502,MPI_COMM_WORLD,&req[1]);
      }

      if (my_rank<size-1) {
        /* send to right neighbor */
        MPI_Isend (&(x_k[n_i]),1,MPI_DOUBLE,
                   my_rank+1,501,MPI_COMM_WORLD,&req[2]);
        /* receive from right neighbor */
        MPI_Irecv (&(x_k[n_i+1]),1,MPI_DOUBLE,
                   my_rank+1,502,MPI_COMM_WORLD,&req[3]);
      }

      /* calculate values on the inner-points */
      for (i=2; i<n_i; i++)
        x_k[i] = 0.5*(rhs[i]+x_k1[i-1]+x_k1[i+1]);

      if (my_rank>0) {
        MPI_Wait (&req[0],&status[0]);
        MPI_Wait (&req[1],&status[1]);
      }

      if (my_rank<size-1) {
        MPI_Wait (&req[2],&status[2]);
        MPI_Wait (&req[3],&status[3]);
      }
    }

Exercises for Day 2

Exercise One: Introduce non-blocking send/receive MPI functions into the “1D wave” program. Do you get any performance improvement? Also improve the program so that load balance is maintained for any choice of n. Can you think of other improvements?

Exercise Two: Data storage preparation for doing unstructured finite element computation in parallel.

When a non-overlapping partition of a finite element grid is carried out, i.e., each element belongs to only one subdomain, grid points lying on the internal boundaries will be shared between neighboring subdomains. Assume grid points in every subdomain remember their original global number, contained in files: global-ids.00, global-ids.01, and so on.

Write an MPI program that can

a) find out for each subdomain which subdomains are its neighbors,
b) find out how many points are shared between each pair of neighboring subdomains, and
c) calculate the Euclidean norm of a global vector that is distributed as sub-vectors contained in files: sub-vec.00, sub-vec.01 and so on.

The data files are contained in mpi-kurs.tar, which can be found under directory /site/vitsim/ on the machine pico.uio.no. The Euclidean norm should be 17.3594 if you have programmed everything correctly.

Advanced MPI Programming

More about point-to-point communication

When a standard mode blocking send call returns, the message data and envelope have been “safely stored away”. The message might be copied directly into the matching receive buffer, or it might be copied into a temporary system buffer.

MPI decides whether outgoing messages will be buffered. If MPI buffers outgoing messages, the send call may complete before a matching receive is invoked. On the other hand, buffer space may be unavailable, or MPI may choose not to buffer outgoing messages, for performance reasons. Then the send call will not complete until a matching receive has been posted, and the data has been moved to the receiver.

Four communication modes for sending messages

- standard mode: a send may be initiated even if a matching receive has not been initiated.
- buffered mode: similar to standard mode, but completion is always independent of a matching receive, and the message may be buffered to ensure this.
- synchronous mode: a send will not complete until a matching receive has been posted and has started to receive the message.
- ready mode: a send may be initiated only if a matching receive has already been posted.

All 4 modes have blocking and non-blocking versions for send.

The buffered mode (MPI_Bsend)

A buffered mode send operation can be started whether or not a matching receive has been posted. It may complete before a matching receive is posted. If a send is executed and no matching receive is posted, then MPI must buffer the outgoing message. An error will occur if there is insufficient buffer space.

The amount of available buffer space is controlled by the user. Buffer allocation by the user may be required for the buffered mode to be effective.

Buffer allocation for buffered send

A user specifies a buffer to be used for buffering messages sent in buffered mode.

To provide a buffer in the user’s memory to be used for buffering outgoing messages:

    int MPI_Buffer_attach( void* buffer, int size)

To detach the buffer currently associated with MPI:

    int MPI_Buffer_detach( void* buffer_addr, int* size)

The synchronous mode (MPI_Ssend)

A synchronous send can be started whether or not a matching receive was posted. However, the send will complete successfully only if a matching receive is posted, and the receive operation has started to receive the message.

The completion of a synchronous send not only indicates that the send buffer can be reused, but also indicates that the receiver has reached a certain point in its execution, namely that it has started executing the matching receive.

The ready mode (MPI_Rsend)

A send using the ready mode may be started only if the matching receive is already posted. Otherwise, the operation is erroneous and its outcome is undefined. The completion of the send operation does not depend on the status of a matching receive, and merely indicates that the send buffer can be reused. A send using the ready mode provides additional information to the system (namely that a matching receive is already posted), which can save some overhead.

In a correct program, a ready send could be replaced by a standard send with no effect on the behavior of the program other than performance.

Persistent communication requests

Often a communication with the same argument list is repeatedly executed. MPI can bind the list of communication arguments to a persistent communication request once and then repeatedly use the request to initiate and complete messages. This reduces the overhead of communication between the process and the communication controller.

A persistent communication request can, e.g., be created as follows (no communication yet):

    int MPI_Send_init(void* buf, int count, MPI_Datatype datatype,
                      int dest, int tag, MPI_Comm comm, MPI_Request *request)

Persistent communication requests (cont’d)

A communication that uses a persistent request is initiated by

    int MPI_Start(MPI_Request *request)

A communication started with a call to MPI_Start can be completed by a call to MPI_Wait or MPI_Test. The request becomes inactive after successful completion. The request is not deallocated and it can be activated anew by an MPI_Start call.

A persistent request is deallocated by a call to MPI_Request_free.

Send-receive operations

MPI send-receive operations combine in one call the sending of a message to one destination and the receiving of another message, from another process.

A send-receive operation is very useful for executing a shift operation across a chain of processes.

    int MPI_Sendrecv(void *sendbuf, int sendcount, MPI_Datatype sendtype,
                     int dest, int sendtag,
                     void *recvbuf, int recvcount, MPI_Datatype recvtype,
                     int source, int recvtag,
                     MPI_Comm comm, MPI_Status *status)

Null process

The special value MPI_PROC_NULL can be used to specify a “dummy” source or destination for communication. This may simplify the code that is needed for dealing with boundaries, e.g., a non-circular shift done with calls to send-receive.

A communication with process MPI_PROC_NULL has no effect.

    int left_rank = my_rank-1, right_rank = my_rank+1;
    if (my_rank==0)
      left_rank = MPI_PROC_NULL;
    if (my_rank==size-1)
      right_rank = MPI_PROC_NULL;

Derived datatypes

We have so far used communication involving only contiguous buffers containing elements of the same type. However, one often wants to pass messages that contain values with different datatypes (e.g., an integer count, followed by a sequence of real numbers). Also, one often wants to send noncontiguous data (e.g., a sub-block of a matrix).

Derived datatypes (cont’d)

More general communication buffers are specified by replacing the basic datatypes with derived datatypes that are constructed from basic datatypes.

A general datatype is an opaque object that specifies two things:

- a sequence of basic datatypes
- a sequence of integer (byte) displacements
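The pair "sequence of basic datatypes, sequence of byte displacements" can be made concrete for an ordinary C struct. The sketch below uses only the standard `offsetof` macro and does not call MPI; the descriptor type `member_map` and the function `describe_my_type` are assumptions made for illustration. It collects the block length and byte displacement of each member, which is the kind of information a struct datatype constructor is given.

```c
#include <stddef.h>   /* offsetof, size_t */

typedef struct {
    float a;
    float b;
    int   n;
} MY_TYPE;

/* Hypothetical descriptor: one entry per struct member. */
typedef struct {
    int    block_length;   /* number of consecutive elements  */
    size_t displacement;   /* byte offset from start of struct */
} member_map;

/* Fill in the layout of MY_TYPE: two floats followed by one int. */
void describe_my_type(member_map map[3])
{
    map[0].block_length = 1;
    map[0].displacement = offsetof(MY_TYPE, a);

    map[1].block_length = 1;
    map[1].displacement = offsetof(MY_TYPE, b);

    map[2].block_length = 1;
    map[2].displacement = offsetof(MY_TYPE, n);
}
```

The displacements depend on the compiler's padding rules, which is why they are computed rather than hard-coded.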

Datatype constructors

    int MPI_Type_contiguous(int count, MPI_Datatype oldtype,
                            MPI_Datatype *newtype)

    int MPI_Type_vector(int count, int blocklength, int stride,
                        MPI_Datatype oldtype, MPI_Datatype *newtype)

    int MPI_Type_hvector(int count, int blocklength, MPI_Aint stride,
                         MPI_Datatype oldtype, MPI_Datatype *newtype)

    int MPI_Type_indexed(int count, int *array_of_blocklengths,
                         int *array_of_displacements,
                         MPI_Datatype oldtype, MPI_Datatype *newtype)

    int MPI_Type_hindexed(int count, int *array_of_blocklengths,
                          MPI_Aint *array_of_displacements,
                          MPI_Datatype oldtype, MPI_Datatype *newtype)

    int MPI_Type_struct(int count, int *array_of_blocklengths,
                        MPI_Aint *array_of_displacements,
                        MPI_Datatype *array_of_types, MPI_Datatype *newtype)

One example of creating new datatypes in MPI

    #include <mpi.h>
    #include <stdio.h>

    typedef struct {
      float a;
      float b;
      int n;
    } MY_TYPE;

    int main (int nargs, char** args)
    {
      MY_TYPE indata;
      int my_rank;
      int block_lengths[3];
      MPI_Aint displacements[3], addresses[4];
      MPI_Datatype typelist[3], new_type;

      typelist[0] = typelist[1] = MPI_FLOAT;
      typelist[2] = MPI_INT;
      block_lengths[0] = block_lengths[1] = block_lengths[2] = 1;

      MPI_Init (&nargs, &args);
      MPI_Comm_rank (MPI_COMM_WORLD, &my_rank);

      indata.a = indata.b = 1.0*my_rank;
      indata.n = my_rank;

      /* calculate the displacements of the members relative to MY_TYPE */
      MPI_Address (&indata,&addresses[0]);
      MPI_Address (&(indata.a),&addresses[1]);
      MPI_Address (&(indata.b),&addresses[2]);
      MPI_Address (&(indata.n),&addresses[3]);
      displacements[0] = addresses[1]-addresses[0];
      displacements[1] = addresses[2]-addresses[0];
      displacements[2] = addresses[3]-addresses[0];

      /* create the derived type */
      MPI_Type_struct (3, block_lengths, displacements, typelist, &new_type);
      /* commit it so that it can be used */
      MPI_Type_commit (&new_type);

      if (my_rank==0) {
        MPI_Status status;
        MPI_Recv (&indata, 1, new_type, 1, 500, MPI_COMM_WORLD, &status);
        printf ("From Proc 1, a=%f, b=%f, n=%d\n",
                indata.a,indata.b,indata.n);
      }
      else if (my_rank==1)
        MPI_Send (&indata, 1, new_type, 0, 500, MPI_COMM_WORLD);

      MPI_Finalize ();
      return 0;
    }

Packing and unpacking

An alternative approach to grouping data. The user explicitly packs data into a contiguous buffer before sending it, and unpacks it from a contiguous buffer after receiving it.

    int MPI_Pack(void* inbuf, int incount, MPI_Datatype datatype,
                 void *outbuf, int outsize, int *position, MPI_Comm comm)

    int MPI_Unpack(void* inbuf, int insize, int *position,
                   void *outbuf, int outcount, MPI_Datatype datatype,
                   MPI_Comm comm)

An example of using MPI_Pack

    int myrank, position, i, j, a[2];
    char buff[1000];
    MPI_Status status;
    ....
    MPI_Comm_rank(MPI_COMM_WORLD, &myrank);
    if (myrank == 0) {
      /* SENDER CODE */
      position = 0;
      MPI_Pack(&i, 1, MPI_INT, buff, 1000, &position, MPI_COMM_WORLD);
      MPI_Pack(&j, 1, MPI_INT, buff, 1000, &position, MPI_COMM_WORLD);
      MPI_Send(buff, position, MPI_PACKED, 1, 0, MPI_COMM_WORLD);
    }
    else if (myrank == 1)
      /* RECEIVER CODE: only process 1 is sent a message */
      MPI_Recv(a, 2, MPI_INT, 0, 0, MPI_COMM_WORLD, &status);

Note that MPI_PACKED matches any other type.
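The role of the `position` argument can be illustrated without MPI. The following plain C sketch is an analogy only, not a reimplementation of MPI_Pack: the functions `pack_bytes` and `unpack_bytes` are hypothetical and simply copy raw bytes with `memcpy`. They show how a running position is advanced by each call, so that successive items land one after another in the buffer and are read back in the same order.

```c
#include <string.h>   /* memcpy, size_t */

/* Hypothetical stand-ins for the packing idea (raw byte copies only).
   Both return 0 on success and -1 if the buffer is too small. */

int pack_bytes(const void *in, int nbytes,
               void *outbuf, int outsize, int *position)
{
    if (*position + nbytes > outsize)
        return -1;
    memcpy((char*)outbuf + *position, in, (size_t)nbytes);
    *position += nbytes;   /* next item goes right after this one */
    return 0;
}

int unpack_bytes(const void *inbuf, int insize, int *position,
                 void *out, int nbytes)
{
    if (*position + nbytes > insize)
        return -1;
    memcpy(out, (const char*)inbuf + *position, (size_t)nbytes);
    *position += nbytes;   /* skip past the item just read */
    return 0;
}
```

Unlike this sketch, the real MPI routines take a datatype and a communicator, and the packed representation is chosen by the MPI implementation.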

When to pack/unpack, when to use derived datatypes?

If the data to be sent is already stored in consecutive entries of an array, use basic MPI datatypes.

If there are a large number of elements that are not in contiguous memory locations, then build a derived datatype (less overhead than pack/unpack).

If one is sending heterogeneous data only once or a few times, use MPI_Pack and MPI_Unpack. This may avoid system buffering.

Parallel libraries

Developing parallel libraries requires:

- safe communication space,
- group scope for collective operations, which allows libraries to avoid unnecessarily synchronizing uninvolved processes,
- abstract process naming to allow libraries to describe their communication in terms suitable to their own data structures and algorithms,
- the ability to “adorn” a set of communicating processes with additional user-defined attributes, such as extra collective operations.

Communicators revisited

The MPI communicators provide a unified mechanism for conveniently denoting the communication context and the group of communicating processes, for housing abstract process naming, and for storing adornments.

Communicators = appropriate scope for all communication operations in MPI. Communicators are divided into two kinds: intra-communicators for operations within a single group of processes, and inter-communicators for point-to-point communication between two groups of processes.

Communicators revisited (cont’d)

Groups define an ordered collection of processes, each with a rank used for sending and receiving. A group thus gives a scope for process names in point-to-point communication. In addition, groups define the scope of collective operations.

Contexts (a property of communicators) provide the ability to have separate “universes” of message passing in MPI. The use of separate communication contexts by distinct libraries (or distinct library invocations) insulates communication internal to the library execution from external communication.

Communicator constructors & destructors

    int MPI_Comm_dup(MPI_Comm comm, MPI_Comm *newcomm)

    int MPI_Comm_create(MPI_Comm comm, MPI_Group group, MPI_Comm *newcomm)

    int MPI_Comm_split(MPI_Comm comm, int color, int key, MPI_Comm *newcomm)

    int MPI_Comm_free(MPI_Comm *comm)

An example of constructing a new communicator

We construct a new communicator excluding only Process 0 in MPI_COMM_WORLD.

    #include <mpi.h>

    int main(int argc, char **argv)
    {
      int me, count, count2;
      void *send_buf, *recv_buf, *send_buf2, *recv_buf2;
      MPI_Group MPI_GROUP_WORLD, grprem;
      MPI_Comm commslave;
      static int ranks[] = {0};

      MPI_Init(&argc, &argv);
      MPI_Comm_group(MPI_COMM_WORLD, &MPI_GROUP_WORLD);
      MPI_Comm_rank(MPI_COMM_WORLD, &me);   /* local */

      MPI_Group_excl(MPI_GROUP_WORLD, 1, ranks, &grprem);   /* local */
      MPI_Comm_create(MPI_COMM_WORLD, grprem, &commslave);

      if (me != 0) {
        /* compute on slave */
        MPI_Reduce(send_buf, recv_buf, count, MPI_INT, MPI_SUM, 1, commslave);
      }
      /* zero falls through immediately to this reduce, others do later... */
      MPI_Reduce(send_buf2, recv_buf2, count2, MPI_INT, MPI_SUM, 0,
                 MPI_COMM_WORLD);

      /* commslave is MPI_COMM_NULL on process 0, so free it only elsewhere */
      if (me != 0)
        MPI_Comm_free(&commslave);
      MPI_Group_free(&MPI_GROUP_WORLD);
      MPI_Group_free(&grprem);
      MPI_Finalize();
      return 0;
    }

Process topology

MPI does not provide mechanisms to specify the initial allocation of processes to an MPI computation and their binding to physical processors.

A topology is an extra and optional attribute of a communicator. It gives a convenient naming mechanism for the processes of a group (within a communicator) and, additionally, may assist the runtime system in mapping the processes onto hardware.

Process topology (cont’d)

The communication pattern of a set of processes can be represented by a graph. The nodes stand for the processes, and the edges connect processes that communicate with each other. A “missing link” in the user-defined process graph does not forbid communication; it only means that this connection is neglected in the virtual topology.

A large fraction of all parallel applications use process topologies like rings, two- or higher-dimensional grids, or tori. These structures are completely defined by the number of dimensions and the numbers of processes in each coordinate direction. Also, the mapping of grids and tori is generally an easier problem than that of general graphs. Thus, it is desirable to address these cases explicitly.

Creating topologies

The functions MPI_Graph_create and MPI_Cart_create are used to create general (graph) virtual topologies and Cartesian topologies, respectively. These topology creation functions are collective.

The topology creation functions take as input an existing communicator comm_old, which defines the set of processes on which the topology is to be mapped. A new communicator comm_topol is created that carries the topological structure.

MPI_Cart_create can be used to describe Cartesian structures of arbitrary dimension. For each coordinate direction one specifies whether the process structure is periodic or not. The local auxiliary function MPI_Dims_create can be used to compute a balanced distribution of processes among a given number of dimensions.

For Cartesian topologies, an MPI_Sendrecv operation can be used along a coordinate direction to perform a shift of data. Function MPI_Cart_shift provides identifiers, which can be passed to MPI_Sendrecv.

    int MPI_Cart_shift(MPI_Comm comm, int direction, int disp,
                       int *rank_source, int *rank_dest)
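To see what kind of identifiers a shift produces, the one-dimensional case can be emulated in plain C. This is a sketch under stated assumptions, not the MPI routine: the function `shift_1d` and the constant `NO_PROCESS` (a stand-in for MPI_PROC_NULL, whose actual value is implementation-defined) are hypothetical names. For a shift by `disp` positions, data arrives from `rank - disp` and is sent to `rank + disp`; in a periodic direction the ranks wrap around, otherwise a neighbor outside the grid is reported as the null process.

```c
#define NO_PROCESS (-1)   /* hypothetical stand-in for MPI_PROC_NULL */

/* Emulates the source and destination ranks of a shift along a
   one-dimensional process grid with `size` processes. */
void shift_1d(int rank, int size, int disp, int periodic,
              int *source, int *dest)
{
    int s = rank - disp;   /* where incoming data comes from */
    int d = rank + disp;   /* where outgoing data is sent    */

    if (periodic) {
        /* wrap around; the extra "+ size" keeps the result non-negative */
        s = ((s % size) + size) % size;
        d = ((d % size) + size) % size;
    } else {
        if (s < 0 || s >= size) s = NO_PROCESS;
        if (d < 0 || d >= size) d = NO_PROCESS;
    }

    *source = s;
    *dest   = d;
}
```

With four processes and disp = 1, process 0 has no source in the non-periodic case but receives from process 3 in the periodic case.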

Partitioning Cartesian topologies

We can also partition a communicator with a Cartesian topology (from MPI_Cart_create) into subgroups that form lower-dimensional Cartesian subgrids, and build for each subgroup a communicator with the associated subgrid Cartesian topology.

    int MPI_Cart_sub(MPI_Comm comm, int *remain_dims, MPI_Comm *newcomm)

“Optimal” placement of processes

MPI can compute an “optimal” placement of processes on the physical machine.

    int MPI_Cart_map(MPI_Comm comm, int ndims, int *dims,
                     int *periods, int *newrank)

    int MPI_Graph_map(MPI_Comm comm, int nnodes, int *index,
                      int *edges, int *newrank)

Profiling

Every MPI routine has two entry points: MPI_... or PMPI_...

This enables a single level of low-overhead routines to intercept MPI calls without any source code modifications.

    static int totalBytes=0;
    static double totalTime=0;

    int MPI_Send(void * buffer, const int count, MPI_Datatype datatype,
                 int dest, int tag, MPI_Comm comm)
    {
      double tstart = MPI_Wtime();
      int extent;
      /* Pass on all the arguments */
      int result = PMPI_Send(buffer,count,datatype,dest,tag,comm);

      MPI_Type_size(datatype, &extent);    /* Compute size */
      totalBytes += count*extent;
      totalTime += MPI_Wtime() - tstart;   /* and time */
      return result;
    }

The MPE toolbox

    MPE_Init_log();
    if (myid == 0) {
      MPE_Describe_state(1, 2, "Broadcast", "red:vlines3");
      MPE_Describe_state(3, 4, "Compute", "blue:gray3");
      MPE_Describe_state(5, 6, "Reduce", "green:light_gray");
    }
    MPE_Start_log();

    MPE_Log_event(1, 0, "start broadcast");
    MPI_Bcast(&n, 1, MPI_INT, 0, MPI_COMM_WORLD);
    MPE_Log_event(2, 0, "end broadcast");

    MPE_Log_event(3, 0, "start compute");
    /* some computation */
    MPE_Log_event(4, 0, "end compute");

    MPE_Log_event(5, 0, "start reduce");
    MPI_Reduce(&mypi, &pi, 1, MPI_DOUBLE, MPI_SUM, 0, MPI_COMM_WORLD);
    MPE_Log_event(6, 0, "end reduce");

    MPE_Finish_log("cpilog");

Graphical MPE output

    > mpirun -np 4 ./a.out
    > clog2alog cpilog
    > upshot cpilog.alog

[Figure: upshot timeline with rows labelled 1, 2, 3, showing the Broadcast, Compute, Reduce and Sync states along a time axis from 0.015 to 0.192.]

Message passing programming

Keep in mind that:

Multiple processes, each with its own memory, co-exist. They need to work collaboratively to solve a common global problem.

The collaboration is done by synchronization and data exchange in the form of passing messages (with tags) between neighbors.

There are certain non-deterministic features of a parallel simulation:

- The order of incoming messages from neighbors may vary from execution to execution.
- Progress of one process may lag behind others.

Some tips on MPI programming

- Use predefined MPI reduction operations;
- Avoid MPI_Barrier if possible;
- Make use of the local disks of the different processors when saving a large amount of data to files;
- When computing a global maximum/minimum entity, remember to call MPI_Reduce or MPI_Allreduce after finding the local maximum/minimum value.

Exercises for Day 3

Exercise One: What is the output of the following program when executed on e.g. 6 CPUs?

    #include <mpi.h>
    #include <stdio.h>

    int main (int nargs, char** args)
    {
      int size0, rank0, size1, rank1, size2, rank2, i;
      MPI_Comm comm1, comm2;

      MPI_Init (&nargs, &args);
      MPI_Comm_size (MPI_COMM_WORLD, &size0);
      MPI_Comm_rank (MPI_COMM_WORLD, &rank0);

      i = rank0%2;
      MPI_Comm_split (MPI_COMM_WORLD, i, rank0, &comm1);
      MPI_Comm_size (comm1, &size1);
      MPI_Comm_rank (comm1, &rank1);

      i = rank0/2;
      MPI_Comm_split (MPI_COMM_WORLD, i, rank0, &comm2);
      MPI_Comm_size (comm2, &size2);
      MPI_Comm_rank (comm2, &rank2);

      printf("size0=%d, rank0=%d, size1=%d, rank1=%d, size2=%d, rank2=%d\n",
             size0, rank0, size1, rank1, size2, rank2);

      MPI_Finalize ();
      return 0;
    }

Exercise Two: Parallelize an existing simulator for the 2D wave equation. Use 2D Cartesian process topologies.

$$\frac{\partial^2 u}{\partial t^2} = \frac{\partial^2 u}{\partial x^2} + \frac{\partial^2 u}{\partial y^2} \quad \text{in } \Omega = [0, 1]^2,$$

$$u(x, y, 0) = u_0(x, y), \quad \frac{\partial}{\partial t} u(x, y, 0) = 0, \quad \frac{\partial u}{\partial n} \equiv \nabla u \cdot n = 0 \ \text{on } \partial\Omega.$$

    #include <stdlib.h>   /* atoi, malloc, free */
    #include <stdio.h>
    #include <math.h>

    /* the outer parentheses make c*LaplaceU(i,j) scale both terms */
    #define LaplaceU(i,j) \
      ((dt*dt/dx/dx)*(u[i+1][j]-2*u[i][j]+u[i-1][j]) \
      +(dt*dt/dy/dy)*(u[i][j+1]-2*u[i][j]+u[i][j-1]))

    /* routine for carrying out one time step
       computational points are [1,2...nx]x[1,2...ny]
       where i=1,i=nx,j=1,j=ny are boundaries fulfilling no-slip conditions. */
    void WAVE (const int nx, const int ny,
               double** up, double** u, double** um,
               double a, double b, double c,
               double dt, double dx, double dy)
    {
      int i,j;

      /* prepare values on ghost boundary nodes */
      for (j=1; j<=ny; j++) {
        u[0][j] = u[2][j];
        u[nx+1][j] = u[nx-1][j];
      }
      for (i=1; i<=nx; i++) {
        u[i][0] = u[i][2];
        u[i][ny+1] = u[i][ny-1];
      }

      /* update computational points according to finite difference scheme: */
      for (i=1; i<=nx; i++)
        for (j=1; j<=ny; j++)
          up[i][j] = a*2.*u[i][j]-b*um[i][j]+c*LaplaceU(i,j);
    }

    int main (int argc, const char* argv[])
    {
      int nx=101, ny=101, i, j;
      double dx, dy, t, dt, tstop = 1.0;
      double **u, **up, **um;

      if (argc>1) nx = atoi(argv[1]);
      if (argc>2) ny = atoi(argv[2]);
      dx = 1.0/(nx-1);
      dy = 1.0/(ny-1);
      dt = 1.0/sqrt(1.0/dx/dx+1.0/dy/dy);

      /* allocate data storage */
      u  = (double**)malloc((nx+2)*sizeof(double*));
      up = (double**)malloc((nx+2)*sizeof(double*));
      um = (double**)malloc((nx+2)*sizeof(double*));
      u[0]  = (double*)malloc((nx+2)*(ny+2)*sizeof(double));
      up[0] = (double*)malloc((nx+2)*(ny+2)*sizeof(double));
      um[0] = (double*)malloc((nx+2)*(ny+2)*sizeof(double));
      for (i=1; i<=nx+1; i++) {
        u[i]  = u[i-1] +(ny+2);
        up[i] = up[i-1]+(ny+2);
        um[i] = um[i-1]+(ny+2);
      }

      /* initial values at t=0 */
      for (i=1; i<=nx; i++)
        for (j=1; j<=ny; j++)
          u[i][j] = 0.25*cos(M_PI*(i-1)*dx);

      /* find the artificial um such that du/dt=0 at t=0 */
      WAVE (nx, ny, um, u, um, 0.5, 0, 0.5, dt, dx, dy);

      t = 0.;
      while (t < tstop) {
        double** tmp;
        t += dt;
        WAVE (nx, ny, up, u, um, 1, 1, 1, dt, dx, dy);
        tmp = um;
        um = u;
        u = up;
        up = tmp;
      }

      /* de-allocate data storage */
      free (u[0]);
      free (up[0]);
      free (um[0]);
      free (u);
      free (up);
      free (um);
      return 0;
    }

References

- Message Passing Interface Forum. MPI: A Message-Passing Interface Standard, 1995.
- William Gropp, Ewing Lusk and Anthony Skjellum. Using MPI: Portable Parallel Programming with the Message-Passing Interface, MIT Press, 1994.
- Peter S. Pacheco. Parallel Programming With MPI, Morgan Kaufmann, 1996.

Related MPI web pages

- http://www.mpi-forum.org/
- http://www-unix.mcs.anl.gov/mpi/
- http://www.mpi.nd.edu/mpi_tutorials/
- http://www.nas.nasa.gov/Groups/SciCon/Tutorials/MPIintro/toc.html
- http://www-unix.mcs.anl.gov/mpi/tutorial/snir/mpi/mpi.htm
- http://www.epm.ornl.gov/~walker/mpitutorial/
- http://www-unix.mcs.anl.gov/dbpp/