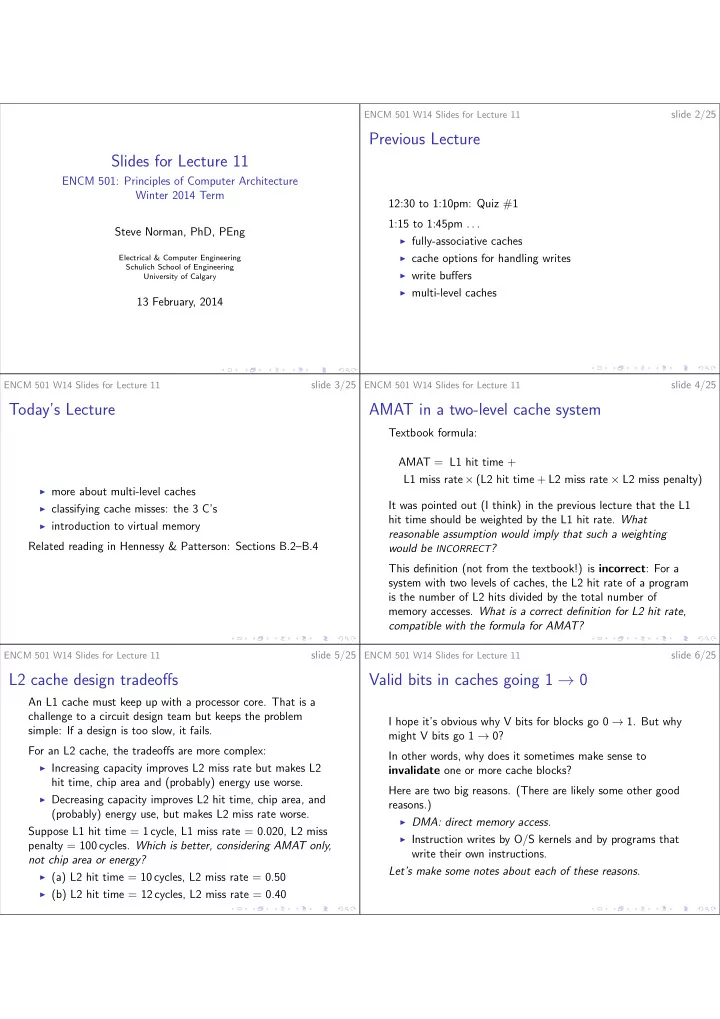

Slides for Lecture 11

ENCM 501: Principles of Computer Architecture
Winter 2014 Term
Steve Norman, PhD, PEng

Electrical & Computer Engineering
Schulich School of Engineering
University of Calgary

13 February, 2014

ENCM 501 W14 Slides for Lecture 11

Previous Lecture

12:30 to 1:10pm: Quiz #1

1:15 to 1:45pm:

◮ fully-associative caches
◮ cache options for handling writes
◮ write buffers
◮ multi-level caches

Today’s Lecture

◮ more about multi-level caches
◮ classifying cache misses: the 3 C’s
◮ introduction to virtual memory

Related reading in Hennessy & Patterson: Sections B.2–B.4

AMAT in a two-level cache system

Textbook formula:

AMAT = L1 hit time + L1 miss rate × (L2 hit time + L2 miss rate × L2 miss penalty)

It was pointed out (I think) in the previous lecture that the L1 hit time should be weighted by the L1 hit rate. What reasonable assumption would imply that such a weighting would be INCORRECT?

This definition (not from the textbook!) is incorrect: For a system with two levels of caches, the L2 hit rate of a program is the number of L2 hits divided by the total number of memory accesses.

What is a correct definition for L2 hit rate, compatible with the formula for AMAT?
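One way to test a definition against the formula is to count cycles directly. The Python sketch below is a minimal illustration, not from the textbook; the function names and the event counts are hypothetical. It assumes every access pays the L1 hit time even when it misses, with the L2 time added on top. Under that assumption, the count-based AMAT matches the textbook formula only when the L2 rate is measured per L2 access (L2 misses divided by L1 misses, which Hennessy & Patterson call the local miss rate). Dividing by all memory accesses (the global miss rate) gives a smaller, wrong AMAT.

```python
# Hypothetical helpers for illustration; names are not from the textbook.

def amat_from_counts(accesses, l1_misses, l2_misses,
                     l1_hit_time, l2_hit_time, l2_miss_penalty):
    """AMAT computed directly from event counts (times in cycles).

    Assumes every access pays the L1 hit time, every L1 miss additionally
    pays the L2 hit time, and every L2 miss additionally pays the L2 miss
    penalty.
    """
    total_cycles = (accesses * l1_hit_time
                    + l1_misses * l2_hit_time
                    + l2_misses * l2_miss_penalty)
    return total_cycles / accesses


def amat_two_level(l1_hit_time, l1_miss_rate,
                   l2_hit_time, l2_miss_rate, l2_miss_penalty):
    """Textbook formula. Note that the L1 hit time is not weighted."""
    return l1_hit_time + l1_miss_rate * (l2_hit_time
                                         + l2_miss_rate * l2_miss_penalty)


def l2_rates(accesses, l1_misses, l2_misses):
    """Return (local, global) L2 miss rates."""
    # Every L1 miss becomes an L2 access, so L1 misses = L2 accesses.
    local_rate = l2_misses / l1_misses
    # Dividing by ALL memory accesses gives the global rate instead.
    global_rate = l2_misses / accesses
    return local_rate, global_rate


if __name__ == "__main__":
    # Made-up counts, for illustration only.
    accesses, l1_misses, l2_misses = 10_000, 200, 100
    local_rate, global_rate = l2_rates(accesses, l1_misses, l2_misses)
    m1 = l1_misses / accesses
    print(amat_from_counts(accesses, l1_misses, l2_misses, 1, 10, 100))
    # Same value as the line above, up to floating-point rounding:
    print(amat_two_level(1, m1, 10, local_rate, 100))
    # Smaller value: using the global rate underestimates AMAT.
    print(amat_two_level(1, m1, 10, global_rate, 100))
```

The L2 hit rate that fits the formula is then 1 minus the local miss rate, that is, L2 hits divided by L2 accesses.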

L2 cache design tradeoffs

An L1 cache must keep up with a processor core. That is a challenge to a circuit design team but keeps the problem simple: If a design is too slow, it fails. For an L2 cache, the tradeoffs are more complex:

◮ Increasing capacity improves L2 miss rate but makes L2 hit time, chip area, and (probably) energy use worse.
◮ Decreasing capacity improves L2 hit time, chip area, and (probably) energy use, but makes L2 miss rate worse.

Suppose L1 hit time = 1 cycle, L1 miss rate = 0.020, and L2 miss penalty = 100 cycles. Which is better, considering AMAT only, not chip area or energy?

◮ (a) L2 hit time = 10 cycles, L2 miss rate = 0.50
◮ (b) L2 hit time = 12 cycles, L2 miss rate = 0.40
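A quick numerical check of the two options, as a minimal Python sketch. It assumes the L2 miss rates given are local miss rates (misses per L2 access), which is what the AMAT formula expects; the function and variable names are hypothetical.

```python
def amat_two_level(l1_hit_time, l1_miss_rate,
                   l2_hit_time, l2_miss_rate, l2_miss_penalty):
    # Textbook formula; l2_miss_rate is assumed to be the local L2 miss rate.
    return l1_hit_time + l1_miss_rate * (l2_hit_time
                                         + l2_miss_rate * l2_miss_penalty)


# Parameters given on the slide.
L1_HIT_TIME = 1        # cycles
L1_MISS_RATE = 0.020
L2_MISS_PENALTY = 100  # cycles

# Candidate L2 designs: (L2 hit time in cycles, L2 miss rate).
options = {"a": (10, 0.50), "b": (12, 0.40)}

results = {
    name: amat_two_level(L1_HIT_TIME, L1_MISS_RATE, hit, miss, L2_MISS_PENALTY)
    for name, (hit, miss) in options.items()
}
best = min(results, key=results.get)  # option with the lowest AMAT

for name, amat in results.items():
    print(f"({name}) AMAT = {amat:.2f} cycles")
print(f"Lower AMAT: ({best})")
```

By hand: (a) gives 1 + 0.020 × (10 + 0.50 × 100) = 1 + 0.020 × 60 = 2.2 cycles, and (b) gives 1 + 0.020 × (12 + 0.40 × 100) = 1 + 0.020 × 52 = 2.04 cycles. So (b) has the lower AMAT, even though its L2 hit time is longer.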

Valid bits in caches going 1 → 0

I hope it’s obvious why V bits for blocks go 0 → 1. But why might V bits go 1 → 0? In other words, why does it sometimes make sense to invalidate one or more cache blocks? Here are two big reasons. (There are likely some other good reasons.)

◮ DMA: direct memory access.
◮ Instruction writes by O/S kernels and by programs that