SLIDE 2 ENCM 501 W14 Slides for Lecture 25

slide 7/17

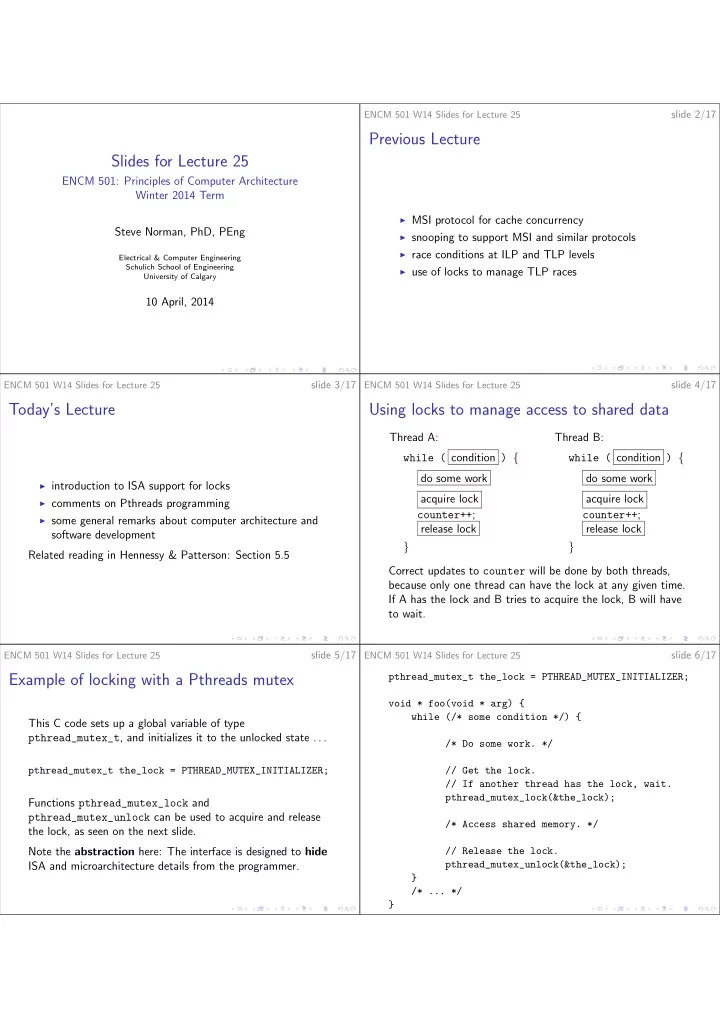

How NOT to set up a lock

int my_lock = 0; // 0: unlocked; 1: locked. void get_lock(void) { // Keep trying until my_lock == 0. while (my_lock != 0) ; // Aha! The lock is available! my_lock = 1; }

Why is this approach totally useless?

ENCM 501 W14 Slides for Lecture 25

slide 8/17

ISA and microarchitecture support for concurrency

Instruction sets have to provide special atomic instructions to allow software implementation of synchronization facilities such as mutexes (locks) and semaphores. An atomic RMW (read-modify-write) instruction (or a sequence of instructions that is intended to provide the same kind of behaviour, such as MIPS LL/SC) typically works like this:

◮ memory read is attempted at some location; ◮ some kind of write data is generated; ◮ memory write to the same location is attempted.

ENCM 501 W14 Slides for Lecture 25

slide 9/17

The key aspects of atomic RMW instructions are:

◮ the whole operation succeeds or the whole operation fails,

in a clean way that can be checked after the attempt was made;

◮ if two or more threads attempt the operation, such that

the attempts overlap in time, one thread will succeed, and all the other threads will fail.

ENCM 501 W14 Slides for Lecture 25

slide 10/17

MIPS LL and SC instructions

LL (load linked:) This is like a normal LW instruction, but it also gets the processor ready for upcoming SC instruction. SC (store conditional:) The assembler syntax is SC GPR1 ,

If SC succeeds, it works like SW, but also writes 1 into GPR1 . If SC fails, there is no memory write, and GPR1 gets a value

The hardware ensures that if two or more threads attempt LL/SC sequences that overlap in time, SC will succeed in

ENCM 501 W14 Slides for Lecture 25

slide 11/17

Use of LL and SC to lock a mutex

Suppose R9 points to a memory word used to hold the state of a mutex: 0 for unlocked, 1 for locked. Here is code for MIPS, with delayed branch instructions. L1: LL R8, (R9) BNE R8, R0, L1 ORI R8, R0, 1 SC R8, (R9) BEQ R8, R0, L1 NOP Let’s add some comments to explain how this works. What would the code be to unlock the mutex?

ENCM 501 W14 Slides for Lecture 25

slide 12/17

Spinlocks

The example on the last slide demonstrates spinning in a loop to acquire a lock. Suppose Thread A is spinning, waiting to acquire a lock. Then Thread A is occupying a core, using energy, and not really doing any work. That’s fine if the lock will soon be released. However, if the lock may be held for a long time, a more sophisticated algorithm is better:

◮ Thread spins, but gives up after some fixed number of

iterations.

◮ Thread makes system call to OS kernel, asking to sleep

until the lock is available.