CS 4100: Artificial Intelligence Hidden Markov Models

Jan-Willem van de Meent, Northeastern University

[These slides were created by Dan Klein and Pieter Abbeel for CS188 Intro to AI at UC Berkeley. All CS188 materials are available at http://ai.berkeley.edu.]

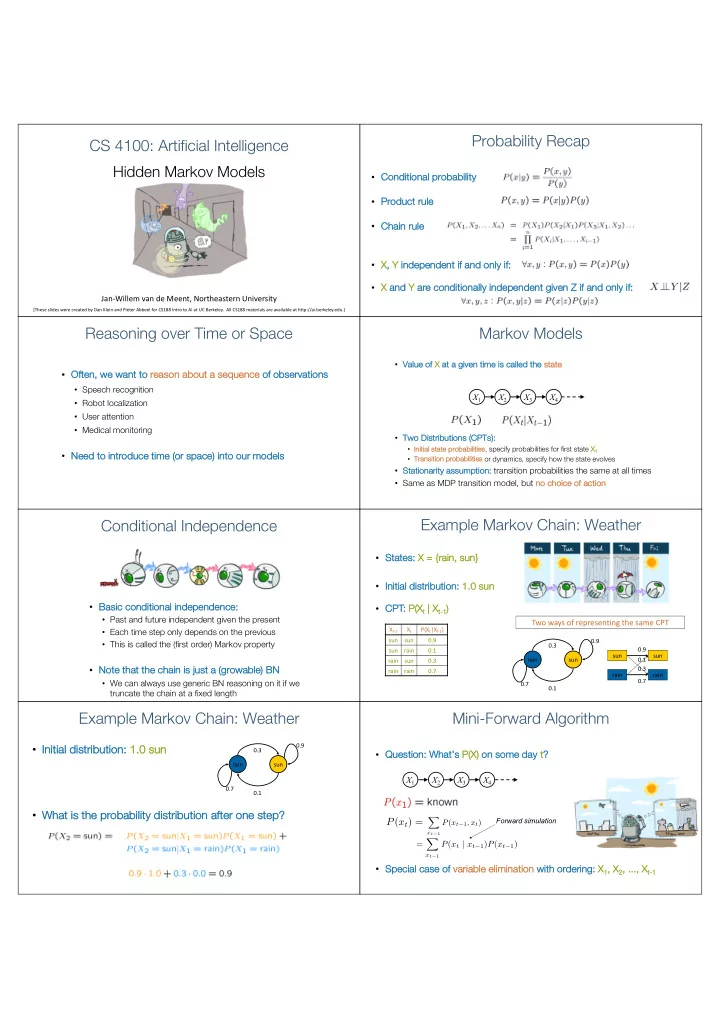

Probability Recap

- Co

Conditional probability

- Pr

Product rule

- Ch

Chain rule

- X,

, Y in independent if if and only ly if if:

- X an

and Y ar are co e conditional ally i indep epen enden ent g given en Z Z i if an and o

- nly i

if:

Reasoning over Time or Space

- Oft

Often, we we wa want to re reason

- n abou

bout a sequ quence of

- f obs

- bserv

rvation

- ns

- Speech recognition

- Robot localization

- User attention

- Medical monitoring

- Ne

Need to introduce time me (or sp space) into our mo models

Markov Models

- Va

Value of X at at a a given en time e is cal called ed the e st state

- Tw

Two

- Distr

tributi tion

- ns (CPTs

Ts):

- In

Initial s state p probabilities, specify probabilities for first state X1

- Tra

ransiti tion pr proba babi bilities or dynamics, specify how the state evolves

- St

Stationarity as assumption: transition probabilities the same at all times

- Same as MDP transition model, but no

no cho hoice of action X2 X1 X3 X4

Conditional Independence

- Ba

Basic ic condit itio ional l in independence:

- Past and future independent given the present

- Each time step only depends on the previous

- This is called the (first order) Markov property

- No

Note that the chain is s just st a (grow growabl ble) ) BN

- We can always use generic BN reasoning on it if we

truncate the chain at a fixed length

Example Markov Chain: Weather

- St

States: X X = {ra {rain, sun}

- In

Initial di distri ribu bution

- n: 1.

1.0 0 sun

rain sun 0.9 0.7 0.3 0.1

Two ways of representing the same CPT

sun rain sun rain 0.1 0.9 0.7 0.3 Xt-1 Xt P(Xt|Xt-1) sun sun 0.9 sun rain 0.1 rain sun 0.3 rain rain 0.7

- CP

CPT: P( P(Xt | | Xt-1)

Example Markov Chain: Weather

- In

Initi tial al di distr tribu buti tion: 1. 1.0 0 sun

- Wh

What i is t s the p probability d dist stribution a after o

- ne st

step?

rain sun 0.9 0.7 0.3 0.1

Mini-Forward Algorithm

- Qu

Question: What’s s P( P(X) on

- n some

- me da

day t?

- Sp

Special case of f va variable elimination wi with ordering: X1, X , X2, ..., X , ..., Xt-1

Forward simulation

X2 X1 X3 X4

P(xt) =

X

xt−1

P(xt−1, xt) = X

xt−1