Review

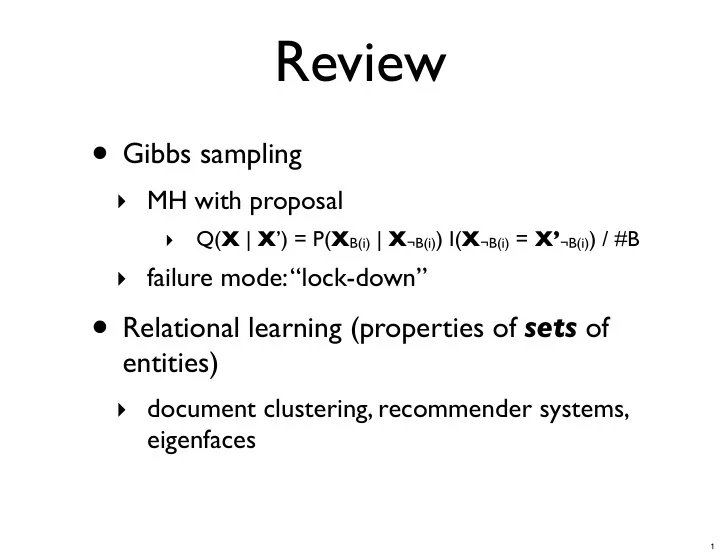

- Gibbs sampling

- MH with proposal $Q(X \mid X') = P(X_{B(i)} \mid X_{\neg B(i)}) \, I(X_{\neg B(i)} = X'_{\neg B(i)}) / \#B$

- failure mode: “lock-down”

- Relational learning (properties of sets of entities)

- document clustering, recommender systems, eigenfaces
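The Gibbs-as-MH proposal in the review list can be illustrated with a small sketch. It assumes two discrete variables, single-variable blocks chosen uniformly (the 1/#B factor), and a hypothetical joint table `joint`; none of these specifics come from the slides. It also assumes that "lock-down" refers to the chain getting stuck when the variables are so strongly coupled that single-block updates almost never leave the current mode.

```python
import numpy as np

def gibbs_random_scan(joint, n_steps, rng, x0=(0, 0)):
    """Random-scan Gibbs sampling on a joint table over two discrete variables.

    joint[a, b] is proportional to P(X0=a, X1=b). Each step picks one block
    (here a single variable) uniformly, i.e. with probability 1/#B, and
    resamples it from its conditional given the other variable.
    """
    x = list(x0)
    samples = np.empty((n_steps, 2), dtype=int)
    for t in range(n_steps):
        i = rng.integers(2)  # choose block B(i) uniformly
        # Conditional P(X_i | X_other): slice the joint table and normalize.
        cond = joint[:, x[1]] if i == 0 else joint[x[0], :]
        cond = cond / cond.sum()
        x[i] = rng.choice(len(cond), p=cond)
        samples[t] = x
    return samples

rng = np.random.default_rng(0)

# Weakly coupled target (illustrative values): the chain moves freely.
mixing = np.array([[0.3, 0.2], [0.2, 0.3]])
# Nearly deterministic coupling (illustrative values): almost all mass sits on
# (0, 0) and (1, 1), and moving between them means passing through a state
# with probability about 1e-6.
locked = np.array([[0.5, 1e-6], [1e-6, 0.5]])

s_mix = gibbs_random_scan(mixing, 5000, rng)
s_lock = gibbs_random_scan(locked, 5000, rng)

# Count how often the first variable changes value between consecutive samples.
flips_mix = int(np.sum(s_mix[1:, 0] != s_mix[:-1, 0]))
flips_lock = int(np.sum(s_lock[1:, 0] != s_lock[:-1, 0]))
print("flips (weak coupling):", flips_mix)
print("flips (strong coupling):", flips_lock)
```

Because each proposal draws the chosen block exactly from its conditional, the MH acceptance ratio is 1 and no explicit accept/reject step is needed. Under strong coupling the flip count collapses, which is the stuck behaviour the sketch is meant to show.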


if a random surfer is likely to land there
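The random-surfer idea can be sketched as a power iteration, assuming it refers to a PageRank-style model. The damping factor 0.85, the function name `pagerank`, and the four-node link structure are illustrative assumptions, not taken from the slides.

```python
import numpy as np

def pagerank(adj, damping=0.85, tol=1e-10, max_iter=1000):
    """Long-run visit probabilities of a random surfer with teleportation.

    adj[i, j] = 1 if there is a link from node i to node j.
    """
    n = adj.shape[0]
    out_deg = adj.sum(axis=1, keepdims=True)
    # Row-stochastic transition matrix; nodes without out-links jump uniformly.
    P = np.where(out_deg > 0, adj / np.maximum(out_deg, 1), 1.0 / n)
    r = np.full(n, 1.0 / n)
    for _ in range(max_iter):
        # Follow a link with probability `damping`, otherwise teleport uniformly.
        r_next = damping * (r @ P) + (1 - damping) / n
        if np.abs(r_next - r).sum() < tol:
            return r_next
        r = r_next
    return r

# Hypothetical links among nodes A, B, C, D (indices 0..3):
# A->B, A->C, B->C, C->A, D->C.
adj = np.array([
    [0, 1, 1, 0],
    [0, 0, 1, 0],
    [1, 0, 0, 0],
    [0, 0, 1, 0],
], dtype=float)

rank = pagerank(adj)
for name, score in zip("ABCD", rank):
    print(name, round(float(score), 4))
```

In this toy graph node C receives links from every other node, so the surfer lands there most often; node D has no incoming links and is reached only by teleportation.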


[Figure: nodes A, B, C, D; axis ticks from 0.1 to 0.5]


the same places when starting from A or B
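One way to make "the same places when starting from A or B" concrete is to compare the distributions of t-step random walks started at each node. The sketch below assumes that reading; the six-node graph, the L1 distance, and the helper name `walk_distributions` are hypothetical choices for illustration.

```python
import numpy as np

def walk_distributions(adj, t):
    """Row i holds the distribution over end nodes of a t-step walk from node i."""
    P = adj / adj.sum(axis=1, keepdims=True)  # row-stochastic transition matrix
    return np.linalg.matrix_power(P, t)

# Hypothetical undirected graph: triangles {0, 1, 2} and {3, 4, 5},
# joined by the single edge 2-3.
adj = np.zeros((6, 6))
for i, j in [(0, 1), (0, 2), (1, 2), (3, 4), (3, 5), (4, 5), (2, 3)]:
    adj[i, j] = adj[j, i] = 1.0

results = {}
for t in (1, 3, 5, 10):
    D = walk_distributions(adj, t)
    same_cluster = float(np.abs(D[0] - D[1]).sum())  # nodes 0 and 1 share a triangle
    diff_cluster = float(np.abs(D[0] - D[4]).sum())  # nodes 0 and 4 do not
    results[t] = (same_cluster, diff_cluster)
    print(t, round(same_cluster, 4), round(diff_cluster, 4))
```

Walks started from two nodes in the same triangle end up in nearly the same places after a few steps, while walks started in different triangles stay distinguishable for much longer because of the single connecting edge.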


[Figure: panels at t=1, t=3, t=5, t=10; axis ticks from 2 to 10 and from 0.2 to 1]


(Lusseau et al., 2003)


model


more and more Xij


(normal equations)

Wishart) distribution


[Figure: matrix Y of fMRI brain activity, with axes Stimulus and Voxels]


[Figure: mean squared error (lower is better) on Y (fMRI data), fold-in, for HBCMF, HCMF, and CMF. Labels: maximum a posteriori (fixed hyperparameters); just using fMRI data; augmenting fMRI data with word co-occurrence.]
