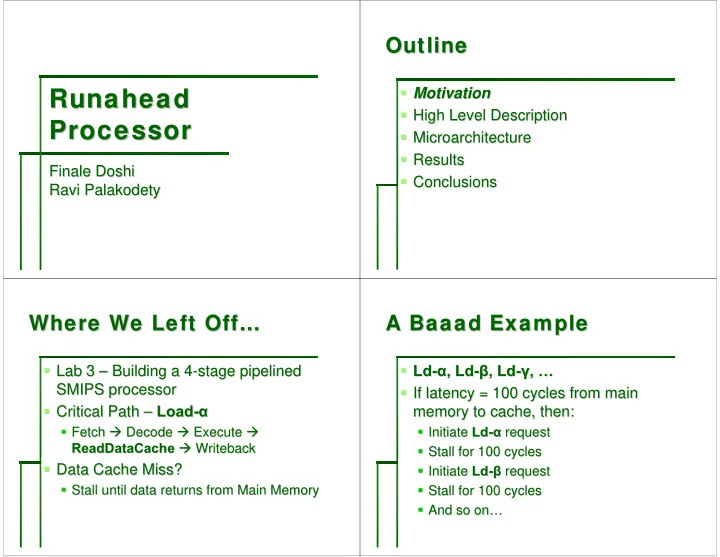

SLIDE 1

Runahead Processor

Finale Doshi, Ravi Palakodety

Outline

- Motivation
- High Level Description
- Microarchitecture
- Results
- Conclusions

Where We Left Off…

- Lab 3: Building a 4-stage pipelined SMIPS processor
- Critical Path: Load-α
  - Fetch, Decode, Execute, ReadDataCache, Writeback
- Data Cache Miss?
  - Stall until data returns from Main Memory

A Baaad Example

- Ld-α, Ld-β, Ld-γ, …
- If latency = 100 cycles from main memory to cache, then:
  - Initiate Ld-α request
  - Stall for 100 cycles
  - Initiate Ld-β request
  - Stall for 100 cycles
  - And so on
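
The arithmetic behind this example can be sketched in a few lines of Python. This is only an illustrative model, not the lab's processor: it assumes every load misses in the data cache and that the pipeline stalls completely until each miss returns. The function name `blocking_stall_cycles` and the constant `MISS_LATENCY` are hypothetical names introduced here.

```python
MISS_LATENCY = 100  # cycles from main memory to cache, as in the example


def blocking_stall_cycles(loads, miss_latency=MISS_LATENCY):
    """Total stall cycles when each missing load blocks the pipeline in turn.

    Assumption: every load misses, and no request is initiated until the
    previous one has returned from main memory.
    """
    total = 0
    timeline = []
    for name in loads:
        start = total            # request goes out only after the prior stall ends
        total += miss_latency    # pipeline stalls for the full miss latency
        timeline.append((name, start, total))
    return total, timeline


total, timeline = blocking_stall_cycles(["Ld-α", "Ld-β", "Ld-γ"])
for name, start, end in timeline:
    print(f"{name}: request at stall cycle {start}, data back at {end}")
print("total stall cycles:", total)
```

Under these assumptions the stall time grows linearly with the number of missing loads: three back-to-back misses cost 300 stall cycles, since no request starts before the previous one completes.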