SLIDE 5 25

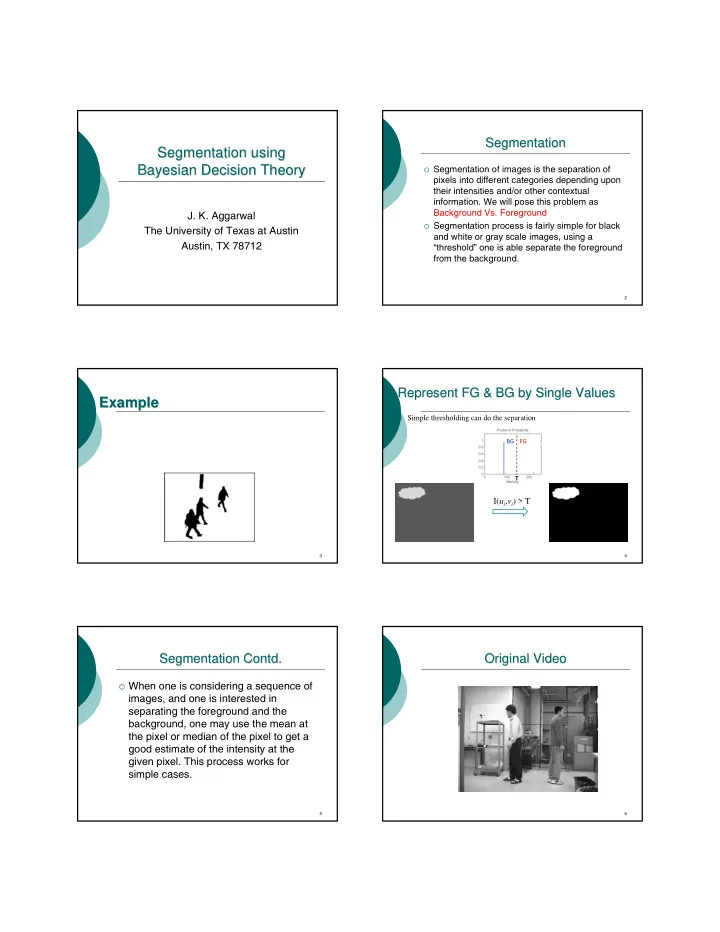

Represent FG & BG by Single Distributions

FG and BG are each generated from a single 1D normal distribution.

We can estimate the parameters (μi, σi) of each distribution from the training sequences.
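As a minimal sketch of the estimation step, the snippet below fits one 1D normal to each class from labeled training intensities. The sample values and the helper names `fit_gaussian` and `gaussian_pdf` are illustrative assumptions, not part of the slides.

```python
import math
import statistics

def fit_gaussian(samples):
    """Return (mu, sigma) of a 1D normal fitted to the samples."""
    mu = statistics.fmean(samples)
    # Population standard deviation, i.e. the maximum-likelihood estimate.
    sigma = statistics.pstdev(samples, mu)
    return mu, sigma

def gaussian_pdf(x, mu, sigma):
    """Density of N(mu, sigma^2) evaluated at x."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (math.sqrt(2.0 * math.pi) * sigma)

# Hypothetical labeled training intensities (illustrative values only).
bg_samples = [48, 52, 50, 47, 53, 50]
fg_samples = [198, 205, 200, 195, 202, 200]

mu_bg, sigma_bg = fit_gaussian(bg_samples)
mu_fg, sigma_fg = fit_gaussian(fg_samples)
```

With these parameters, `gaussian_pdf(x, mu_bg, sigma_bg)` and `gaussian_pdf(x, mu_fg, sigma_fg)` play the roles of p(I(u,v)|BG) and p(I(u,v)|FG).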

[Figure: the class-conditional densities p(I(u,v)|BG) and p(I(u,v)|FG)]

Represent FG & BG by Single Distributions

Assume the prior probabilities are equal

$$
\frac{p(I(u_i,v_j)\mid FG)\,P(FG)}{p(I(u_i,v_j))} > \frac{p(I(u_i,v_j)\mid BG)\,P(BG)}{p(I(u_i,v_j))}
$$

[Figure: BG and FG densities over the intensity I(u_i, v_j), separated by the decision threshold T]
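With equal priors, the common factors P(FG) = P(BG) and p(I(u_i, v_j)) cancel, so the comparison reduces to the two class-conditional likelihoods. The sketch below illustrates that rule; the model parameters and the function name `classify` are assumptions chosen for illustration. When both classes share the same σ, the implied threshold T is the midpoint of the two means (125 here).

```python
import math

def gaussian_pdf(x, mu, sigma):
    """Density of N(mu, sigma^2) evaluated at x."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (math.sqrt(2.0 * math.pi) * sigma)

def classify(intensity, bg, fg):
    """Label a pixel 'FG' or 'BG'; bg and fg are (mu, sigma) pairs.

    Assumes equal priors, so only the likelihoods are compared.
    """
    p_bg = gaussian_pdf(intensity, *bg)
    p_fg = gaussian_pdf(intensity, *fg)
    return "FG" if p_fg > p_bg else "BG"

# Hypothetical model parameters (illustrative values only).
bg_model = (50.0, 10.0)
fg_model = (200.0, 10.0)

labels = [classify(v, bg_model, fg_model) for v in (40, 120, 130, 210)]
```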


Multivariate Normal Density (cont.)

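Loci of points of constant density are hyper-ellipsoids on which the following quadratic form is constant:

$$
(\mathbf{x}-\boldsymbol{\mu})^{t}\,\boldsymbol{\Sigma}^{-1}\,(\mathbf{x}-\boldsymbol{\mu})
$$

Distance (squared) from x to μ:

$$
r^{2} = (\mathbf{x}-\boldsymbol{\mu})^{t}\,\boldsymbol{\Sigma}^{-1}\,(\mathbf{x}-\boldsymbol{\mu})
$$

Volume of the hyperellipsoid:

$$
V = V_d\,|\boldsymbol{\Sigma}|^{1/2}\,r^{d},
\qquad
V_d =
\begin{cases}
\dfrac{\pi^{d/2}}{(d/2)!} & d \text{ even} \\[2ex]
\dfrac{2^{d}\,\pi^{(d-1)/2}\,\left(\frac{d-1}{2}\right)!}{d!} & d \text{ odd}
\end{cases}
$$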
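As a quick numerical check of the volume formula, the sketch below evaluates V_d and V for small d. The function names are hypothetical; the determinant |Σ| is passed in directly to keep the example dependency-free.

```python
import math

def unit_hypersphere_volume(d):
    """V_d: volume of the unit hypersphere in d dimensions."""
    if d % 2 == 0:
        return math.pi ** (d // 2) / math.factorial(d // 2)
    k = (d - 1) // 2
    return 2 ** d * math.pi ** k * math.factorial(k) / math.factorial(d)

def hyperellipsoid_volume(det_sigma, r, d):
    """V = V_d * |Sigma|^(1/2) * r^d for the ellipsoid at squared distance r^2."""
    return unit_hypersphere_volume(d) * math.sqrt(det_sigma) * r ** d

# Sanity checks against familiar cases: segment length, disc area, ball volume.
v1 = unit_hypersphere_volume(1)
v2 = unit_hypersphere_volume(2)
v3 = unit_hypersphere_volume(3)

# Sigma = diag(4, 1) has determinant 4; with r = 1 the ellipse has semi-axes 2 and 1.
ellipse_area = hyperellipsoid_volume(4.0, 1.0, 2)
```

The three unit volumes reduce to 2, π, and 4π/3, and the ellipse area to 2π, matching the elementary formulas.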


The Real Situations

Instead of modeling the entire background with one distribution, the values of each background pixel over time can be modeled by a single Gaussian or a mixture of Gaussians.

Because objects move, a per-pixel foreground model is usually not available.


The Real Situations (cont'd)

Given a controlled sequence with only background values, we can train a Gaussian for each pixel location.

Without knowledge of the priors and the foreground conditional probability, we can threshold on the Z-value to perform background subtraction.

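Color image:

$$
p(\mathbf{I}(u_i,v_j)\mid BG) = \frac{1}{(2\pi)^{3/2}\,|\boldsymbol{\Sigma}_{ij}|^{1/2}}
\exp\!\left[-\frac{1}{2}\,(\mathbf{I}(u_i,v_j)-\boldsymbol{\mu}_{ij})^{T}\,\boldsymbol{\Sigma}_{ij}^{-1}\,(\mathbf{I}(u_i,v_j)-\boldsymbol{\mu}_{ij})\right]
$$

Grayscale image:

$$
p(I(u_i,v_j)\mid BG) = \frac{1}{\sqrt{2\pi}\,\sigma_{ij}}
\exp\!\left[-\frac{1}{2}\left(\frac{I(u_i,v_j)-\mu_{ij}}{\sigma_{ij}}\right)^{2}\right]
$$

Z-value:

$$
z = \frac{I(u_i,v_j)-\mu_{ij}}{\sigma_{ij}}
$$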
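The grayscale case can be sketched with NumPy as follows: fit μ_ij and σ_ij per pixel from background-only frames, then flag pixels whose Z-value is large. Thresholding on |z|, the cutoff `z_thresh=3.0`, the floor `min_sigma`, and the synthetic frames are all assumptions made for illustration; the slides do not specify them.

```python
import numpy as np

def train_background(frames):
    """Fit a per-pixel Gaussian from background-only frames of shape (T, H, W)."""
    frames = np.asarray(frames, dtype=np.float64)
    mu = frames.mean(axis=0)      # per-pixel mean, shape (H, W)
    sigma = frames.std(axis=0)    # per-pixel standard deviation, shape (H, W)
    return mu, sigma

def subtract_background(image, mu, sigma, z_thresh=3.0, min_sigma=1.0):
    """Return a boolean mask that is True where the pixel is labeled foreground."""
    sigma = np.maximum(sigma, min_sigma)  # floor avoids division by near-zero sigma
    z = (np.asarray(image, dtype=np.float64) - mu) / sigma
    return np.abs(z) > z_thresh

# Synthetic background-only sequence: 50 frames of 4x4 pixels around intensity 100.
rng = np.random.default_rng(0)
frames = 100.0 + 2.0 * rng.standard_normal((50, 4, 4))
mu, sigma = train_background(frames)

# Test image: the background mean with one bright "object" pixel.
test_image = mu.copy()
test_image[1, 2] = 200.0
mask = subtract_background(test_image, mu, sigma)
```

Only the altered pixel exceeds the threshold here, so `mask` is True at `(1, 2)` and False elsewhere.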


The Real Situations (cont'd)

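In the context of color images, the Mahalanobis distance is defined as:

$$
D_M(\mathbf{I}(u_i,v_j)) = (\mathbf{I}(u_i,v_j)-\boldsymbol{\mu}_{ij})^{T}\,\boldsymbol{\Sigma}_{ij}^{-1}\,(\mathbf{I}(u_i,v_j)-\boldsymbol{\mu}_{ij})
$$

The Mahalanobis distance indicates how probable it is that the test pixel value belongs to the background model: the smaller the distance, the higher the likelihood under the background Gaussian.

Ex: illustration of BG subtraction in the grayscale case

[Figure: BG and FG intensity distributions separated by threshold T; a test pixel value I(u_i, v_j) is to be classified as BG or FG]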
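For color images, the same idea can be sketched by estimating a mean vector and a 3x3 covariance per pixel and evaluating the quadratic form D_M defined above. The function names, the small ridge added to each covariance, the threshold value, and the synthetic data are assumptions for illustration only.

```python
import numpy as np

def train_color_background(frames):
    """Fit per-pixel mean and inverse covariance from frames of shape (T, H, W, 3)."""
    frames = np.asarray(frames, dtype=np.float64)
    mu = frames.mean(axis=0)                      # (H, W, 3)
    diff = frames - mu                            # (T, H, W, 3)
    # Per-pixel 3x3 covariance: average of outer products over time.
    cov = np.einsum("thwi,thwj->hwij", diff, diff) / frames.shape[0]
    cov += 1e-3 * np.eye(3)                       # assumed ridge keeps matrices invertible
    return mu, np.linalg.inv(cov)                 # inverse has shape (H, W, 3, 3)

def mahalanobis_map(image, mu, cov_inv):
    """Evaluate (I - mu)^T Sigma^{-1} (I - mu) at every pixel."""
    diff = np.asarray(image, dtype=np.float64) - mu
    return np.einsum("hwi,hwij,hwj->hw", diff, cov_inv, diff)

# Synthetic background-only color sequence: 60 frames of 2x2 pixels.
rng = np.random.default_rng(0)
frames = 100.0 + 2.0 * rng.standard_normal((60, 2, 2, 3))
mu, cov_inv = train_color_background(frames)

# Test image: the background mean with one pixel shifted in all three channels.
test_image = mu.copy()
test_image[0, 0] += 50.0
distances = mahalanobis_map(test_image, mu, cov_inv)

threshold = 16.0                                  # hypothetical cutoff on D_M
foreground = distances > threshold
```

Pixels that match the background mean have a distance of zero, while the shifted pixel lands far above the cutoff.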